Build a Private Agent Network for Your Company

Your company is deploying AI agents. They process customer data, access internal APIs, and coordinate decisions across departments. The question every CISO asks: where does the data go?

With cloud-hosted agent platforms, the answer is uncomfortable. Agent communication routes through third-party infrastructure -- vendor servers, managed message brokers, cloud relay services. Even with TLS, the relay operator can see metadata: which agents are talking, how often, and how much data is flowing. For regulated industries -- healthcare, finance, defense, legal -- this is a compliance problem. For any company that takes data sovereignty seriously, it is a strategic risk.

Pilot Protocol solves this by running entirely on your infrastructure. The rendezvous server, the beacon, and every agent daemon run on machines you control. No data leaves your network. No third party sees your traffic. This post walks through a complete enterprise deployment, from architecture to production hardening.

Why Your Agent Network Should Be Private

There are three reasons companies deploy private agent networks instead of using public infrastructure.

Data Sovereignty

When agents exchange data through a centralized broker or cloud relay, that data transits infrastructure you do not own. Even if the data is encrypted in transit, the routing metadata -- who talks to whom, when, and how often -- is visible to the infrastructure operator. Under GDPR, this metadata can constitute personal data processing. Under HIPAA, agent communication carrying PHI must be on covered infrastructure with a BAA in place.

A private Pilot Protocol network eliminates this concern. The rendezvous server runs on your hardware. The beacon runs on your hardware. Encrypted tunnels are established directly between your agents. No intermediate system -- not even the rendezvous server -- sees the payload data.

Zero Cloud Dependency

Cloud-hosted agent platforms create vendor lock-in. If the platform has an outage, your agents stop communicating. If the vendor changes pricing, you pay or migrate. If the vendor shuts down, you start from scratch. For a detailed analysis of these risks, see Run an Agent Network Without Cloud Dependency.

A self-hosted Pilot network has no external dependencies. Each component -- the rendezvous server (registry and beacon), the daemon, and the CLI -- is a standalone Go binary. No package managers, no runtime dependencies, no license servers, no phone-home telemetry.

Compliance and Audit

When auditors ask "how do your agents communicate and who can access the communication channel," a private deployment gives a simple answer: all communication occurs over encrypted P2P tunnels within your network, managed by infrastructure you control, logged by systems you own. There is no third-party data processor to assess, no subprocessor chain to document, no cross-border data transfer to justify.

Architecture Overview

A private Pilot Protocol deployment consists of three components:

- Rendezvous server -- runs the registry (port 9000) and beacon (port 9001) on a machine with a static internal IP. This is the only component that must be reachable by all agents.

- Agent daemon -- runs on each machine that hosts an agent. Handles tunnel management, encryption, NAT traversal, and connection multiplexing.

- CLI (pilotctl) -- the management tool. Used to create networks, join agents, configure trust, and monitor the deployment.

Here is how these components connect in a typical enterprise deployment:

# Private network architecture
#
#        +---------------------+
#        |  Rendezvous Server  |
#        |  (registry :9000)   |
#        |  (beacon :9001)     |
#        |  10.0.1.10          |
#        +----------+----------+
#                   |
#          +--------+--------+
#          |        |        |
#       +--+--+  +--+--+  +--+--+
#       |Agent|  |Agent|  |Agent|
#       |  A  |  |  B  |  |  C  |
#       |10.0 |  |10.0 |  |10.0 |
#       |.1.20|  |.1.30|  |.2.40|
#       +-----+  +-----+  +-----+
#
# Agents connect to registry over TCP :9000
# Agents tunnel to each other over UDP
# Beacon :9001 coordinates hole-punch if subnets differ

The rendezvous server is lightweight -- it stores agent registrations in memory and persists them to a JSON file on disk. It does not process or inspect agent traffic. Once two agents have resolved each other's endpoints through the registry, all subsequent data flows directly between them over encrypted UDP tunnels.

Step 1: Deploy the Rendezvous Server

The rendezvous server runs the registry and beacon as a single process. Choose a machine with a static IP that all agents can reach. This can be a dedicated VM, a bare-metal server, or even a container -- the process uses minimal resources (under 20 MB RSS).

# Download the latest release

wget https://github.com/TeoSlayer/pilotprotocol/releases/latest/download/pilot-rendezvous-linux-amd64

chmod +x pilot-rendezvous-linux-amd64

# Start the rendezvous server

# Registry listens on :9000, beacon on :9001

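./pilot-rendezvous-linux-amd64 -registry-addr :9000 -beacon-addr :9001

For production, install the binary at /usr/local/bin/pilot-rendezvous (the path the unit below expects) and run the rendezvous server as a systemd service under a dedicated pilot user: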

# /etc/systemd/system/pilot-rendezvous.service

[Unit]

Description=Pilot Protocol Rendezvous Server

After=network.target

[Service]

Type=simple

ExecStart=/usr/local/bin/pilot-rendezvous -registry-addr :9000 -beacon-addr :9001

Restart=always

RestartSec=5

User=pilot

Group=pilot

[Install]

WantedBy=multi-user.target

sudo systemctl enable pilot-rendezvous

sudo systemctl start pilot-rendezvous

The registry persists state to /var/lib/pilot/registry.json by default. On restart, it reloads all agent registrations from this file. Configure your backup system to include this path.
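If your backup system works from a staging directory, a small snapshot helper can feed it. The following is a minimal sketch, not part of Pilot: the function name `backup_registry`, the `registry-<timestamp>.json` naming, and the default retention of 14 snapshots are all assumptions chosen for illustration. It relies on GNU coreutils (`head -n -N`).

```shell
#!/usr/bin/env bash
# Hypothetical helper: snapshot the registry state file with a UTC timestamp.
# Usage: backup_registry <state-file> <backup-dir> [keep-count]
backup_registry() {
  local src="$1" dest_dir="$2" keep="${3:-14}"
  [ -r "$src" ] || { echo "cannot read $src" >&2; return 1; }
  mkdir -p "$dest_dir" || return 1

  local stamp dest old
  stamp="$(date -u +%Y%m%dT%H%M%SZ)"
  dest="$dest_dir/registry-$stamp.json"

  # Copy under a temporary name first so a partial copy never
  # looks like a complete snapshot.
  cp -p "$src" "$dest.tmp" && mv "$dest.tmp" "$dest" || return 1

  # Timestamped names sort chronologically; delete all but the newest $keep.
  ls -1 "$dest_dir"/registry-*.json | sort | head -n -"$keep" |
    while IFS= read -r old; do rm -f -- "$old"; done

  echo "$dest"
}
```

A cron entry or systemd timer could call `backup_registry /var/lib/pilot/registry.json /var/backups/pilot` (the backup directory is an example path) ahead of the regular backup run.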

Firewall rules: The rendezvous server needs TCP port 9000 (registry) and UDP port 9001 (beacon) open to all agent machines. No other inbound ports are required. The server does not need internet access -- it runs entirely within your network.
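Before starting any daemons, it can help to confirm from each agent subnet that the registry port is actually reachable. The sketch below uses bash's built-in `/dev/tcp` redirection and the coreutils `timeout` command; the function name `tcp_reachable` is an assumption for illustration. It only covers TCP, so the UDP beacon port on 9001 is not verified by this check.

```shell
#!/usr/bin/env bash
# Hypothetical helper: return 0 if a TCP connection to host:port
# succeeds within the timeout (default 3 seconds), non-zero otherwise.
tcp_reachable() {
  local host="$1" port="$2" timeout_s="${3:-3}"
  # The child bash receives host as $0 and port as $1.
  timeout "$timeout_s" bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$host" "$port" 2>/dev/null
}
```

Run on an agent machine, `tcp_reachable 10.0.1.10 9000 && echo "registry reachable"` gives a quick yes/no answer before you debug anything at the Pilot layer.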

Step 2: Start Agent Daemons

On each machine that will run an agent, deploy the Pilot daemon. The daemon handles tunnel management, encryption, and communication with the rendezvous server.

# Download the daemon and CLI

wget https://github.com/TeoSlayer/pilotprotocol/releases/latest/download/pilot-daemon-linux-amd64

wget https://github.com/TeoSlayer/pilotprotocol/releases/latest/download/pilotctl-linux-amd64

chmod +x pilot-daemon-linux-amd64 pilotctl-linux-amd64

# Start the daemon, pointing to your private rendezvous server

./pilot-daemon-linux-amd64 -registry 10.0.1.10:9000 -beacon 10.0.1.10:9001

The daemon generates a cryptographic identity (Ed25519 keypair) on first run and stores it at ~/.pilot/identity.json. This identity is permanent -- it persists across restarts and is used for all trust operations. Back up this file; losing it means the agent gets a new identity and must re-establish all trust relationships.
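Because the identity file contains a private key, a backup copy should be at least as restricted as the original. The following sketch copies the file with owner-only permissions and records a SHA-256 checksum so the backup can be verified later. The function names are hypothetical, and `sha256sum` is assumed to be available (GNU coreutils).

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy an identity file with owner-only permissions
# and write a SHA-256 checksum file next to the copy.
backup_identity() {
  local src="$1" dest="$2"
  [ -r "$src" ] || { echo "cannot read $src" >&2; return 1; }
  (
    umask 077   # the copy and checksum are never readable by other users
    cp "$src" "$dest" && chmod 600 "$dest" &&
      cd "$(dirname "$dest")" &&
      sha256sum "$(basename "$dest")" > "$(basename "$dest").sha256"
  )
}

# Return 0 only if the backup still matches its recorded checksum.
verify_identity_backup() {
  local dest="$1"
  (cd "$(dirname "$dest")" && sha256sum -c "$(basename "$dest").sha256" >/dev/null)
}
```

For example, `backup_identity ~/.pilot/identity.json /secure/backups/agent-a-identity.json` (the destination is an example path), followed by `verify_identity_backup` on the same destination during restore drills.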

For machines on the same subnet as the rendezvous server, the daemon will register its local IP as the endpoint. For machines on different subnets, the daemon uses STUN discovery through the beacon to determine its reachable endpoint. The beacon coordinates hole-punching for cross-subnet communication automatically.
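As with the rendezvous server, the daemon is a natural fit for systemd in production. The sketch below prints a unit file modeled on the rendezvous unit shown earlier. The function name, the default binary path /usr/local/bin/pilot-daemon, and the suggested unit name are assumptions; only the `-registry` and `-beacon` flags come from the commands above. Since the identity lives under the service user's home directory, the pilot user is assumed to have one.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a systemd unit for the agent daemon.
# Usage: render_daemon_unit <registry host:port> <beacon host:port> [binary path]
render_daemon_unit() {
  local registry="$1" beacon="$2" bin="${3:-/usr/local/bin/pilot-daemon}"
  cat <<EOF
[Unit]
Description=Pilot Protocol Agent Daemon
After=network-online.target
Wants=network-online.target

[Service]
Type=simple
ExecStart=$bin -registry $registry -beacon $beacon
Restart=always
RestartSec=5
User=pilot
Group=pilot

[Install]
WantedBy=multi-user.target
EOF
}
```

One way to use it: `render_daemon_unit 10.0.1.10:9000 10.0.1.10:9001 | sudo tee /etc/systemd/system/pilot-daemon.service`, then enable and start the service with `systemctl` as for the rendezvous server.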

Step 3: Create a Private Network

Pilot Protocol supports multiple isolated networks on the same infrastructure. Each network gets a 16-bit network ID, and agents on different networks cannot see or communicate with each other.

# Create a private network for your organization

pilotctl create-network "corp-net"

# Output:

# Network created: corp-net (ID: 1)

# Network address prefix: 1:XXXX.XXXX.XXXX

The network name is a human-readable label. Internally, the registry assigns a 16-bit network ID. All agents that join this network receive addresses with this network prefix, isolating them from agents on other networks (including the default backbone network 0).
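Scripts that process Pilot addresses (for inventory or log analysis, say) often need the network ID on its own. The sketch below extracts it from an address string. It assumes the textual format suggested by the output above: a decimal network ID, a colon, then three dot-separated groups of four hexadecimal digits. That format is inferred from the examples in this post, not taken from a specification, and the function names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the network ID of an address such as
# 1:0001.0000.0001. Returns non-zero if the text does not match the
# assumed format or the ID does not fit in 16 bits.
pilot_network_id() {
  local addr="$1"
  local re='^([0-9]{1,5}):[0-9A-Fa-f]{4}\.[0-9A-Fa-f]{4}\.[0-9A-Fa-f]{4}$'
  [[ "$addr" =~ $re ]] || return 1
  local net="${BASH_REMATCH[1]}"
  # 10# forces base 10 so leading zeros are not read as octal.
  (( 10#$net <= 65535 )) || return 1
  echo "$((10#$net))"
}

# Return 0 if both addresses carry the same network ID.
same_network() {
  local a b
  a="$(pilot_network_id "$1")" || return 1
  b="$(pilot_network_id "$2")" || return 1
  [ "$a" = "$b" ]
}
```

With this, `same_network 1:0001.0000.0001 1:0001.0000.0002` succeeds, while comparing an address on network 1 with one on the backbone network 0 fails.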

Step 4: Join Agents and Set Visibility

With the network created, join each agent and configure its visibility.

# On Agent A: join the corp-net network

pilotctl network join "corp-net"

# Register a human-readable hostname

pilotctl set-hostname "data-processor"

# Set visibility to private (this is the default)

pilotctl set-visibility private

# Verify registration

pilotctl status

# Address: 1:0001.0000.0001

# Hostname: data-processor

# Network: corp-net (1)

# Visibility: private

# Trusted peers: 0

Repeat this on each agent machine, choosing a descriptive hostname for each:

# On Agent B

pilotctl network join "corp-net"

pilotctl set-hostname "model-runner"

# On Agent C

pilotctl network join "corp-net"

pilotctl set-hostname "orchestrator"

With visibility set to private, these agents are registered with the rendezvous server but invisible to each other. No agent can resolve another agent's endpoint until a trust relationship is established. This is the foundation of Pilot's invisible-by-default trust model.

Step 5: Establish Trust Between Agents

Private agents must explicitly trust each other before they can communicate. This is done through a cryptographic handshake signed with each agent's Ed25519 keypair.

# On the orchestrator: initiate trust with data-processor

pilotctl handshake data-processor "Orchestrator needs to submit tasks"

# On data-processor: approve the handshake

pilotctl approve orchestrator

# Repeat for each required trust relationship

pilotctl handshake model-runner "Orchestrator needs to delegate inference"

# On model-runner: approve

pilotctl approve orchestrator

After mutual approval, both agents can resolve each other's endpoints and establish encrypted tunnels. The trust state is persisted to disk and survives daemon restarts.
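Each trust relationship takes two commands on two different machines, which gets easy to lose track of once there are more than a few pairs. One way to keep it auditable is to write the intended relationships down as a list and generate the commands from it. The sketch below only prints commands and does not run `pilotctl`. It uses the handshake and approve syntax shown above; the function name and the input format (initiator, target, then a free-text reason on each line) are assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read "initiator target reason..." lines from stdin
# and print the command to run on each machine.
trust_plan() {
  local initiator target reason
  while read -r initiator target reason; do
    # Skip blank lines and comment lines.
    [ -z "$initiator" ] && continue
    case "$initiator" in \#*) continue ;; esac
    printf '[on %s] pilotctl handshake %s "%s"\n' "$initiator" "$target" "$reason"
    printf '[on %s] pilotctl approve %s\n' "$target" "$initiator"
  done
}
```

Feeding it the two relationships from this step (for example through a here-document with the lines `orchestrator data-processor Orchestrator needs to submit tasks` and `orchestrator model-runner Orchestrator needs to delegate inference`) prints four commands, each prefixed with the machine it belongs on. The input file can also be checked into version control as a record of intended trust.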

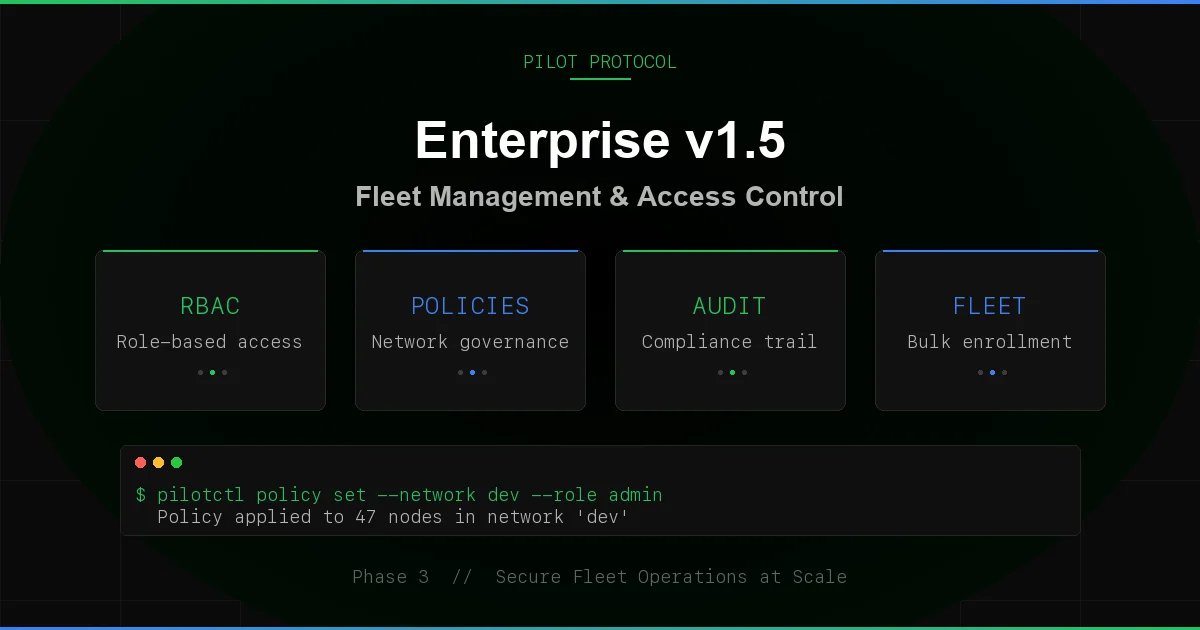

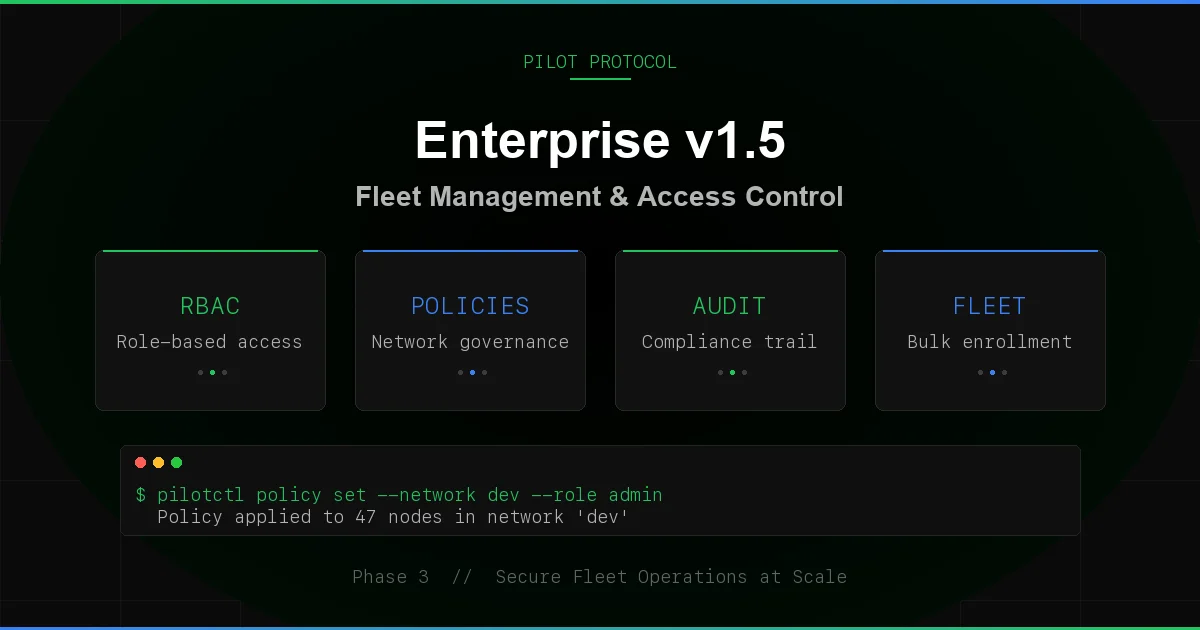

Enterprise Trust Policies

For larger deployments, managing individual handshakes becomes impractical. Pilot supports trust policies that automate approval based on rules:

# Auto-approve all agents on the same network

pilotctl trust-policy auto-approve --same-network

# Auto-approve agents with a specific justification pattern

pilotctl trust-policy auto-approve --justification "department:engineering"

Trust policies let you balance security with operational convenience. In a department-level deployment, you might auto-approve within the department and require manual approval for cross-department trust. For a deeper exploration of trust architecture, see Secure Agent Communication with Zero Trust.

Step 6: Bridge to Legacy Systems

Not every system in your organization will run a Pilot agent. Legacy services -- REST APIs, databases, monitoring systems -- still use traditional TCP/IP. The Pilot gateway bridges the two worlds.

The gateway assigns a loopback IP alias for each Pilot address and listens on standard ports, proxying traffic between local TCP clients and Pilot tunnels.

# Start the gateway on the orchestrator machine

pilotctl gateway start

# The gateway maps Pilot addresses to local IPs:

# 1:0001.0000.0001 (data-processor) → 127.0.0.2

# 1:0001.0000.0002 (model-runner) → 127.0.0.3

# Now legacy tools can reach Pilot agents via local IPs

curl http://127.0.0.2:80/api/status

# Routes through Pilot tunnel to data-processor, port 80

This means existing monitoring tools (Prometheus, Grafana), CI/CD pipelines, and management scripts can interact with Pilot agents without any modification. The gateway handles the translation transparently.

Port mapping: The gateway listens on the same port numbers that the remote Pilot agent exposes. If data-processor runs an HTTP service on port 80, the gateway listens on 127.0.0.2:80. On Linux, ports below 1024 require root. The gateway automatically adds loopback aliases using ip addr add on Linux or ifconfig lo0 alias on macOS.

Security Hardening

A private deployment provides network-level isolation, but production hardening requires additional measures.

TLS for Registry Connections

Agent-to-registry communication uses TCP. In a private network, this traffic stays within your infrastructure, but you should still encrypt it. Pilot supports TLS with certificate pinning for registry connections:

# Generate a TLS certificate for the rendezvous server

openssl req -x509 -newkey ec -pkeyopt ec_paramgen_curve:prime256v1 \
  -keyout registry-key.pem -out registry-cert.pem -days 365 -nodes \
  -subj "/CN=pilot-rendezvous"

# Start rendezvous with TLS

./pilot-rendezvous -registry-addr :9000 -beacon-addr :9001 \
  -tls-cert registry-cert.pem -tls-key registry-key.pem

# On agents: pin the registry certificate

./pilot-daemon -registry 10.0.1.10:9000 -beacon 10.0.1.10:9001 \
  -registry-cert registry-cert.pem

Certificate pinning means agents will refuse to connect to a registry that presents a different certificate. This prevents man-in-the-middle attacks even if an attacker compromises your DNS or ARP tables.
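Pinning has an operational consequence: the certificate generated above expires after 365 days, and every agent must hold the same file. The sketch below uses standard `openssl x509` options to print a certificate's SHA-256 fingerprint (useful for confirming that agents were given the right file) and to check whether it expires soon. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the SHA-256 fingerprint of a PEM certificate.
cert_fingerprint() {
  openssl x509 -in "$1" -noout -fingerprint -sha256 | cut -d= -f2
}

# Return 0 if the certificate expires within <days>, 1 if it does not,
# and 2 if the file cannot be read.
cert_expires_within() {
  local cert="$1" days="$2"
  [ -r "$cert" ] || return 2
  # -checkend exits 0 when the certificate is still valid after N seconds.
  if openssl x509 -in "$cert" -noout -checkend "$((days * 86400))" >/dev/null; then
    return 1
  fi
  return 0
}
```

A monitoring job could run `cert_expires_within registry-cert.pem 30` and raise an alert on exit status 0, leaving time to distribute a new certificate to every agent before the old one lapses.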

IPC Socket Permissions

The daemon exposes a Unix domain socket at /tmp/pilot.sock for CLI communication. In a multi-user environment, restrict access to this socket:

# Run the daemon as a dedicated user

sudo useradd -r -s /usr/sbin/nologin pilot

sudo -u pilot ./pilot-daemon -registry 10.0.1.10:9000 -beacon 10.0.1.10:9001

# Once the daemon is running and has created the socket,
# set socket permissions to owner-only

sudo -u pilot chmod 600 /tmp/pilot.sock

Per-Port Accept Rules

By default, a Pilot agent accepts connections on all ports it has listeners for. In a hardened deployment, you can restrict which ports accept connections from which peers:

# Only allow the orchestrator to submit tasks on port 1003

pilotctl port-policy 1003 --allow orchestrator

# Allow any trusted peer to connect to the echo port

pilotctl port-policy 7 --allow-trusted

# Block all connections on port 1000 (stdio)

pilotctl port-policy 1000 --deny-all

Per-port accept rules provide defense in depth. Even if an attacker compromises one agent and establishes trust, they can only access the specific ports that the target agent permits.

Structured Logging and Audit Trail

The daemon uses Go's slog package for structured JSON logging. Every connection, trust change, and error is logged with machine-parseable fields:

# Sample log output (JSON lines)

{"time":"2026-02-11T14:23:01Z","level":"INFO","msg":"tunnel established","peer":"1:0001.0000.0002","nat":"direct"}

{"time":"2026-02-11T14:23:01Z","level":"INFO","msg":"handshake approved","peer":"orchestrator","addr":"1:0001.0000.0003"}

{"time":"2026-02-11T14:23:05Z","level":"INFO","msg":"connection opened","src":"1:0001.0000.0003","dst":"1:0001.0000.0001","port":1001}

Ship these logs to your SIEM (Splunk, Elastic, Datadog) for audit trail compliance. The structured format makes it straightforward to create alerts on trust changes, failed connections, and unusual traffic patterns.
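Before the SIEM pipeline is in place, the same JSON lines can be filtered locally. The sketch below assumes `jq` 1.6 or later is installed and that each log line is a JSON object with a `msg` field, as in the sample output above. The function name `filter_events` is hypothetical, and the message strings you pass should be ones your daemon actually emits.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print log events whose "msg" equals one of the
# given strings. Reads JSON lines on stdin; lines that are not JSON
# objects are skipped rather than aborting the run.
filter_events() {
  jq -Rc 'fromjson? | objects
          | select(.msg as $m | any($ARGS.positional[]; . == $m))' --args "$@"
}
```

For example, piping the daemon log through `filter_events "handshake approved"` prints only trust approvals, which makes it easy to spot-check the events you plan to alert on.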

High Availability

The rendezvous server is a single point of coordination, but it is not a single point of failure for data: agent-to-agent tunnels keep working if the registry goes down temporarily. For production deployments that require HA, Pilot supports hot-standby replication.

# Primary rendezvous server

./pilot-rendezvous -registry-addr :9000 -beacon-addr :9001 \
  -replicate-to standby.internal:9000

# Standby rendezvous server

./pilot-rendezvous -registry-addr :9000 -beacon-addr :9001 \
  -standby -primary primary.internal:9000

The primary pushes registration snapshots to the standby every 15 seconds via heartbeat. If the primary fails, a manual failover promotes the standby. Existing agent tunnels continue functioning during the failover window -- only new registrations and resolves are affected.

Production Deployment Checklist

Before going live, verify each item:

- Rendezvous server: Running as systemd service, TLS enabled, persistence directory backed up

- Firewall rules: TCP 9000 and UDP 9001 open from all agent subnets to rendezvous server

- Agent daemons: Running as systemd services, identity files backed up, pointing to private registry

- Network isolation: Agents joined to private network, visibility set to private

- Trust policies: Handshakes completed or auto-approve rules configured

- IPC security: Daemon running as dedicated user, socket permissions restricted

- Per-port rules: Sensitive ports restricted to specific peers

- Logging: Structured logs shipping to SIEM, alerts configured for trust changes

- HA: Standby rendezvous server configured and tested

- Gateway: Configured for any legacy system integration needed

What You Get

A private Pilot Protocol deployment gives your organization:

- Complete data sovereignty -- no traffic leaves your infrastructure, no third party processes your data

- End-to-end encryption -- X25519 + AES-256-GCM between every pair of agents, with the rendezvous server never seeing payload data

- Cryptographic identity -- every agent has an Ed25519 keypair, trust is explicit and auditable

- Zero cloud dependency -- the entire stack runs on your machines with zero external calls

- Minimal footprint -- the rendezvous server uses under 20 MB RSS, each daemon under 10 MB

- Legacy compatibility -- the gateway bridges Pilot agents to existing HTTP/TCP systems without modification

Your agents communicate freely within your network. Nothing leaves your control. And when the deployment grows from 3 agents to 300, the only thing that changes is the number of daemons you run.

Deploy Your Private Network

Download the binaries, start the rendezvous server, and have your first private agent network running in under 10 minutes.
