Decentralized communication protocols for AI developers

Nearly one-third of peer-to-peer connection attempts still fail to establish a direct connection in production deployments because of NAT traversal edge cases, despite a decade of protocol innovation. That single figure should inform many of the architectural decisions you make when building distributed AI systems. DCUtR hole punching succeeds roughly 70% of the time, so roughly 30% of your agents may silently fall back to relays or fail to connect at all. This guide walks through the core protocols, peer discovery strategies, NAT traversal techniques, and encryption options that matter most for AI agent networks today, so you can make informed choices before you write a single line of code.

Table of Contents

- Core concepts: What makes a protocol decentralized?

- Peer discovery and routing: Kademlia, DHTs, and edge cases

- NAT traversal: The persistent hurdle in decentralized networking

- Secure messaging: End-to-end encryption for distributed agents

- Protocol selection: Real-world scenarios for distributed AI systems

- Pilot Protocol: Decentralized communication for autonomous agents

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| NAT traversal remains critical | Despite protocol innovation, NAT traversal failures impact up to 30% of agent connections in decentralized networks. |
| Peer discovery shapes scalability | Kademlia DHT and similar schemes offer efficient peer routing but require robust Sybil protection to scale securely. |
| Choose E2EE wisely | Select group or pairwise encryption protocols based on agent count and trust model for optimal security. |
| Modularity enables flexibility | Modular stacks like libp2p empower developers to tailor communication solutions for distributed AI deployments. |
| Pilot Protocol offers practical resources | Pilot Protocol bridges the gap between theory and implementation for decentralized agent networking. |

Core concepts: What makes a protocol decentralized?

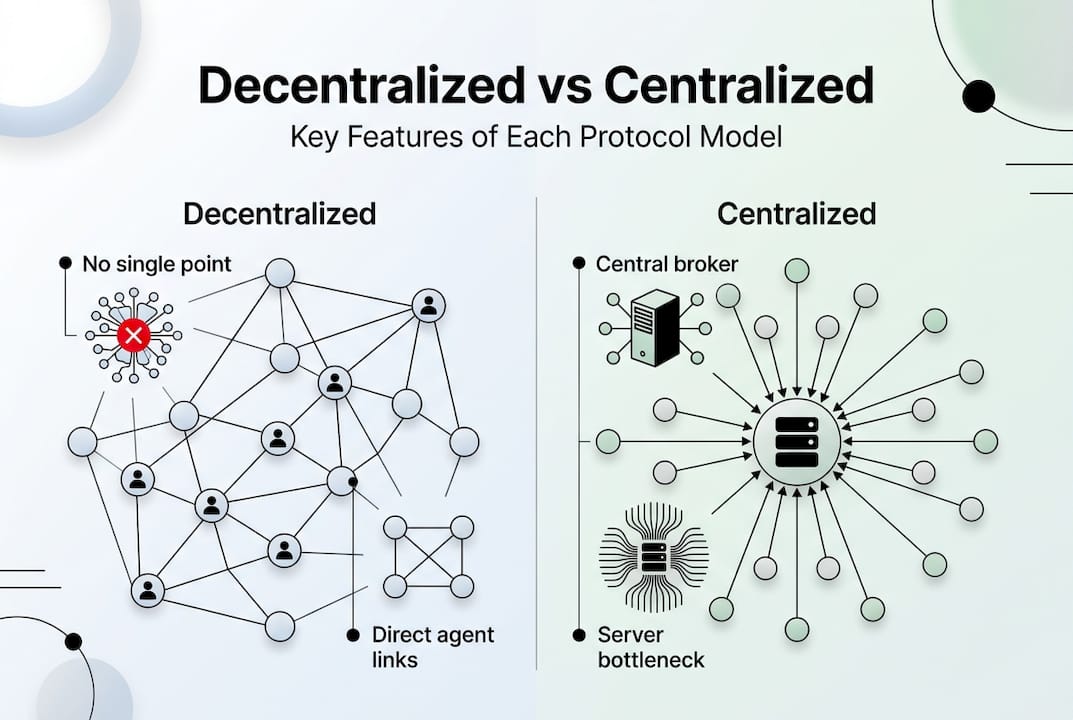

With that challenge in mind, it helps to start with the fundamental elements that set truly decentralized protocols apart from centralized or hybrid alternatives.

A decentralized communication protocol removes the need for a central authority to route, store, or validate messages. Every node participates equally in the network. In practice, this means:

- Peer identity: Each node has a cryptographic identity, not a server-assigned address.

- Transport agnosticism: The protocol works over TCP, QUIC, WebSockets, or any available transport.

- Self-describing addresses: Nodes advertise their own reachability without a registry.

- Pubsub messaging: Nodes subscribe to topics and receive messages from any publisher on the network.

Libp2p is a modular P2P networking stack underpinning projects like IPFS, and it is the clearest example of these principles in action. You can swap transports, peer discovery mechanisms, and security layers independently. That modularity matters enormously for AI agent networks, where requirements shift as your fleet scales.
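To make "self-describing addresses" concrete: libp2p nodes advertise multiaddrs such as `/ip4/198.51.100.7/udp/4001/quic-v1/p2p/<peer-id>`, where each protocol name is followed by its value, if it takes one. The Go sketch below is a simplified text parser written for illustration, not the go-multiaddr library; the `valueless` protocol set is a hypothetical, incomplete subset, and real implementations also validate every value and support a binary encoding.

```go
package main

import (
	"fmt"
	"strings"
)

// Component is one protocol/value pair in a multiaddr-style address.
type Component struct {
	Protocol string
	Value    string // empty for protocols that carry no value
}

// valueless is a hypothetical, deliberately incomplete list of protocols that
// appear without a value; real implementations consult a full protocol table.
var valueless = map[string]bool{
	"quic": true, "quic-v1": true, "ws": true, "wss": true, "p2p-circuit": true,
}

// parseAddr splits a textual address such as
// /ip4/198.51.100.7/udp/4001/quic-v1/p2p/<peer-id> into components.
func parseAddr(s string) ([]Component, error) {
	if !strings.HasPrefix(s, "/") {
		return nil, fmt.Errorf("address must start with '/': %q", s)
	}
	parts := strings.Split(s[1:], "/")
	var out []Component
	for i := 0; i < len(parts); i++ {
		proto := parts[i]
		switch {
		case proto == "":
			return nil, fmt.Errorf("empty protocol name in %q", s)
		case valueless[proto]:
			out = append(out, Component{Protocol: proto})
		case i+1 < len(parts) && parts[i+1] != "":
			out = append(out, Component{Protocol: proto, Value: parts[i+1]})
			i++ // skip the value we just consumed
		default:
			return nil, fmt.Errorf("protocol %q is missing its value", proto)
		}
	}
	return out, nil
}

func main() {
	// 12D3KooWExample is a placeholder, not a valid peer ID.
	comps, err := parseAddr("/ip4/198.51.100.7/udp/4001/quic-v1/p2p/12D3KooWExample")
	if err != nil {
		panic(err)
	}
	for _, c := range comps {
		fmt.Printf("%-8s %s\n", c.Protocol, c.Value)
	}
}
```

Because the address carries its own transport stack, a peer can decide how to dial it without asking any registry.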

Here is how decentralized and centralized architectures compare at a glance:

| Feature | Decentralized | Centralized/hybrid |
|---|---|---|
| Single point of failure | None | Yes (broker/server) |
| Latency | Variable, often lower | Predictable, often higher |
| Scalability | Horizontal, organic | Vertical, planned |
| Trust model | Cryptographic, peer-based | Server-issued credentials |
| NAT traversal | Required, complex | Handled by server |
| Pubsub support | Native (gossipsub) | Broker-dependent |

For p2p file transfer protocols and agent-to-agent data streaming, the decentralized model removes the broker bottleneck entirely. The Pilot Protocol architecture builds on these same principles, adding persistent virtual addresses and encrypted overlays on top.

Peer discovery and routing: Kademlia, DHTs, and edge cases

Once protocols are established, discovering and connecting peers becomes the next architectural challenge.

Kademlia is the distributed hash table (DHT) algorithm used by most production P2P networks. It assigns each node an ID in a shared key space and uses an XOR-based distance metric to route lookups. The result is O(log n) lookups: in a network of one million nodes, a lookup needs at most roughly 20 hops, because log2(1,000,000) is about 20. That efficiency is why Ethereum, IPFS, and BitTorrent all rely on Kademlia variants.
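A minimal sketch of the XOR metric in Go, assuming 256-bit IDs derived with SHA-256 (as libp2p's Kademlia does for keys; the original paper used 160-bit IDs). `commonPrefixLen` picks the k-bucket a peer belongs in, and `closest` is the sorting step a lookup repeats at every hop. The helper names are illustrative, not a library API.

```go
package main

import (
	"bytes"
	"crypto/sha256"
	"fmt"
	"math"
	"math/bits"
	"sort"
)

// NodeID is a 256-bit key, derived here by hashing a name with SHA-256.
type NodeID [32]byte

func idFor(name string) NodeID { return sha256.Sum256([]byte(name)) }

// xorDistance is Kademlia's metric: bitwise XOR, compared as a big-endian integer.
func xorDistance(a, b NodeID) NodeID {
	var d NodeID
	for i := range a {
		d[i] = a[i] ^ b[i]
	}
	return d
}

// commonPrefixLen returns how many leading bits a and b share, which selects
// the k-bucket b falls into from a's point of view (256 if a == b).
func commonPrefixLen(a, b NodeID) int {
	d := xorDistance(a, b)
	for i, x := range d {
		if x != 0 {
			return i*8 + bits.LeadingZeros8(x)
		}
	}
	return len(d) * 8
}

// closest returns up to k peers nearest to target under the XOR metric.
func closest(target NodeID, peers []NodeID, k int) []NodeID {
	sorted := append([]NodeID(nil), peers...)
	sort.Slice(sorted, func(i, j int) bool {
		di, dj := xorDistance(sorted[i], target), xorDistance(sorted[j], target)
		return bytes.Compare(di[:], dj[:]) < 0
	})
	if len(sorted) > k {
		sorted = sorted[:k]
	}
	return sorted
}

func main() {
	var peers []NodeID
	for i := 1; i <= 50; i++ {
		peers = append(peers, idFor(fmt.Sprintf("agent-%d", i)))
	}
	target := idFor("agent-42")
	nearest := closest(target, peers, 3)
	fmt.Println("nearest is the target itself:", nearest[0] == target)
	fmt.Println("shared prefix with agent-1:", commonPrefixLen(target, peers[0]))
	// Each hop resolves at least one more prefix bit, and about log2(n) bits
	// distinguish n random IDs, so log2(n) is a rough bound on hop count.
	fmt.Println("hop bound for 1e6 nodes:", math.Ceil(math.Log2(1e6)))
}
```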

But Kademlia has real weaknesses you need to plan for:

- Sybil attacks: An adversary floods the DHT with fake nodes to control routing tables.

- Eclipse attacks: A targeted node sees only attacker-controlled peers, isolating it from the real network.

- Churn sensitivity: High node turnover degrades routing accuracy and increases lookup failures.

- Bootstrap dependency: New nodes must contact at least one known peer to join, creating a soft centralization point.
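Churn sensitivity is partly offset by Kademlia's own bucket-update rule from the original paper: when a bucket is full, a node pings its least-recently-seen contact and evicts it only if it fails to respond, so long-lived peers are preferred over newcomers. This also makes it harder for freshly created nodes to displace established contacts. The Go sketch below models a single bucket; `ping` is a hypothetical caller-supplied liveness check, and a real routing table would also track addresses and keep replacement candidates.

```go
package main

import "fmt"

// Bucket is one Kademlia k-bucket, ordered from least- to most-recently seen.
type Bucket struct {
	k     int
	peers []string // peer IDs only; a real table also stores addresses
}

// Seen applies the Kademlia update rule when we hear from peer.
// ping is a hypothetical, caller-supplied liveness check.
func (b *Bucket) Seen(peer string, ping func(string) bool) {
	for i, p := range b.peers {
		if p == peer {
			// Known contact: move it to the tail (most recently seen).
			b.peers = append(append(b.peers[:i:i], b.peers[i+1:]...), peer)
			return
		}
	}
	if len(b.peers) < b.k {
		b.peers = append(b.peers, peer)
		return
	}
	oldest := b.peers[0]
	if ping(oldest) {
		// The long-lived contact is still up: keep it and discard the newcomer.
		b.peers = append(b.peers[1:], oldest)
		return
	}
	// The oldest contact is gone: evict it and admit the newcomer.
	b.peers = append(b.peers[1:], peer)
}

func main() {
	b := &Bucket{k: 2}
	alive := func(string) bool { return true }
	b.Seen("peer-a", alive)
	b.Seen("peer-b", alive)
	b.Seen("peer-c", alive) // bucket full and peer-a answers, so peer-c is dropped
	fmt.Println(b.peers)    // [peer-b peer-a]
}
```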

Blockchain P2P networks vary widely in size, reachability, and peer discovery coverage, which suggests that no single DHT configuration works universally. Bitcoin's network maintains tens of thousands of reachable nodes, while smaller chains struggle with coverage gaps.

“Sybil resistance in DHTs is not a solved problem. Proof-of-work node admission and reputation scoring are the most practical mitigations available today, but both add operational complexity.”
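One common shape for proof-of-work admission, loosely modeled on the crypto puzzles in S/Kademlia, binds the cost to the node's public key: a joining agent must find a nonce whose hash with its key has a given number of leading zero bits, while existing peers verify with a single hash. The layout (key followed by a big-endian nonce), the SHA-256 choice, and the difficulty value are assumptions for this sketch. It only raises the price of each fake identity; it does not stop a well-funded attacker.

```go
package main

import (
	"crypto/ed25519"
	"crypto/rand"
	"crypto/sha256"
	"encoding/binary"
	"fmt"
	"math/bits"
)

// leadingZeroBits counts the zero bits at the start of h.
func leadingZeroBits(h [32]byte) int {
	n := 0
	for _, b := range h {
		if b == 0 {
			n += 8
			continue
		}
		return n + bits.LeadingZeros8(b)
	}
	return n
}

// puzzleHash hashes the public key followed by a big-endian nonce.
func puzzleHash(pub ed25519.PublicKey, nonce uint64) [32]byte {
	buf := make([]byte, len(pub)+8)
	copy(buf, pub)
	binary.BigEndian.PutUint64(buf[len(pub):], nonce)
	return sha256.Sum256(buf)
}

// solve is run by the joining node; expected work is about 2^difficulty hashes.
func solve(pub ed25519.PublicKey, difficulty int) uint64 {
	for nonce := uint64(0); ; nonce++ {
		if leadingZeroBits(puzzleHash(pub, nonce)) >= difficulty {
			return nonce
		}
	}
}

// admit is run by existing peers: a single hash, so verification is cheap.
func admit(pub ed25519.PublicKey, nonce uint64, difficulty int) bool {
	return leadingZeroBits(puzzleHash(pub, nonce)) >= difficulty
}

func main() {
	pub, _, err := ed25519.GenerateKey(rand.Reader)
	if err != nil {
		panic(err)
	}
	const difficulty = 16 // hypothetical; tune so that joining costs seconds, not hours
	nonce := solve(pub, difficulty)
	fmt.Printf("nonce %d admitted: %v\n", nonce, admit(pub, nonce, difficulty))
}
```

Each extra bit of difficulty doubles the expected work for a joining node, so a difficulty of 16 means about 65,000 hashes on average.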

For AI agent fleets, OpenClaw peer discovery offers a practical alternative that combines DHT-style routing with trust-gated admission, reducing Sybil exposure without requiring a central registry.

NAT traversal: The persistent hurdle in decentralized networking

Peer routing only works if connections succeed beyond local network barriers. NAT (Network Address Translation) is the single biggest reason they do not.

DCUtR (Direct Connection Upgrade through Relay) hole punching in libp2p achieves a 70% success rate with a margin of ±7.1 percentage points, and 97.6% of successful connections complete on the first attempt. That is genuinely impressive. But the remaining 30% or so still require relay fallback, which reintroduces partial centralization into your otherwise decentralized stack.
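To make those figures concrete, here is the arithmetic for 1,000 connection attempts, assuming the measured rates carry over to your network (which depends heavily on its NAT mix):

$$
\begin{aligned}
p_{\text{relay}} &= 1 - p_{\text{punch}} = 1 - 0.70 = 0.30, \qquad p_{\text{punch}} \in [0.629,\ 0.771] \;\Rightarrow\; p_{\text{relay}} \in [0.229,\ 0.371] \\
N_{\text{direct}} &\approx 0.70 \times 1000 = 700, \qquad N_{\text{first try}} \approx 0.976 \times 700 \approx 683, \qquad N_{\text{relay}} \approx 300 \ (229 \text{ to } 371)
\end{aligned}
$$

Even at the optimistic end of the margin, more than one connection in five still needs a relay.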

Here is a practical sequence for handling NAT traversal in production:

- Attempt direct hole punching using DCUtR or ICE (Interactive Connectivity Establishment).

- Enable UPnP or NAT-PMP on supported routers to request port mappings automatically.

- Implement self-healing UPnP to detect and recover from stale mappings. Together with deduplication of mapping requests, self-healing reduces mapping failures from 10 to 2 per session.

- Fall back to TURN-style relays only when direct connection fails, and monitor relay usage as a health metric.

- Log and alert on relay rate. If more than 15% of your connections use relays, your NAT traversal strategy needs tuning.
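The checklist above can be sketched as an ordered list of strategies plus a relay-rate monitor. This Go version is illustrative: the `Attempt` hooks and the `fail`/`succeed` stand-ins are hypothetical placeholders for whatever your stack provides (for example, libp2p's hole punching and circuit relay), and the 15% threshold is the rule of thumb from the list.

```go
package main

import (
	"errors"
	"fmt"
)

// Path records how a connection was finally established.
type Path string

const (
	Direct  Path = "direct" // hole punch (DCUtR or ICE) succeeded
	Mapped  Path = "upnp"   // a UPnP/NAT-PMP port mapping made us dialable
	Relayed Path = "relay"  // traffic flows through a relay
)

// Attempt is one connection strategy; Try is a hypothetical hook into your stack.
type Attempt struct {
	Path Path
	Try  func() error
}

// connect tries strategies in order and returns the first path that works.
func connect(attempts []Attempt) (Path, error) {
	var errs []error
	for _, a := range attempts {
		err := a.Try()
		if err == nil {
			return a.Path, nil
		}
		errs = append(errs, fmt.Errorf("%s: %w", a.Path, err))
	}
	if len(errs) == 0 {
		return "", errors.New("no connection strategies configured")
	}
	return "", errors.Join(errs...)
}

// fail and succeed are stand-ins for real dial attempts in this sketch.
func fail(reason string) func() error { return func() error { return errors.New(reason) } }
func succeed() error                  { return nil }

// RelayMonitor tracks the share of connections that ended up on a relay.
type RelayMonitor struct{ total, relayed int }

func (m *RelayMonitor) Record(p Path) {
	m.total++
	if p == Relayed {
		m.relayed++
	}
}

func (m *RelayMonitor) Rate() float64 {
	if m.total == 0 {
		return 0
	}
	return float64(m.relayed) / float64(m.total)
}

// ShouldAlert applies the 15% relay-rate threshold.
func (m *RelayMonitor) ShouldAlert() bool { return m.Rate() > 0.15 }

func main() {
	path, err := connect([]Attempt{
		{Direct, fail("symmetric NAT")},
		{Mapped, fail("no UPnP gateway")},
		{Relayed, succeed},
	})
	if err != nil {
		panic(err)
	}
	var m RelayMonitor
	m.Record(path)
	for i := 0; i < 9; i++ {
		m.Record(Direct)
	}
	fmt.Printf("relay rate %.0f%%, alert=%v\n", m.Rate()*100, m.ShouldAlert())
}
```

Recording the final path for every connection, not only the failures, is what makes the relay rate meaningful as a health metric.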

Pro Tip: In multi-cloud deployments, symmetric NAT is common and blocks most hole-punching attempts. Test your traversal strategy across AWS, GCP, and Azure before you deploy agents at scale. A NAT traversal deep dive can help you map out failure modes specific to each cloud provider.
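Whether hole punching can work depends largely on the NAT's mapping behavior, in RFC 4787 terms. If the same local socket appears under one public address and port to every remote observer (endpoint-independent mapping), punching usually works; if the mapping changes per destination (the classic "symmetric" NAT), it usually fails. The Go sketch classifies a set of observations, such as those reported by STUN servers or peers; the types and function names are hypothetical, and the check needs observers on at least two different IP addresses.

```go
package main

import (
	"fmt"
	"net/netip"
)

// Observation is the public address a remote observer reported for one local socket.
type Observation struct {
	Observer netip.AddrPort // who we talked to
	Mapped   netip.AddrPort // how our socket looked to them
}

func newObservation(observer, mapped string) Observation {
	return Observation{netip.MustParseAddrPort(observer), netip.MustParseAddrPort(mapped)}
}

type Mapping string

const (
	EndpointIndependent Mapping = "endpoint-independent"
	EndpointDependent   Mapping = "endpoint-dependent (symmetric)"
	Inconclusive        Mapping = "inconclusive"
)

// classify compares the mapped addresses seen by different observer IPs.
func classify(obs []Observation) Mapping {
	observers := make(map[netip.Addr]bool)
	mapped := make(map[netip.AddrPort]bool)
	for _, o := range obs {
		observers[o.Observer.Addr()] = true
		mapped[o.Mapped] = true
	}
	if len(observers) < 2 {
		return Inconclusive // need at least two observer IPs to compare
	}
	if len(mapped) == 1 {
		return EndpointIndependent
	}
	return EndpointDependent
}

func main() {
	cone := []Observation{
		newObservation("192.0.2.10:3478", "203.0.113.5:40000"),
		newObservation("198.51.100.20:3478", "203.0.113.5:40000"),
	}
	symmetric := []Observation{
		newObservation("192.0.2.10:3478", "203.0.113.5:40000"),
		newObservation("198.51.100.20:3478", "203.0.113.5:40123"),
	}
	fmt.Println(classify(cone))
	fmt.Println(classify(symmetric))
}
```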

For agents running behind restrictive firewalls, zero-config NAT traversal for agents and approaches for connecting agents behind NAT without a VPN are worth reviewing before you commit to a relay-heavy architecture. If your agents span multiple clouds, connecting them across clouds without a VPN is achievable with the right overlay design.

Secure messaging: End-to-end encryption for distributed agents

Ensuring that communication stays both robust and private is the next piece of the puzzle.

You have three main options for end-to-end encryption (E2EE) in agent networks:

- Messaging Layer Security (MLS): Designed for large-group E2EE. MLS uses a tree-based key agreement (TreeKEM) that scales efficiently, but it relies on an Authentication Service (AS) to bind credentials to member identities, and that trust anchor is especially sensitive at group initialization. If the AS is compromised, an attacker can bind impostor credentials to identities, and group membership cannot be trusted from the start.

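- Signal Double Ratchet: Provides forward secrecy and break-in (post-compromise) recovery for pairwise sessions. For small groups under 1000 agents, Signal-style Sender Keys, distributed to members over those pairwise sessions, allow broadcast-style messaging with simpler trust assumptions than MLS, though their post-compromise recovery is weaker than that of pairwise ratchets.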

- X25519 plus AES-GCM: A minimal option that gives you elliptic curve key exchange and authenticated encryption without pulling in a full protocol library. It is the right choice when you control both endpoints and want minimal attack surface, but you must handle key derivation, nonce uniqueness, and peer key authentication yourself.
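To see what Sender Keys add, here is a Go sketch of the per-sender symmetric ratchet. The HMAC-SHA256 construction with input byte 0x01 for the message key and 0x02 for the next chain key follows the KDF recommendation in Signal's Double Ratchet specification; the `SenderChain` type is hypothetical, and real sender-key implementations also sign every message and distribute the initial chain key over pairwise sessions, both of which this sketch omits.

```go
package main

import (
	"crypto/hmac"
	"crypto/sha256"
	"fmt"
)

// SenderChain is one sender's symmetric ratchet in a sender-keys scheme.
type SenderChain struct {
	chainKey  []byte
	iteration uint32
}

// hmacByte computes HMAC-SHA256 keyed with key over a single input byte.
func hmacByte(key []byte, input byte) []byte {
	m := hmac.New(sha256.New, key)
	m.Write([]byte{input})
	return m.Sum(nil)
}

// Next returns the key for the current message and advances the chain.
// The previous chain key is replaced (a real implementation should also zero it).
func (c *SenderChain) Next() ([]byte, uint32) {
	msgKey := hmacByte(c.chainKey, 0x01)
	n := c.iteration
	c.chainKey = hmacByte(c.chainKey, 0x02)
	c.iteration++
	return msgKey, n
}

func main() {
	// In practice the seed is 32 random bytes sent to each member over pairwise sessions.
	seed := []byte("example initial chain key, 32 B")
	sender := &SenderChain{chainKey: seed}
	receiver := &SenderChain{chainKey: append([]byte(nil), seed...)}
	for i := 0; i < 3; i++ {
		sk, n := sender.Next()
		rk, _ := receiver.Next()
		fmt.Printf("message %d keys match: %v\n", n, hmac.Equal(sk, rk))
	}
}
```

Because each chain key is replaced after use, old message keys cannot be recomputed from stolen state, which is the forward-secrecy property. The reverse does not hold: anyone holding the current chain key can derive every future message key until the sender rotates to a fresh chain, which is why Sender Keys recover from compromise less well than pairwise ratchets.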

Pro Tip: Match your encryption protocol to your group size and trust model. For fewer than 1000 agents with known identities, Signal’s Double Ratchet is simpler and more auditable. For larger, dynamic groups where membership changes frequently, MLS scales better but requires careful AS design. For direct agent-to-agent tunnels, X25519 AES-GCM encryption is fast, auditable, and easy to implement.
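A minimal Go sketch of an X25519 plus AES-GCM tunnel using only the standard library (`crypto/ecdh` requires Go 1.20 or later). The shared secret is passed through HKDF-SHA256, hand-rolled here for a single 32-byte block, before being used as an AES-256 key; the context label is hypothetical. As written, keys are generated per run; in a real deployment you would also authenticate each peer's public key (for example, with its Ed25519 identity) to prevent man-in-the-middle attacks, and use fresh ephemeral keys per session if you need forward secrecy.

```go
package main

import (
	"crypto/aes"
	"crypto/cipher"
	"crypto/ecdh"
	"crypto/hmac"
	"crypto/rand"
	"crypto/sha256"
	"errors"
	"fmt"
)

// hkdfSHA256 is RFC 5869 HKDF-SHA256 truncated to one 32-byte output block.
func hkdfSHA256(secret, salt, info []byte) []byte {
	extract := hmac.New(sha256.New, salt)
	extract.Write(secret)
	prk := extract.Sum(nil)
	expand := hmac.New(sha256.New, prk)
	expand.Write(info)
	expand.Write([]byte{0x01})
	return expand.Sum(nil)
}

// tunnelAEAD turns an X25519 key agreement into an AES-256-GCM cipher.
func tunnelAEAD(priv *ecdh.PrivateKey, peer *ecdh.PublicKey) (cipher.AEAD, error) {
	shared, err := priv.ECDH(peer)
	if err != nil {
		return nil, err
	}
	key := hkdfSHA256(shared, nil, []byte("agent-tunnel v1")) // hypothetical context label
	block, err := aes.NewCipher(key)
	if err != nil {
		return nil, err
	}
	return cipher.NewGCM(block)
}

// sealMsg encrypts and authenticates plaintext, prefixing a random 12-byte nonce.
func sealMsg(aead cipher.AEAD, plaintext []byte) ([]byte, error) {
	nonce := make([]byte, aead.NonceSize())
	if _, err := rand.Read(nonce); err != nil {
		return nil, err
	}
	return aead.Seal(nonce, nonce, plaintext, nil), nil
}

// openMsg splits off the nonce and decrypts; any tampering yields an error.
func openMsg(aead cipher.AEAD, msg []byte) ([]byte, error) {
	n := aead.NonceSize()
	if len(msg) < n {
		return nil, errors.New("message shorter than nonce")
	}
	return aead.Open(nil, msg[:n], msg[n:], nil)
}

// demoPair generates two fresh X25519 keys and derives each side's cipher.
func demoPair() (cipher.AEAD, cipher.AEAD, error) {
	curve := ecdh.X25519()
	alice, err := curve.GenerateKey(rand.Reader)
	if err != nil {
		return nil, nil, err
	}
	bob, err := curve.GenerateKey(rand.Reader)
	if err != nil {
		return nil, nil, err
	}
	a, err := tunnelAEAD(alice, bob.PublicKey())
	if err != nil {
		return nil, nil, err
	}
	b, err := tunnelAEAD(bob, alice.PublicKey())
	return a, b, err
}

func main() {
	a, b, err := demoPair()
	if err != nil {
		panic(err)
	}
	ct, err := sealMsg(a, []byte("task: summarize shard 7"))
	if err != nil {
		panic(err)
	}
	pt, err := openMsg(b, ct)
	if err != nil {
		panic(err)
	}
	fmt.Println(string(pt))
}
```

Random 96-bit nonces are safe for a moderate number of messages per key; long-lived, high-volume tunnels usually switch to counter-based nonces or rekey periodically.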

If you are running HTTP services over encrypted overlays, wrapping your existing HTTP or gRPC traffic inside an encrypted tunnel is often simpler than retrofitting application-layer E2EE onto every service.

Protocol selection: Real-world scenarios for distributed AI systems

With the underlying components mapped, let’s look at how protocol choices play out in actual AI deployments.

Consider a fleet of 500 AI agents distributed across three cloud providers and several on-premise networks. Your requirements are low-latency agent-to-agent messaging, secure data streaming, and resilience to network churn. Here is how a practical stack looks:

- Peer identity: Ed25519 keys per agent, registered at startup.

- Discovery: Kademlia DHT with Sybil-resistant admission (proof-of-work or token-gated join).

- Transport: QUIC for low-latency connections, TCP fallback for restrictive firewalls.

- NAT traversal: DCUtR hole punching with UPnP self-healing and relay fallback.

- Encryption: X25519 plus AES-GCM for pairwise tunnels, Signal sender keys for broadcast topics.

- Pubsub: Gossipsub for topic-based agent coordination.
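The peer-identity layer of this stack can be sketched with Go's standard `crypto/ed25519` package: each agent holds a keypair, derives a short display ID from its public key, and signs the addresses it advertises so that any peer can verify them without a registry. `ShortID` and the announcement format are hypothetical; libp2p, for instance, derives peer IDs with a multihash-based encoding and uses signed peer records instead.

```go
package main

import (
	"crypto/ed25519"
	"crypto/rand"
	"crypto/sha256"
	"encoding/hex"
	"fmt"
)

// Identity is an agent's long-lived Ed25519 keypair, generated once and persisted.
type Identity struct {
	Public  ed25519.PublicKey
	private ed25519.PrivateKey
}

func NewIdentity() (*Identity, error) {
	pub, priv, err := ed25519.GenerateKey(rand.Reader)
	if err != nil {
		return nil, err
	}
	return &Identity{Public: pub, private: priv}, nil
}

// ShortID is a hypothetical display ID: the first 8 bytes of SHA-256 of the key, in hex.
func ShortID(pub ed25519.PublicKey) string {
	sum := sha256.Sum256(pub)
	return hex.EncodeToString(sum[:8])
}

// SignAnnouncement signs the addresses an agent advertises.
func (id *Identity) SignAnnouncement(addrs string) []byte {
	return ed25519.Sign(id.private, []byte(addrs))
}

// VerifyAnnouncement checks an announcement against the sender's public key.
func VerifyAnnouncement(pub ed25519.PublicKey, addrs string, sig []byte) bool {
	return len(pub) == ed25519.PublicKeySize && ed25519.Verify(pub, []byte(addrs), sig)
}

func main() {
	id, err := NewIdentity()
	if err != nil {
		panic(err)
	}
	addrs := "/ip4/198.51.100.7/udp/4001/quic-v1"
	sig := id.SignAnnouncement(addrs)
	fmt.Println("agent", ShortID(id.Public))
	fmt.Println("valid:", VerifyAnnouncement(id.Public, addrs, sig))
	fmt.Println("tampered:", VerifyAnnouncement(id.Public, addrs+"/x", sig))
}
```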

Libp2p implementations in Go, JavaScript, and Rust are used in IPFS and blockchain networks at this scale, which gives you production-tested code rather than greenfield risk.

Here is a quick decision table for protocol selection:

| Scenario | Recommended stack | Key trade-off |
|---|---|---|
| Small agent fleet, known peers | X25519 tunnels, mDNS discovery | Simple but does not scale beyond LAN |
| Large fleet, dynamic membership | Kademlia DHT, MLS, gossipsub | Complex trust setup |
| Cross-cloud, NAT-heavy | DCUtR, QUIC, relay fallback | Relay cost if hole punching fails |
| Legacy HTTP/gRPC integration | Encrypted overlay wrapping | Overhead of tunnel encapsulation |

For file transfer scenarios and real-world protocol integration, the overlay approach is generally more flexible than point-to-point VPN tunnels. The Pilot Protocol overview formalizes many of these patterns into a coherent network-layer specification.

Pilot Protocol: Decentralized communication for autonomous agents

For teams ready to take the next step, here is how Pilot Protocol translates these concepts into action.

Pilot Protocol is built specifically for AI agents and distributed systems that need secure, direct peer-to-peer communication without centralized brokers. It handles peer discovery, NAT traversal, encrypted tunnels, and trust establishment out of the box, so you spend less time on infrastructure and more time building agent logic.

The network-layer problem statement behind Pilot Protocol directly addresses the NAT traversal, Sybil resistance, and E2EE challenges covered in this guide. The Pilot Protocol IETF draft provides the full technical specification if you want to evaluate it against your architecture requirements. SDKs for Go and Python and a unified CLI make integration straightforward for teams already working in these ecosystems.

Frequently asked questions

What is the biggest challenge for decentralized peer-to-peer communication in AI systems?

NAT traversal failure rates remain the most persistent problem, with up to 30% of connections failing in pure P2P deployments and requiring fallback solutions like relays to maintain connectivity.

How does Kademlia DHT enable efficient peer discovery?

Kademlia uses XOR-based distance metrics for O(log n) lookups, enabling fast and scalable peer routing, but you need additional security steps like proof-of-work admission to resist Sybil attacks.

Which encryption protocol should I use for secure group messaging among distributed agents?

For groups smaller than 1000, Signal's protocol (pairwise Double Ratchet sessions, with Sender Keys for broadcast) is typically the better choice. For larger, dynamic groups, MLS offers scalability but introduces trust trade-offs at the Authentication Service layer.

Are decentralized communication protocols viable for large-scale AI agent deployments?

Yes. Proven stacks like libp2p support thousands of nodes, but real-world peer reachability varies significantly depending on network churn, NAT types, and discovery coverage across different environments.