Legacy protocol integration for secure distributed AI

TL;DR:

- Connecting legacy protocols to decentralized AI networks no longer requires complete system overhauls, thanks to modern middleware, protocol bridges, and P2P overlays.

- Hybrid integration approaches, combining middleware, gateways, and protocol wrapping, provide scalable, secure, and resilient solutions adaptable to complex operational environments.

Legacy protocol integration with decentralized AI networks is widely assumed to require massive re-architecture, long timelines, and specialized expertise that most teams simply don’t have. That assumption is wrong. Modern tooling, including middleware layers, protocol bridges, and P2P overlay networks, now lets you connect HTTP, SOAP, Modbus, and other established protocols to distributed agent systems without complete system overhauls. This article covers the frameworks, security strategies, and edge cases you need to know to build resilient, production-ready integrations.

Table of Contents

- Why is legacy protocol integration needed in distributed AI?

- Core integration frameworks: Middleware, gateways, and protocol bridges

- Securing data exchange: Gateways, oracles, and privacy risks

- Edge cases and best practices: NAT, firewalls, and key management

- A candid perspective: Why hybrid integration wins for distributed AI

- Accelerate secure legacy integration with Pilot Protocol

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Integration essentials | Middleware, gateways, and protocol bridges form the backbone for secure legacy-decentralized connectivity. |
| Security best practices | Prioritize multi-gateway setups, modern cryptography, and certified oracles to mitigate vulnerabilities. |
| Key operational challenges | Address NAT, firewalls, and credential management using tools like relays, HSMs, and protocol auto-bridges. |
| Hybrid approach wins | Gradual, layered integration reduces risk versus abrupt system rewrites, supporting robust distributed AI deployments. |

Why is legacy protocol integration needed in distributed AI?

Distributed AI architectures don’t operate in a vacuum. They run alongside industrial controllers, enterprise APIs, IoT sensors, and data platforms that were built years or even decades before peer-to-peer networking became viable. You can’t simply swap those systems out. The business logic, regulatory requirements, and operational dependencies run too deep.

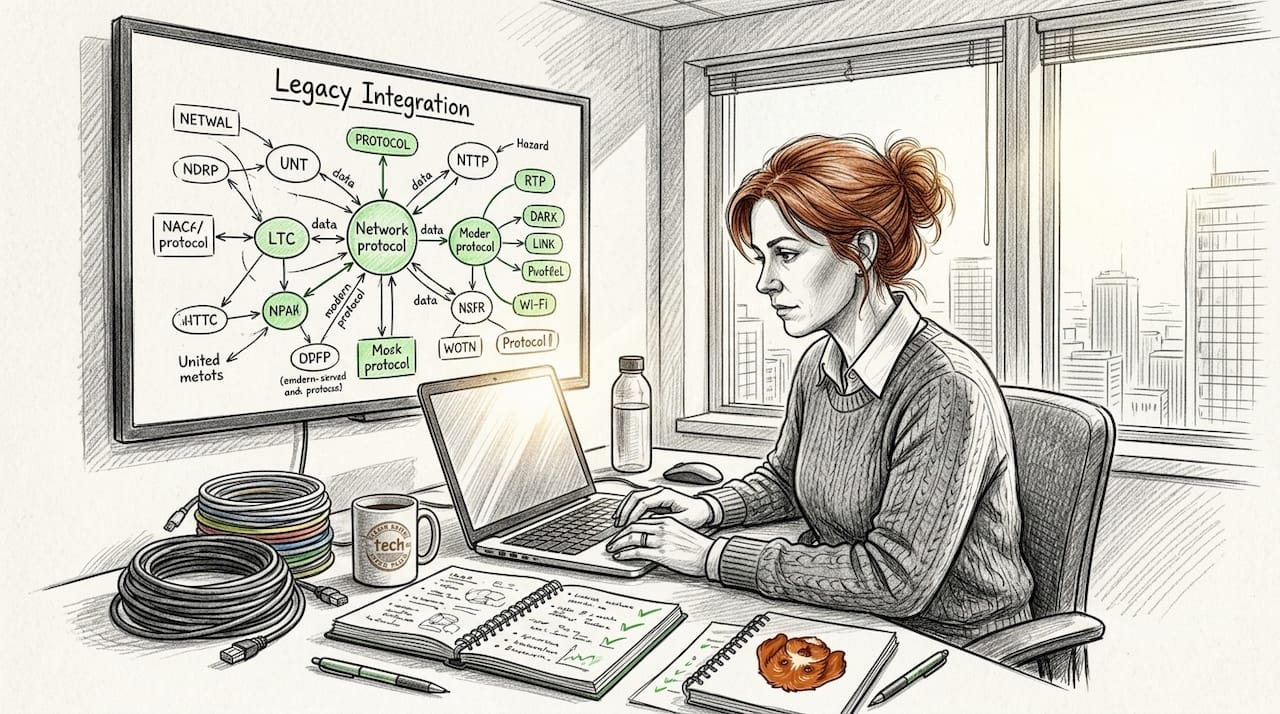

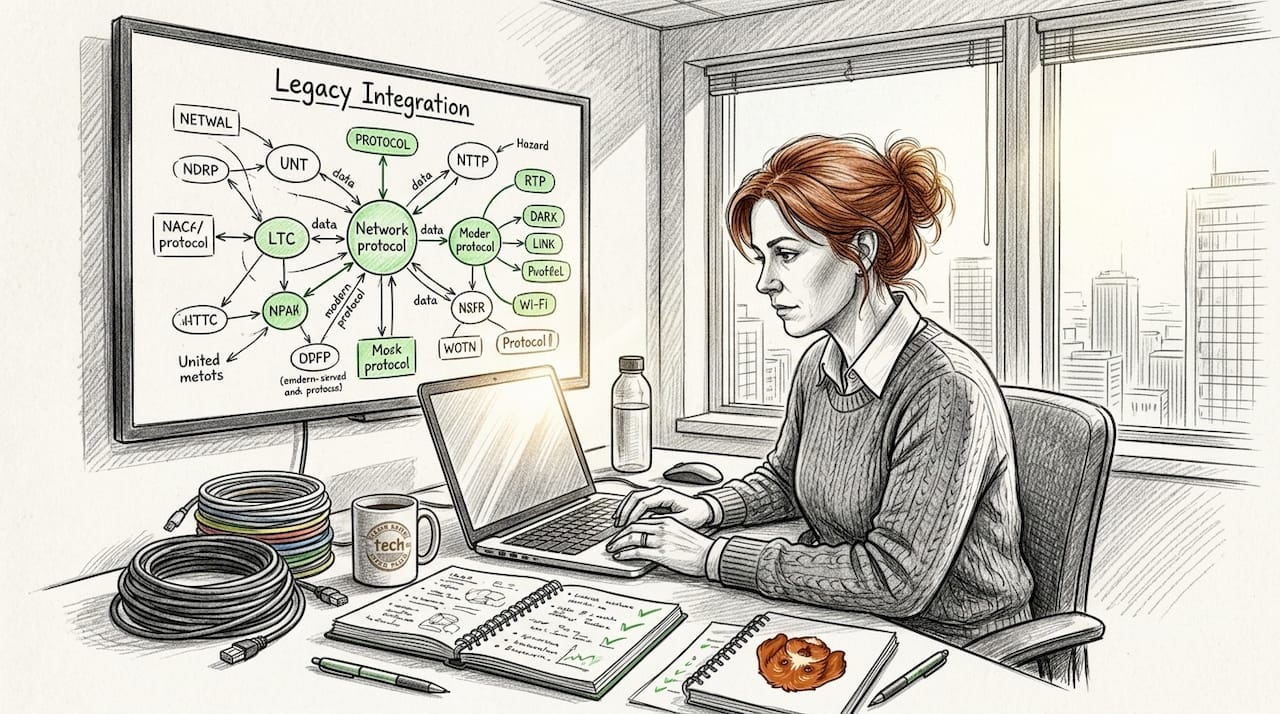

The core challenge is this: legacy protocols like HTTP, Modbus, and SOAP were designed for centralized, request-response environments. Distributed AI agent swarms, on the other hand, need dynamic discovery, mutual authentication, and resilient communication across cloud regions and network boundaries. Bridging that gap without breaking existing workflows is where integration architecture earns its value.

Here are the most common pain points engineers run into:

- Protocol mismatch: Legacy systems speak synchronous request-response; decentralized networks often use pub-sub, gossip, or event-driven messaging.

- Security gaps: Older protocols frequently rely on network-level trust rather than cryptographic identity, which creates serious exposure when you connect them to open P2P environments.

- NAT and firewall barriers: Industrial and enterprise systems sit behind restrictive networks that block peer discovery.

- Auditability: Decentralized systems require verifiable, tamper-resistant logs that legacy protocols were never designed to produce.
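The protocol mismatch in the first point can be illustrated with a small adapter. This is a minimal sketch, not a production component: `PollToEventAdapter` and the `read_legacy` callable are hypothetical names, and the legacy system is assumed to return a flat dictionary of numeric readings on each synchronous call.

```python
from typing import Callable, Dict, List, Optional

Reading = Dict[str, float]
Handler = Callable[[str, float], None]


class PollToEventAdapter:
    """Turns repeated synchronous reads into change events for subscribers."""

    def __init__(self, read_legacy: Callable[[], Reading]) -> None:
        self._read_legacy = read_legacy  # hypothetical blocking request-response call
        self._last: Optional[Reading] = None
        self._subscribers: List[Handler] = []

    def subscribe(self, handler: Handler) -> None:
        self._subscribers.append(handler)

    def poll_once(self) -> int:
        """Read the legacy system once and publish only the values that changed."""
        current = self._read_legacy()
        previous = self._last or {}
        changed = {k: v for k, v in current.items() if previous.get(k) != v}
        for key, value in changed.items():
            for handler in self._subscribers:
                handler(key, value)
        self._last = current
        return len(changed)  # number of events published
```

The legacy side keeps its request-response contract, while the decentralized side only sees events. A scheduler would call `poll_once` at whatever interval the legacy system tolerates.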

“P2P stacks like libp2p and Pilot enable agent swarms with legacy HTTP compatibility via proxies, enhancing secure machine-to-machine communications in distributed setups.”

This is why the integration question matters so much right now. AI agent deployments are moving from controlled cloud environments into heterogeneous infrastructure where legacy and decentralized systems must coexist. Understanding decentralized communication protocols is a necessary foundation before you pick any integration pattern.

Now that you understand the big-picture challenge, let’s clarify the frameworks and tools available.

Core integration frameworks: Middleware, gateways, and protocol bridges

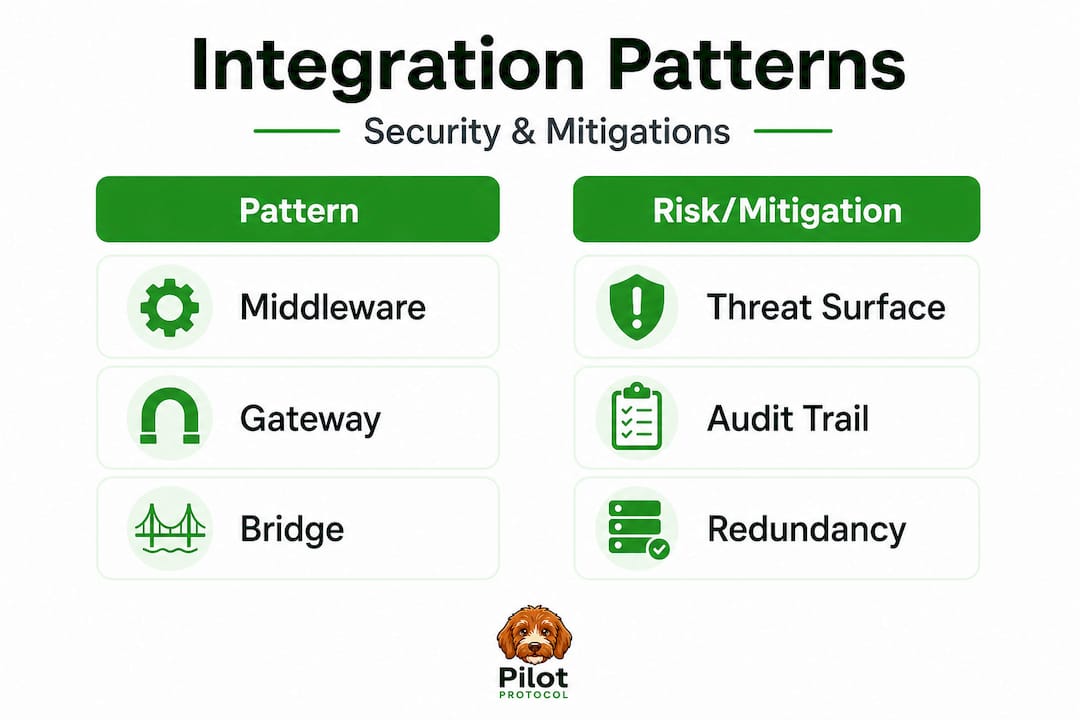

Three architectural patterns dominate real-world legacy-to-decentralized integration. Each solves a different set of problems, and each carries different tradeoffs. Understanding when to use which one is the skill that separates solid integrations from brittle ones.

Middleware sits between your legacy system and the decentralized network. It handles translation, event routing, and protocol normalization without touching either end system. Chainlink’s middleware abstraction layers like CRE and CCIP, for example, connect legacy REST and GraphQL APIs via oracles that handle blockchain events and data pushes without direct chain exposure. Middleware is flexible but adds latency and operational overhead.
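To make the normalization role concrete, here is a minimal sketch of a middleware step that maps two legacy payload formats onto one event shape. The `Event` fields, the `Metric` and `Value` element names, and the content-type keys are assumptions chosen for illustration; they do not come from Chainlink or any other product.

```python
import json
import xml.etree.ElementTree as ET
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    source: str   # which legacy interface produced the reading
    metric: str
    value: float


def from_rest_json(body: str) -> Event:
    doc = json.loads(body)
    return Event(source="rest", metric=doc["metric"], value=float(doc["value"]))


def from_soap_xml(body: str) -> Event:
    # Only parse XML from trusted legacy systems; ElementTree is not hardened
    # against maliciously constructed documents.
    root = ET.fromstring(body)
    # Index elements by local tag name so namespace prefixes do not matter.
    fields = {el.tag.split("}")[-1]: el.text for el in root.iter()}
    return Event(source="soap", metric=fields["Metric"], value=float(fields["Value"]))


NORMALIZERS = {"application/json": from_rest_json, "text/xml": from_soap_xml}


def normalize(content_type: str, body: str) -> Event:
    """Translate a legacy payload into the common event shape."""
    handler = NORMALIZERS.get(content_type)
    if handler is None:
        raise ValueError(f"unsupported content type: {content_type}")
    return handler(body)
```

Neither end system changes: each legacy format gets its own small translator, and everything downstream handles a single `Event` type.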

Gateways act as controlled entry points that translate incoming requests from one protocol space to another. They are fast and well-understood but introduce centralization risk. If the gateway goes down, connectivity stops.

Protocol bridges wrap one protocol inside another, allowing two incompatible systems to communicate without either side changing. libp2p, for instance, enables P2P integration via hybrid transports, circuit relays for NAT traversal, and protocol bridges that wrap legacy HTTP and TCP into P2P streams, allowing OpenAI-compatible endpoints to operate over decentralized networks. This is where wrapping legacy protocols becomes a practical skill rather than a theoretical one.
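As an illustration of wrapping, the sketch below builds a standard Modbus TCP "read holding registers" request and places it, unchanged, inside a length-prefixed frame that a P2P stream could carry. The envelope layout and the `PROTOCOL_ID` label are assumptions made for this example; this is not libp2p's actual wire format, which negotiates protocols with its own mechanism.

```python
import struct
from typing import Tuple

PROTOCOL_ID = b"/legacy/modbus/1.0"  # hypothetical stream protocol label


def modbus_read_holding_registers(transaction_id: int, unit_id: int,
                                  start_addr: int, count: int) -> bytes:
    """Build a Modbus TCP request: MBAP header followed by a function 0x03 PDU."""
    pdu = struct.pack(">BHH", 0x03, start_addr, count)
    # MBAP: transaction id, protocol id (always 0), remaining length, unit id.
    mbap = struct.pack(">HHHB", transaction_id, 0, len(pdu) + 1, unit_id)
    return mbap + pdu


def wrap(payload: bytes) -> bytes:
    """Prefix the untouched legacy bytes with a protocol label and a length."""
    header = struct.pack(">B", len(PROTOCOL_ID)) + PROTOCOL_ID
    return header + struct.pack(">I", len(payload)) + payload


def unwrap(frame: bytes) -> Tuple[bytes, bytes]:
    """Recover the protocol label and the original legacy bytes."""
    id_len = frame[0]
    protocol = frame[1:1 + id_len]
    (size,) = struct.unpack_from(">I", frame, 1 + id_len)
    start = 1 + id_len + 4
    payload = frame[start:start + size]
    if len(payload) != size:
        raise ValueError("truncated frame")
    return protocol, payload
```

The bridge on the far side calls `unwrap` and forwards the payload to the device byte for byte, so the Modbus hardware never sees anything but ordinary Modbus TCP.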

Here’s a comparison to help you choose:

| Approach | Security | Flexibility | Auditability | Best for |
|---|---|---|---|---|
| Middleware | High, configurable | Very high | Strong, centralized logs | Enterprise API integration, oracle pipelines |
| Gateway | Medium, depends on config | Medium | Moderate | HTTP-to-P2P translation, browser access to decentralized storage |
| Protocol bridge | High, cryptographic | High | Distributed, verifiable | Wrapping Modbus, SOAP, or HTTP into P2P streams |

For specific scenarios, here’s a quick guide:

- Use middleware when you need event-driven workflows between blockchain smart contracts and legacy REST APIs.

- Use a gateway when legacy clients need read access to decentralized storage or P2P networks without any code changes.

- Use a protocol bridge when you need to wrap industrial protocols like Modbus for AI agent communication without upgrading hardware.

- Use hybrid combinations for high-availability deployments where a single failure mode is unacceptable.

Exploring protocol wrapping methods in detail will help you implement these patterns correctly. For teams that need external support during complex migrations, software integration solutions can provide additional engineering guidance.

Pro Tip: Gradual migration using protocol bridges is consistently safer than big-bang rewrites. Wrap your legacy endpoints in a protocol bridge first, validate behavior under production load, then incrementally replace legacy logic. This approach lets you prove correctness at each step and gives you a rollback path if something breaks.

Armed with this toolbox, it’s critical to understand the security implications of each integration pattern.

Securing data exchange: Gateways, oracles, and privacy risks

Every integration pattern introduces a specific threat surface. Engineers who treat security as a post-deployment concern end up with hard-to-fix vulnerabilities. Address them at the design stage.

The risks vary significantly by architecture. Here’s a focused breakdown:

| Architecture | Key risk | Availability concern | Recommended mitigation |
|---|---|---|---|
| Gateway | Single point of failure, data exfiltration | High if centralized | Multi-gateway deployment, DNSLink fallback |
| Oracle | Oracle self-deception, stale data feeds | Medium | Threshold consensus, multiple data sources |
| Proxy/bridge | Credential exposure, replay attacks | Low with proper config | Mutual TLS, post-quantum crypto |
| Middleware | Centralized bottleneck, auth bypass | Medium | Rate limiting, anomaly detection |
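The oracle mitigation in the table (threshold consensus over multiple data sources) can be sketched as follows. The `Report` fields and the default thresholds are assumptions picked for illustration; a real deployment would tune them per feed and would normally also verify a signature on each report.

```python
import statistics
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Report:
    source: str         # identifier of the oracle node or data source
    value: float
    age_seconds: float  # how old the reading is


def aggregate(reports: Iterable[Report], quorum: int = 3,
              max_age_seconds: float = 30.0, max_spread: float = 0.05) -> float:
    """Return a consensus value, or raise if too few fresh sources agree."""
    # Drop stale feeds, then keep one report per source so that a single
    # node cannot vote twice.
    fresh = {r.source: r for r in reports if r.age_seconds <= max_age_seconds}
    if len(fresh) < quorum:
        raise ValueError("not enough fresh sources")
    median = statistics.median(r.value for r in fresh.values())
    # Keep only the values within max_spread (relative) of the median.
    agreeing = [r.value for r in fresh.values()
                if abs(r.value - median) <= max_spread * abs(median)]
    if len(agreeing) < quorum:
        raise ValueError("sources disagree beyond the allowed spread")
    return statistics.median(agreeing)
```

Because the result depends on a quorum of independent sources, a single node asserting a false value falls outside the spread and is discarded instead of being trusted.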

IPFS and BTFS gateways bridge HTTP clients to decentralized storage by translating CIDs to HTTP paths, enabling legacy browsers and apps to access content. However, they introduce serious centralization risk if gateways fail or are compromised. This is a design tension you need to resolve explicitly, not hope away.

Pro Tip: Deploy multiple independent gateways across separate providers, and publish content under a DNSLink name so clients are not tied to any single gateway’s URL. Combined with DNS-level or client-side failover to the fastest available instance, this significantly reduces single-point-of-failure risk and keeps availability high during planned maintenance or unexpected downtime.
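On the client side, a multi-gateway setup can be as simple as trying each gateway in turn. The sketch below assumes path-style gateway URLs of the form `<gateway>/ipfs/<cid>` and takes the HTTP call as an injected `fetch` function; the function names and the gateway hostnames are placeholders, not real endpoints.

```python
from typing import Callable, Iterable, List


def gateway_url(gateway: str, cid: str, path: str = "") -> str:
    """Translate a CID (plus optional sub-path) into a path-style gateway URL."""
    url = f"{gateway.rstrip('/')}/ipfs/{cid}"
    return f"{url}/{path.lstrip('/')}" if path else url


def fetch_with_failover(gateways: Iterable[str], cid: str,
                        fetch: Callable[[str], bytes], path: str = "") -> bytes:
    """Try each gateway in order and return the first successful response."""
    errors: List[str] = []
    for gateway in gateways:
        url = gateway_url(gateway, cid, path)
        try:
            return fetch(url)
        except OSError as exc:  # covers timeouts, refused connections, URLError
            errors.append(f"{url}: {exc}")
    raise ConnectionError("all gateways failed: " + "; ".join(errors))
```

In practice `fetch` could be a thin wrapper around `urllib.request.urlopen` with a timeout. Since content addressed by CID is self-verifying, the client can also check the returned bytes against the CID rather than trusting whichever gateway answered.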

For industrial environments, the security picture is more complex. Many legacy protocols like Modbus and SOAP were designed with zero built-in cryptographic identity. Proxies and translation tunnels now use DIDs (Decentralized Identifiers), Verifiable Credentials, post-quantum cryptography, and DHTs as Verifiable Data Registries to secure legacy industrial protocols in decentralized setups without requiring hardware upgrades. That’s a meaningful advancement.

Here are the essential practices to prevent breaches when wrapping industrial protocols:

- Assign a DID to every legacy device at the integration boundary rather than relying on IP-based identity.

- Enforce mutual authentication on every session, not just the initial handshake.

- Use post-quantum key exchange algorithms for new integrations given the advancing timeline on quantum computing threats.

- Log all cross-boundary data flows to an immutable, distributed ledger for compliance and forensic purposes.

- Rotate credentials on a fixed schedule using automated tooling rather than manual processes.
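The second practice, authenticating every message and not only the handshake, can be sketched with a keyed MAC plus replay protection. This example deliberately uses a shared-secret HMAC as a stand-in; a DID-based deployment would use asymmetric signatures tied to each device's identifier. The class name, the `did:example:` identifiers, and the 30-second skew window are assumptions.

```python
import hashlib
import hmac
import time
from typing import Optional, Set, Tuple


def _encode(*fields: bytes) -> bytes:
    # Length-prefix each field so that field boundaries are unambiguous.
    return b"".join(len(f).to_bytes(4, "big") + f for f in fields)


class MessageAuthenticator:
    """Checks an HMAC tag on every message and rejects replays."""

    def __init__(self, key: bytes, max_skew_seconds: float = 30.0) -> None:
        self._key = key
        self._max_skew = max_skew_seconds
        # Seen (device, nonce) pairs; unbounded here, so real code would expire them.
        self._seen: Set[Tuple[str, str]] = set()

    def sign(self, device_id: str, nonce: str, timestamp: int, payload: bytes) -> str:
        message = _encode(device_id.encode(), nonce.encode(),
                          str(timestamp).encode(), payload)
        return hmac.new(self._key, message, hashlib.sha256).hexdigest()

    def verify(self, device_id: str, nonce: str, timestamp: int, payload: bytes,
               tag: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        if abs(now - timestamp) > self._max_skew:
            return False  # too old or too far in the future
        if (device_id, nonce) in self._seen:
            return False  # replayed message
        expected = self.sign(device_id, nonce, timestamp, payload)
        if not hmac.compare_digest(expected, tag):
            return False  # wrong key or tampered content
        self._seen.add((device_id, nonce))
        return True
```

Binding the device identifier, nonce, and timestamp into the tag means a captured message cannot be resent later or attributed to a different device.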

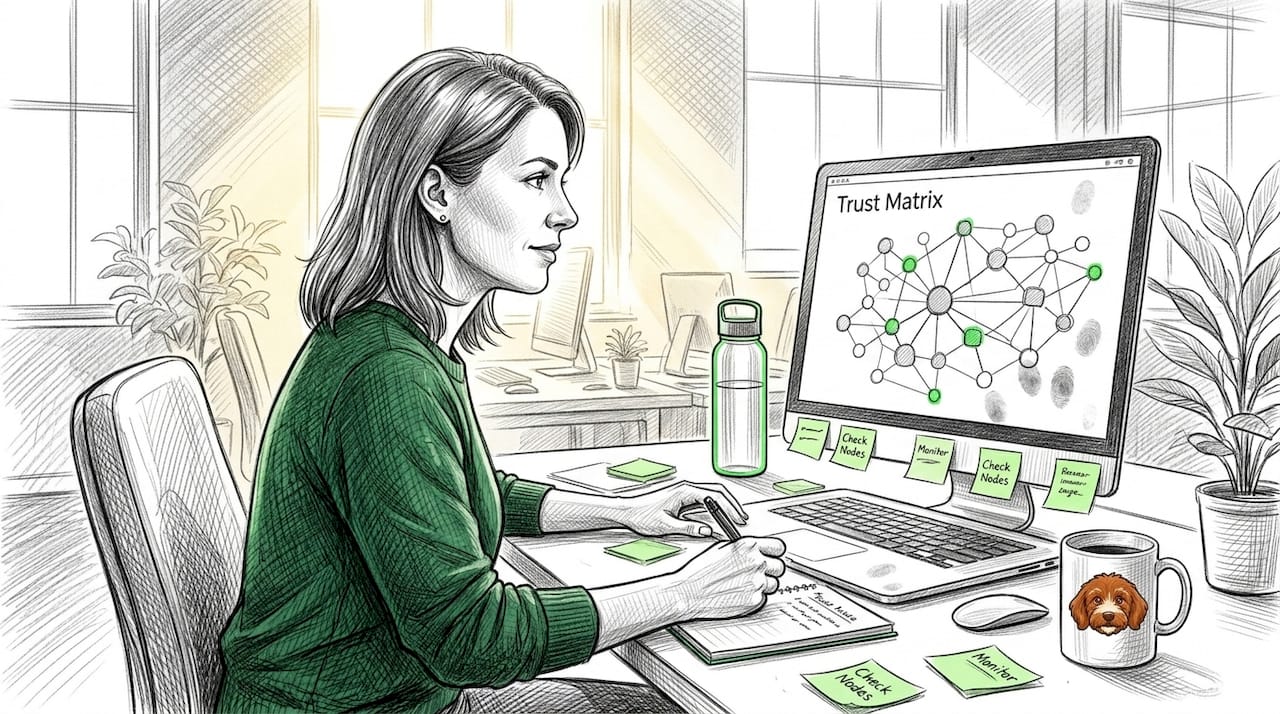

Building trust in protocol security requires layering cryptographic identity onto systems that were never designed with it in mind. That work is non-trivial but entirely achievable with the right tooling.
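One of the practices listed above, logging cross-boundary data flows in a tamper-resistant way, can be approximated locally with a hash chain. This is only a sketch of the tamper-evidence idea under simple assumptions (JSON-serializable records, SHA-256); it is not a distributed ledger, and the function names are illustrative.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, record: Dict[str, Any]) -> str:
    # Canonical JSON so the same record always hashes to the same value.
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


def append(log: List[Dict[str, Any]], record: Dict[str, Any]) -> None:
    """Add a record whose hash covers both its content and the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash,
                "hash": _digest(prev_hash, record)})


def verify(log: List[Dict[str, Any]]) -> bool:
    """Recompute the chain; any edited, removed, or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Publishing the latest hash to an independent system, such as a ledger or a second party, is what prevents an attacker who controls the log from silently rewriting the whole chain.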

For teams evaluating external implementation support, system integration guidance is available from specialists with distributed systems experience.

While patterns and security are crucial, real integrations hinge on navigating architectural edge cases and operational realities.

Edge cases and best practices: NAT, firewalls, and key management

Most integration failures in production aren’t caused by architectural errors at the design stage. They’re caused by edge cases that teams didn’t plan for. These are the ones that consistently cause outages, security incidents, and performance degradation.

Here are the most common edge cases ranked by frequency:

- NAT and firewall traversal failures: Agents behind strict NAT or corporate firewalls can’t establish P2P connections without relay support. This is the most frequent production blocker.

- Gateway and relay downtime: A single gateway or relay node going offline disconnects all dependent clients. Teams often underestimate how frequently this happens in cloud environments.

- Key rotation failures: Poorly automated key rotation leads to expired credentials locking out agents mid-operation, causing cascading task failures across the fleet.

- Oracle compromise: A compromised oracle node can feed false data to smart contracts or AI decision pipelines. Serious vulnerabilities such as oracle self-deception, where nodes validate their own false claims, are a documented risk.

- Protocol version drift: Legacy systems running older protocol versions may reject handshakes from upgraded bridge components, creating silent failures.
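The key rotation failure above usually comes from switching keys at a single instant. A minimal sketch of the alternative is to give consecutive keys overlapping validity windows, so agents still holding the old key keep working while the new one rolls out. `KeyVersion` and `KeyRing` are hypothetical names, and times are plain numeric timestamps.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class KeyVersion:
    key_id: str
    secret: bytes
    not_before: float  # start of validity
    expires_at: float  # end of validity (exclusive)

    def valid_at(self, now: float) -> bool:
        return self.not_before <= now < self.expires_at


class KeyRing:
    """Holds overlapping key versions so rotation never locks agents out."""

    def __init__(self) -> None:
        self._keys: Dict[str, KeyVersion] = {}

    def add(self, key: KeyVersion) -> None:
        self._keys[key.key_id] = key

    def signing_key(self, now: float) -> KeyVersion:
        """Use the newest key that is currently valid."""
        valid = [k for k in self._keys.values() if k.valid_at(now)]
        if not valid:
            raise LookupError("no valid signing key; rotation is overdue")
        return max(valid, key=lambda k: k.not_before)

    def verification_key(self, key_id: str, now: float) -> Optional[KeyVersion]:
        """Accept any key still inside its window, including the outgoing one."""
        key = self._keys.get(key_id)
        return key if key is not None and key.valid_at(now) else None
```

The `LookupError` path is worth alerting on: it signals that automation failed to publish a successor before the last key expired, which is the lockout scenario described above.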

For NAT and firewall issues specifically, here are the solutions that work:

- Enable libp2p auto-relay so agents can route through available relay nodes automatically when direct connections fail.

- Use hole-punching techniques combined with STUN-style coordination to establish direct connections whenever possible, falling back to relays only when necessary.

- Configure multiple relay nodes across different cloud regions to avoid geographic single-point-of-failure scenarios.

- Use overlay networks like Pilot Protocol that handle NAT traversal natively, removing the need to configure traversal logic per agent. A deep dive on NAT traversal walks through the specifics in detail.
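The ordering these solutions imply (direct connection first, hole punching next, relays in several regions last) can be expressed as a simple fallback loop. This is a sketch only: the `Dialer` callables stand in for whatever connection logic your P2P stack provides, and none of the names correspond to real libp2p or Pilot Protocol APIs.

```python
from typing import Callable, Sequence, Tuple

# A dialer returns True when it has established a connection to the peer.
Dialer = Callable[[str], bool]


def connect_with_fallback(peer_id: str,
                          strategies: Sequence[Tuple[str, Dialer]]) -> str:
    """Try each strategy in order and return the name of the first that connects."""
    for name, dial in strategies:
        try:
            if dial(peer_id):
                return name
        except OSError:
            continue  # treat network errors as "this path is blocked"
    raise ConnectionError(f"no route to {peer_id}")
```

Listing relays from different regions as separate strategies keeps one regional outage from cutting an agent off, and logging which strategy succeeded shows how often agents actually depend on relays.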

Pro Tip: Use hardware security modules (HSMs) for credential and key management across your agent fleet. Password-based resets are a major attack vector. HSMs provide tamper-resistant key storage and enforce access policies at the hardware level, making them significantly harder to compromise than software-based keystores.

Additional solutions worth prioritizing:

- Implement zero-config NAT solutions that handle traversal automatically without per-deployment configuration.

- For agents needing direct connectivity in restricted environments, techniques for connecting behind NAT without relying on a VPN are practical and well-documented.

Reported real-world configuration changes cut time to first byte by 800 ms by avoiding unnecessary gateway hints, and enabling libp2p auto-relay improved node reachability. These aren’t marginal gains. At scale across hundreds of agents, they add up to meaningful performance and reliability improvements.

Pulling together all these architectural and workflow lessons, it’s time for a candid assessment of what works and what doesn’t in the real world.

A candid perspective: Why hybrid integration wins for distributed AI

Here’s what actual deployments consistently reveal: the integrations that fail aren’t the ones with complex architectures. They’re the ones that tried to keep it too simple.

Teams reach for a single gateway because it’s fast to deploy. It works great in staging. Then in production, the gateway goes down, or gets overloaded, or sits in a geographic region with high latency for half your agents. The “simple” choice becomes the expensive one.

The pattern that holds up is hybrid integration. Use middleware for event-driven flows where you need auditability. Use protocol bridges to wrap legacy endpoints without touching them. Use P2P overlay for agent-to-agent communication where direct, encrypted tunnels matter. Layer them intentionally rather than picking one and hoping it covers all your cases.

Direct integration risks like exposing legacy authentication to blockchain-connected systems are well-documented, and the consensus is clear: middleware and oracle patterns consistently outperform native protocol changes. Hybrid modes allow gradual migration without big-bang rewrites, which is where most re-architecture projects fail anyway.

The other honest lesson from real deployments is that composability matters more than elegance. An integration that uses three well-understood patterns in combination is easier to debug, easier to replace piece by piece, and easier to hand off to a new team member than a custom solution that cleverly consolidates everything into one. Incremental, composable upgrades prevent lock-in and give you room to evolve your architecture as decentralized networking standards mature.

If you’re building peer-to-peer agent strategies for production systems, the hybrid approach isn’t a compromise. It’s the correct engineering decision.

Accelerate secure legacy integration with Pilot Protocol

If you’re ready to move from architecture planning to actual implementation, Pilot Protocol is built for exactly this use case. It provides a production-grade P2P overlay for AI agents and distributed systems, with native support for wrapping legacy protocols like HTTP, gRPC, and SSH inside encrypted peer-to-peer tunnels. NAT traversal, mutual trust establishment, persistent virtual addresses, and multi-cloud connectivity are all built in, so you spend time on your integration logic rather than networking infrastructure.

Pilot Protocol removes the operational complexity that typically slows legacy-to-decentralized integrations. You get CLI tools, Python and Go SDKs, and a web console that let you deploy, monitor, and manage agent networks without standing up centralized brokers or message queues. Explore direct P2P integration for AI agents and see how quickly you can connect your existing systems to a secure, decentralized agent network.

Frequently asked questions

What are the main methods for integrating legacy protocols with decentralized AI networks?

The core methods include middleware layers, protocol bridges, and gateways that translate or wrap legacy protocols like HTTP or Modbus for peer-to-peer and blockchain networks. Legacy protocol integration primarily uses these patterns to enable secure data exchange without requiring changes to either end system.

How do decentralized gateways pose security risks in legacy integrations?

Gateways introduce centralization and can create single points of failure or privacy risks if compromised or taken offline. IPFS and BTFS gateways specifically introduce centralization risks that undermine decentralization goals when they fail or are targeted.

What are the best practices for securing legacy industrial protocols in a decentralized setup?

Use cryptographic tools such as DIDs, Verifiable Credentials, and post-quantum cryptography, and deploy multiple gateways to avoid single points of failure. Proxies and translation tunnels built on DIDs, VCs, and post-quantum crypto can secure industrial protocol integrations without requiring hardware upgrades.

How can NAT and firewall issues be addressed during legacy protocol integration?

Solutions include hybrid transports, circuit relays, and auto-relay methods as provided by stacks like libp2p, which bypass restrictive networking environments. libp2p hybrid transports combined with circuit relays are the most reliable production-tested approach for NAT traversal.

Are there proven performance improvements from modern integration approaches?

Yes. Reported gains include reduced latency and improved node reachability from multi-gateway and auto-relay approaches. Specific configuration changes show an 800 ms TTFB reduction from avoiding unnecessary gateway hints, and measurable reachability improvements with libp2p auto-relay enabled.