Trust in network protocols for decentralized systems

TL;DR:

- Trust in decentralized AI relies on risk assessment, not implicit goodwill.

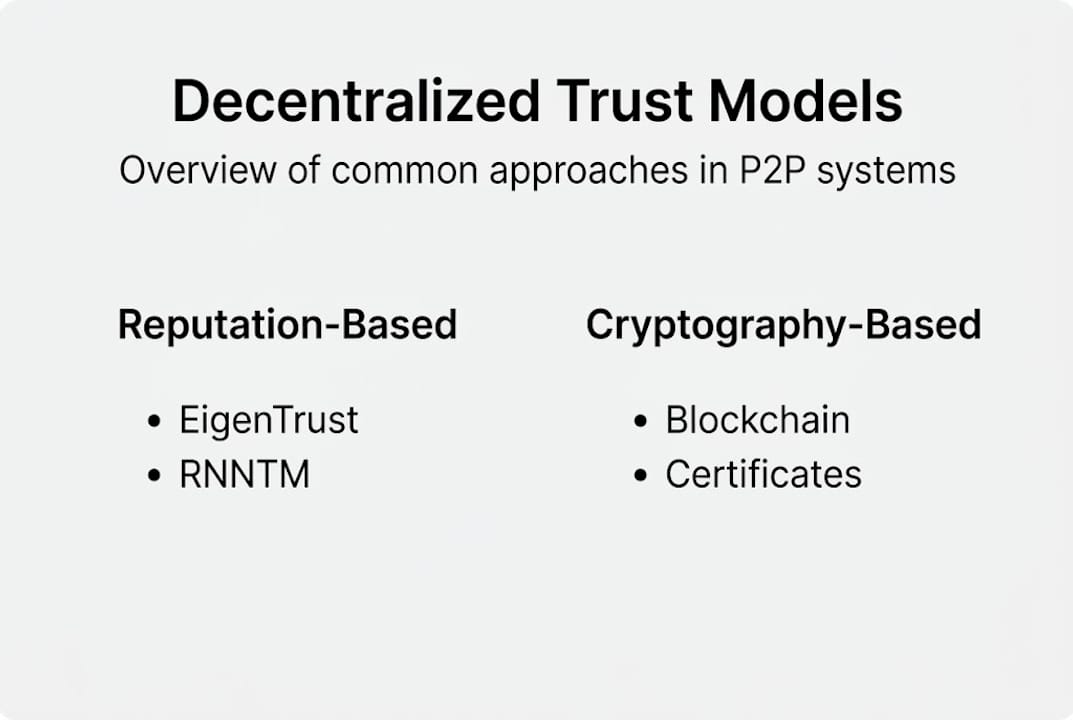

- Models like EigenTrust, RNNTM, and blockchain help evaluate peer reliability and security.

- Zero-trust principles require continuous identity verification, policy enforcement, and adaptive trust management.

Many engineers assume trust in network protocols requires a central authority to work. In decentralized AI and P2P systems, that assumption is not just wrong, it is a liability. Every peer must independently evaluate the reliability and intentions of every other peer, with no referee to appeal to. The models and mechanisms that make this possible are not simple, but they are well understood. This guide covers what trust means in decentralized protocols, the core models in use today, advanced techniques for building resilient trust systems, and how zero-trust principles apply to real AI agent deployments.

Table of Contents

- What is trust in network protocols?

- Core models of trust in decentralized protocols

- Building and evaluating trust: Graphs, machine learning, and dynamic models

- Toward zero-trust: Hardening P2P and AI overlays

- What most guides miss about trust in decentralized protocols

- Take the next step: Accelerate secure AI communication with Pilot Protocol

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Peer-based trust | Decentralized networks rely on peer-to-peer mechanisms, not central authorities, to assess trust. |
| Diverse trust models | EigenTrust, RNNTM, and blockchain-based systems offer distinct advantages for protocol reliability. |
| Adaptive defenses | Dynamic and ML-powered trust solutions better handle adversarial attacks and evolving environments. |
| Zero-trust essentials | Zero-trust frameworks ensure each action is verified, providing strong security even with unknown peers. |
| Hybrid strategies | Combining adaptive trust with zero-trust policies yields the best resilience and flexibility for AI-driven networks. |

What is trust in network protocols?

Trust in network protocols is not about goodwill. It is about risk assessment. In a centralized system, a server or certificate authority vouches for participants. In a decentralized system, that option does not exist. Each peer must decide, on its own, whether another peer is reliable, honest, and safe to interact with.

Trust in P2P networks refers specifically to the mechanisms and models that enable peers to assess the reliability, honesty, and security of other peers without centralized authorities. This definition matters because it shifts the problem from authentication to ongoing evaluation.

Why does this matter for AI infrastructure? Because AI agents operating in distributed environments face threats that static authentication cannot handle. A peer can be authenticated and still behave maliciously. Trust mechanisms address behavior, not just identity.

Here are the core functions trust serves in decentralized protocols:

- Risk mitigation: Trust scores help peers avoid interacting with nodes that have a history of bad behavior or are suspected of being malicious.

- Collaboration enablement: Peers with high trust scores can exchange data, delegate tasks, and form coalitions without manual approval.

- Attack defense: Trust models are a primary defense against Sybil attacks, where an attacker creates many fake identities to gain influence.

- Secure communication: Trust informs encryption and access decisions, ensuring sensitive data only flows to verified peers.

The methods used to establish trust include direct observation of peer behavior, recommendations from other trusted peers, reputation systems that aggregate historical data, and cryptographic proofs that verify identity and intent.
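As a rough illustration of how direct observation and recommendations might be blended, here is a minimal sketch. It assumes a Beta-style estimate for first-hand experience and a weighted average for second-hand reports; the function names and the smoothing constant `k` are hypothetical choices for this example, not part of any specific protocol.

```python
def direct_trust(successes, failures):
    """Mean of a Beta(successes + 1, failures + 1) estimate of good behavior."""
    return (successes + 1) / (successes + failures + 2)


def combined_trust(successes, failures, recommendations, k=10):
    """Blend first-hand experience with recommendations from other peers.

    recommendations: list of (recommender_trust, reported_score) pairs.
    k: hypothetical constant controlling how quickly direct evidence dominates.
    """
    direct = direct_trust(successes, failures)
    weight_sum = sum(weight for weight, _ in recommendations)
    if weight_sum == 0:
        return direct  # nobody we trust has an opinion
    # Each report counts in proportion to how much we trust the recommender.
    indirect = sum(weight * score for weight, score in recommendations) / weight_sum
    n = successes + failures
    confidence = n / (n + k)  # more first-hand evidence, less reliance on hearsay
    return confidence * direct + (1 - confidence) * indirect


# A stranger vouched for by a fully trusted peer inherits that peer's opinion.
newcomer = combined_trust(0, 0, [(1.0, 0.9)])
# Ten direct interactions pull the score halfway toward our own experience.
known_peer = combined_trust(8, 2, [(1.0, 0.25)])
```

The design choice worth noting is that recommendations fade as direct history accumulates, which limits how much a colluding group can inflate the score of a peer you already know well.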

“Zero trust by default” is increasingly the baseline for agent trust models in production AI systems. Every interaction starts with suspicion and earns trust through verifiable evidence.

This is a fundamental shift from legacy network design. In traditional systems, trust was often implicit once a connection was established. In decentralized protocols for AI, trust is explicit, earned, and continuously re-evaluated. That distinction shapes every architectural decision you make.

Core models of trust in decentralized protocols

Knowing that trust must be engineered is one thing. Knowing which model to use is another. Several well-tested approaches exist, each with distinct strengths.

1. EigenTrust

EigenTrust is a foundational reputation algorithm that computes global trust values using eigenvector centrality on a normalized local trust matrix. In plain terms, it aggregates how much each peer trusts every other peer, then propagates that trust transitively across the network. Peers with consistently good behavior accumulate high trust scores that influence the whole system.

EigenTrust works well in stable networks where peer interactions are frequent enough to build reliable history. It struggles when peers join and leave rapidly or when attackers coordinate to inflate each other’s scores.
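The transitive propagation described above can be sketched as a power iteration over the normalized local trust matrix. This is a simplified, centralized illustration under stated assumptions, not the distributed algorithm: the `pretrusted` distribution, the damping weight `alpha`, and the sample scores are all illustrative values.

```python
def eigentrust(local_trust, pretrusted, alpha=0.15, iterations=50):
    """Approximate global trust scores by power iteration.

    local_trust[i][j] is peer i's non-negative raw score for peer j.
    pretrusted is a probability distribution over peers (assumed given).
    """
    n = len(local_trust)
    # Row-normalize so each peer's opinions sum to 1.
    # A peer with no opinions falls back to the pretrusted distribution.
    normalized = []
    for row in local_trust:
        total = sum(row)
        normalized.append([v / total for v in row] if total > 0 else list(pretrusted))

    trust = list(pretrusted)
    for _ in range(iterations):
        # Each peer's new score is the trust-weighted sum of others' opinions,
        # mixed with the pretrusted distribution to damp collusion loops.
        trust = [
            (1 - alpha) * sum(normalized[i][j] * trust[i] for i in range(n))
            + alpha * pretrusted[j]
            for j in range(n)
        ]
    return trust


local = [
    [0, 4, 1],  # peer 0's experience with peers 1 and 2
    [5, 0, 0],  # peer 1 only vouches for peer 0
    [3, 3, 0],  # peer 2 splits its trust between peers 0 and 1
]
scores = eigentrust(local, pretrusted=[1 / 3, 1 / 3, 1 / 3])
```

In this toy network peer 2 ends up with the lowest score because only one peer vouches for it, and only weakly. The scores always sum to 1, so they are best read as relative rankings.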

2. Random Neural Network Trust Model (RNNTM)

RNNTM models dynamic trust evolution using voting permits and peer opinions. It is designed for mobile and wireless P2P environments where conditions change fast. Peers cast votes on each other’s trustworthiness, and the model adapts its policy based on incoming signals.

RNNTM handles novel attacks and message failures better than static models. It is more complex to implement but more resilient in unpredictable environments.

3. Blockchain-based trust

Blockchain trust uses cryptographic proofs recorded on an immutable ledger to establish and verify peer credentials. Reported evaluations put its error rate as low as 2.1% at 10,000 nodes, outperforming DAG-based and PKI-based models in large-scale deployments.
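To make the "immutable ledger" idea concrete, here is a minimal sketch of a hash-chained credential log. It only demonstrates tamper evidence; it has no consensus, signatures, or networking, and the record fields and helper names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first block


def block_hash(index, prev_hash, record):
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "prev": prev_hash, "record": record}, sort_keys=True
    )
    return hashlib.sha256(payload.encode()).hexdigest()


def append(chain, record):
    """Add a credential record, linking it to the current chain tip."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    index = len(chain)
    chain.append(
        {
            "index": index,
            "prev": prev_hash,
            "record": record,
            "hash": block_hash(index, prev_hash, record),
        }
    )


def is_valid(chain):
    """Recompute every hash; any edited record breaks the chain."""
    prev_hash = GENESIS
    for index, block in enumerate(chain):
        expected = block_hash(index, prev_hash, block["record"])
        if block["prev"] != prev_hash or block["hash"] != expected:
            return False
        prev_hash = block["hash"]
    return True


ledger = []
append(ledger, {"peer": "agent-7", "credential": "fingerprint-placeholder-1"})
append(ledger, {"peer": "agent-9", "credential": "fingerprint-placeholder-2"})
intact = is_valid(ledger)

ledger[0]["record"]["peer"] = "mallory"  # simulate tampering with history
tampered = is_valid(ledger)
```

A real deployment would replicate this structure across peers and add a consensus rule for who may append, which is where the latency and resource overhead come from.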

| Model | Best for | Key strength | Key weakness |
|---|---|---|---|
| EigenTrust | Stable, high-interaction networks | Transitive trust propagation | Vulnerable to collusion |
| RNNTM | Mobile, dynamic P2P environments | Adaptive to novel attacks | Higher implementation complexity |
| Blockchain trust | Large-scale, high-assurance systems | Low error rate, cryptographic proof | Latency and resource overhead |

Pro Tip: For zero trust communication in AI agent fleets, consider layering EigenTrust for reputation scoring with blockchain-based identity proofs. This gives you both behavioral history and cryptographic assurance without relying on either alone.

Choosing the right model depends on your network’s size, stability, and threat profile. There is no universal answer, but the comparison above gives you a clear starting point.

Building and evaluating trust: Graphs, machine learning, and dynamic models

To make trust practical at scale, you need more than a single algorithm. You need infrastructure for representing, computing, and updating trust across thousands of peers in real time.

Trust graphs are the most common representation layer. A trust graph encodes direct and indirect relationships between peers as directed edges with probability weights. If peer A trusts peer B with 0.9 confidence, and peer B trusts peer C with 0.8 confidence, the graph can compute an indirect trust value for A’s relationship with C.
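One common convention, assumed here for illustration, is to multiply confidences along a path and keep the strongest path. Under that assumption the A to B to C example gives 0.9 × 0.8 = 0.72. The sketch below uses a Dijkstra-style search, which works because every weight is at most 1 and so extending a path never increases its product; the function name `indirect_trust` is hypothetical.

```python
import heapq


def indirect_trust(graph, source, target):
    """Return the highest product of edge weights over any path, or 0.0.

    graph maps each peer to a dict of {neighbor: confidence in (0, 1]}.
    """
    best = {source: 1.0}
    heap = [(-1.0, source)]  # max-heap via negated trust values
    while heap:
        negative_trust, node = heapq.heappop(heap)
        trust = -negative_trust
        if node == target:
            return trust
        if trust < best.get(node, 0.0):
            continue  # stale entry; a stronger path was already found
        for neighbor, weight in graph.get(node, {}).items():
            candidate = trust * weight
            if candidate > best.get(neighbor, 0.0):
                best[neighbor] = candidate
                heapq.heappush(heap, (-candidate, neighbor))
    return 0.0


graph = {
    "A": {"B": 0.9, "C": 0.5},  # A also has a weak direct opinion of C
    "B": {"C": 0.8},
}
a_to_c = indirect_trust(graph, "A", "C")  # the path through B wins
```

Other aggregation rules exist, such as taking the minimum along a path or combining several paths, so treat the product rule as one option rather than the definition.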

Trust graphs represent directed probabilistic relationships, and machine learning models including graph neural networks (GNNs) can infer trust from empirical data. GNNs are particularly effective because they learn patterns from the graph structure itself, not just individual node attributes.

Here is how these techniques compare in practice:

| Technique | Scalability | Attack resilience | Adaptability |
|---|---|---|---|
| Static trust graphs | Medium | Low | Low |
| ML-based inference | High | Medium | Medium |
| GNN trust models | High | High | High |
| RNNTM dynamic models | Medium | Very High | Very High |

Key advantages of advanced trust modeling:

- Scale: ML models handle millions of peer relationships without manual tuning.

- Inference: GNNs detect anomalous behavior patterns that rule-based systems miss.

- Resilience: Dynamic models like RNNTM recover faster from targeted attacks and message loss.

- Automation: Trust updates happen continuously without human intervention.

For secure protocols for AI, the ability to automate trust inference is critical. Manual trust management does not scale to networks of hundreds of autonomous agents.

Understanding how AI agents communicate also informs trust design. Agents exchange structured messages, call APIs, and delegate tasks. Each of these interactions is a potential trust event that your model needs to evaluate and record.

Toward zero-trust: Hardening P2P and AI overlays

Zero-trust is not a product. It is a design principle. The core idea is simple: never assume a peer is trustworthy based on network location or prior authentication alone. Every request is validated from scratch, every time.

Zero-trust in P2P meshes requires continuous identity-bound verification, policy evaluation, and delegation semantics. This contrasts sharply with traditional networks where trust was often implicit after the initial handshake.

Here are the critical steps for implementing zero-trust in a decentralized AI network:

- Establish strong identity. Every peer needs a cryptographically verifiable identity. Certificates, public keys, or blockchain-anchored credentials all work. No identity, no interaction.

- Enforce policy at every layer. Access decisions must be made at the data layer, not just the network layer. A peer that can connect to your network should not automatically be able to read your data.

- Verify continuously. Trust scores and permissions must be re-evaluated at regular intervals or after any behavioral anomaly. A peer that was trusted yesterday may not be trusted today.

- Minimize trust scope. Each peer should only have access to the resources it explicitly needs. Broad permissions create systemic vulnerabilities.

- Log and audit everything. Zero-trust depends on visibility. If you cannot observe what peers are doing, you cannot enforce policy effectively.
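The steps above can be sketched as a single deny-by-default authorization check. This is a minimal illustration, not a production design: HMAC with a shared secret stands in for real public-key signatures (which need a third-party library), and the `PolicyEngine` class, its fields, and the 0.6 trust threshold are hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass, field


@dataclass
class PolicyEngine:
    keys: dict    # peer_id -> shared secret (stand-in for a public key)
    grants: set   # explicit (peer_id, action, resource) tuples
    min_trust: float = 0.6
    audit_log: list = field(default_factory=list)

    def authorize(self, peer_id, action, resource, message, tag, trust):
        """Allow a request only if identity, grant, and trust all check out."""
        key = self.keys.get(peer_id)
        # Strong identity: the request must carry a valid tag for this peer.
        identity_ok = key is not None and hmac.compare_digest(
            hmac.new(key, message, hashlib.sha256).digest(), tag
        )
        # Minimal scope: anything not explicitly granted is denied.
        granted = (peer_id, action, resource) in self.grants
        # Continuous verification: the current trust score is checked per request.
        allowed = identity_ok and granted and trust >= self.min_trust
        # Audit: every decision is recorded, including denials.
        self.audit_log.append((peer_id, action, resource, allowed))
        return allowed


key = b"demo-secret"
engine = PolicyEngine(
    keys={"agent-7": key},
    grants={("agent-7", "read", "dataset/a")},
)
message = b"read dataset/a"
tag = hmac.new(key, message, hashlib.sha256).digest()

read_ok = engine.authorize("agent-7", "read", "dataset/a", message, tag, trust=0.8)
write_ok = engine.authorize("agent-7", "write", "dataset/a", message, tag, trust=0.8)
low_trust = engine.authorize("agent-7", "read", "dataset/a", message, tag, trust=0.2)
```

Note that the same authenticated peer is denied twice here, once for an action it was never granted and once because its trust score dropped. That is the practical difference between authentication and zero-trust authorization.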

Pro Tip: When deploying zero-trust for AI agent fleets, start with strict deny-by-default policies and open access incrementally. It is much easier to loosen a policy than to recover from a breach caused by overly permissive defaults.

Zero-trust can be resource intensive, especially for high-frequency agent communication, because continuous verification adds latency to every request. Mitigate this by caching short-lived trust tokens and using lightweight cryptographic proofs where possible. A secure infrastructure guide and a solid understanding of how Pilot Protocol works can help you balance security with performance in practice.
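One way to picture the caching of short-lived trust tokens is a small time-to-live cache in front of an expensive verification call. The sketch below is illustrative only: `TrustTokenCache`, the 30-second lifetime, and the injected `verify` and `clock` callables are assumptions made for the example.

```python
import time


class TrustTokenCache:
    """Reuse a verification result until it expires, then verify again."""

    def __init__(self, verify, ttl_seconds=30.0, clock=time.monotonic):
        self._verify = verify    # expensive check, e.g. signature plus policy
        self._ttl = ttl_seconds  # keep short so revocations take effect quickly
        self._clock = clock      # injectable so expiry can be tested
        self._entries = {}       # peer_id -> (decision, expires_at)

    def is_trusted(self, peer_id):
        now = self._clock()
        entry = self._entries.get(peer_id)
        if entry is not None and now < entry[1]:
            return entry[0]  # still fresh: skip the expensive check
        decision = self._verify(peer_id)
        self._entries[peer_id] = (decision, now + self._ttl)
        return decision

    def revoke(self, peer_id):
        """Drop a cached decision immediately, e.g. after an anomaly."""
        self._entries.pop(peer_id, None)


calls = []


def expensive_verify(peer_id):
    calls.append(peer_id)  # count how often full verification runs
    return peer_id == "agent-7"


fake_now = [0.0]
cache = TrustTokenCache(expensive_verify, ttl_seconds=30.0, clock=lambda: fake_now[0])

first = cache.is_trusted("agent-7")   # full verification
second = cache.is_trusted("agent-7")  # served from the cache
fake_now[0] = 31.0
third = cache.is_trusted("agent-7")   # token expired, verified again
```

The lifetime is the security knob: a longer value saves more work but widens the window in which a compromised peer keeps its access, which is why an explicit `revoke` path matters.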

What most guides miss about trust in decentralized protocols

Most documentation treats trust models as static choices. Pick EigenTrust or blockchain, deploy it, and move on. That approach fails in production.

Purely algorithmic trust cannot keep up with attacker creativity. Adversaries adapt. A Sybil attack that fails against EigenTrust today may succeed tomorrow with a coordinated collusion strategy. Static models do not learn from new attack patterns.

Zero-trust alone is not always the answer either. Applied rigidly without adaptive trust layers, it creates bottlenecks and operational overhead that slow down legitimate agent communication. The systems that perform best in live, large-scale networks combine adaptive trust scoring with policy-based access control. Neither replaces the other.

Peer trust also evolves over time. A node that behaves well for months may be compromised in a single incident. Successful systems build in mechanisms to detect sudden behavioral shifts and respond automatically, not just at scheduled review intervals.
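A simple way to catch a sudden behavioral shift is to compare a slow-moving baseline against a short window of recent outcomes. The sketch below assumes binary outcomes (1.0 for a good interaction, 0.0 for a bad one); the `TrustMonitor` class and its thresholds are hypothetical values picked for illustration.

```python
from collections import deque


class TrustMonitor:
    """Quarantine a peer when recent behavior falls far below its baseline."""

    def __init__(self, alpha=0.05, window=5, max_drop=0.4):
        self.alpha = alpha                  # how slowly the baseline adapts
        self.recent = deque(maxlen=window)  # most recent outcomes only
        self.max_drop = max_drop            # tolerated gap before quarantine
        self.baseline = 0.5                 # neutral prior for an unknown peer
        self.quarantined = False

    def record(self, outcome):
        """Record one interaction outcome; return True if quarantined."""
        self.recent.append(outcome)
        recent_mean = sum(self.recent) / len(self.recent)
        window_full = len(self.recent) == self.recent.maxlen
        if window_full and self.baseline - recent_mean > self.max_drop:
            self.quarantined = True  # respond immediately, not at review time
        # Exponentially weighted update of the long-term baseline.
        self.baseline += self.alpha * (outcome - self.baseline)
        return self.quarantined


monitor = TrustMonitor()
for _ in range(100):  # a long history of good behavior
    monitor.record(1.0)
healthy = monitor.quarantined

# The peer is compromised and starts misbehaving.
flags = [monitor.record(0.0) for _ in range(3)]
```

With these settings the peer is quarantined on the third consecutive failure, even though its long-term score is still high. Tightening `max_drop` reacts faster at the cost of more false alarms from ordinary message loss.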

The engineers who build the most resilient networks treat trust as a living system. They instrument it, measure it, and tune it continuously. Private discovery for agents is one area where this iterative approach pays off immediately. The real edge comes from treating trust as an operational discipline, not a one-time architectural decision.

Take the next step: Accelerate secure AI communication with Pilot Protocol

For teams serious about building secure, trusted networks, actionable resources and solutions are available right now.

Pilot Protocol provides ready-to-implement zero-trust overlays, encrypted tunnels, and robust trust mechanics built specifically for decentralized AI environments. You get virtual addresses, NAT traversal, and mutual trust establishment out of the box, so your agents can find, verify, and communicate with each other without relying on centralized brokers. If you want to go deeper on the principles covered here, start with the guide on zero trust communication for AI agents. It connects the theory directly to implementation steps you can act on today.

Frequently asked questions

How does trust work without a central authority in a P2P network?

Trust is managed through reputation systems, direct peer observations, recommendations from other trusted peers, and cryptographic proofs that verify identity and behavior. These mechanisms work together to help peers assess reliability without centralized authorities and make informed decisions about every interaction.

Which trust model works best for large-scale decentralized AI systems?

Blockchain-based trust offers the lowest observed error rates at scale, with 2.1% error at 10k nodes outperforming DAG and PKI alternatives. EigenTrust and dynamic neural network models each have strengths for specific network conditions and threat profiles.

What is the zero-trust principle in network protocol security?

Zero-trust means every identity and request is always verified, eliminating default trust assumptions and reducing the risk of internal breaches or impersonation attacks. It requires continuous identity-bound verification and policy evaluation at every interaction point.

Can machine learning improve trust inference in P2P systems?

Yes. Machine learning models, including graph neural networks, analyze peer behavior and probabilistic trust relationships to infer trust at a scale and accuracy that rule-based systems cannot match.