Understanding autonomous agent networking for distributed AI

Understanding autonomous agent networking for distributed AI

TL;DR:

- Autonomous agent networking requires decentralized protocols supporting discovery, communication, and trust.

- Scaling beyond 100 agents introduces significant challenges in consensus, fault tolerance, and protocol adaptability.

- Building resilient multi-agent systems demands adaptive routing, hybrid governance, and proactive protocol evolution strategies.

Most engineers assume that once you deploy multiple AI agents across cloud infrastructure, they will naturally find each other, coordinate tasks, and scale gracefully. That assumption is wrong, and it costs teams months of rework. Autonomous agent networking is a distinct engineering discipline, not a byproduct of running distributed services. According to MIT NANDA, it refers to decentralized architectures enabling independent AI agents to discover, communicate, collaborate, and transact securely without central coordinators. This article breaks down what that actually means, where most architectures fail, and what you need to design agent networks that hold up in production.

Table of Contents

- What is autonomous agent networking?

- Decentralized vs. centralized architectures: Methods and trade-offs

- Core challenges: Scalability, consensus, and real-world failure modes

- Key methodologies and real-world frameworks

- What most teams miss about large-scale agent networking

- Build resilient agent networks with Pilot Protocol

- Frequently asked questions

Key Takeaways

| Point | Details |

|---|---|

| Autonomous agent networking defined | It enables decentralized, secure, and adaptive communication among AI agents without a central controller. |

| Architectural trade-offs matter | Choosing between centralized and decentralized methods directly impacts performance, privacy, and fault tolerance. |

| Scalability is a core challenge | Network performance and consensus deteriorate sharply as agent count increases, especially for LLM-based systems. |

| Hybrid and evolutionary strategies win | Techniques like evolutionary adaptation and hybrid governance frameworks boost resilience and scalability in production. |

| Practical frameworks are emerging | Architectures like AgentNet demonstrate practical solutions for deploying robust, decentralized AI agent networks. |

What is autonomous agent networking?

Autonomous agent networking is not simply distributed computing with AI on top. Traditional distributed systems move data between known endpoints using fixed protocols and central orchestrators. Agent networking is fundamentally different. Each agent acts independently, makes decisions, discovers peers dynamically, and negotiates communication without a master controller telling it what to do.

The MIT NANDA project defines this as decentralized architectures where agents discover, communicate, collaborate, and transact securely without central coordinators. That definition carries real engineering weight. It means your network design must support peer discovery, identity verification, and trust establishment at the protocol level, not bolted on afterward.

Autonomous agent networking places coordination logic inside the agents themselves, not in a centralized broker. This shifts complexity from infrastructure to protocol design.

The core principles that distinguish this model include:

- Agent autonomy: Each agent decides when and how to communicate based on its own state and goals.

- Peer discovery: Agents locate each other dynamically using distributed registries or gossip protocols, not hardcoded endpoints.

- Decentralized collaboration: Task coordination happens through negotiation between agents, not top-down assignment.

- Adaptive communication: Agents adjust their messaging patterns based on network conditions and peer availability.

The practical benefits are significant. Removing a central coordinator eliminates a single point of failure. Agents can continue operating even when parts of the network go offline. Privacy improves because no single node has visibility into all agent activity. And the system can grow without requiring you to re-architect the control plane every time you add capacity.

For developers building P2P solutions for AI, understanding these principles early prevents the most common design mistakes. If you are choosing communication protocols for AI agents, the protocol must support all four of these properties natively, not as optional extensions.

Decentralized vs. centralized architectures: Methods and trade-offs

Choosing between centralized and decentralized coordination is not just a philosophical preference. It determines how your system behaves under load, partial failure, and network partitions.

| Dimension | Centralized | Decentralized |

|---|---|---|

| Failure tolerance | Single point of failure | Redundant, fault-tolerant |

| Privacy | Central node sees all traffic | No global observer |

| Scaling | Orchestrator becomes bottleneck | Scales with agent count |

| Protocol flexibility | Easier to update centrally | Risk of protocol ossification |

| Coordination speed | Fast for small fleets | More rounds for consensus |

Two real-world protocols illustrate the contrast clearly. AgentNet uses DAG routing for task delegation, allowing agents to form directed acyclic graphs of collaboration without a hub. AgentConnect takes a different approach, using hubs that sign and relay messages between agents, which simplifies trust but reintroduces a coordination dependency.

Here is how to evaluate which approach fits your system:

- Assess your failure tolerance requirements. If downtime is unacceptable, decentralized routing is the safer default.

- Map your privacy constraints. If agents handle sensitive data, centralized hubs create audit and exposure risk.

- Estimate your fleet size. Centralized systems work well under 50 agents. Beyond that, orchestrator bottlenecks appear quickly.

- Evaluate protocol update frequency. Decentralized networks are harder to update uniformly, which leads to ossification over time.

The networking challenges for decentralized AI are real, but they are solvable with the right design decisions upfront. For secure AI infrastructure, the trade-off analysis should happen before you write a single line of agent code.

Pro Tip: Build evolutionary adaptation into your protocol selection from day one. Static role assignment and fixed routing tables are the most common cause of production network stagnation in multi-agent deployments.

Core challenges: Scalability, consensus, and real-world failure modes

Deploying autonomous agent networks at scale exposes failure modes that small-scale testing never reveals. You need to know these before you hit them in production.

Performance drops sharply above 100 agents, and Byzantine faults, partial observability, non-stationarity from concurrent actions, and protocol ossification in static networks all compound the problem. These are not edge cases. They are the normal operating conditions of any serious multi-agent deployment.

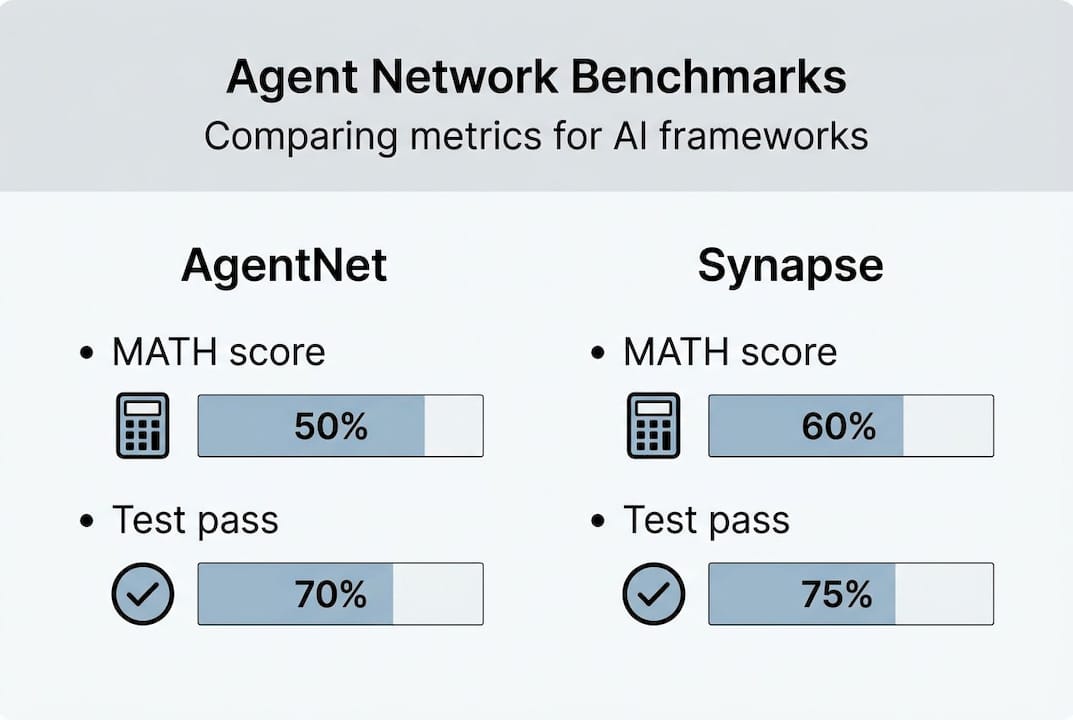

The benchmark data is instructive. AgentNet achieves 92.86% on MATH compared to 77% for Synapse, and 94% test pass@1 versus 79%, with 30 average test cases versus 22. These gains come directly from better routing and adaptive role assignment, not from more powerful base models.

The hardest challenges in production agent networks:

- Scalability above 100 agents: Coordination overhead grows non-linearly. Message volume, consensus rounds, and state synchronization all spike.

- Byzantine fault tolerance: Malicious or malfunctioning agents can corrupt coordination. Most LLM-based agent frameworks have no native defense against this.

- Non-stationarity: When multiple agents act concurrently, the environment each agent perceives keeps changing. This breaks assumptions baked into most planning algorithms.

- Partial observability: Agents rarely have full visibility into network state. Decisions made on incomplete information compound errors across the fleet.

- Protocol ossification: Static networks freeze into rigid communication patterns. When conditions change, the protocol cannot adapt without a coordinated update across all nodes.

| Metric | AgentNet | Synapse |

|---|---|---|

| MATH benchmark | 92.86% | 77% |

| Test pass@1 | 94% | 79% |

| Avg test cases | 30 | 22 |

For teams scaling agent networks beyond proof-of-concept, these numbers matter. And for secure comms for agents, Byzantine fault tolerance needs to be a first-class design requirement, not an afterthought.

Key methodologies and real-world frameworks

Knowing the challenges is only useful if you have concrete methods to address them. Here is a practical framework for deploying resilient decentralized agent networks.

- Start with DAG-based routing. Directed acyclic graph routing, as used in AgentNet, allows agents to delegate tasks along structured paths without central coordination. This eliminates hub bottlenecks and supports parallel execution.

- Implement adaptive role assignment. Evolutionary adaptation boosts performance 20-30% over static roles. Agents should be able to shift between coordinator, executor, and verifier roles based on current network conditions.

- Use hybrid intent-governance for cloud environments. Decentralization enhances resilience and privacy but introduces coordination hardness. Hybrid intent-governance, where agents operate autonomously within policy boundaries set by a lightweight governance layer, mitigates this in enterprise and cloud deployments.

- Design for protocol evolution. Build versioning and negotiation into your messaging layer from the start. Agents should be able to advertise supported protocol versions and negotiate a common dialect with peers.

- Instrument consensus rounds. Track the number of rounds required to reach agreement as a key performance indicator. Rising round counts signal scaling problems before they become outages.

For secure protocols in distributed AI, mutual authentication and encrypted channels are non-negotiable. Pair this with zero trust in AI networking principles: every agent verifies every peer on every connection, regardless of network position. The agent communication infrastructure you choose must support this natively.

Pro Tip: Frontier LLMs perform well with 4 to 8 agents but degrade significantly at scale. Plan your architecture for the fleet size you will reach in 12 months, not the size you are starting with today.

What most teams miss about large-scale agent networking

Most teams focus on getting agents to communicate. Fewer focus on what happens when the communication patterns themselves become the bottleneck. Protocol ossification is the silent killer of agent networks. A network that works at 10 agents often freezes into rigid patterns that cannot adapt when you reach 50 or 100.

Highly autonomous agents also create a counterintuitive problem: more autonomy means more coordination overhead. Each agent making independent decisions generates more negotiation, more consensus rounds, and more state divergence. LLM-based agents break down at consensus beyond 16 nodes, even with evolutionary adaptation in place. That is a hard ceiling most teams do not plan for.

The lesson is this: design for protocol evolution and hybrid governance from the start. Do not treat full decentralization as the end goal. Treat it as a spectrum, and position your system where autonomy and coordination costs are in balance for your specific workload. Revisiting P2P agent architectures with this lens often reveals that a modest governance layer prevents the most expensive scaling failures.

Build resilient agent networks with Pilot Protocol

The principles covered in this guide, peer discovery, encrypted tunnels, adaptive protocols, and NAT traversal, are exactly what Pilot Protocol delivers as purpose-built infrastructure for autonomous agent networks.

Pilot Protocol gives your agents persistent virtual addresses, mutual trust establishment, and direct encrypted P2P connections across any cloud or region. You do not need to rebuild networking primitives from scratch. The platform wraps HTTP, gRPC, and SSH inside its overlay, so your existing agent code integrates without a full rewrite. If you are building or scaling a multi-agent AI system, explore the Pilot Protocol network stack and see how fast you can move from architecture to production.

Frequently asked questions

How does autonomous agent networking differ from traditional distributed systems?

Autonomous agent networking allows AI agents to coordinate, communicate, and act without central orchestrators, whereas traditional distributed systems typically depend on centralized control. The MIT NANDA definition confirms this: agents must discover, collaborate, and transact securely without central coordinators.

Why is consensus especially difficult in large decentralized agent networks?

Consensus requires multiple rounds of communication between agents, and coordination costs grow non-linearly with fleet size. LLM agents fail at 16+ nodes for consensus and leader election, making scale planning critical.

What are the main failure modes in agent networking deployments?

The most common failures are performance collapse above 100 agents, Byzantine faults, protocol ossification, and errors caused by partial observability and non-stationarity. These challenges in distributed coordination are well-documented and require proactive design to avoid.

Which frameworks are most effective for autonomous agent networking?

AgentNet is among the strongest options, using DAG routing and evolutionary adaptation to outperform static protocols at scale. Its 20-30% performance advantage over static role assignment is consistent across benchmarks.

How does decentralization enhance privacy for agent networks?

Removing central coordinators means no single node observes all agent traffic, which reduces surveillance risk and eliminates centralized identity stores. Decentralization enhances resilience and privacy but requires careful coordination design to avoid new bottlenecks.