Decentralized networking: P2P solutions for AI architectures

TL;DR:

- libp2p hole-punching connects roughly 70% of NAT-bound peers directly, 97.6% of those on the first attempt, and relay fallback covers nearly all of the rest.

- Decentralized systems improve resilience and scalability compared to centralized architectures.

- Protocols like libp2p, DHT, and gossip enable direct, fault-tolerant, multi-cloud AI communication.

Peer-to-peer networking has crossed a critical threshold. libp2p’s hole-punching now establishes direct connections for roughly 70% of NAT-bound peers, with 97.6% of successful connections made on the first attempt, and connectivity approaches 100% when fallback relays kick in. That is not a lab result. That is production-grade performance. For AI developers and system architects building distributed agent fleets, multi-cloud pipelines, and cross-region data streams, decentralized networking is no longer an experimental option. It is the practical foundation for resilient, scalable infrastructure. This article breaks down the core protocols, mechanics, real-world challenges, and actionable strategies you need to deploy decentralized networking with confidence.

Table of Contents

- What is decentralized networking?

- Core mechanics of decentralized networking

- Advanced methodologies in distributed networking

- Edge cases, performance, and connectivity in decentralized networks

- The perspective: What most guides miss about decentralized networking for AI

- Next steps: Unlocking secure decentralized networking with Pilot Protocol

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Peer-to-peer communication | Direct node interaction eliminates central bottlenecks, improving scalability and resilience. |
| NAT traversal techniques | Hole-punching and relay protocols enable high connectivity across distributed AI networks. |
| Mesh and consensus methods | Mesh networking and BFT consensus ensure reliable performance and robustness for AI. |
| Mitigating edge cases | Relay fallback, retention strategies, and reputation systems help address network churn and security risks. |
| Practical deployment | Pilot Protocol offers a ready solution for secure decentralized networking in AI environments. |

What is decentralized networking?

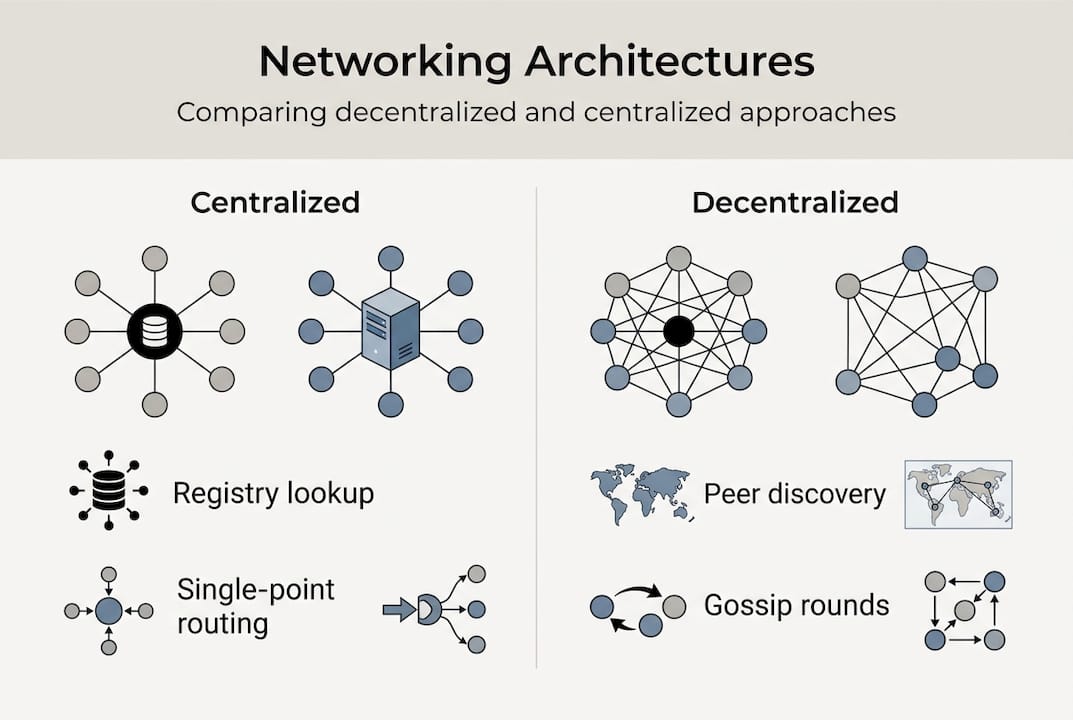

Decentralized networking describes peer-to-peer systems where nodes communicate directly without central servers, using protocols like libp2p for discovery, NAT traversal, and data exchange. There is no single server routing traffic, storing state, or acting as a gatekeeper. Every node is both a client and a server.

The foundational protocols you will encounter most often are:

- libp2p: A modular networking stack used in IPFS and Ethereum, handling peer discovery, transport, and multiplexing.

- DHT/Kademlia: A distributed hash table algorithm that lets nodes find each other dynamically without a directory server.

- Gossip protocols: Epidemic-style message propagation where each node shares updates with a random subset of peers, spreading information exponentially.

Contrast this with centralized approaches. Centralized networks introduce single-point failures and censorship risks that distributed architectures are specifically designed to avoid. When your central broker goes down, every agent in your fleet loses connectivity. In a decentralized model, the network routes around the failure.

“Decentralized networks reduce single-point failures and censorship, enabling resilience and scalability that centralized systems cannot match at scale.”

For AI workloads specifically, the advantages are significant:

- No central bottleneck: Agents communicate directly, reducing latency and eliminating broker capacity limits.

- Fault tolerance: Node failures do not cascade across the entire network.

- Horizontal scalability: Add nodes without reconfiguring a central server.

- Reduced infrastructure cost: Fewer managed services, less operational overhead.

- Geographic flexibility: Nodes in any region connect without VPN tunnels or cloud-specific networking rules.

Exploring core decentralized protocols gives you a practical foundation before you commit to a stack. For broader context on how these principles apply across industries, distributed system frameworks like Holochain offer useful reference architectures.

With context established, let’s move into the core mechanics that make decentralized networks effective for AI environments.

Core mechanics of decentralized networking

Understanding the mechanics is what separates architects who deploy successfully from those who troubleshoot indefinitely. Core mechanics include peer discovery via DHT/Kademlia, hole-punching for NAT traversal, gossip protocols for propagation, and fallback relays or TURN servers for connectivity.

Here is how each layer works in practice:

- Peer discovery: A node joins the DHT and queries for peers by XOR distance (Kademlia routing). Within seconds, it has a list of reachable nodes without any central registry.

- NAT traversal: Most agents sit behind NAT. Hole-punching coordinates simultaneous outbound packets to open a direct path. When that fails, a relay forwards traffic.

- Gossip propagation: Each node fans out messages to a fixed number of peers, so information reaches the full network in a number of rounds that grows logarithmically with network size.

- Consensus: For coordination tasks, Byzantine Fault Tolerant (BFT) algorithms ensure agreement even when a fraction of nodes behave incorrectly.
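
The peer discovery step above can be sketched in a few lines. This is a minimal illustration of Kademlia's XOR metric, not libp2p's implementation: `node_id`, `closest_peers`, and `bucket_index` are hypothetical helper names, and IDs are derived from a SHA-1 hash of a label purely for the demo (real stacks typically derive them from a public key).

```python
import hashlib


def node_id(label: str) -> int:
    """Derive a 160-bit ID from a label (assumption: demo only)."""
    return int.from_bytes(hashlib.sha1(label.encode()).digest(), "big")


def xor_distance(a: int, b: int) -> int:
    """Kademlia distance: bitwise XOR interpreted as an integer."""
    return a ^ b


def closest_peers(target: int, known: list[int], k: int = 3) -> list[int]:
    """Return the k known IDs with the smallest XOR distance to target."""
    return sorted(known, key=lambda peer: xor_distance(peer, target))[:k]


def bucket_index(self_id: int, other_id: int) -> int:
    """k-bucket index: position of the highest bit where the IDs differ.

    Returns -1 when both IDs are identical.
    """
    return xor_distance(self_id, other_id).bit_length() - 1


if __name__ == "__main__":
    me = node_id("agent-a")
    peers = [node_id(f"agent-{c}") for c in "bcdef"]
    for peer in closest_peers(me, peers, k=2):
        print(hex(peer), "bucket", bucket_index(me, peer))
```

A lookup repeats this "ask the closest known peers for even closer ones" step until no closer peer is returned, which is why no central registry is needed.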

| Mechanic | Centralized | Decentralized |
|---|---|---|
| Peer discovery | DNS/registry lookup | DHT/Kademlia |
| Routing | Server-mediated | Direct P2P or relay |
| Failure handling | Single point of failure | Multi-path rerouting |
| Scalability | Vertical (add server capacity) | Horizontal (add nodes) |
| Latency | Adds broker hop | Direct when NAT allows |

Key stat: libp2p NAT traversal achieves 70% direct P2P success, with WebRTC swarms reaching near-100% using incentivized TURN relays. For AI agent fleets, that means most agent-to-agent connections are direct and low-latency, while roughly 30% depend on relay capacity.
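
To turn that figure into a planning number, here is a rough back-of-the-envelope sketch. The function names and the per-connection bandwidth are hypothetical inputs, not measurements from any cited benchmark; only the 70% direct-success rate comes from the text above.

```python
def relayed_connections(total: int, direct_success_pct: int) -> int:
    """Connections expected to need a relay, rounded up.

    Integer arithmetic avoids floating-point drift in the percentage.
    """
    return -(-total * (100 - direct_success_pct) // 100)


def relay_bandwidth_mbps(total: int, direct_success_pct: int, per_conn_kbps: int) -> float:
    """Aggregate relay bandwidth, assuming every relayed connection
    uses per_conn_kbps (a hypothetical average you must measure)."""
    return relayed_connections(total, direct_success_pct) * per_conn_kbps / 1000


if __name__ == "__main__":
    # 1,000 concurrent agent connections at the 70% direct-success rate.
    print(relayed_connections(1000, 70))
    print(relay_bandwidth_mbps(1000, 70, per_conn_kbps=256))
```

Treat the result as a floor: networks dominated by symmetric NAT can push the relayed share well above the average.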

For a technical breakdown of when and why NAT traversal fails, the deep NAT traversal guide covers symmetric NAT edge cases in detail. You can also review how peer discovery and relays work together in serverless agent architectures, and explore mesh discovery methods for more advanced topologies. For deeper NAT traversal research, peer-reviewed benchmarks provide solid grounding.

Now that the mechanics are clear, it is important to understand the methodologies used to optimize performance and security in real-world applications.

Advanced methodologies in distributed networking

Mechanics tell you how the network operates. Methodologies tell you how to make it work reliably under production conditions. Advanced methodologies for distributed AI include mesh networks for multi-hop relaying, gossip and epidemic dissemination, reputation-weighted task allocation, and BFT consensus for AI coordination.

Mesh networking is particularly valuable in multi-cloud environments. When direct paths between clouds are blocked or slow, multi-hop relaying through intermediate nodes maintains connectivity without manual VPN configuration. Each node participates in routing, distributing the load.

Gossip and epidemic dissemination scale naturally. As you add agents, message propagation stays efficient because each node only contacts a fixed subset of peers. The information spreads exponentially without any central coordinator.
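
A small simulation makes the scaling behavior concrete. This is a simplified push-gossip model under stated assumptions (synchronous rounds, uniform random peer selection, no message loss); `simulate_gossip` is a hypothetical name, not part of any framework mentioned here.

```python
import random


def simulate_gossip(n: int, fanout: int, seed: int = 0, max_rounds: int = 50) -> tuple[int, int]:
    """Run push gossip from node 0 and return (rounds_used, nodes_informed).

    Each round, every informed node forwards the message to `fanout`
    distinct peers chosen uniformly at random (excluding itself).
    """
    rng = random.Random(seed)
    informed = {0}
    rounds = 0
    while len(informed) < n and rounds < max_rounds:
        newly_informed = set()
        for node in informed:
            candidates = [peer for peer in range(n) if peer != node]
            newly_informed.update(rng.sample(candidates, fanout))
        informed |= newly_informed
        rounds += 1
    return rounds, len(informed)


if __name__ == "__main__":
    for size in (20, 200, 2000):
        rounds, reached = simulate_gossip(size, fanout=3, seed=1)
        print(f"{size} nodes: {reached} informed after {rounds} rounds")
```

With a fanout of 3, the informed set can at most quadruple each round, so 20 nodes need at least 3 rounds. Growing the network tenfold adds only a few more rounds rather than ten times as many.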

Reputation-weighted task allocation is a practical security layer. Nodes that consistently behave correctly accumulate reputation scores. When your orchestration layer assigns compute tasks, it routes work to high-reputation nodes first, reducing exposure to malicious or unreliable participants.
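
One way to sketch this is shown below. The scoring rule (a Laplace-smoothed success ratio) and the names `Peer` and `pick_workers` are illustrative assumptions; the frameworks named in this article may score reputation quite differently.

```python
from dataclasses import dataclass


@dataclass
class Peer:
    peer_id: str
    successes: int = 0
    failures: int = 0

    @property
    def reputation(self) -> float:
        """Smoothed success ratio; a peer with no history scores 0.5."""
        return (self.successes + 1) / (self.successes + self.failures + 2)


def pick_workers(peers: list[Peer], count: int, min_reputation: float = 0.6) -> list[Peer]:
    """Return up to `count` peers above the threshold, best first."""
    eligible = [p for p in peers if p.reputation >= min_reputation]
    return sorted(eligible, key=lambda p: p.reputation, reverse=True)[:count]


if __name__ == "__main__":
    fleet = [Peer("a", 9, 1), Peer("b", 1, 9), Peer("c"), Peer("d", 5, 1)]
    print([p.peer_id for p in pick_workers(fleet, count=2)])
```

The smoothing keeps brand-new peers from being ranked as either perfect or useless, and the threshold keeps unproven peers out of sensitive work until they build a track record.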

BFT consensus handles the hardest problem: agreement under adversarial conditions. In a 20-node simulation, BFT algorithms maintain consensus even when 20% of nodes behave incorrectly.
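
The arithmetic behind that tolerance is worth checking for your own fleet size. The sketch below uses the classical bound for Byzantine agreement (n ≥ 3f + 1) and a quorum size chosen so that any two quorums share at least one honest node. The function names are hypothetical, and specific BFT protocols may use different thresholds.

```python
def max_faulty(n: int) -> int:
    """Largest number of Byzantine nodes f such that n >= 3f + 1."""
    return (n - 1) // 3


def quorum_size(n: int) -> int:
    """Smallest quorum q such that two quorums overlap in at least f + 1 nodes."""
    return (n + max_faulty(n)) // 2 + 1


def tolerates(n: int, faulty: int) -> bool:
    """True if a network of n nodes stays within the classical bound."""
    return faulty <= max_faulty(n)


if __name__ == "__main__":
    n = 20
    print(max_faulty(n), quorum_size(n), tolerates(n, faulty=4))
```

For 20 nodes the bound allows up to 6 faulty nodes, so the 20% (4-node) scenario above sits inside the limit with some margin.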

Frameworks actively using these patterns include:

- Hyperspace AGI: Uses libp2p with gossip and CRDT for distributed AI coordination.

- OpenCLAW-P2P: Implements cognitive mesh networking with reputation and BFT layers.

- ARIA: Optimized for efficient CPU inference across decentralized nodes.

For AI developers building on these frameworks, libp2p provides the most robust foundation for distributed AI, with Hyperspace AGI demonstrating gossip and CRDT patterns at scale.

Reviewing AI networking challenges helps you anticipate where these methodologies break down. For production deployments, secure infrastructure tips provide concrete hardening steps.

Pro Tip: Use sharding to partition your agent network into smaller gossip domains, and combine it with federated learning so agents share model updates without centralizing training data. This keeps both compute and data distributed.
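
As a rough sketch of both ideas: agents can be mapped to gossip domains by hashing their IDs, and model updates can be combined with a weighted average in the style of federated averaging. `shard_for` and `federated_average` are hypothetical helpers, and the plain-list model representation is an assumption made to keep the example dependency-free.

```python
import hashlib


def shard_for(peer_id: str, num_shards: int) -> int:
    """Deterministically map a peer ID to a gossip domain."""
    digest = hashlib.sha256(peer_id.encode()).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def federated_average(updates: list[list[float]], sample_counts: list[int]) -> list[float]:
    """Average model updates, weighting each by its local sample count.

    Only the updates travel over the network; training data stays local.
    """
    total = sum(sample_counts)
    width = len(updates[0])
    return [
        sum(count * update[i] for update, count in zip(updates, sample_counts)) / total
        for i in range(width)
    ]


if __name__ == "__main__":
    agents = [f"agent-{i}" for i in range(8)]
    print({a: shard_for(a, num_shards=4) for a in agents})
    print(federated_average([[1.0, 2.0], [3.0, 4.0]], sample_counts=[1, 3]))
```

Note that plain modulo sharding reshuffles most agents when the shard count changes; consistent hashing is a common alternative if you expect to resize often.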

Having addressed methodologies, let’s tackle common edge cases and performance nuances AI architects regularly encounter.

Edge cases, performance, and connectivity in decentralized networks

Production deployments surface problems that benchmarks often miss. Edge cases include symmetric NATs that block direct P2P, node churn (blockchain P2P networks report over 90% daily retention, which still means a steady share of nodes turns over every day), Byzantine faults (the simulations cited here held consensus with 20% of nodes faulty), and variable performance caused by malicious nodes.

Symmetric NAT is the hardest connectivity problem. Unlike full-cone or port-restricted NAT, symmetric NAT assigns a different external port for each destination. Hole-punching fails here, and relay fallback becomes mandatory. Designing your relay capacity around worst-case NAT scenarios is not optional.
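
A toy model shows why the mapping behavior matters. This sketch only models port mapping, not filtering rules or port prediction, and the class names, addresses, and `punchable` helper are illustrative assumptions rather than any library's API.

```python
import itertools


class EndpointIndependentNAT:
    """Cone-style NAT: one external port per internal socket, for every destination."""

    def __init__(self, first_port: int = 40000):
        self._next_port = itertools.count(first_port)
        self._mappings: dict[str, int] = {}

    def external_port(self, internal: str, destination: str) -> int:
        if internal not in self._mappings:
            self._mappings[internal] = next(self._next_port)
        return self._mappings[internal]


class SymmetricNAT:
    """Symmetric NAT: a fresh external port for each (socket, destination) pair."""

    def __init__(self, first_port: int = 50000):
        self._next_port = itertools.count(first_port)
        self._mappings: dict[tuple[str, str], int] = {}

    def external_port(self, internal: str, destination: str) -> int:
        key = (internal, destination)
        if key not in self._mappings:
            self._mappings[key] = next(self._next_port)
        return self._mappings[key]


def punchable(nat) -> bool:
    """True if the port a rendezvous server observes is the one the peer must reach."""
    socket = "10.0.0.5:5000"
    seen_by_rendezvous = nat.external_port(socket, "rendezvous.example:4001")
    used_toward_peer = nat.external_port(socket, "203.0.113.9:4001")
    return seen_by_rendezvous == used_toward_peer


if __name__ == "__main__":
    print(punchable(EndpointIndependentNAT()), punchable(SymmetricNAT()))
```

Hole-punching relies on the rendezvous server telling each peer which address to send to. Behind a symmetric NAT that advertised port is not the one used toward the peer, so the coordinated packets miss and a relay has to carry the traffic.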

Node churn is a constant in large agent networks. Nodes join and leave continuously. Effective retention mechanisms include persistent peer lists, aggressive reconnection logic, and incentivized relay nodes that stay online because they earn rewards for uptime.
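
Reconnection logic is usually paired with capped exponential backoff so that a wave of departures does not turn into a reconnect storm. The sketch below is one minimal way to do that; `dial` stands in for whatever connect call your networking stack provides, and all names and defaults here are hypothetical.

```python
import time
from typing import Callable, Iterable


def backoff_delays(base: float = 1.0, factor: float = 2.0,
                   cap: float = 60.0, attempts: int = 8) -> list[float]:
    """Delays in seconds: base, base*factor, ... capped at `cap`."""
    return [min(cap, base * factor ** i) for i in range(attempts)]


def reconnect(peer: str, dial: Callable[[str], bool], delays: Iterable[float],
              sleep: Callable[[float], None] = time.sleep) -> bool:
    """Try to dial `peer` once per delay; wait after each failed attempt.

    Returns True on the first successful dial, False if all attempts fail.
    """
    for delay in delays:
        if dial(peer):
            return True
        sleep(delay)
    return False


if __name__ == "__main__":
    print(backoff_delays())
```

In production you would typically add random jitter to each delay and persist the list of known-good peers to disk so a restarted node can rejoin without a fresh bootstrap.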

Empirical benchmarks from 20-node simulations show knowledge propagation completing in 3 gossip rounds, under 10 seconds, with consensus achieved in 60 seconds even at 20% Byzantine node presence.

| Metric | Benchmark result |
|---|---|
| Gossip propagation (20 nodes) | 3 rounds, under 10 seconds |
| BFT consensus (20% Byzantine) | 60 seconds |
| Daily node retention (blockchain P2P) | Over 90% |
| Direct NAT traversal (libp2p) | 70% success |
| NAT traversal with relays | Near 100% |

Mitigation strategies for the most common failure modes:

- Malicious nodes: Implement reputation scoring and blacklisting at the protocol layer.

- Symmetric NAT: Pre-provision TURN relay capacity; do not rely solely on hole-punching.

- High churn: Use persistent peer IDs and aggressive DHT refresh intervals.

- Variable performance: Monitor per-node latency and route tasks away from slow peers.

- Byzantine faults: Keep the expected share of faulty nodes well below the one-third theoretical limit (classical BFT needs n ≥ 3f + 1 nodes to tolerate f faults), and size quorums accordingly.
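
The "variable performance" mitigation above can be sketched with an exponentially weighted moving average (EWMA) per peer. `LatencyTracker` and its parameters are hypothetical; how you collect the latency samples depends on your stack.

```python
from typing import Optional


class LatencyTracker:
    """Tracks a smoothed latency per peer and ranks peers for task routing."""

    def __init__(self, alpha: float = 0.2):
        self.alpha = alpha  # weight given to the newest sample
        self.ewma: dict[str, float] = {}

    def record(self, peer: str, latency_ms: float) -> None:
        previous = self.ewma.get(peer)
        if previous is None:
            self.ewma[peer] = latency_ms
        else:
            self.ewma[peer] = self.alpha * latency_ms + (1 - self.alpha) * previous

    def fastest(self, count: int, max_ms: Optional[float] = None) -> list[str]:
        """Return up to `count` peers, lowest smoothed latency first,
        skipping peers slower than `max_ms` when it is set."""
        ranked = sorted(self.ewma.items(), key=lambda item: item[1])
        return [peer for peer, ms in ranked if max_ms is None or ms <= max_ms][:count]


if __name__ == "__main__":
    tracker = LatencyTracker(alpha=0.5)
    for peer, ms in [("a", 100), ("a", 200), ("b", 50), ("c", 400)]:
        tracker.record(peer, ms)
    print(tracker.fastest(count=2, max_ms=250))
```

A higher `alpha` reacts faster to sudden slowdowns but is noisier; a lower one smooths out spikes at the cost of slower detection.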

For specific NAT edge case solutions in zero-config deployments, the linked guide covers agent-behind-NAT scenarios directly. You can also review overlay protocol benchmarks to understand transport-layer trade-offs.

Pro Tip: Path-aware networking protocols like SCION let you select routes based on measured latency and reliability rather than BGP shortest-path. Combined with incentivized relays, this gives multi-cloud deployments predictable performance even across hostile network conditions.

With challenges understood and mitigated, here is what seasoned practitioners know that most guides skip.

The perspective: What most guides miss about decentralized networking for AI

Most articles present decentralized networking as a straightforward upgrade from centralized systems. It is not. Centralized networks are faster and more reliable under ideal conditions. Decentralized networks win on resilience and scalability, but they introduce complex setup, variable performance, and exposure to malicious nodes.

The real lesson from production deployments is that decentralization requires more discipline, not less. You cannot set up a gossip network and walk away. NAT traversal rates, node churn, and Byzantine behavior all demand ongoing monitoring and tested fallback paths.

“You cannot rely on ideal conditions. Resilience comes from tested fallback paths and incentivized relays, not from assuming the network will self-heal.”

Protocol selection matters more than most architects realize. Choosing libp2p because it is popular is not a strategy. You need to evaluate it against your specific NAT environment, churn rate, and consensus requirements. Understanding why autonomous agents need private discovery is a good starting point for making that evaluation grounded in real agent behavior rather than theoretical benchmarks.

Balance the trade-offs deliberately. Decentralization is the right choice when resilience and scale outweigh operational complexity. Know when that threshold applies to your architecture.

Next steps: Unlocking secure decentralized networking with Pilot Protocol

Enhancing your decentralized networking strategy is now easier than ever.

Pilot Protocol gives you a production-ready decentralized networking stack built specifically for AI agents and distributed systems. You get virtual addresses, encrypted tunnels, NAT punch-through, and relay fallback out of the box, without building these layers yourself.

Pilot Protocol supports multi-cloud and cross-region connectivity, wraps existing protocols like HTTP, gRPC, and SSH inside its overlay, and integrates with your existing infrastructure through the CLI, Python SDK, or Go SDK. Whether you are deploying autonomous agent fleets, securing data streams, or orchestrating workloads across clouds, Pilot Protocol handles the networking layer so you can focus on your agents.

Explore Pilot Protocol for AI networking and start building secure, direct peer-to-peer infrastructure today.

Frequently asked questions

How does peer-to-peer networking improve AI scalability?

Peer-to-peer networks eliminate central bottlenecks, letting distributed AI agents communicate directly and scale horizontally by adding nodes rather than upgrading central servers.

What protocols are commonly used for decentralized networking?

Popular protocols include libp2p, DHT/Kademlia, gossip, and mesh network frameworks like OpenCLAW-P2P, each addressing different layers of peer discovery and data propagation.

How do decentralized networks handle NAT traversal challenges?

They use hole-punching for direct connections and relay fallback when that fails. libp2p hole-punching succeeds for roughly 70% of NAT-bound peers, 97.6% of those on the first attempt, and relays bring overall connectivity to near 100%.

What are the biggest challenges for decentralized AI networking?

The main issues are symmetric NAT blocking direct P2P, high node churn, variable performance from malicious nodes, and maintaining BFT consensus when up to 20% of nodes behave incorrectly.

How does reputation-weighted task allocation improve reliability?

By routing work to high-reputation nodes first, your orchestration layer reduces exposure to malicious or unreliable participants and maintains communication integrity across the network.