Secure data exchange for multi-cloud AI systems

TL;DR:

- Traditional encryption protects data in transit but fails to secure metadata and internal communication channels, risking sensitive information leaks in multi-agent AI networks. Implementing layered frameworks like AgentCrypt’s multi-level encryption, coupled with comprehensive audit coverage, trust boundary enforcement, and secure multi-cloud connectivity, is essential for safeguarding data across distributed environments. Continuous policy enforcement, mutual authentication, and secure computation protocols further strengthen security in autonomous agent systems.

Encryption is standard practice, yet autonomous AI agent networks still expose sensitive data every day. The real problem is not whether you encrypt data in transit. It is whether your security model accounts for the entire surface area of a distributed, multi-agent environment. Metadata leaks, inter-agent message channels, misconfigured cloud gateways, and incomplete audit coverage create gaps that standard TLS or end-to-end encryption cannot close. This guide walks you through the threats, the frameworks, and the practical steps you need to secure data exchange across multi-cloud AI deployments at every layer.

Table of Contents

- Why traditional encryption isn’t enough for AI agent data exchange

- Key frameworks and protocols for secure data exchange

- Securing data transfer in multi-cloud and distributed networks

- Granular controls: Authentication, RBAC, and endpoint trust

- Advanced data privacy: Secure computation and multi-party protocols

- What most AI teams misunderstand about secure data exchange

- Next steps: Accelerate secure agent data exchange with Pilot Protocol

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Encryption alone is insufficient | Traditional encryption does not address metadata, internal channels, or endpoint risks in agent networks. |
| Layered frameworks boost security | Adopting multi-level frameworks like AgentCrypt is key to balancing privacy, speed, and usability. |
| Inter-cloud security needs depth | Secure data transfer requires robust controls over connectivity, key management, and access policies. |
| Granular controls safeguard AI agents | Continuous authentication and fine-grained RBAC help prevent unauthorized or lateral agent access. |
| Monitor internal communication | Auditing both internal and external agent channels is essential to prevent overlooked leaks. |

Why traditional encryption isn’t enough for AI agent data exchange

Most AI teams deploy encryption and assume their data is protected. That assumption is costly.

End-to-end encryption protects data content in transit but does not cover metadata or endpoint security. In agent networks, metadata is just as dangerous as raw content. It reveals interaction patterns, agent identities, call frequencies, and coordination structure. An attacker who cannot read your messages can still map your entire agent topology from metadata alone.

In agent networks, what agents say to each other is sensitive. But who contacts whom, when, and how often can be just as revealing.
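To make the metadata risk concrete, here is a minimal sketch of how an observer could reconstruct an agent topology without decrypting a single payload. The record format `(sender, receiver, timestamp)`, the agent names, and the helper functions are all hypothetical and chosen only for illustration.

```python
from collections import Counter

# Hypothetical metadata an observer could capture without reading payloads:
# (sender_id, receiver_id, unix_timestamp). All values are made up.
observed = [
    ("orchestrator", "retriever", 1000),
    ("retriever", "orchestrator", 1002),
    ("orchestrator", "executor", 1003),
    ("orchestrator", "retriever", 1010),
    ("executor", "orchestrator", 1011),
]


def infer_topology(records):
    """Count directed edges between agents using metadata only."""
    return Counter((src, dst) for src, dst, _ in records)


def most_connected(records):
    """Return the agent that appears on the most messages (a likely hub)."""
    degree = Counter()
    for src, dst, _ in records:
        degree[src] += 1
        degree[dst] += 1
    return degree.most_common(1)[0][0]


edges = infer_topology(observed)
hub = most_connected(observed)
```

Even with fully encrypted payloads, `edges` exposes who talks to whom and how often, and `hub` singles out the coordinating agent as a target.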

The risk compounds in multi-agent systems. The AgentLeak benchmark found that multi-agent LLM systems leak private data through internal inter-agent message channels at a 68.8% leakage rate, compared to 27.2% for single-agent output. Output-only audits miss 41.7% of violations because internal message channels are simply not monitored.

Common leakage vectors in multi-agent networks include:

- Inter-agent message payloads that carry sensitive context between reasoning steps

- Message metadata including sender IDs, timestamps, and routing headers

- Side-channel signals such as response timing or token consumption patterns

- Incomplete audit scope that logs final outputs but ignores internal chain-of-thought or tool calls

- Unencrypted coordination channels between orchestrator and worker agents

Understanding encryption protocols for AI is your starting point, but it only covers one layer.

Pro Tip: Treat internal agent communications with the same rigor as external outputs. Audit inter-agent messages separately and apply data classification policies to tool call results, not just final responses.
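The difference between output-only and full-scope auditing can be sketched as follows. This is a simplified illustration, not a production detector: the channel labels, the `Event` type, and the two regular expressions are assumptions, and a real deployment would rely on a proper data classification service.

```python
import re
from dataclasses import dataclass

# Illustrative patterns only; real classifiers are far more thorough.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


@dataclass
class Event:
    channel: str  # assumed labels: "inter_agent", "tool_call", "final_output"
    sender: str
    text: str


def audit(events, channels):
    """Return (channel, sender, label) for each pattern hit in the audited channels."""
    findings = []
    for ev in events:
        if ev.channel not in channels:
            continue  # this channel is outside the audit scope
        for label, pattern in PATTERNS.items():
            if pattern.search(ev.text):
                findings.append((ev.channel, ev.sender, label))
    return findings


events = [
    Event("inter_agent", "retriever", "User email is jane@example.com, SSN 123-45-6789"),
    Event("tool_call", "executor", "lookup(account='jane@example.com')"),
    Event("final_output", "orchestrator", "Your request has been processed."),
]

output_only = audit(events, {"final_output"})
full_scope = audit(events, {"inter_agent", "tool_call", "final_output"})
```

In this toy trace the output-only audit finds nothing, while the full-scope audit flags three exposures that never reach the final response.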

Key frameworks and protocols for secure data exchange

Choosing the right framework is where most engineering teams stall. The options range from basic policy enforcement to advanced cryptographic computation, and each involves real trade-offs.

AgentCrypt defines four levels of communication security for multi-agent systems:

- Level 1: Plaintext — No encryption. Only appropriate for sandboxed development environments with no sensitive data.

- Level 2: Policy-based encrypted retrieval — Agents retrieve encrypted data based on defined access policies. This is the minimum viable tier for production agent systems.

- Level 3: Policy-based computation privacy — Encryption extends to the computation layer, so agents can process data without seeing its plaintext. This balances strong privacy with manageable performance overhead.

- Level 4: Fully Homomorphic Encryption (FHE) — Agents compute directly on encrypted data. Maximum privacy guarantees at significant computational cost.

Adopting a multi-level framework matters because no single encryption mode fits all workloads. High-frequency coordination messages between agents need low latency, while sensitive inference results on regulated data need strong cryptographic guarantees.

Here are the key steps to choose and adopt a secure framework for your agent system:

- Classify your data by sensitivity: differentiate between agent coordination metadata, user-facing outputs, and regulated data like PII or financial records.

- Map your trust boundaries: determine which agents communicate directly and which route through an orchestrator or broker.

- Select the framework tier that matches your sensitivity classification without over-engineering low-risk flows.

- Validate your audit coverage by testing whether your logging captures inter-agent messages, not just final outputs.

- Review your direct communication protocols for AI agents to confirm encryption is applied at every hop.

| Level | Encryption method | Strengths | Typical use case |
|---|---|---|---|
| Level 1 | None | Zero overhead | Dev/test sandboxes only |
| Level 2 | Policy-based encrypted retrieval | Balances access control with performance | Agent memory, knowledge base access |
| Level 3 | Policy-based computation privacy | Strong privacy, moderate overhead | Sensitive inference pipelines |
| Level 4 | FHE | Maximum privacy guarantees | Regulated data computation, financial AI |

For secure agent networking workflow integration, choosing Level 2 or Level 3 as your baseline is the right call for most production deployments.
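One way to turn the classification and tier-selection steps into code is a small lookup that picks the highest tier any data class in a flow requires. The data class names and the mapping below are assumptions derived loosely from the use cases in the table, not part of AgentCrypt itself.

```python
from enum import IntEnum


class Level(IntEnum):
    PLAINTEXT = 1
    ENCRYPTED_RETRIEVAL = 2
    COMPUTATION_PRIVACY = 3
    FHE = 4


# Assumed mapping from data class to minimum tier; adjust to your own policy.
BASELINE = {
    "dev_sandbox": Level.PLAINTEXT,
    "coordination_metadata": Level.ENCRYPTED_RETRIEVAL,
    "agent_memory": Level.ENCRYPTED_RETRIEVAL,
    "sensitive_inference": Level.COMPUTATION_PRIVACY,
    "regulated": Level.FHE,
}


def select_level(data_classes, production=True):
    """Pick the highest tier required by any data class in the flow."""
    unknown = [c for c in data_classes if c not in BASELINE]
    if unknown:
        # Refuse to guess: unclassified data must be classified first.
        raise ValueError(f"unclassified data: {unknown}")
    level = max(BASELINE[c] for c in data_classes)
    if production and level < Level.ENCRYPTED_RETRIEVAL:
        level = Level.ENCRYPTED_RETRIEVAL  # Level 2 is the production floor
    return level
```

A flow that mixes `coordination_metadata` with `sensitive_inference` resolves to Level 3, and production flows are never allowed below Level 2.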

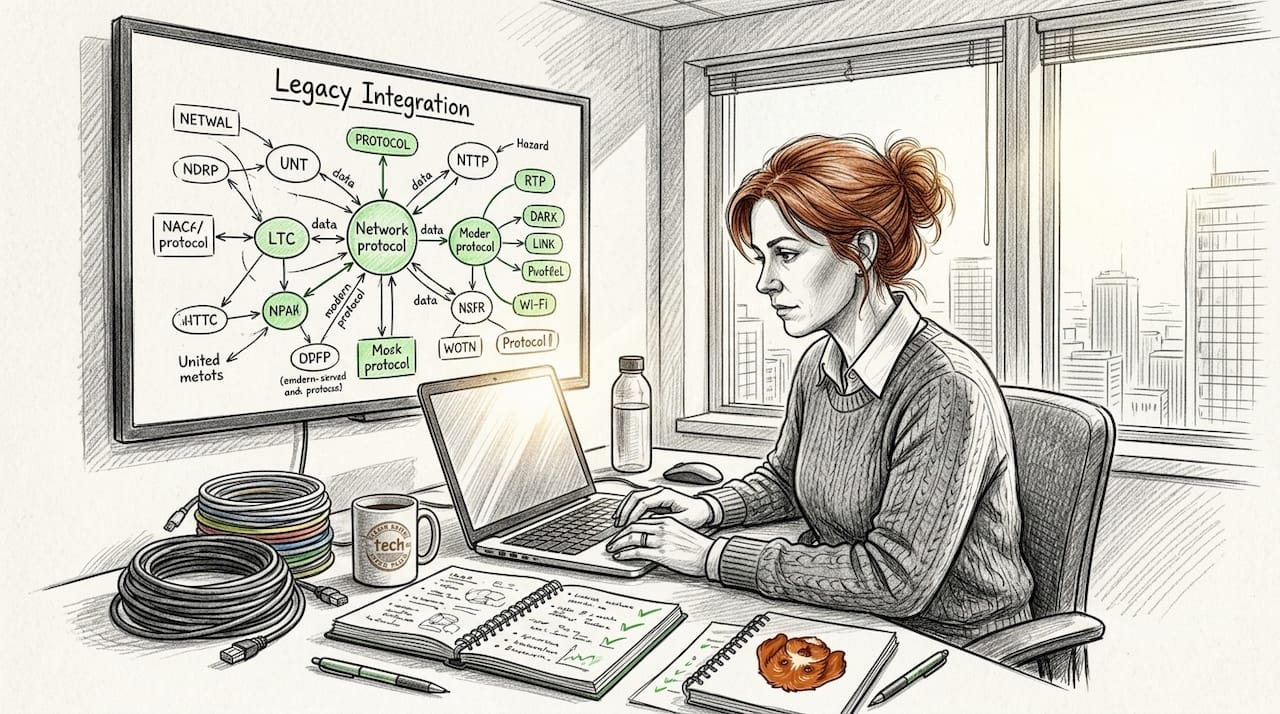

Securing data transfer in multi-cloud and distributed networks

Cross-cloud connectivity is where security architecture meets infrastructure reality. Your agents may span AWS, GCP, and Azure simultaneously, and securing the pipes between them requires more than a single VPN configuration.

Multi-cloud connectivity typically relies on three primary methods: IPsec VPNs for encrypted internet transit, private interconnects via colocation facilities like Equinix for dedicated circuits, and cloud transit gateways for routing traffic between cloud regions. Each method has a distinct trust and performance profile.

| Connectivity method | Use case | Trust level | Notes |
|---|---|---|---|
| IPsec VPN | Internet-based cross-cloud traffic | Medium | Encrypted but traverses public internet |
| Private interconnect | High-throughput, low-latency agent traffic | High | Dedicated circuit, no public internet exposure |
| Cloud transit gateway | Intra-cloud or regional routing | High with config | Managed by cloud provider, scalable |
| P2P overlay network | Direct agent-to-agent over any network | High | NAT traversal, mutual authentication |

Practical tips for securing cross-cloud traffic:

- Separate storage and processing clouds so that a breach in one environment does not expose both data at rest and data in process.

- Use cross-cloud KMS and HSM for key management, and route sensitive data transfers through DLP or token exchange gateways.

- Apply network segmentation so that agents in different trust zones cannot freely communicate without policy enforcement.

- Rotate credentials automatically and avoid static API keys embedded in agent runtime environments.

- Enforce TLS 1.3 minimum on all agent-to-agent connections, including internal service mesh traffic.
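As a minimal sketch of the TLS 1.3 floor, Python's standard `ssl` module can pin the minimum protocol version on both ends of a connection. The function names and the optional certificate paths are hypothetical. The server context also demands a client certificate, so both sides authenticate each other.

```python
import ssl


def build_server_context(cert_file=None, key_file=None, ca_file=None):
    """Server side: TLS 1.3 minimum and a client certificate is required."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    ctx.verify_mode = ssl.CERT_REQUIRED  # reject peers without a valid cert
    if cert_file and key_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if ca_file:
        ctx.load_verify_locations(cafile=ca_file)
    return ctx


def build_client_context(cert_file=None, key_file=None, ca_file=None):
    """Client side: TLS 1.3 minimum; server cert and hostname are verified by default."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    if cert_file and key_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)  # client identity
    if ca_file:
        ctx.load_verify_locations(cafile=ca_file)
    return ctx
```

In a real deployment the certificate and CA paths would point at per-agent identities issued by your own CA; they are left optional here so the sketch runs without any files on disk.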

Data Loss Prevention (DLP) gateways add an important layer. They intercept data flows between agents or across cloud boundaries and enforce classification policies in real time. Paired with tokenization, which replaces sensitive values with non-sensitive stand-ins, they reduce the blast radius of any single agent compromise. Optimizing cloud scalability and security together requires treating these controls as standard infrastructure, not optional add-ons.
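Tokenization at a boundary can be illustrated with a small in-memory vault. `TokenVault` and `scrub` are hypothetical names, and a real tokenization service would persist the mapping in a hardened store with its own access controls rather than in a Python dictionary.

```python
import secrets


class TokenVault:
    """In-memory stand-in for a tokenization service (illustrative only)."""

    def __init__(self):
        self._to_token = {}
        self._to_value = {}

    def tokenize(self, value):
        """Return a stable random token for a sensitive value."""
        if value not in self._to_token:
            token = "tok_" + secrets.token_hex(8)
            self._to_token[value] = token
            self._to_value[token] = value
        return self._to_token[value]

    def detokenize(self, token):
        """Map a token back to the original value (vault access required)."""
        return self._to_value[token]


def scrub(message, sensitive_fields, vault):
    """Replace sensitive field values before a message crosses a boundary."""
    return {
        key: vault.tokenize(value) if key in sensitive_fields else value
        for key, value in message.items()
    }


vault = TokenVault()
outbound = scrub(
    {"account": "DE89370400440532013000", "intent": "balance_check"},
    sensitive_fields={"account"},
    vault=vault,
)
```

A downstream agent that receives `outbound` sees only the token, so compromising that agent does not reveal the account number unless the vault is also breached.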

For teams connecting agents across AWS, GCP, and Azure without legacy VPN overhead, peer-to-peer overlay networks with built-in NAT traversal and mutual authentication are an increasingly practical alternative. They eliminate the complexity of managing IPsec tunnels across multiple cloud providers while maintaining strong encryption guarantees. You can learn more about network tunnels for AI agents to see how overlay approaches compare to traditional VPN setups in agent deployments.

Pro Tip: Use cloud-native key management services (KMS) with automatic rotation policies instead of embedding static credentials in agent configurations. Static credentials are a single point of failure and a frequent root cause of multi-cloud data exposure.

Granular controls: Authentication, RBAC, and endpoint trust

Encryption secures the channel. Authentication and access control determine who can use it. In agent networks, this distinction is critical because the entities making requests are not human users. They are autonomous processes with varying permission requirements.

Agent-to-agent authentication differs from user-to-agent authentication in a key way. Users authenticate once and establish a session. Agents authenticate on every request, which in high-frequency systems can mean every few milliseconds. Multi-agent security requires continuous authentication, granular RBAC, and trusted network enforcement to protect data during processing.

Practical controls to implement across your agent fleet:

- IAM policies per agent identity: assign each agent a unique identity with scoped permissions, not shared service accounts.

- Role-based access control (RBAC): define roles by function such as retriever, executor, or orchestrator, and restrict each role to the minimum data access required.

- Network trust boundaries: enforce that agents in different trust zones cannot communicate without passing through an authenticated policy enforcement point.

- Mutual TLS (mTLS): require both sides of every agent-to-agent connection to present valid certificates, not just the server side.

- Short-lived credentials: use tokens with expiry windows measured in minutes, not hours, for agent runtime authentication.
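The per-agent identity, RBAC, and short-lived credential controls above can be combined in a compact sketch. The token format, role names, permission strings, and the hardcoded `SECRET` are assumptions for illustration. In practice the signing key would come from a KMS and you would likely use an established token standard instead of this hand-rolled format.

```python
import hashlib
import hmac
import time

SECRET = b"demo-only-secret"  # assumption: fetched from a KMS in practice

# Assumed roles and permissions, each restricted to the minimum it needs.
ROLE_PERMISSIONS = {
    "retriever": {"kb:read"},
    "executor": {"tool:invoke"},
    "orchestrator": {"kb:read", "tool:invoke", "task:assign"},
}


def _sign(payload):
    return hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(agent_id, role, ttl_seconds=300, now=None):
    """Issue a signed token that expires after ttl_seconds (minutes, not hours)."""
    issued_at = time.time() if now is None else now
    payload = f"{agent_id}|{role}|{int(issued_at + ttl_seconds)}"
    return f"{payload}|{_sign(payload)}"


def authorize(token, permission, now=None):
    """Check signature, expiry, and role permission on every request."""
    parts = token.split("|")
    if len(parts) != 4:
        return False
    agent_id, role, expires, signature = parts
    payload = f"{agent_id}|{role}|{expires}"
    if not hmac.compare_digest(signature, _sign(payload)):
        return False  # tampered or forged token
    current = time.time() if now is None else now
    if current >= int(expires):
        return False  # expired credential
    return permission in ROLE_PERMISSIONS.get(role, set())
```

Because `authorize` runs on every call, a token that has expired or been edited to claim a broader role is rejected immediately rather than at the next session renewal. Agent IDs containing `|` would break this simple format.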

Endpoint trust is especially critical in distributed and Multi-Party Computation (MPC) systems. An agent that appears to hold a valid credential but runs on a compromised host can exfiltrate data during processing. Continuous authentication solves part of this. Attestation, verifying the integrity of the runtime environment itself, solves the rest.

Review network security for multi-agent systems to see how these controls map to specific network topology configurations.

Pro Tip: Use automated policy enforcement tools that detect privilege escalation in real time. An agent that suddenly requests access to data outside its assigned role is a strong signal of compromise or misconfiguration.

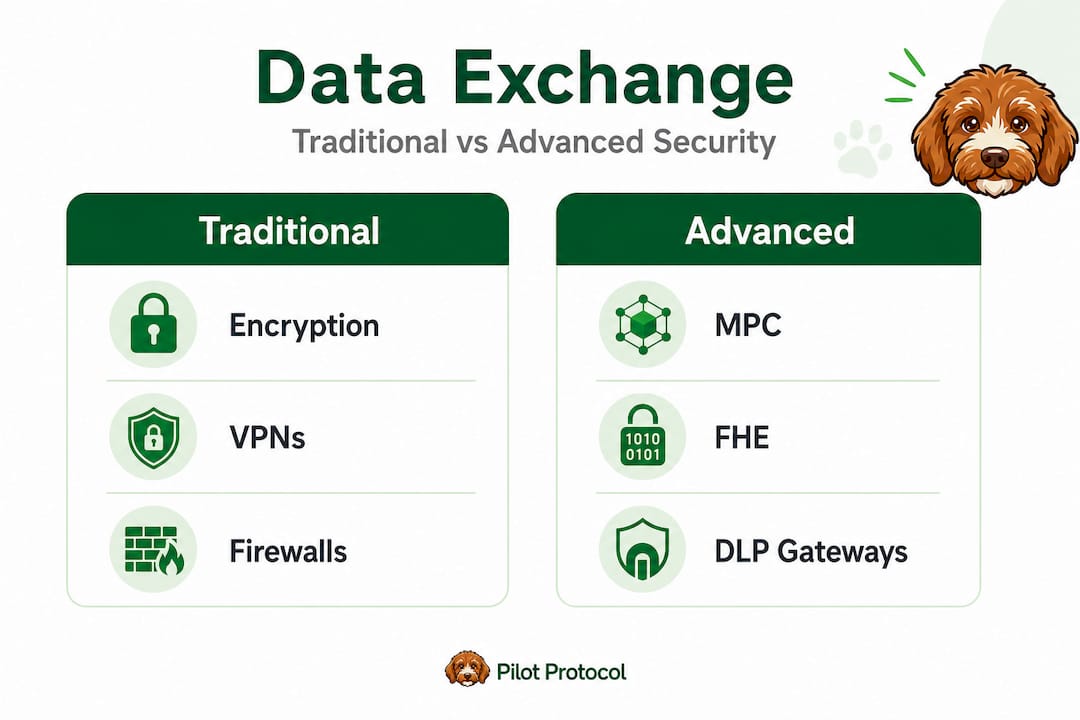

Advanced data privacy: Secure computation and multi-party protocols

When agents must process sensitive data without exposing it in plaintext, standard encryption is not sufficient. This is where secure computation techniques become relevant.

Multi-Party Computation (MPC) allows multiple agents or nodes to jointly compute a result over combined inputs without any single party seeing the others’ raw data. This is useful for federated learning scenarios, joint analytics across organizations, and privacy-preserving inference on regulated datasets.
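A minimal sketch of the idea uses additive secret sharing to compute a joint sum. This is one of the simplest MPC building blocks, shown here in a single process for clarity. It is not how MP-SPDZ or any specific framework is implemented, and the function names are hypothetical.

```python
import secrets

PRIME = 2**61 - 1  # field modulus (a Mersenne prime)


def share(value, n_parties):
    """Split value into n random shares that sum to value modulo PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def reconstruct(shares):
    """Recombine shares; any subset smaller than all of them reveals nothing."""
    return sum(shares) % PRIME


def secure_sum(private_inputs):
    """Each party shares its input; parties add shares locally, then combine."""
    n = len(private_inputs)
    # Row i holds the shares of party i's input, one share per party.
    distributed = [share(value, n) for value in private_inputs]
    # Party j only ever sees column j, which looks uniformly random.
    partial_sums = [sum(row[j] for row in distributed) % PRIME for j in range(n)]
    return reconstruct(partial_sums)


total = secure_sum([120, 45, 300])
```

Each party learns the total but only ever handles random-looking shares of the other parties' inputs. In a real deployment every share would travel over an authenticated, encrypted channel between separate hosts.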

Fully Homomorphic Encryption (FHE) enables an agent to compute directly on encrypted data and return an encrypted result. The compute node never sees the plaintext. FHE is the strongest privacy guarantee available but carries significant computational overhead.
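Full FHE requires a dedicated library, but the core idea of computing on ciphertexts can be shown with a toy Paillier scheme. Note the substitution: Paillier is only additively homomorphic, so it supports sums on encrypted values but not arbitrary computation. The primes here are far too small for real use, and the function names are hypothetical.

```python
import math
import secrets


def keygen(p=2**31 - 1, q=2**61 - 1):
    """Toy Paillier key pair from two known primes (insecure sizes)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    assert math.gcd(n, lam) == 1
    mu = pow(lam, -1, n)  # valid because the generator is g = n + 1
    return n, (n, lam, mu)


def encrypt(n, message):
    """Encrypt an integer 0 <= message < n under public key n."""
    n_sq = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    # (n + 1)^m mod n^2 simplifies to 1 + m*n
    return ((1 + message * n) * pow(r, n, n_sq)) % n_sq


def decrypt(private_key, ciphertext):
    n, lam, mu = private_key
    x = pow(ciphertext, lam, n * n)
    return ((x - 1) // n * mu) % n


def add_encrypted(n, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % (n * n)


public_key, private_key = keygen()
encrypted_total = add_encrypted(
    public_key, encrypt(public_key, 1200), encrypt(public_key, 345)
)
result = decrypt(private_key, encrypted_total)
```

The party calling `add_encrypted` needs only the public key and never sees 1200, 345, or their sum. Only the private key holder can decrypt the total.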

Modern MPC benchmarks show impressive progress. MP-SPDZ and similar frameworks achieve millions of gates per second on LAN environments, with newer protocols reaching over 1 billion 32-bit multiplications per second on 25 Gbit/s LAN connections. WAN performance remains lower, so deployment topology matters.

Practical considerations for MPC and FHE in agent deployments:

- Latency sensitivity: MPC adds round-trip overhead at every computation step. It is best suited to batch operations and asynchronous workflows, not real-time agent loops.

- Hardware requirements: FHE in particular benefits significantly from purpose-built accelerators. Budget for specialized infrastructure before committing to Level 4 encryption.

- Data life-cycle policies: even encrypted data has a life-cycle. Define retention, deletion, and re-encryption schedules for agent memory and state stores.

- Use case fit: federated learning, joint fraud detection, and cross-organization analytics are strong candidates for MPC deployment. Real-time conversational agents generally are not.

Explore secure protocols for distributed AI to see how these computation models integrate with agent communication architectures in practice.

What most AI teams misunderstand about secure data exchange

Here is the uncomfortable truth: most AI teams treat encryption as a checklist item rather than a system property. They configure TLS on their API endpoints, enable encryption at rest, and call the architecture secure. But security is not a feature you enable. It is a property you maintain across every layer, every channel, and every agent interaction.

The biggest blind spot is audit scope. Output-only audits miss 41.7% of privacy violations in multi-agent systems because they only examine final responses. The violations happen upstream, in the inter-agent messages, tool calls, and intermediate reasoning steps that never surface in final outputs. Safety-aligned models reduce leakage but do not eliminate it. Monitoring the output alone gives you false confidence.

The second misconception is that technology solves the problem. It does not. Technology enforces the policies you define. If your RBAC policies are too permissive, mTLS will not save you. If your audit logging does not cover internal agent channels, your SIEM will not catch the breach. Process and policy rigor are not optional additions to your security stack. They are the foundation.

A mature approach to agent security looks like this: define trust boundaries first, then select the framework and protocols that enforce them, and then audit every channel, not just the last one. The secure network infrastructure for AI agents you build is only as strong as the policies you apply to it and the completeness of your monitoring coverage.

Next steps: Accelerate secure agent data exchange with Pilot Protocol

Secure agent communication does not have to mean building complex custom infrastructure from scratch. Pilot Protocol is purpose-built for exactly this problem: enabling autonomous AI agents to communicate securely, directly, and across any cloud environment without centralized brokers or complex VPN configurations.

With Pilot Protocol, you get virtual addresses, encrypted tunnels, NAT traversal, and mutual trust establishment out of the box. Every agent connection uses peer-to-peer encryption with persistent identities, so your agents can find each other, verify each other, and exchange data securely whether they run on AWS, GCP, Azure, or on-premise. The platform wraps protocols like gRPC and HTTP inside its encrypted overlay, so you integrate with existing agent frameworks without rewriting communication logic. Start building with peer-to-peer agent data exchange and see how Pilot Protocol simplifies secure, scalable agent networking for real production workloads.

Frequently asked questions

What is the biggest risk when exchanging data between autonomous AI agents?

The primary risk is leakage through internal inter-agent channels. Multi-agent systems show 68.8% leakage rates through inter-agent messages, which is more than double single-agent output leakage and largely invisible to output-only audit tools.

Does end-to-end encryption alone fully secure AI agent data exchanges?

No. E2EE protects content in transit but does not cover metadata or endpoint security, both of which can expose interaction patterns, agent identities, and coordination structure in multi-agent deployments.

Which frameworks enable end-to-end secure agent communication?

AgentCrypt’s four-level framework covers everything from basic policy-based encrypted retrieval to fully homomorphic encryption, making it a strong reference architecture for matching security level to workload sensitivity in agent systems.

How are keys and credentials managed securely in multi-cloud AI deployments?

Cross-cloud KMS and HSM solutions, combined with DLP and token exchange gateways, handle key management securely. Together with automatic rotation and short-lived tokens, they reduce reliance on static credentials and limit exposure across cloud boundaries.