Encryption protocols for secure AI systems: A practical guide

TL;DR:

- Standard TLS and AES prevent data interception during transit and at rest, but they do not secure active AI computations across distributed nodes.

- Homomorphic encryption, zero-knowledge proofs, TEEs, and post-quantum cryptography address compute-in-use threats, each protecting different data states and surfaces within decentralized AI systems.

- Effective security requires layered protocols tailored to specific risks, continuous threat modeling, and avoiding architectural oversights that compromise overall system integrity.

Standard TLS and AES encryption will protect data in transit and at rest, but they do not address what happens when your AI agents are actively computing across distributed nodes, cloud boundaries, and untrusted peers. Homomorphic encryption, ZKPs, and TEEs cover the compute-in-use scenarios in decentralized AI that transport and storage encryption cannot reach. If you are building or operating distributed AI systems that span multiple clouds or orchestrate autonomous agent fleets, this guide breaks down which encryption protocols apply to which threat surfaces, how they perform under real workloads, and how to implement them correctly.

Table of Contents

- Key challenges in encrypting decentralized AI systems

- Protocol overview: Advanced encryption technologies for AI systems

- Implementation and performance benchmarks

- Best practices and pitfalls for secure AI networking

- Perspective: Why most encryption strategies for AI fall short (and how to improve them)

- Ready to implement secure encryption in your AI agents?

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Beyond basic encryption | Decentralized AI security demands protocols that protect data during computation as well as in transit and at rest. |
| Protocol agility is critical | Regularly update and audit PQC-readiness and encryption libraries to stay secure against evolving threats. |
| Benchmark and optimize | Test protocol and hardware combinations to balance performance with security in distributed AI workloads. |
| System-wide integration | Encryption must be matched to the overall system architecture for real protection, not just layered on top. |

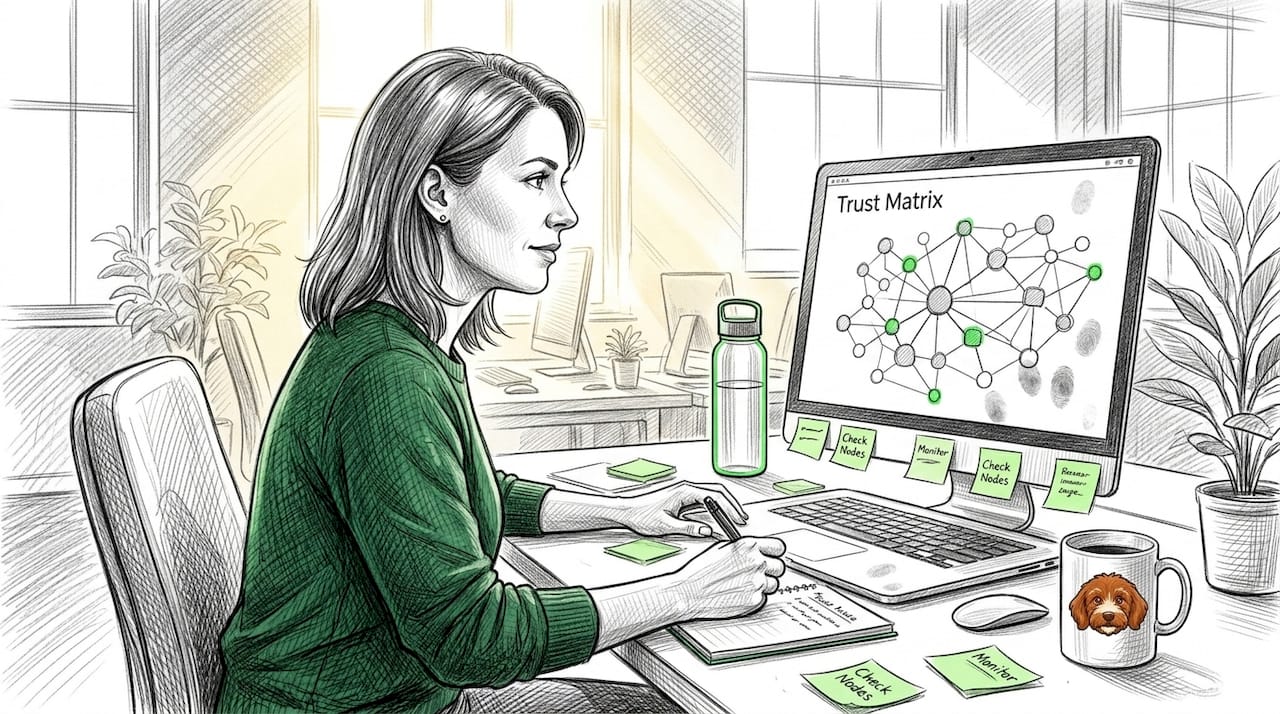

Key challenges in encrypting decentralized AI systems

Decentralized AI differs from conventional deployments in one basic way. In a centralized system, you control the perimeter. In a decentralized network, your attack surface expands with every node, region, and cloud provider you add.

The threat model shifts fundamentally. You are no longer just protecting packets moving between two endpoints. You are protecting:

- Data in use: Gradients, activations, and intermediate model states that exist only during computation

- Model integrity: Ensuring that aggregated updates from distributed clients are not poisoned or tampered with

- Multi-cloud exposure: Data moving between AWS, GCP, and Azure may cross jurisdictions and untrusted network segments

- Agent identity: Verifying that the agent sending a gradient update is the agent it claims to be

Byzantine faults add another layer of difficulty. These occur when nodes behave arbitrarily or maliciously rather than simply failing. Two mechanisms address them in federated learning environments: robust aggregation rules such as coordinate-wise median limit the influence of malicious updates, and threshold decryption schemes let aggregation complete even when some clients drop out. Without these mechanisms, a small number of compromised nodes can corrupt the entire training process.
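As a minimal sketch of the coordinate-wise median mentioned above, the following assumes each client update is a plain list of floats of equal length. The function name and the sample values are illustrative, not taken from any specific federated learning framework.

```python
from statistics import median


def coordinate_wise_median(updates):
    """Aggregate client updates by taking the median of each coordinate."""
    if not updates:
        raise ValueError("need at least one client update")
    dim = len(updates[0])
    if any(len(u) != dim for u in updates):
        raise ValueError("all updates must have the same dimension")
    # zip(*updates) groups the i-th coordinate of every client together.
    return [median(coord) for coord in zip(*updates)]


# Three honest clients and one Byzantine client sending extreme values.
honest = [[0.10, -0.20], [0.12, -0.18], [0.09, -0.22]]
poisoned = [[1000.0, 1000.0]]

aggregate = coordinate_wise_median(honest + poisoned)
naive_mean = [sum(c) / len(c) for c in zip(*(honest + poisoned))]
print("median:", aggregate)
print("mean:  ", naive_mean)
```

The median stays within the range of the honest values, while the plain mean is dragged far away by the single poisoned update. The median only tolerates a minority of malicious clients per coordinate, so it complements, rather than replaces, identity checks and encryption.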

Understanding decentralized communication protocols helps clarify why these fault types matter so much. Federated learning and secure P2P for distributed AI introduce collusion risks, where multiple coordinated nodes attempt to reconstruct private data from partial updates.

Pro Tip: Map your threat model to your data states before choosing a protocol. If you are only encrypting transit, you are leaving compute-in-use completely unprotected in distributed training runs.
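One way to make that mapping concrete is a small inventory that lists, per asset, which protection covers each data state and flags any state left empty. This is only a sketch: the `Asset` class, the state names, and the example entries are hypothetical.

```python
from dataclasses import dataclass, field

# The three data states discussed in this guide.
DATA_STATES = ("in_transit", "at_rest", "in_use")


@dataclass
class Asset:
    name: str
    # Maps a data state to the protocol protecting it, e.g. "in_transit" -> "TLS 1.3".
    protections: dict = field(default_factory=dict)


def unprotected_states(asset):
    """Return the data states for which the asset has no protection assigned."""
    return [state for state in DATA_STATES if not asset.protections.get(state)]


inventory = [
    Asset("gradient_updates", {"in_transit": "TLS 1.3", "at_rest": "AES-256"}),
    Asset(
        "model_weights",
        {"in_transit": "TLS 1.3", "at_rest": "AES-256", "in_use": "TEE"},
    ),
]

gaps = {a.name: unprotected_states(a) for a in inventory}
for name, missing in gaps.items():
    print(f"{name}: {'no gaps' if not missing else 'unprotected ' + ', '.join(missing)}")
```

Running a check like this in CI turns the threat-model mapping into something that fails loudly when a new asset ships without in-use protection.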

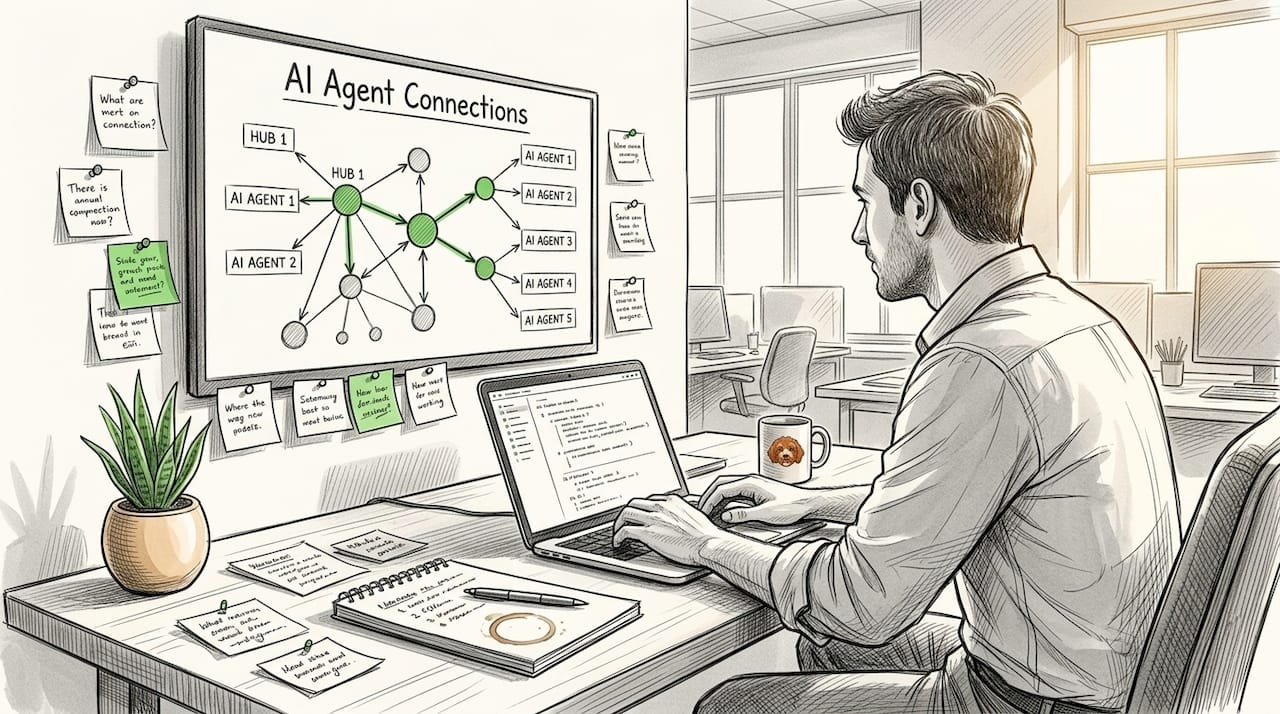

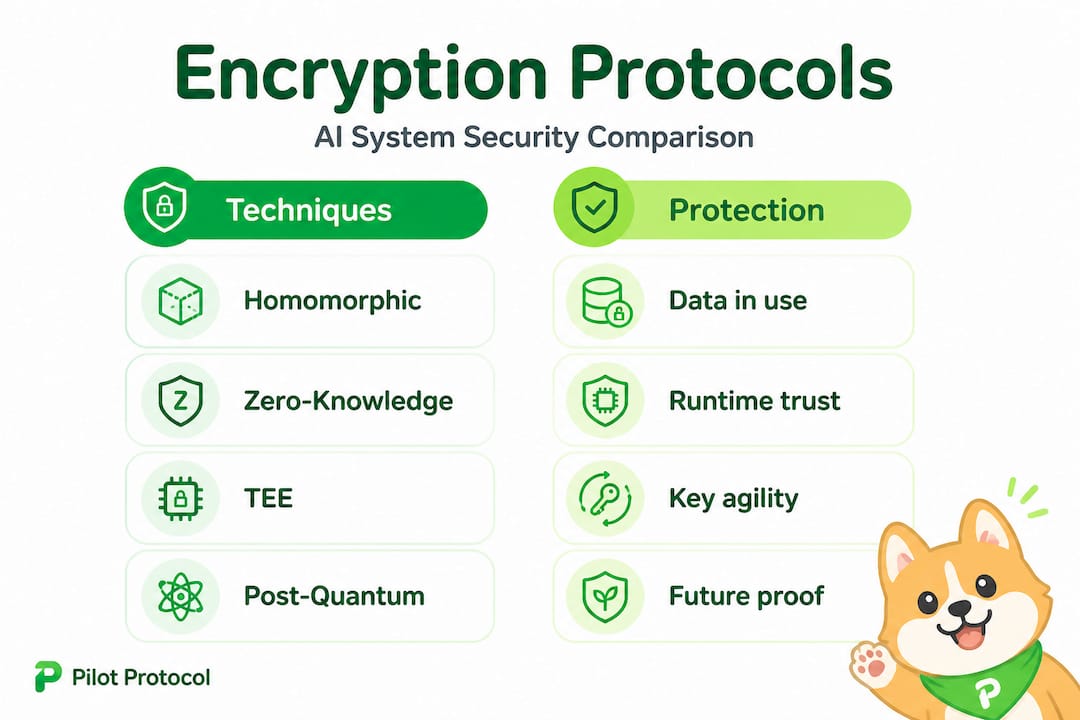

Protocol overview: Advanced encryption technologies for AI systems

With these risks in mind, let’s break down the specific encryption techniques leading-edge AI systems employ. Each protocol targets a different vulnerability surface, and selecting the right combination requires knowing what each one actually does under the hood.

Homomorphic encryption (HE) allows computation directly on ciphertext. The result, when decrypted, matches what you would get from running the same computation on plaintext. For federated learning, this means gradient updates never need to be decrypted on the aggregation server. ChainML uses HE and zk-SNARKs for privacy-preserving gradient verification and Byzantine-resilient aggregation in decentralized AI training, demonstrating that these are production-viable techniques, not just academic proposals.
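To illustrate the idea of aggregating without decrypting, here is a toy sketch using the Paillier cryptosystem, an additively homomorphic scheme. It is a swapped-in illustration, not the BGV or CKKS schemes discussed later and not ChainML's implementation. The primes are far too small for real use, and the fixed-point `SCALE` is an assumption for encoding float gradients as integers.

```python
import math
import secrets

# Toy primes (Mersenne primes 2^31-1 and 2^61-1). Real keys need primes
# of 1024 bits or more, generated by a vetted library.
P, Q = 2**31 - 1, 2**61 - 1


def keygen(p=P, q=Q):
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # valid because the generator is g = n + 1
    return n, (lam, mu)


def encrypt(n, m):
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    # c = (1 + n)^m * r^n mod n^2; negative m is encoded as m mod n.
    return (pow(n + 1, m % n, n2) * pow(r, n, n2)) % n2


def add(n, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % (n * n)


def decrypt(n, priv, c):
    lam, mu = priv
    u = pow(c, lam, n * n)
    m = ((u - 1) // n) * mu % n
    return m - n if m > n // 2 else m  # map back to a signed integer


SCALE = 1000  # fixed-point scaling for float gradients (assumption)
n, priv = keygen()
client_grads = [0.25, -0.5, 0.125]
ciphertexts = [encrypt(n, round(g * SCALE)) for g in client_grads]

total = ciphertexts[0]
for c in ciphertexts[1:]:
    total = add(n, total, c)  # the aggregator never sees a plaintext

aggregate = decrypt(n, priv, total) / SCALE
print(aggregate)
```

The aggregator only ever multiplies ciphertexts; whoever holds the private key decrypts the sum. In a real deployment that key would be split across parties, for example with the threshold decryption mentioned earlier, so that no single server can decrypt individual updates.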

Zero-knowledge proofs (ZKPs) let one party prove knowledge of a value without revealing the value itself. In AI contexts, ZKPs verify model integrity, prove that a gradient update was computed honestly, or confirm that a client’s local dataset meets certain properties without exposing the data. This is critical when you need to audit distributed computation without centralizing sensitive information.
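The "prove knowledge without revealing" idea can be sketched with a Schnorr proof made non-interactive through the Fiat-Shamir transform. This is a much simpler construction than the zk-SNARKs used for gradient verification, and the group parameters below are toy values chosen for readability, not security.

```python
import hashlib
import secrets

# Toy group: P = 2*Q + 1 with P and Q prime, G generates the order-Q subgroup.
# Real systems use standardized groups or elliptic curves.
P, Q, G = 1019, 509, 4


def _challenge(y, t):
    """Fiat-Shamir: derive the challenge by hashing the public transcript."""
    digest = hashlib.sha256(f"{G}|{y}|{t}".encode()).digest()
    return int.from_bytes(digest, "big") % Q


def prove(x):
    """Prove knowledge of x such that y = G^x mod P, without revealing x."""
    y = pow(G, x, P)
    r = secrets.randbelow(Q - 1) + 1  # one-time nonce, never reused
    t = pow(G, r, P)                  # commitment
    c = _challenge(y, t)
    s = (r + c * x) % Q               # response
    return y, (t, s)


def verify(y, proof):
    t, s = proof
    c = _challenge(y, t)
    # G^s == t * y^c holds exactly when s was built from the real x.
    return pow(G, s, P) == (t * pow(y, c, P)) % P


secret = 123
public, proof = prove(secret)
print(verify(public, proof))
```

The verifier learns that the prover knows the secret exponent behind `public` but never sees `secret` itself. Proving that a gradient was computed honestly requires encoding the whole computation as a circuit, which is where the prover-side cost discussed later comes from.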

Confidential computing uses trusted execution environments (TEEs) such as Intel SGX, AMD SEV-SNP, and Intel TDX to create hardware-enforced enclaves. Code and data inside an enclave are protected even from the host OS and hypervisor. This directly addresses cloud provider trust assumptions that most teams quietly ignore.

Post-quantum cryptography (PQC) prepares your protocols for the eventual capability of quantum computers to break current asymmetric schemes. NIST has finalized ML-KEM as FIPS 203, and it should be integrated with cryptographic agility in mind, meaning your system can swap algorithms without full protocol rewrites.
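Cryptographic agility is mostly a design pattern: every protected message carries an algorithm identifier, and the code resolves that identifier through a registry instead of hard-coding one primitive. The sketch below shows the pattern with HMAC variants from the Python standard library because they are available everywhere. The registry names and the `PREFERRED` and `ACCEPTED` policy are hypothetical, and a real PQC migration would register an ML-KEM implementation from a vetted library in the same way.

```python
import hashlib
import hmac

# Hypothetical registry: algorithm id -> hash name used for the HMAC.
MAC_ALGORITHMS = {
    "hmac-sha256": "sha256",
    "hmac-sha512": "sha512",
}
PREFERRED = "hmac-sha512"                  # used for all newly created tags
ACCEPTED = {"hmac-sha256", "hmac-sha512"}  # still accepted on verification


def tag(key, message, alg=PREFERRED):
    """Return (algorithm id, MAC) so the verifier knows which primitive to use."""
    mac = hmac.new(key, message, MAC_ALGORITHMS[alg]).digest()
    return alg, mac


def verify(key, message, alg, mac, accepted=ACCEPTED):
    if alg not in MAC_ALGORITHMS or alg not in accepted:
        return False  # unknown or retired algorithm
    expected = hmac.new(key, message, MAC_ALGORITHMS[alg]).digest()
    return hmac.compare_digest(expected, mac)


key = b"demo-key-not-for-production"
msg = b"gradient-update-0001"

old_alg, old_mac = tag(key, msg, alg="hmac-sha256")
new_alg, new_mac = tag(key, msg)

print(verify(key, msg, old_alg, old_mac))                            # accepted today
print(verify(key, msg, old_alg, old_mac, accepted={"hmac-sha512"}))  # after retirement
```

Retiring an algorithm becomes a policy change to `ACCEPTED` rather than a protocol rewrite, which is the property you want in place before a PQC migration becomes urgent.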

Here is a comparison of these four approaches:

| Protocol | Protects | Overhead | Best for AI use case |
|---|---|---|---|
| Homomorphic encryption | Data in use | High (10x to 1000x) | Federated learning gradients |
| Zero-knowledge proofs | Computation integrity | Medium (prover-heavy) | Model verification, auditing |
| Confidential computing (TEEs) | Runtime environment | Low (3 to 7%) | Inference, multi-party compute |
| Post-quantum cryptography | Key exchange, signatures | Low to medium | Long-lived key infrastructure |

You can explore secure communication protocols for distributed AI to see how these map to real networking layers. For a broader view of AI networking best practices including key rotation and trust establishment, additional context is available from resources covering cybersecurity in AI risk management.

The key insight is that no single protocol covers all surfaces. You need HE for confidentiality of data in use, ZKPs for computation integrity, TEEs for runtime protection, PQC for key infrastructure, and TLS for transit. Skipping any layer creates exploitable gaps.

Implementation and performance benchmarks

Knowing the cryptographic options, the next step is understanding how they perform in real deployments and what to expect in real-world workflows. Performance data drives architectural decisions at scale, and the numbers here may surprise you.

HE for federated learning preserves privacy during model updates but introduces significant computation costs. The overhead varies dramatically by scheme and implementation. Partially homomorphic schemes are much faster but support only one operation type. Fully homomorphic schemes are slower but support arbitrary computation.

Library selection matters significantly. OpenFHE outperforms Microsoft SEAL in execution time and memory usage for BGV and CKKS schemes in empirical testing. If you are starting a new HE integration, benchmark both libraries against your specific gradient dimensions and batch sizes before committing.
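A benchmark of this kind does not need much machinery. The sketch below is a generic timing harness, not an OpenFHE or SEAL benchmark: `stand_in_protected_sum` is a hypothetical placeholder that burns CPU with modular exponentiation, and you would swap in calls to whichever library you are evaluating.

```python
import time
from statistics import median


def median_runtime(fn, *args, repeats=5):
    """Median wall-clock seconds for fn(*args) over several runs."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        samples.append(time.perf_counter() - start)
    return median(samples)


def overhead_ratio(baseline, protected, *args, repeats=5):
    base = median_runtime(baseline, *args, repeats=repeats)
    prot = median_runtime(protected, *args, repeats=repeats)
    return prot / max(base, 1e-9)  # guard against a zero-duration baseline


def plain_sum(grads):
    return sum(grads)


MODULUS = (2**61 - 1) ** 2


def stand_in_protected_sum(grads):
    # Placeholder workload: one modular exponentiation per gradient entry.
    acc = 1
    for g in grads:
        acc = (acc * pow(3, g, MODULUS)) % MODULUS
    return acc


gradients = list(range(1, 2001))  # replace with your real gradient dimensions
ratio = overhead_ratio(plain_sum, stand_in_protected_sum, gradients)
print(f"protected path is about {ratio:.0f}x the baseline on this machine")
```

Using the median over several runs reduces the effect of warm-up and scheduling noise. The resulting ratio is only meaningful for the hardware, batch size, and gradient dimension you actually measured.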

TEEs with Intel TDX, SGX, and AMD SEV-SNP show a 3 to 7% latency overhead in AI inference workloads. That is an acceptable trade-off for most production systems and dramatically better than software-only alternatives. TEEs are currently the most practical path to confidential inference at scale.

| Protocol | Latency overhead | Memory impact | Hardware acceleration |
|---|---|---|---|
| BGV/CKKS (HE) | 10x to 100x | High | GPU/FPGA viable |
| zk-SNARKs (ZKPs) | 5x to 50x (prover) | Medium | ASIC accelerators emerging |
| TEE (Intel TDX) | 3 to 7% | Low | Native CPU support |
| PQC (ML-KEM) | Under 5% | Very low | Software sufficient |

“Encryption adds 5 to 10% latency in real benchmarks of encrypted inference; FHE remains viable for small models with hardware acceleration, and confidential AI workloads are production-ready today.”

Understanding encrypted tunnels in AI networks helps contextualize where these overheads appear in the overall system. For a checklist-based approach to securing your entire stack, the AI network security checklist covers both protocol selection and operational concerns.

Pro Tip: Use CKKS for approximate arithmetic on real-valued data like gradients. It trades exact precision for significantly faster performance, which is acceptable in most ML training contexts where gradient noise is already present.

The engineering decision framework comes down to this: start with TEEs for runtime protection because the overhead is minimal and the security gain is immediate. Add HE or ZKPs selectively for specific high-risk computations. Build PQC into your key exchange layer now, before migration becomes urgent.

Best practices and pitfalls for secure AI networking

To go from theory to robust deployment, it is critical to follow proven best practices and avoid the traps that often stymie even experienced engineers. The most common failure is not picking the wrong algorithm. It is applying the right algorithm in the wrong place.

Here are the core practices to follow:

- Layer your encryption by data state. In-transit encryption (TLS 1.3), at-rest encryption (AES-256), and in-use encryption (HE/TEEs) must all be active simultaneously. Each layer addresses a distinct attack vector. Leaving any layer out creates a gap that adversaries will find.

- Audit PQC readiness on a fixed schedule. Cryptographic agility means your system can rotate algorithms without full rewrites. Securing multi-cloud AI networks requires planning for algorithm rotation across AWS KMS, Azure Key Vault, and GCP Cloud KMS simultaneously. Put this on a quarterly review cycle.

- Benchmark before you scale. Overhead numbers change significantly based on your specific hardware, batch sizes, and network topology. Run your own benchmarks against your actual model dimensions before committing to a library or approach. The 5 to 10% latency range cited in literature is a baseline, not a guarantee.

- Automate key rotation and revocation. Manual key management breaks down at scale. Build key rotation into your CI/CD pipeline and treat key expiry events like deployment events. Expired or reused keys are among the most common real-world failures in distributed AI security.

- Validate agent identity at the protocol level. Do not rely only on API keys or IP allowlists. Use mutual TLS (mTLS) and cryptographic identity proofs so that each agent in your fleet is verifiable by other agents, not just by a central server. AI communication tunnels built on overlay networks provide persistent, verifiable addresses that simplify this considerably.

- Threat model continuously, not once. Your threat model from six months ago is outdated. New agents, new cloud integrations, and new attack techniques emerge constantly. Treat threat modeling as an ongoing engineering activity, not a one-time architecture review.

Pro Tip: When integrating secure P2P for AI agents, verify that your NAT traversal and hole-punching mechanisms do not inadvertently expose plaintext metadata like agent identifiers or route paths. Even encrypted payloads can leak operational data through traffic analysis.
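The mutual TLS practice from the list above can be sketched with Python's standard `ssl` module. The function name and the file-path parameters are hypothetical; each agent would supply its own certificate, private key, and the CA bundle that signs peer agents.

```python
import ssl


def make_agent_server_context(cert_file=None, key_file=None, ca_file=None):
    """Build a TLS 1.3 server context that requires a client certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    # CERT_REQUIRED on the server side is what makes the handshake mutual:
    # a peer that presents no valid certificate is rejected.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if ca_file:
        ctx.load_verify_locations(cafile=ca_file)  # CA that signs peer agents
    if cert_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx


# Paths are omitted here so the sketch runs without certificate files.
ctx = make_agent_server_context()
print(ctx.verify_mode, ctx.minimum_version)
```

In a fleet, every agent acts as both client and server, so each one needs a context like this for inbound connections and a matching client context that presents its own certificate on outbound ones.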

The most overlooked pitfall is key sprawl in multi-cloud deployments. Each provider uses different key management abstractions, and teams often end up with inconsistent rotation schedules across regions. Consolidate key management into a single control plane and push provider-specific keys as derived keys from a central root.
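Deriving provider-specific keys from a central root can be sketched with HKDF (RFC 5869), implemented here with only the standard library. The label format and the provider and region names are assumptions, as is the premise that each provider's KMS is configured to accept imported key material. The random root stands in for a key held in an HSM.

```python
import hashlib
import hmac
import secrets

HASH_LEN = 32  # SHA-256 output size in bytes


def hkdf_sha256(root_key, info, length=32, salt=b""):
    """HKDF extract-then-expand (RFC 5869) using HMAC-SHA256."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested output is too long for HKDF")
    # Extract: condense the root key into a pseudorandom key.
    prk = hmac.new(salt or b"\x00" * HASH_LEN, root_key, hashlib.sha256).digest()
    # Expand: chain HMAC blocks, binding each to the context label `info`.
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


root = secrets.token_bytes(32)  # stand-in for a root key held in an HSM

# Hypothetical labels: one per provider, region, and key epoch.
labels = [b"aws-kms/us-east-1/v1", b"azure-kv/westeurope/v1", b"gcp-kms/asia-east1/v1"]
derived = {label: hkdf_sha256(root, label) for label in labels}

for label, key in derived.items():
    print(label.decode(), key.hex()[:16], "...")
```

Because every derived key is a deterministic function of the root and a label, rotating a provider key means bumping the version in its label, and the rotation schedule lives in one control plane instead of three.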

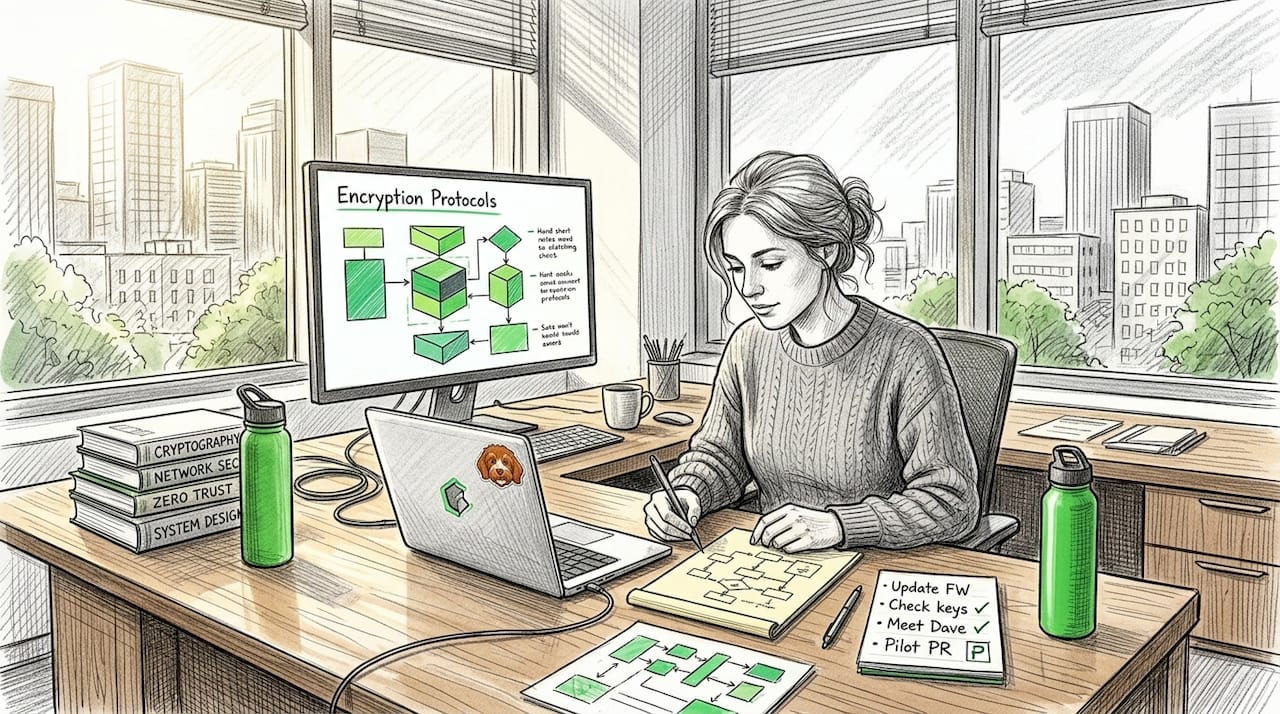

Perspective: Why most encryption strategies for AI fall short (and how to improve them)

Reflecting on these best practices and technical options, it is worth stepping back to consider why even well-resourced teams often come up short, and what actually makes a difference.

The honest answer is that most encryption failures in AI systems are not cryptographic failures. They are architectural failures. Teams add HE to a federated learning pipeline but forget that the aggregation server still receives decrypted weights post-training. They implement TEEs for inference but leave the model loading step outside the enclave. They adopt PQC for key exchange but continue using RSA-2048 for signing certificates. The math is sound. The integration is not.

Privacy technologies for AI are computationally intensive in their raw forms, but optimized approaches using lazy relinearization and GPU acceleration make them practical. ZKPs are succinct for verifiers but remain prover-heavy. Knowing this, smart teams optimize the prover path aggressively and accept higher prover costs in exchange for fast verification at the aggregation layer. Most teams do not make this distinction explicitly, and they end up with systems that are either too slow to be useful or too permissive to be secure.

The second major gap is treating encryption as a static checklist rather than a living capability. A cipher suite that was strong in 2023 may be deprecated by 2027. Protocol agility, the ability to swap algorithms without full rewrites, is not optional in long-lived AI systems. It is a first-class engineering requirement that needs to be built into your architecture from day one.

The third gap is threat modeling that stops at the network boundary. P2P AI architectures introduce trust relationships between agents that have no central authority to arbitrate disputes. If you do not model collusion risks, gradient poisoning, and identity spoofing at the agent level, your network-level encryption does not protect you where it matters most.

The fix is straightforward in principle: start with a complete data-state inventory, assign the correct protocol to each state, build protocol agility into your key infrastructure from the beginning, and treat threat modeling as a recurring engineering event rather than a pre-launch ritual.

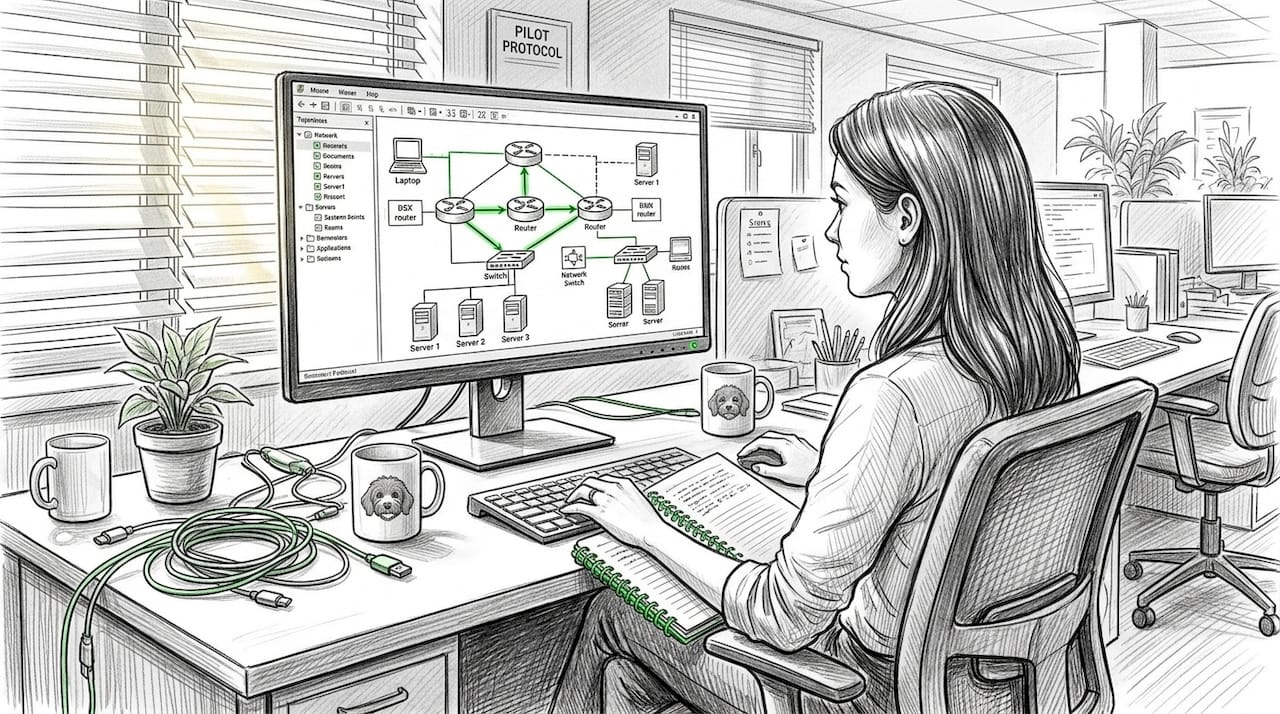

Ready to implement secure encryption in your AI agents?

You now have a clear picture of which encryption protocols apply to which threat surfaces, how they perform, and where teams go wrong. Putting this into practice requires an infrastructure layer that supports encrypted, direct, and verifiable agent communication without adding unnecessary complexity to your architecture.

Pilot Protocol is built specifically for this use case. It provides encrypted tunnels, NAT traversal, mutual trust establishment, and persistent virtual addresses for AI agents and distributed systems communicating across multi-cloud and cross-region environments. You can wrap your existing HTTP, gRPC, and SSH protocols inside the Pilot Protocol overlay without rewriting your agents. Explore the autonomous agent network infrastructure that underpins the platform, or get started with direct P2P for AI agents to see how encrypted peer-to-peer connectivity works in practice.

Frequently asked questions

What is the main advantage of homomorphic encryption in AI systems?

Homomorphic encryption enables AI computations on encrypted data, so model updates and gradients remain private throughout federated learning without compromising accuracy.

How do TEEs differ from software-based encryption?

TEEs enforce hardware-level boundaries around AI workloads, protecting them from the host OS and hypervisor with only 3 to 7% latency overhead, far less than software-only alternatives.

Is post-quantum cryptography necessary today for AI protocols?

Yes. NIST ML-KEM and related standards are available now, and integrating PQC with cryptographic agility today avoids costly migrations as quantum threats mature.

How much latency do advanced encryption protocols add to AI workloads?

TEE-based approaches add roughly 3 to 7% latency, and the encrypted-inference benchmarks cited above report 5 to 10%. FHE can add much more depending on model size and hardware acceleration availability.

What is the most common integration mistake with AI encryption?

The most common mistake is adding new cipher suites without revisiting system architecture and threat modeling, which leaves critical gaps in data-in-use protection even when transit and rest encryption are correctly implemented.
