Why secure direct P2P connections matter for AI agents

Why secure direct P2P connections matter for AI agents

TL;DR:

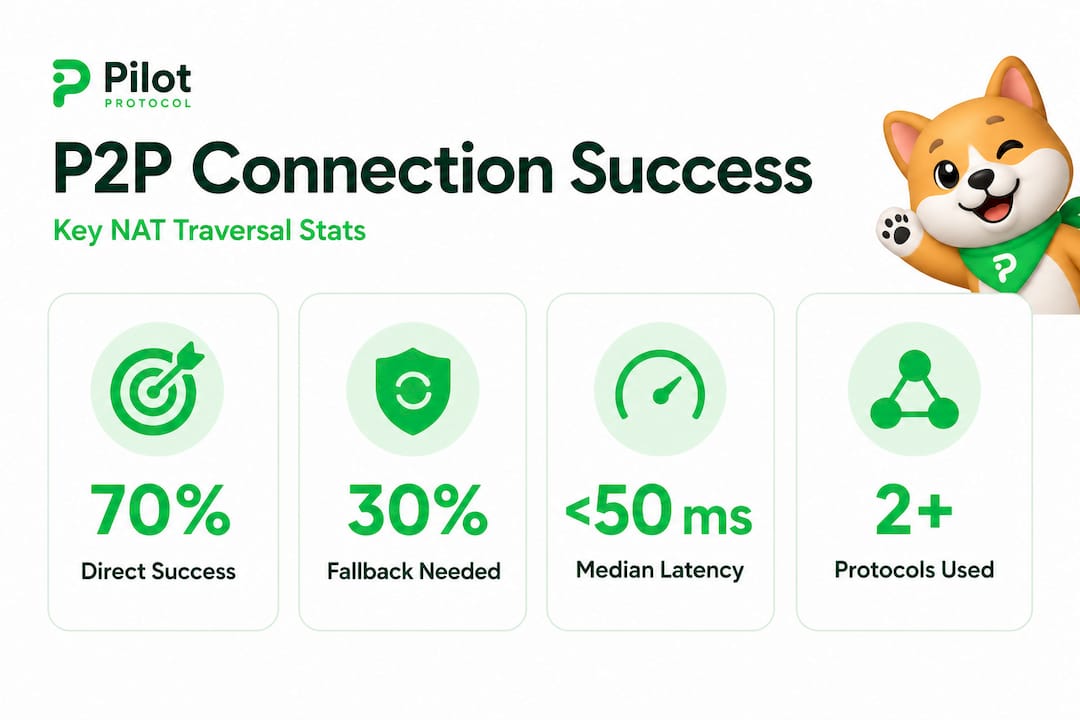

- NAT traversal techniques achieve approximately 70% success in establishing direct peer-to-peer connections for AI systems.

- Direct, encrypted connections reduce latency, eliminate single points of failure, and enhance data security.

- Building resilient systems involves implementing fallback relays, monitoring connection quality, and planning for NAT limitations.

Most engineers assume that firewalls and NATs (Network Address Translators) make direct peer-to-peer connections unreliable at scale. The reality is different. NAT traversal succeeds roughly 70% of the time for decentralized protocols, even across complex network boundaries. That number surprises many teams who have spent years routing AI agent traffic through centralized brokers and message queues. If you are building distributed AI systems, understanding how secure direct connections work, where they succeed, and how to handle the cases where they don’t, is foundational to designing agents that are fast, private, and resilient.

Table of Contents

- The challenge: NAT, firewalls, and direct peer-to-peer

- Why secure direct connections are vital for distributed AI systems

- How direct connections are achieved: Protocols, NAT traversal, and success rates

- Nuances and edge cases: What blocks direct communication?

- Real-world application: Building resilient, distributed AI with secure direct connections

- The reality behind secure direct connections: What most engineers overlook

- Take the next step: Building secure direct peer-to-peer with Pilot Protocol

- Frequently asked questions

Key Takeaways

| Point | Details |

|---|---|

| Most connections succeed | About 70% of NAT traversal attempts achieve direct secure communication for AI agents. |

| Edge cases persist | Symmetric NATs and firewalls block about 30% of attempts, needing smart workarounds. |

| Security remains foundational | Direct connections offer lower latency, stronger privacy, and compliance for distributed AI systems. |

| Resilience over perfection | Best practice is to build adaptive systems that thrive even when direct connections fail. |

The challenge: NAT, firewalls, and direct peer-to-peer

Building on that surprising 70% connection success, let’s examine what makes these connections so challenging and why many engineers still assume they’re impractical.

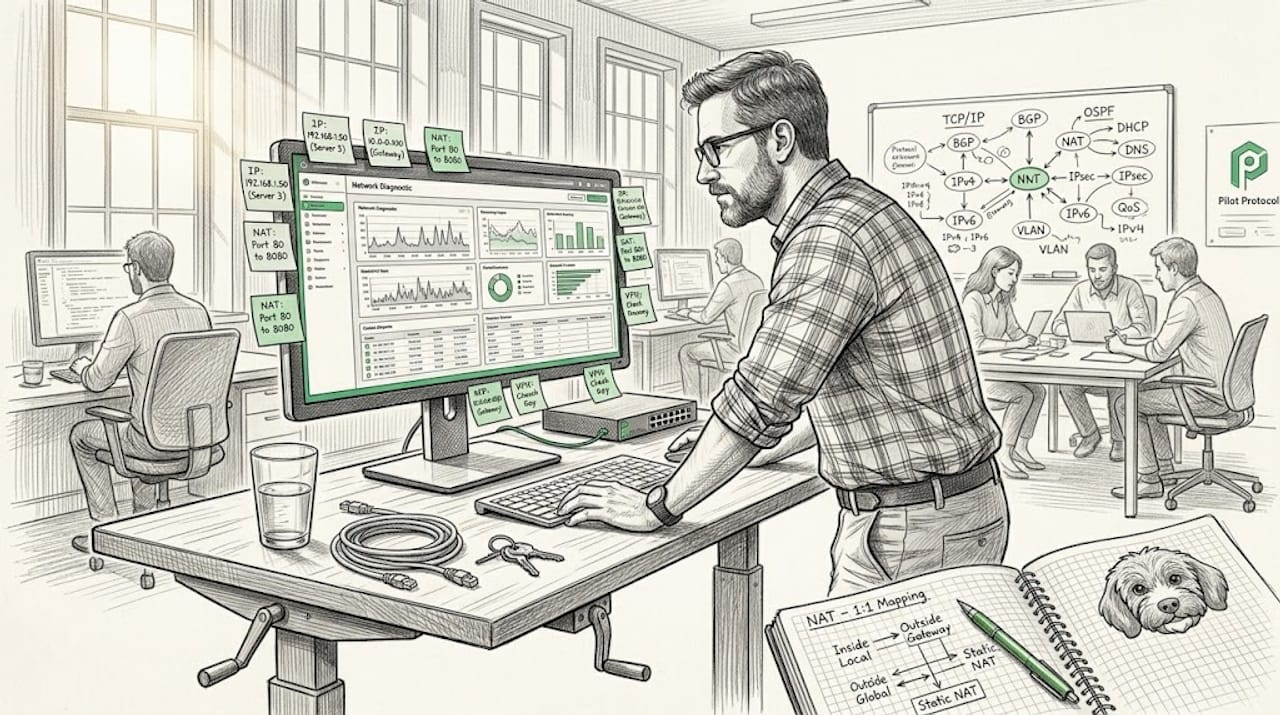

NAT and firewall traversal have long been considered the primary obstacles to reliable peer-to-peer networking. When an AI agent sits behind a NAT device, its private IP address is invisible to the outside world. Any inbound connection attempt from a remote agent gets dropped unless the NAT has already seen outbound traffic from that endpoint.

The barriers stack up quickly:

- Full-cone NATs are the most permissive. Once a mapping is created, any external host can send packets to the mapped port. These are easy to traverse.

- Restricted-cone and port-restricted NATs allow inbound packets only from hosts and ports that the internal device has previously contacted. Traversal requires coordination but is achievable.

- Symmetric NATs are the hardest. They assign a unique external port for every destination address and port combination. Predicting the port mapping is nearly impossible, which blocks most standard hole-punching techniques.

- Stateful firewalls add another layer. Policies vary widely across cloud providers, enterprise networks, and edge deployments, making it difficult to predict which techniques will work in any given environment.

“The widespread assumption that peer-to-peer is inherently unreliable for AI-scale systems is not supported by the data. With modern traversal techniques, direct connections succeed ~70% of the time across millions of real-world attempts.”

Despite this, many engineering teams default to centralized brokers because the failure cases are visible and painful. A single failed connection in a demo or a production incident gets remembered. The 70% of connections that succeed quietly never get celebrated. This perception gap leads to over-engineering around centralized infrastructure when a well-designed P2P layer would serve the system better.

For a deep dive on NAT traversal mechanics and how they apply specifically to AI agent networks, that resource covers the technical details in depth.

Why secure direct connections are vital for distributed AI systems

Now that the technical barriers are clear, let’s address why overcoming them through secure direct connections unlocks critical advantages in real-world AI deployments.

Centralized message brokers introduce latency, single points of failure, and data exposure risks. For AI agents that need to exchange model outputs, sensor readings, or task results in near real-time, those costs add up fast. Here is why direct, encrypted connections change the equation:

-

Lower latency. Every hop through a broker or relay adds round-trip time. For agents coordinating on time-sensitive tasks, direct connections reduce that overhead significantly. A connection that travels broker-to-broker across regions can add 50 to 200 milliseconds of unnecessary delay.

-

No centralized point of failure. If your message broker goes down, every agent in your fleet loses its communication channel. Direct P2P connections mean that two agents can continue exchanging data even if your central infrastructure is unreachable.

-

Reduced attack surface. When agent traffic passes through a central server, that server becomes a high-value target. A direct encrypted tunnel between two agents means the data never touches third-party infrastructure. The encrypted tunnel advantages for agent networks are well documented, covering both security and performance dimensions.

-

Data sovereignty and compliance. Regulations like GDPR and HIPAA place strict requirements on where data can travel and who can access it. Routing sensitive agent communications through a shared broker in an uncontrolled region can create compliance violations. Direct connections let you enforce data paths explicitly.

-

Scalability without bottlenecks. A centralized broker scales vertically until it doesn’t. Direct P2P connections scale horizontally by design. Each new agent pair creates its own channel without adding load to a shared component.

Pro Tip: When evaluating whether your agent architecture needs direct connections, measure the actual latency cost of your current broker path under load. Many teams discover that broker overhead accounts for 30 to 40 percent of total round-trip time, especially in cross-region deployments.

For teams exploring no-server agent communication patterns, this approach removes the broker entirely and lets agents discover and connect to each other directly using overlay addressing.

How direct connections are achieved: Protocols, NAT traversal, and success rates

To make the abstract tangible, let’s examine how these secure connections are created in practice and what the evidence reveals about their effectiveness.

The core techniques for NAT traversal rely on coordinating simultaneous connection attempts from both sides of a NAT boundary. The most widely used approach is UDP hole punching, where both peers send packets to each other at the same time, creating NAT mappings that allow subsequent packets to pass through.

Here is how the main techniques compare:

| Technique | NAT types supported | Latency overhead | Fallback required |

|---|---|---|---|

| UDP hole punching | Full-cone, restricted-cone | Very low | Rarely |

| TCP hole punching | Full-cone, restricted-cone | Low | Sometimes |

| TURN relay | All NAT types | Medium to high | Always for symmetric |

| RTT-optimized sync | Restricted-cone, some symmetric | Low | Sometimes |

The protocol mechanics behind these techniques involve a signaling channel (often a lightweight rendezvous server) that helps both peers exchange their external addresses and synchronize their connection attempts. The rendezvous server does not carry agent data. It only facilitates the initial handshake.

The 70% figure in context. Research across decentralized protocols shows a NAT traversal success rate of ~70% across millions of real connection attempts. That means roughly 7 in 10 agent pairs behind NATs can establish a direct connection without any relay. For large agent fleets, this is a significant operational win.

The remaining 30% require fallback strategies. Symmetric NATs and aggressive firewall policies block direct hole punching. In these cases, a TURN relay (Traversal Using Relays around NAT) carries the traffic, adding latency but maintaining connectivity.

For teams running zero-config agent deployment behind NATs, modern overlay networks handle the traversal logic automatically, so your agents don’t need custom networking code. And for environments where VPN overhead is unacceptable, you can connect without VPN using lightweight overlay tunnels that achieve similar security with lower overhead.

Nuances and edge cases: What blocks direct communication?

While high success rates are impressive, edge conditions do cause failures. Here’s how to recognize and resolve them.

The 30% failure rate in edge cases is not random. It clusters around specific network configurations that you can identify and plan for in advance.

| Failure cause | Frequency | Recommended workaround |

|---|---|---|

| Symmetric NAT | Most common | TURN relay or RTT-optimized sync |

| Carrier-grade NAT (CGNAT) | Common in mobile/ISP | Relay with persistent virtual address |

| Firewall blocks all UDP | Moderate | TCP fallback or HTTPS tunnel |

| Firewall blocks unsolicited inbound | Common in enterprise | Relay or outbound-only connection model |

| Port randomization | Less common | Coordinated retry with timing adjustment |

Symmetric NAT is the primary culprit in most failure scenarios. Unlike other NAT types, symmetric NATs assign a new external port for every unique destination. This makes it impossible for a remote peer to predict which port to target, which breaks standard UDP hole punching.

The expert-recommended workaround is RTT-optimized synchronization. Instead of relying on port prediction, this technique uses precise round-trip time measurements to synchronize the timing of connection attempts, improving the odds of punching through even restrictive NATs. It doesn’t solve every case, but it meaningfully reduces the failure rate for restricted-cone and some symmetric configurations.

Pro Tip: Before deploying agents into a new network environment, run a NAT type detection check. Tools like STUN (Session Traversal Utilities for NAT) can classify the NAT type in seconds and tell you upfront whether hole punching will work or whether you need to provision a relay path.

For teams evaluating protocol choices, the HTTP vs UDP overlay benchmark comparison shows concrete latency and throughput differences across NAT environments. And for a broader look at how these strategies fit into modern AI architectures, P2P solutions for AI architectures covers design patterns for distributed agent systems.

Real-world application: Building resilient, distributed AI with secure direct connections

Understanding both the power and limits of direct connections, let’s explore how you can architect robust distributed systems with them at the core.

The best distributed AI systems don’t treat NAT traversal as an all-or-nothing bet. They design for the 70% success case while building intelligent fallbacks for the 30% that fail. Here is how that looks in practice:

-

Layer your connection strategy. Attempt direct hole punching first. If that fails within a configurable timeout (typically 2 to 5 seconds), fall back to a relay. Log which path was taken so you can analyze your fleet’s direct connection rate over time.

-

Use persistent virtual addresses. Agents that change IP addresses (due to restarts, scaling events, or cloud migrations) need a stable identifier that survives network changes. Virtual overlay addresses decouple agent identity from physical IP, so connections can be re-established automatically after a network change.

-

Monitor connection quality continuously. A direct connection that degrades due to NAT mapping expiration or route changes can silently increase latency. Build health checks that measure actual round-trip times and trigger reconnection when thresholds are exceeded.

-

Plan relay capacity for the 30%. If your fleet has 1,000 agent pairs and 70% connect directly, you need relay capacity for roughly 300 pairs. Provision this in advance rather than discovering the gap during a scaling event.

-

Encrypt all paths equally. Whether a connection is direct or relayed, it should carry the same mutual TLS or noise protocol encryption. Don’t treat relay paths as inherently less sensitive. Relay traffic is just as exposed to interception as direct traffic if it’s unencrypted.

-

Test across cloud regions. NAT behavior varies significantly between AWS, GCP, Azure, and on-premise environments. A traversal strategy that works in one cloud may fail in another due to different default firewall policies. Run integration tests across all target environments before deploying agent fleets.

For teams building cloud P2P for AI across multiple providers, overlay networks that abstract the underlying transport make cross-cloud agent communication significantly simpler to operate and maintain.

The reality behind secure direct connections: What most engineers overlook

You’ve seen the data and strategies. Let’s step back and consider what really makes secure direct connections practical in production.

Most discussions about NAT traversal focus on maximizing the direct connection success rate. Get it above 70%. Tune the timing. Optimize the protocol. That’s useful work, but it misses the more important design question: what happens when a connection fails?

The teams that run the most reliable distributed AI systems are not the ones who achieved the highest hole-punching success rates. They are the ones who built the most intelligent fallback behavior. A system that succeeds 70% of the time with graceful, low-latency relay fallback for the other 30% outperforms a system that succeeds 85% of the time but hangs for 30 seconds on failure before giving up.

The 30% of cases blocked by symmetric NATs and firewalls are not a bug in your architecture. They are a feature of the network environment you operate in. Treating them as expected, planned-for scenarios rather than exceptional failures changes how you build. You provision relay capacity in advance. You make fallback paths fast. You monitor which agents are using which paths and optimize accordingly.

There is also a deeper point about trust. Secure direct connections are not just a performance optimization. They are a trust boundary. When two agents communicate directly over a mutually authenticated encrypted tunnel, you have a strong guarantee about who is on each end of the connection. When traffic passes through a shared relay or broker, that guarantee weakens unless the relay itself is part of your trust model.

The best practitioners design for redundancy and adaptive routing from day one. They don’t chase 100% direct connection rates. They build systems where every connection path, direct or relayed, carries the same security guarantees and where the system routes intelligently based on real-time conditions. For a holistic NAT traversal strategy that covers both the technical and architectural dimensions, that resource is worth reviewing before you finalize your agent communication design.

Take the next step: Building secure direct peer-to-peer with Pilot Protocol

Equipped with a clear understanding of NAT traversal, secure direct connections, and fallback strategies, here’s how you can put these principles into practice.

Pilot Protocol is built specifically for this problem. It handles NAT traversal, mutual trust establishment, encrypted tunnels, and intelligent relay fallback automatically, so your agents connect securely whether they’re in the same data center or across cloud regions.

With Pilot Protocol for P2P AI, you get persistent virtual addresses for every agent, automatic hole punching with relay fallback, and support for wrapping HTTP, gRPC, and SSH traffic inside the overlay. You don’t need to write custom NAT traversal code or manage relay infrastructure manually. The platform handles the edge cases so you can focus on building your agent logic. Start with the free tier, connect your first agents, and see direct P2P in action without any server setup required.

Frequently asked questions

What is the success rate of NAT traversal for AI agent P2P connections?

About 70% of NAT traversal attempts for decentralized protocols succeed, allowing direct connections even through challenging network setups including restricted-cone NATs and standard enterprise firewalls.

Why do some peer-to-peer connection attempts still fail?

Roughly 30% fail due to restrictive NAT types like symmetric NAT and firewalls that block all unsolicited inbound connections, making port prediction and hole punching ineffective without relay assistance.

What are effective strategies for handling failed direct connections?

Fallback solutions include TURN relay servers, adaptive protocol switching between UDP and TCP, and RTT-optimized synchronization to improve timing accuracy for connection attempts through restrictive NATs.

Is secure direct connection necessary if encryption is used?

Yes. Even with encryption, direct connections reduce the number of infrastructure components that handle your data, shrink the attack surface, and make it easier to enforce data sovereignty requirements for compliance.

Recommended

- Direct Peer-to-Peer for AI Agents — Pilot Protocol

- Top encrypted tunnel advantages for P2P AI networks

- Network security checklist for decentralized P2P AI systems

- Cloud networking: Secure peer-to-peer for distributed AI

- AI Operating System for Humans: Automate with AI Agents

- How AI transforms security reviews: efficiency, accuracy, challenges