Cloud networking: Secure peer-to-peer for distributed AI

TL;DR:

- Centralization is increasing in decentralized networks, with cloud nodes hosting over 87% of IPFS data.

- Hybrid cloud and P2P architectures combine control, resilience, and scalability for distributed AI systems.

- Effective networking means handling NAT traversal and encryption, and monitoring centralization, so that connections stay secure and reliable.

Many AI developers assume that choosing a decentralized protocol automatically delivers resilience and security. The reality is more complicated. Cloud nodes now host 87.33% of IPFS data, a shift that shows how centralization quietly creeps into systems designed to resist it. NAT traversal failures, IP collisions, and protocol mismatches add more friction than most teams anticipate. This article breaks down the core networking concepts, the protocols that matter, the real-world challenges you will face, and the hybrid strategies that experienced teams use to build secure, scalable distributed AI systems.

Table of Contents

- Core cloud and peer-to-peer networking concepts

- Protocols powering secure peer-to-peer communication

- Real-world networking challenges: NAT traversal, centralization, and cloud trade-offs

- Hybrid cloud–P2P strategies: Best practices for distributed AI systems

- The uncomfortable truth: Decentralization isn’t automatic—what experts really do

- Build secure, scalable networking for AI agents—start here

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Decentralization is nuanced | Most P2P protocols face real centralization and operational challenges, requiring careful monitoring and design. |
| Hybrid networking is optimal | Combining cloud VPCs with decentralized overlays achieves resilience, speed, and security for AI systems. |
| NAT traversal remains a hurdle | Direct peer connections fail roughly 30% of the time, so fallback mechanisms like relays are essential. |
| Multipath and path validation | Protocols such as SCION boost speed and guard against hijacks using multipath transport and cryptographic validation. |
| Chunking strategies matter | Dedup-optimized chunking improves IPFS storage efficiency and reduces redundancy compared to fixed-size chunking. |

Core cloud and peer-to-peer networking concepts

Now that you’ve seen how centralization is quietly reshaping decentralized networks, let’s break down what makes cloud and peer-to-peer fundamentally different.

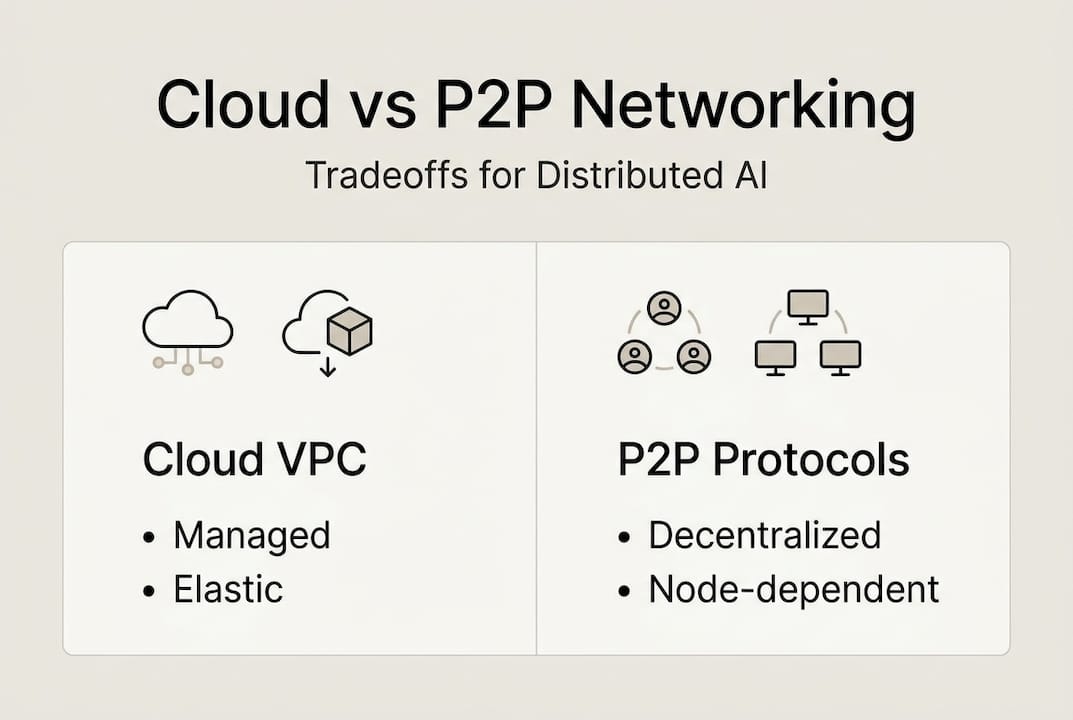

Cloud networking centers on managed infrastructure. Virtual Private Clouds (VPCs) give you isolated, software-defined networks with fine-grained access controls, predictable latency, and built-in redundancy. The tradeoff is real: centralized cloud networking offers scalability and strong security guarantees while introducing provider lock-in and single points of failure (SPOF). If your cloud provider has an outage, your agents go dark.

Peer-to-peer (P2P) overlays like libp2p and IPFS take a different approach. Nodes discover each other, route around failures, and share data without a central coordinator. This design promises censorship resistance and resilience. However, as we’ve seen, the promise doesn’t always match reality in production deployments.

Key features and drawbacks at a glance:

- Cloud VPC: Managed security groups, auto-scaling, SLA-backed uptime, but vendor lock-in and SPOF risk

- libp2p/IPFS: Permissionless, censorship-resistant, no central server, but centralization drift and NAT traversal complexity

- Hybrid: Combines control-plane reliability with P2P resilience, but adds architectural complexity

For teams building multi-cloud networking across regions, neither pure approach is sufficient on its own.

| Feature | Cloud VPC | libp2p/IPFS |
|---|---|---|
| Scalability | Managed, elastic | Manual, node-dependent |
| Security | Centralized policy enforcement | Cryptographic, peer-validated |
| Resilience | Provider-dependent | Theoretically distributed |
| Lock-in risk | High | Low |
| Centralization risk | Inherent | Growing (87.33% of data on cloud nodes) |
| NAT traversal | Handled by provider | Requires DCUtR or relays |

When you evaluate P2P solutions for AI architectures, work through these tradeoffs before you commit to a stack. Switching protocols mid-deployment is expensive and disruptive.

Protocols powering secure peer-to-peer communication

With the core concepts defined, it’s crucial to understand the specific protocols your distributed systems rely on for secure and efficient communication.

libp2p is the modular networking stack used by IPFS, Ethereum, and many AI agent frameworks. It handles peer discovery, connection multiplexing, and encryption. Its Noise handshake provides authenticated key exchange and forward secrecy, while mplex and yamux handle stream multiplexing so that multiple logical channels share one connection. For NAT traversal, libp2p uses DCUtR (Direct Connection Upgrade through Relay), which achieves roughly 70% direct hole-punch success with 97.6% first-attempt efficiency. The remaining ~30% of connections fall back to relay nodes.

IPFS adds content addressing on top of libp2p. Instead of locating data by server address, you locate it by content hash. This makes data tamper-evident but also introduces replication complexity. If few nodes host your content, retrieval latency spikes.
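To make the content-addressing idea concrete, here is a minimal sketch in Python. It is a toy in-memory store, not the IPFS API: it uses a plain SHA-256 hex digest as the address rather than a real CID, and the `ContentStore` class and its method names are hypothetical.

```python
import hashlib


class ContentStore:
    """Toy content-addressed store: the key is the SHA-256 digest of the value.

    Assumption: a plain hex digest stands in for an IPFS CID.
    """

    def __init__(self) -> None:
        self._blocks: dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        # The address is derived from the bytes, not from where they are stored.
        address = hashlib.sha256(data).hexdigest()
        self._blocks[address] = data
        return address

    def get(self, address: str) -> bytes:
        data = self._blocks[address]
        # Re-hash on retrieval: a block that no longer matches its address was altered.
        if hashlib.sha256(data).hexdigest() != address:
            raise ValueError(f"block {address[:12]} failed its integrity check")
        return data


store = ContentStore()
addr = store.put(b"agent checkpoint 001")
print(addr[:16], store.get(addr))
```

Because the address is a function of the content, storing the same bytes twice yields the same address, and any node can verify a block it fetched from an untrusted peer by hashing it again.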

SCION (Scalability, Control, and Isolation On next-generation Networks) offers multipath transport with cryptographic path validation. Unlike traditional IP routing, SCION lets you select and verify the exact path your packets take, which matters for encrypted tunnels in high-security AI deployments.

| Protocol | NAT traversal success | Latency improvement | Path validation |
|---|---|---|---|
| libp2p DCUtR | ~70% direct | Moderate | No |
| SCION multipath | N/A (path-aware) | High | Yes (cryptographic) |
| IPFS (libp2p base) | ~70% direct | Variable | Content hash only |

Steps to initiate secure peer-to-peer communication with libp2p:

- Generate a persistent peer identity (Ed25519 keypair)

- Configure multiaddresses for all available transports (TCP, QUIC, WebSocket)

- Enable the Noise protocol handshake for all connections

- Register with a DHT bootstrap node for peer discovery

- Activate DCUtR for NAT hole-punching

- Set relay fallback addresses for the ~30% of connections that fail direct punch

- Open multiplexed streams for each agent communication channel
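The multiaddresses mentioned in the second step are self-describing strings such as `/ip4/203.0.113.7/udp/4001/quic-v1`. The sketch below is a simplified parser for that textual form, written in plain Python rather than with a libp2p library. The `VALUELESS` set is a small hand-picked subset of protocols that take no value, and the peer IDs `QmRelay` and `QmTarget` are placeholders, not real identities.

```python
from typing import Optional

# Protocols that carry no value in the textual form.
# Assumption: a hand-picked subset, not the full multiaddr protocol table.
VALUELESS = {"quic", "quic-v1", "ws", "wss", "p2p-circuit"}

Component = tuple[str, Optional[str]]


def parse_multiaddr(addr: str) -> list[Component]:
    """Split a textual multiaddr into (protocol, value) pairs."""
    if not addr.startswith("/"):
        raise ValueError("multiaddr must start with '/'")
    parts = addr[1:].split("/")
    components: list[Component] = []
    i = 0
    while i < len(parts):
        proto = parts[i]
        if not proto:
            raise ValueError("empty protocol name")
        if proto in VALUELESS:
            components.append((proto, None))
            i += 1
        else:
            if i + 1 >= len(parts):
                raise ValueError(f"protocol {proto!r} is missing a value")
            components.append((proto, parts[i + 1]))
            i += 2
    return components


def is_relayed(components: list[Component]) -> bool:
    """A relayed address routes through another peer via /p2p-circuit."""
    return any(proto == "p2p-circuit" for proto, _ in components)


direct = parse_multiaddr("/ip4/203.0.113.7/udp/4001/quic-v1")
relayed = parse_multiaddr("/ip4/198.51.100.2/tcp/4001/p2p/QmRelay/p2p-circuit/p2p/QmTarget")
print(direct, is_relayed(direct), is_relayed(relayed))
```

Checking for `/p2p-circuit` in an address is one quick way to see which of a peer's advertised addresses are direct and which depend on a relay.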

Pro Tip: Pair SCION multipath routing with DCUtR hole-punching to balance reliability and speed. SCION handles path-level resilience while DCUtR maximizes direct connectivity for decentralized P2P networking.

Real-world networking challenges: NAT traversal, centralization, and cloud trade-offs

Protocols are only as good as their implementation. Here are the obstacles practitioners keep running into, and what the data says about some popular assumptions.

NAT traversal is the first wall you hit. Roughly 30% of NAT traversal attempts fail outright, requiring relay fallback. Symmetric NAT configurations, common in enterprise and mobile environments, typically defeat hole-punching. If you don’t build relay infrastructure into your design from day one, you will debug mysterious connection failures at 2 a.m.
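One way to treat relay fallback as part of the design is to put it in the connection path itself. The sketch below shows the direct-then-relay pattern in plain Python. The `Connection` type, the dialer callables, and the retry count are all hypothetical stand-ins for whatever your networking stack provides. This is not libp2p's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Connection:
    peer_id: str
    via: str  # "direct" or "relay"


# A dialer takes a peer ID and returns a Connection, or raises ConnectionError.
Dialer = Callable[[str], Connection]


def connect_with_fallback(
    peer_id: str,
    dial_direct: Dialer,
    dial_relay: Dialer,
    direct_attempts: int = 2,
) -> Connection:
    """Try a hole-punched direct connection first, then fall back to a relay."""
    for _ in range(direct_attempts):
        try:
            return dial_direct(peer_id)
        except ConnectionError:
            continue
    # Every direct attempt failed (for example, behind a symmetric NAT).
    return dial_relay(peer_id)


# Stand-in dialers for illustration only.
def failing_direct(peer_id: str) -> Connection:
    raise ConnectionError("hole punch failed")


def relay(peer_id: str) -> Connection:
    return Connection(peer_id, "relay")


print(connect_with_fallback("peer-a", failing_direct, relay))
```

Recording the `via` field on every connection also gives you a cheap metric: the share of relayed connections tells you how your real-world NAT mix compares with the roughly 30% failure rate cited above.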

IP address collisions are another underappreciated problem. When agents span multiple VPCs or on-premise networks, overlapping CIDR blocks cause silent routing failures. Data appears to send but never arrives.
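Overlapping ranges are easy to catch before deployment. The sketch below uses Python's standard `ipaddress` module to flag any pair of networks whose CIDR blocks overlap. The network names and ranges are made up for illustration.

```python
import ipaddress
from itertools import combinations


def find_overlaps(networks: dict[str, str]) -> list[tuple[str, str]]:
    """Return pairs of network names whose CIDR blocks overlap."""
    parsed = {name: ipaddress.ip_network(cidr) for name, cidr in networks.items()}
    return [
        (a, b)
        for a, b in combinations(parsed, 2)
        if parsed[a].overlaps(parsed[b])
    ]


# Hypothetical address plan.
plan = {
    "vpc-us-east": "10.0.0.0/16",
    "vpc-eu-west": "10.0.128.0/20",  # falls inside 10.0.0.0/16
    "on-prem": "192.168.0.0/24",
}
print(find_overlaps(plan))  # -> [('vpc-us-east', 'vpc-eu-west')]
```

Running a check like this in CI against your address plan turns a silent routing failure into a failed build.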

Centralization in P2P networks is the most counterintuitive challenge. IPFS cloud nodes now host 87.33% of data, up from roughly 50% just three years ago. This means your “decentralized” storage may actually depend on a handful of cloud providers.

“The assumption that P2P equals decentralization is no longer safe. Empirical data shows IPFS has centralized faster than most practitioners realize, with cloud nodes dominating data hosting in 2026.”

Cloud security mismatches add another layer of complexity. Security Groups (SGs) are stateful and track connection state, while Network ACLs (NACLs) are stateless and evaluate every packet independently. Mixing these without understanding the difference causes asymmetric traffic drops that are notoriously hard to diagnose.
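The difference is easier to see in a small simulation. The sketch below models a stateful filter (security-group style) and a stateless filter (NACL style) in plain Python. It is a deliberately simplified model with hypothetical class names, not any cloud provider's API, and it matches on ports only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    src: str
    src_port: int
    dst: str
    dst_port: int

    def reply(self) -> "Packet":
        # The return packet swaps source and destination.
        return Packet(self.dst, self.dst_port, self.src, self.src_port)


class StatefulFilter:
    """Security-group style: replies to an allowed outbound flow are admitted."""

    def __init__(self, allowed_outbound_ports: set[int]) -> None:
        self.allowed_outbound_ports = allowed_outbound_ports
        self._flows: set[Packet] = set()

    def outbound(self, pkt: Packet) -> bool:
        if pkt.dst_port in self.allowed_outbound_ports:
            self._flows.add(pkt)  # remember the flow so its reply can return
            return True
        return False

    def inbound(self, pkt: Packet) -> bool:
        return pkt.reply() in self._flows


class StatelessFilter:
    """NACL style: each packet is checked against the rules, with no flow memory."""

    def __init__(self, allowed_outbound_ports: set[int], allowed_inbound_ports: range) -> None:
        self.allowed_outbound_ports = allowed_outbound_ports
        self.allowed_inbound_ports = allowed_inbound_ports

    def outbound(self, pkt: Packet) -> bool:
        return pkt.dst_port in self.allowed_outbound_ports

    def inbound(self, pkt: Packet) -> bool:
        return pkt.dst_port in self.allowed_inbound_ports


request = Packet("10.0.1.5", 50123, "10.0.2.9", 443)
sg = StatefulFilter({443})
nacl = StatelessFilter({443}, range(0))  # no inbound rule at all
print(sg.outbound(request), sg.inbound(request.reply()))      # True True
print(nacl.outbound(request), nacl.inbound(request.reply()))  # True False
```

The stateless filter lets the request out but drops the reply, because the reply targets the client's ephemeral port and no inbound rule covers it. Allowing an ephemeral range inbound, for example `range(1024, 65536)` in this model, is what removes the asymmetric drop.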

Common networking challenges you will encounter:

- NAT traversal failures in symmetric NAT environments

- Overlapping IP ranges across VPCs and on-premise networks

- Unexpected data transfer costs between availability zones

- Centralization drift in IPFS and similar P2P networks

- Stateful vs. stateless security policy mismatches

- Latency spikes from suboptimal peer selection

Pro Tip: Always build relay fallback mechanisms into your NAT traversal design. Treat relays as a first-class component, not an afterthought. Explore AI networking challenges and secure network infrastructure guides to map your specific risk surface before deployment.

Hybrid cloud–P2P strategies: Best practices for distributed AI systems

Understanding challenges lets you build future-proof architectures. Here’s how pros merge cloud and P2P models for scalable, secure distributed AI.

The most effective teams don’t choose between cloud and P2P. They use a cloud VPC as the control plane for reliability, authentication, and policy enforcement, while P2P overlays handle data distribution, agent communication, and cross-region resilience. Hybrid approaches that combine VPC control planes with P2P overlays consistently outperform pure implementations in both reliability and flexibility.

Deduplication strategy also matters more than most teams expect. FSC chunking outperforms CDC (Content Defined Chunking) and fixed-size chunking for storage efficiency in distributed systems. Optimizing your chunking method directly reduces storage costs and retrieval latency at scale.
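Whichever chunking method you pick, it helps to measure its effect on your own data. The sketch below does not implement FSC or CDC. It only shows one way to compute a deduplication ratio for any chunker, using a plain fixed-size chunker as the baseline. The function names and the 4096-byte chunk size are assumptions for illustration.

```python
import hashlib
from typing import Callable, Iterable

Chunker = Callable[[bytes], Iterable[bytes]]


def fixed_size_chunks(data: bytes, size: int = 4096) -> list[bytes]:
    """Baseline chunker: cut the input every `size` bytes."""
    return [data[i:i + size] for i in range(0, len(data), size)]


def dedup_ratio(blobs: Iterable[bytes], chunker: Chunker) -> float:
    """Logical bytes divided by unique stored bytes (1.0 means no savings)."""
    logical = 0
    unique: dict[bytes, int] = {}
    for blob in blobs:
        for chunk in chunker(blob):
            logical += len(chunk)
            # Identical chunks hash to the same key and are stored once.
            unique[hashlib.sha256(chunk).digest()] = len(chunk)
    stored = sum(unique.values())
    return logical / stored if stored else 1.0


original = b"a" * 4096 + b"b" * 4096
print(dedup_ratio([original, original], fixed_size_chunks))         # 2.0
print(dedup_ratio([original, b"x" + original], fixed_size_chunks))  # 1.0
```

The second result shows the classic weakness of fixed-size boundaries: a one-byte insertion at the front shifts every chunk, so nothing deduplicates. Plugging a different chunker into `dedup_ratio` lets you compare methods on the same inputs.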

Best practices for implementing hybrid cloud–P2P architectures:

- Use cloud VPC for identity management, access control, and audit logging

- Deploy P2P overlays (libp2p or custom) for agent-to-agent data exchange

- Implement SCION or equivalent multipath transport for path-aware routing

- Configure DCUtR with relay fallback as a standard pattern, not an edge case

- Apply FSC deduplication chunking to minimize storage overhead

- Monitor centralization metrics regularly and redistribute data when concentration exceeds thresholds

- Use AI networking best practices to validate your architecture against known failure modes

Common pitfalls and how to avoid them:

- Assuming P2P equals decentralization: Monitor node distribution continuously

- Skipping relay infrastructure: Budget for relay nodes from the start

- Ignoring CIDR planning: Allocate non-overlapping ranges before any deployment

- Using fixed-size chunking: Switch to FSC for meaningful efficiency gains

- Treating security groups and NACLs as equivalent: Understand stateful vs. stateless behavior

Following a secure networking workflow from the start saves significant rework later.

The uncomfortable truth: Decentralization isn’t automatic—what experts really do

Here’s what most articles won’t tell you: the word “decentralized” has become marketing language as much as a technical description. The data is clear. Cloud node share in IPFS jumped from roughly 50% to 87.33% in three years. A network designed to resist centralization has centralized faster than most of its users realize.

Experienced teams don’t chase ideological purity. They build hybrid systems that are pragmatically resilient. They monitor centralization metrics the same way they monitor latency and error rates. They treat relay fallback as infrastructure, not a bug fix. They apply dedup-optimized chunking because storage efficiency compounds at scale.

The teams that struggle are the ones who adopt a P2P protocol and assume the architecture handles itself. It doesn’t. Decentralization requires active maintenance, measurement, and adaptation.

Pro Tip: Set automated alerts for centralization drift in your P2P storage layer. If more than 60% of your data concentrates on fewer than five nodes, redistribute proactively. Pair this with multi-cloud strategies to avoid single-provider dependency at the infrastructure level.
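The alert rule in this tip is simple to express in code. The sketch below reads "fewer than five nodes" as the four largest nodes and checks whether they hold more than 60% of stored bytes. The function names and the sample node sizes are hypothetical. In practice the per-node byte counts would come from your own storage metrics.

```python
def top_k_share(bytes_per_node: dict[str, int], k: int) -> float:
    """Fraction of all stored bytes held by the k largest nodes."""
    total = sum(bytes_per_node.values())
    if total == 0:
        return 0.0
    largest = sorted(bytes_per_node.values(), reverse=True)[:k]
    return sum(largest) / total


def centralization_alert(
    bytes_per_node: dict[str, int],
    max_nodes: int = 4,       # "fewer than five nodes"
    threshold: float = 0.60,  # "more than 60% of your data"
) -> bool:
    """True when more than `threshold` of data sits on `max_nodes` or fewer nodes."""
    return top_k_share(bytes_per_node, max_nodes) > threshold


# Hypothetical per-node storage, in gigabytes.
skewed = {"node-a": 500, "node-b": 200, "node-c": 100, "node-d": 100, "node-e": 100}
even = {f"node-{i}": 100 for i in range(10)}
print(centralization_alert(skewed), centralization_alert(even))  # True False
```

Both thresholds are parameters, so the same check can be tuned per dataset or tightened over time.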

The best distributed AI systems in production today are hybrid by design, not by accident.

Build secure, scalable networking for AI agents—start here

Applying these concepts in production requires more than theory. You need tooling that handles NAT traversal, encrypted tunnels, peer identity, and multi-cloud connectivity out of the box.

Pilot Protocol is built specifically for AI agents and distributed systems that need secure, direct peer-to-peer communication without centralized brokers. It handles virtual addressing, NAT punch-through, mutual trust establishment, and encrypted tunnels across cloud regions and on-premise environments. You can wrap existing HTTP, gRPC, and SSH traffic inside the overlay without rewriting your stack. Start with the secure AI networking workflow to see how a real deployment comes together, step by step.

Frequently asked questions

What is NAT traversal and why does it matter for decentralized AI networks?

NAT traversal lets peers behind private networks connect directly instead of routing all traffic through a central server. It matters because roughly 30% of attempts fail, requiring relay fallback infrastructure to maintain reliable connectivity.

How does centralization affect peer-to-peer protocols like IPFS?

Centralization concentrates data on a small number of nodes, reducing resilience and increasing cloud dependency. IPFS cloud node share rose to 87.33%, meaning most data now lives on a few providers rather than a distributed network.

What are the best practices for combining cloud and P2P networking in distributed AI systems?

Use cloud VPC for control-plane reliability and P2P overlays for data distribution and resilience. Hybrid approaches using SCION and DCUtR with dedup-optimized chunking deliver the best balance of security and scalability.

What security risks exist in permissionless P2P networking?

Without cryptographic path validation, P2P networks are vulnerable to route hijacking and trust failures. SCION’s cryptographic path validation addresses this by letting nodes verify the exact path their traffic takes.

How does deduplication chunking impact storage efficiency in IPFS?

FSC chunking outperforms fixed-size methods and CDC by reducing duplicate content stored across nodes, which directly lowers storage costs and improves retrieval speed at scale.