AI networking best practices for secure, scalable systems

TL;DR:

- Modern AI networks require decentralized or peer-to-peer architectures for security and resilience.

- Secure communication relies on encryption, consensus protocols, and proactive threat detection tools.

- Real-world deployment emphasizes testing under load, hybrid evaluation, and next-generation tools like Pilot Protocol.

Modern AI systems push well beyond what traditional client-server networking was built to handle. When you’re coordinating autonomous agent fleets across multiple clouds and regions, the network layer is not just infrastructure. It’s a core part of your system’s security posture, resilience, and performance. Decentralized and peer-to-peer (P2P) designs remove single points of failure, but they introduce new challenges around trust, encryption, and knowledge propagation. This article delivers actionable, research-backed practices so you can build agent networks that are secure, scalable, and ready for real-world conditions.

Table of Contents

- Define your architecture: Decentralized vs. peer-to-peer

- Secure data exchange: Encryption and consensus essentials

- Optimize knowledge propagation and consensus

- Proactive network security for autonomous agents

- Hard lessons from real-world AI networking

- Explore next-gen AI networking tools

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Choose resilient architectures | Dynamic DAGs and P2P DHTs like Kademlia are top options for building robust, scalable AI networks. |
| Prioritize strong data security | Layer policy-based encryption, homomorphic encryption, and consensus safeguards for secure agent communication. |
| Optimize learning and resilience | Use proven frameworks like OpenCLAW-P2P and AgentNet to accelerate consensus and resist adversarial faults. |
| Enforce continuous network security | Automate threat detection and zero-trust enforcement with specialized tools such as A2A Scanner. |

Define your architecture: Decentralized vs. peer-to-peer

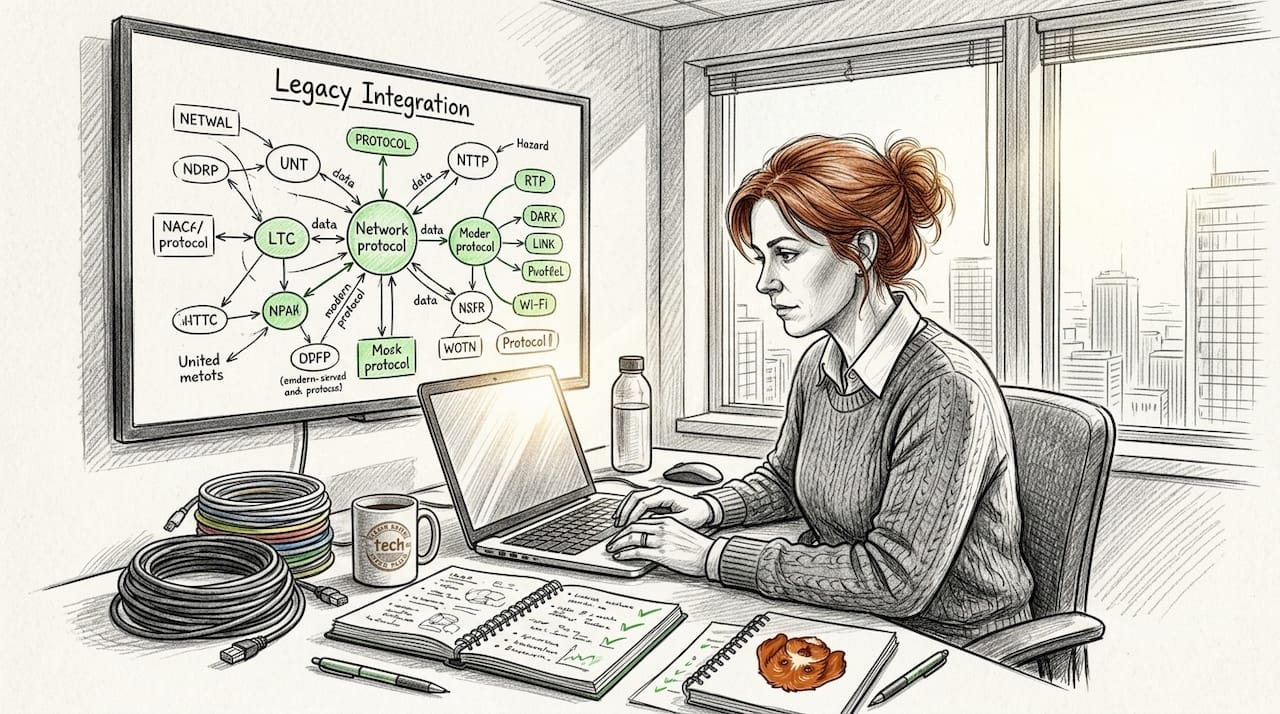

With the need for robust network foundations established, let’s break down your architectural options for agent networks. The first decision you’ll face is choosing between a decentralized topology and a pure P2P topology. These terms are often used interchangeably, but they have meaningful differences that affect how your agents discover each other, share state, and recover from failure.

Decentralized architectures distribute control across multiple nodes without requiring a single coordinator. Common patterns include directed acyclic graphs (DAGs) and distributed hash tables (DHTs). A DAG-based design lets agents record state transitions without a central ledger, while a DHT like Kademlia assigns each agent a position in a structured key-value space for fast, scalable lookup. Dynamic DAGs and DHTs are proven for fault-tolerant, scalable agent coordination without central orchestrators.
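To make the DHT idea concrete, here is a minimal sketch of Kademlia’s XOR distance metric and k-bucket indexing. It assumes 160-bit node IDs derived by hashing an agent name with SHA-1, matching the ID size of the original Kademlia design. The helpers `node_id`, `xor_distance`, `bucket_index`, and `closest` are hypothetical names for illustration, not the API of any particular DHT library.

```python
import hashlib

ID_BITS = 160  # the original Kademlia design uses 160-bit identifiers


def node_id(name: str) -> int:
    """Derive a 160-bit ID from an agent name (illustrative; real nodes often use random or key-derived IDs)."""
    return int.from_bytes(hashlib.sha1(name.encode()).digest(), "big")


def xor_distance(a: int, b: int) -> int:
    """Kademlia distance: the bitwise XOR of two IDs, read as an unsigned integer."""
    return a ^ b


def bucket_index(own: int, other: int) -> int:
    """Index of the k-bucket that would hold `other`: position of the highest differing bit."""
    d = xor_distance(own, other)
    if d == 0:
        raise ValueError("a node does not store itself in its routing table")
    return d.bit_length() - 1


def closest(target: int, known: list[int], k: int = 3) -> list[int]:
    """Return the k known IDs closest to `target` under XOR distance."""
    return sorted(known, key=lambda n: xor_distance(n, target))[:k]


if __name__ == "__main__":
    me = node_id("agent-0")
    peers = [node_id(f"agent-{i}") for i in range(1, 50)]
    target = node_id("task:summarize-report")
    for p in closest(target, peers):
        print(hex(p)[:12], "-> bucket", bucket_index(me, p))
```

Each lookup step asks the closest known peers for peers even closer to the target, gaining at least one matching prefix bit per hop. That is the intuition behind the O(log n) lookup bound.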

Pure P2P topologies connect agents directly, with no privileged nodes. This maximizes resilience but makes discovery and trust harder to manage at scale.

Here’s a quick comparison of the two primary protocol approaches for enterprise AI networking:

| Feature | A2A (Google/Cisco-backed) | ANP (Community) |
|---|---|---|
| Discovery model | Centralized/enterprise registry | Fully decentralized |
| Trust model | Federated identity | Trust-minimized, open |
| Best fit | Enterprise, managed environments | Open, multi-party networks |
| Governance | Vendor-led | Community-driven |

A comparison of the A2A and ANP protocols shows that A2A suits enterprise settings with centralized discovery, while ANP excels in fully decentralized, trust-minimized open networks. Choose A2A when you control the environment and need managed identity. Choose ANP when you’re building open, multi-party agent ecosystems.

Key factors to weigh when selecting your architecture:

- Fault tolerance: DHTs handle node churn well; DAGs preserve causal history under partitions

- Scalability: Kademlia-style DHTs scale to millions of nodes with O(log n) lookups

- Discovery latency: Centralized registries (A2A) are faster; decentralized discovery (ANP) is more resilient

- Compliance: Enterprise environments often require auditable, federated identity, favoring A2A

Before you finalize your design, it is worth reading further on P2P solutions for AI architectures and on communication protocols for AI developers.

Pro Tip: If you’re unsure which to pick, start with a DHT-based design for flexibility. You can layer enterprise identity controls on top later without redesigning your routing layer.

Secure data exchange: Encryption and consensus essentials

Once you know your architectural direction, securing agent communication is essential for trustworthy operations. Encryption is not optional in agent networks. Agents exchange model outputs, task assignments, and sensitive state data. A breach at the network layer can compromise your entire pipeline.

Combining policy-based and homomorphic encryption with consensus mechanisms is a recommended approach for preventing collusion and ensuring computation privacy in AI agent networks. Here’s what each method gives you:

- Policy-based encryption ties decryption rights to verifiable attributes (role, clearance, context). Only agents meeting the policy can decrypt a message, even if they intercept it.

- Homomorphic encryption lets agents compute on encrypted data without decrypting it first. This is critical when agents from different organizations share a computation task but cannot expose raw data.

- Byzantine fault-tolerant (BFT) consensus ensures your network reaches agreement even when a subset of agents behaves maliciously or fails unpredictably.
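The homomorphic property is easier to see in code. Below is a toy, deliberately insecure sketch of the Paillier cryptosystem, a swapped-in illustration rather than a scheme the article prescribes. Paillier is additively homomorphic: multiplying two ciphertexts yields an encryption of the sum of their plaintexts. The tiny hard-coded primes are an assumption for readability only. A real deployment would use a vetted library and moduli of 2048 bits or more.

```python
import math
import secrets


def keygen(p: int = 1009, q: int = 1013):
    """Toy Paillier keys from tiny fixed primes. INSECURE: illustration only."""
    n = p * q
    lam = math.lcm(p - 1, q - 1)
    # With the common generator choice g = n + 1, mu is simply lam^-1 mod n.
    mu = pow(lam, -1, n)
    return n, (lam, mu, n)  # public key n, private key (lam, mu, n)


def encrypt(n: int, m: int) -> int:
    """c = (n + 1)^m * r^n mod n^2 for a random r coprime to n."""
    if not 0 <= m < n:
        raise ValueError("plaintext must be in [0, n)")
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2


def decrypt(priv, c: int) -> int:
    lam, mu, n = priv
    x = pow(c, lam, n * n)
    return ((x - 1) // n) * mu % n  # L(x) = (x - 1) / n, then multiply by mu mod n


def add_encrypted(n: int, c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the hidden plaintexts (mod n) without decrypting them."""
    return (c1 * c2) % (n * n)


if __name__ == "__main__":
    n, priv = keygen()
    total = add_encrypted(n, encrypt(n, 120), encrypt(n, 305))
    print(decrypt(priv, total))  # 425
```

In an agent network, each party could encrypt its own value and a coordinator could combine the ciphertexts. Only the holder of the private key would then learn the aggregate.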

Implementing secure exchange in practice involves these steps:

- Define your trust boundaries. Identify which agents can communicate directly and which require mediated, policy-enforced channels.

- Select your encryption layer. Use TLS 1.3 for transport, policy-based encryption for access control, and homomorphic schemes where computation privacy is required.

- Choose a consensus protocol. For most agent fleets, Practical BFT (PBFT) or a variant like HotStuff provides strong guarantees with acceptable latency.

- Rotate keys automatically. Key rotation reduces blast radius if a single agent is compromised. Automate this in your CI/CD pipeline.

- Audit all inter-agent messages. Log message hashes and policy evaluations for post-incident forensics.
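When choosing a consensus protocol, the key sizing rule behind PBFT-style protocols is that n replicas tolerate at most f Byzantine replicas when n ≥ 3f + 1. Quorums must be large enough that any two overlap in at least f + 1 replicas, so they always share an honest one. The helpers below are a minimal sketch of that arithmetic. The function names are hypothetical, and real protocols layer view changes, signatures, and timeouts on top.

```python
def max_faulty(n: int) -> int:
    """Largest f with n >= 3f + 1: Byzantine replicas a PBFT-style protocol tolerates."""
    if n < 1:
        raise ValueError("need at least one replica")
    return (n - 1) // 3


def quorum_size(n: int) -> int:
    """Smallest quorum q such that any two quorums share at least f + 1 replicas.

    The bound is ceil((n + f + 1) / 2), which equals the familiar 2f + 1
    when n = 3f + 1 exactly.
    """
    f = max_faulty(n)
    return (n + f + 2) // 2


def tolerates(n: int, faulty: int) -> bool:
    """True if `faulty` Byzantine replicas stay within the n >= 3f + 1 bound."""
    return faulty <= max_faulty(n)


if __name__ == "__main__":
    for n in (4, 10, 100):
        print(f"n={n}: f={max_faulty(n)}, quorum={quorum_size(n)}")
    print(tolerates(100, 20), tolerates(100, 40))  # True False
```

This is why a fleet with 20% faulty agents sits inside the classic bound, while one with 40% faulty agents cannot be made safe by PBFT-style consensus alone.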

“Consensus mechanisms are not just about agreement. They are your primary defense against coordinated agent manipulation at scale.”

Further reading on secure AI network infrastructure and on step-by-step AI agent networking workflows offers more concrete implementation guidance.

Pro Tip: Homomorphic encryption carries significant compute overhead. Reserve it for high-sensitivity computations and benchmark your latency budget before deploying it broadly.

Optimize knowledge propagation and consensus

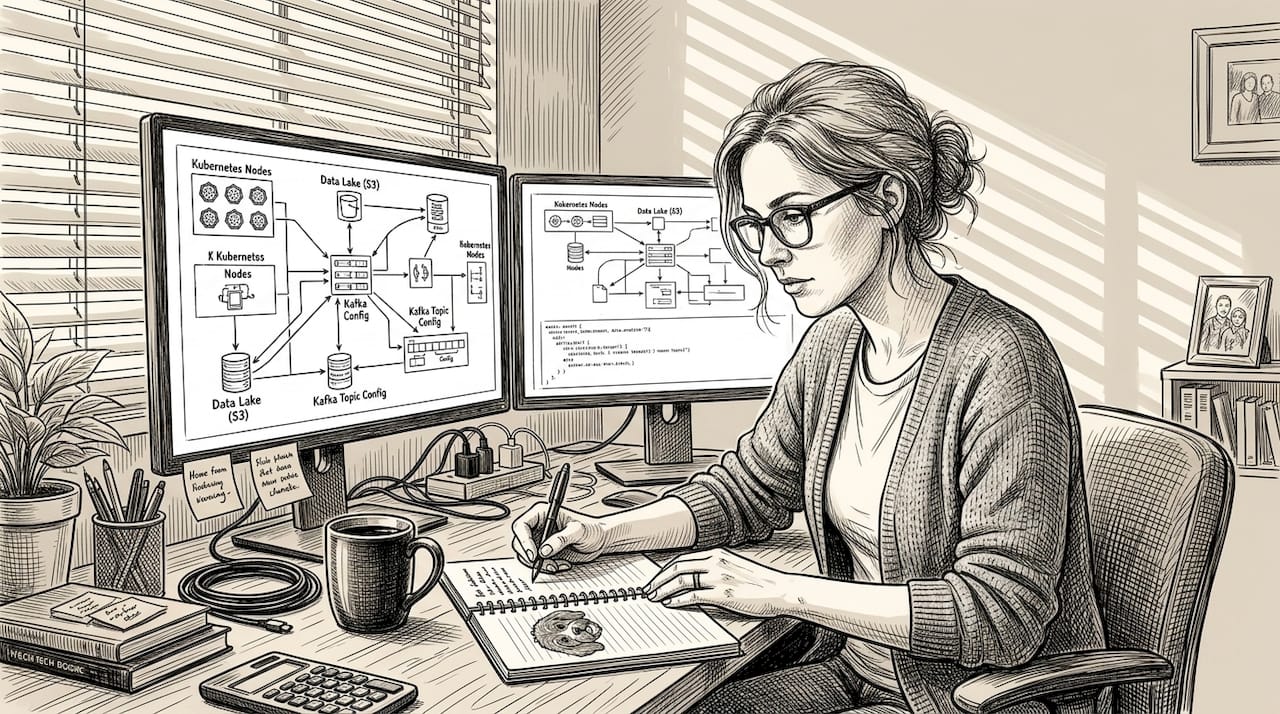

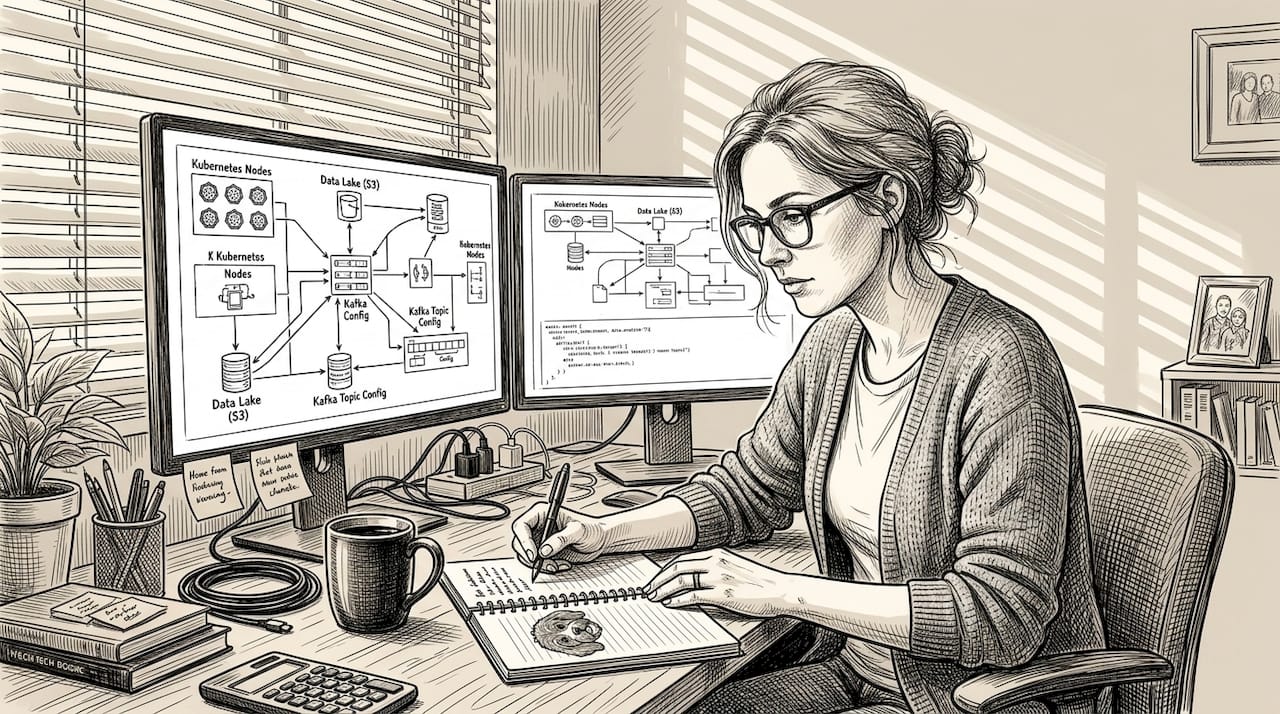

The effectiveness of your encryption and architecture sets the stage for how fast and reliably agents learn together. Knowledge propagation is the process by which agents share learned state, model updates, or task results across the network. In large agent fleets, this needs to be fast, bandwidth-efficient, and resilient to adversarial disruption.

Gossip protocols are the standard mechanism here. Each agent periodically shares its state with a small, random subset of peers. Information spreads exponentially, reaching all nodes in O(log n) rounds. OpenCLAW-P2P results show that knowledge propagation converges in just 3 gossip rounds (under 10 seconds), with full consensus achieved in 60 seconds even under 20% Byzantine faults. That’s a strong baseline for production deployments.
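A small push-gossip simulation illustrates why the number of rounds grows roughly logarithmically with fleet size. It assumes a fully connected fleet, a fixed fan-out, no message loss, and no Byzantine nodes. It therefore shows the shape of the curve and does not reproduce the OpenCLAW-P2P measurements. `gossip_rounds` is a hypothetical helper.

```python
import math
import random


def gossip_rounds(n: int, fanout: int, seed: int = 0) -> int:
    """Count push-gossip rounds until all n nodes hold an update that starts at node 0.

    Assumes every node can reach every other node, no message loss, and no faulty nodes.
    """
    if fanout < 1:
        raise ValueError("fanout must be at least 1")
    rng = random.Random(seed)
    fanout = min(fanout, n - 1)
    informed = {0}
    rounds = 0
    while len(informed) < n:
        newly_informed = set()
        for node in informed:
            # Pick `fanout` distinct peers other than `node`: sample from n - 1 slots,
            # then shift indices at or above `node` up by one to skip self.
            for p in rng.sample(range(n - 1), fanout):
                newly_informed.add(p if p < node else p + 1)
        informed |= newly_informed
        rounds += 1
    return rounds


if __name__ == "__main__":
    for n in (100, 1_000, 10_000):
        print(f"n={n:>6}  rounds={gossip_rounds(n, fanout=4)}  log2(n)={math.log2(n):.1f}")
```

Raising `fanout` trades bandwidth for fewer rounds, which is the tuning knob behind the fan-out guidance that follows.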

AgentNet is another useful benchmark. It is reported to outperform traditional multi-agent frameworks in dynamic task efficiency, specialization stability, and adaptive learning speed, making it a strong reference architecture for high-throughput agent networks.

Here’s a performance comparison based on published benchmarks:

| Metric | OpenCLAW-P2P | Traditional Multi-Agent |
|---|---|---|
| Convergence rounds | 3 gossip rounds | 8+ rounds |
| Convergence time | Under 10 seconds | 30 to 60 seconds |
| Consensus under 20% Byzantine faults | 60 seconds | Fails or degrades |
| Adaptive learning speed | High | Moderate |

Key practices for optimizing propagation in your own deployments:

- Tune gossip fan-out based on network size. A fan-out of 3 to 5 works well for most fleets.

- Use vector clocks to track causal order between updates, or CRDTs (conflict-free replicated data types) to merge state without coordination overhead.

- Partition your network into regional clusters. Propagate locally first, then sync globally to reduce cross-region latency.

- Simulate Byzantine conditions in staging. Inject 10 to 20% faulty nodes to validate your consensus layer before production.
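As an example of the CRDT approach, here is a grow-only counter (G-Counter), one of the simplest state-based CRDTs. Each agent increments only its own slot, and merging takes the element-wise maximum. Replicas therefore converge regardless of the order in which gossip messages arrive or how many times they are delivered. The class name and agent IDs are illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class GCounter:
    """State-based grow-only counter CRDT: one monotonically increasing slot per agent."""
    agent_id: str
    counts: dict[str, int] = field(default_factory=dict)

    def increment(self, amount: int = 1) -> None:
        if amount < 0:
            raise ValueError("a G-Counter can only grow")
        self.counts[self.agent_id] = self.counts.get(self.agent_id, 0) + amount

    def merge(self, other: "GCounter") -> None:
        """Element-wise max: commutative, associative, and idempotent, so gossip order does not matter."""
        for agent, count in other.counts.items():
            self.counts[agent] = max(self.counts.get(agent, 0), count)

    def value(self) -> int:
        return sum(self.counts.values())


if __name__ == "__main__":
    a, b = GCounter("agent-a"), GCounter("agent-b")
    a.increment(3)
    b.increment(2)
    a.merge(b)
    b.merge(a)
    a.merge(b)  # a duplicate delivery is harmless
    print(a.value(), b.value())  # 5 5
```

Richer CRDTs (PN-counters, OR-sets, LWW registers) follow the same pattern: a merge function that is safe to apply in any order and any number of times.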

Material on autonomous agent networking for distributed AI covers production-grade tuning in more detail, including how to size your gossip parameters.

Proactive network security for autonomous agents

Now that consensus processes are streamlined, keeping your network safe from evolving threats becomes a top priority. Autonomous agents introduce a unique attack surface. They make decisions, call external APIs, and exchange data continuously. A compromised agent can exfiltrate data, inject false state, or disrupt consensus.

The A2A Scanner tool from Cisco AI Defense detects threats using YARA rules, spec compliance checks, heuristic analysis, and LLM-based scanning. It also enforces HTTPS and security headers across agent endpoints, giving you automated, continuous threat detection without manual review overhead.

Top network security actions for autonomous agent deployments:

- Enforce zero-trust networking. Every agent must authenticate before communicating, regardless of network location. Use mutual TLS (mTLS) for all agent-to-agent connections.

- Apply YARA rules to scan agent payloads for known malicious patterns before they reach your orchestration layer.

- Validate spec compliance on all agent messages. Reject malformed or out-of-spec payloads at the network edge.

- Monitor for anomalous behavior. Baseline normal agent communication patterns and alert on deviations in message frequency, payload size, or destination.

- Use LLM-based heuristic scanning for novel threats that signature-based rules miss.

- Enforce HTTPS and strict security headers on all agent endpoints, including internal ones.
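The mTLS requirement maps directly onto TLS configuration. Below is a minimal sketch using Python’s standard `ssl` module. It builds server-side and client-side contexts that always demand a peer certificate and refuse anything older than TLS 1.3. The certificate, key, and CA bundle paths passed to `load_identity` are hypothetical placeholders for whatever your internal CA issues.

```python
import ssl


def mtls_context(server_side: bool) -> ssl.SSLContext:
    """Base context for mutual TLS: TLS 1.3 only, peer certificate always required."""
    protocol = ssl.PROTOCOL_TLS_SERVER if server_side else ssl.PROTOCOL_TLS_CLIENT
    ctx = ssl.SSLContext(protocol)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    # PROTOCOL_TLS_CLIENT already requires server certs and checks hostnames;
    # servers must explicitly opt in to demanding a client certificate.
    ctx.verify_mode = ssl.CERT_REQUIRED
    return ctx


def load_identity(ctx: ssl.SSLContext, cert: str, key: str, ca_bundle: str) -> ssl.SSLContext:
    """Attach this agent's certificate and trust only the fleet's internal CA."""
    ctx.load_cert_chain(certfile=cert, keyfile=key)
    ctx.load_verify_locations(cafile=ca_bundle)
    return ctx
```

On the server, wrap the listening socket with `ctx.wrap_socket(sock, server_side=True)`. On the client, pass `server_hostname=` to `wrap_socket` so hostname verification applies. Because neither context loads the system trust store, only certificates issued by your own CA are accepted.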

Guides on network tunnels for AI security and on implementing Zero Trust for AI agent communication provide step-by-step implementation details.

Pro Tip: Set up automated rollback triggers. If an agent’s behavior score crosses a threshold during LLM scanning, automatically quarantine it and restore the last known-good state. This can substantially cut mean time to remediation.
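The baseline-and-threshold pattern behind anomaly monitoring and automated quarantine can be sketched with a simple z-score over an agent’s message rate. This is a hypothetical illustration, with message rate standing in for a richer behavior score such as an LLM scanner verdict. `BehaviorMonitor`, the 3.0 threshold, the 30-sample warm-up, and the quarantine callback are all assumptions. A production system would likely combine several signals (payload size, destinations, scanner results).

```python
import statistics
from collections import deque
from typing import Callable


class BehaviorMonitor:
    """Flags an agent whose message rate deviates sharply from its own recent baseline."""

    def __init__(self, on_quarantine: Callable[[str, float], None],
                 window: int = 100, threshold: float = 3.0, warmup: int = 30):
        self.on_quarantine = on_quarantine  # e.g. isolate the agent and restore last known-good state
        self.window = window
        self.threshold = threshold
        self.warmup = warmup
        self.history: dict[str, deque] = {}

    def observe(self, agent: str, msgs_per_min: float) -> float:
        """Record one sample and return its z-score against the agent's baseline (0.0 during warm-up)."""
        hist = self.history.setdefault(agent, deque(maxlen=self.window))
        score = 0.0
        if len(hist) >= self.warmup:
            mean = statistics.fmean(hist)
            stdev = statistics.pstdev(hist) or 1e-9  # a perfectly flat baseline flags any deviation
            score = abs(msgs_per_min - mean) / stdev
            if score > self.threshold:
                self.on_quarantine(agent, score)
                return score  # keep the anomalous sample out of the baseline
        hist.append(msgs_per_min)
        return score


if __name__ == "__main__":
    quarantined = []
    mon = BehaviorMonitor(on_quarantine=lambda agent, score: quarantined.append(agent))
    for rate in [10, 11, 9, 10, 12] * 6:  # 30 normal samples build the baseline
        mon.observe("agent-7", rate)
    mon.observe("agent-7", 250)
    print(quarantined)  # ['agent-7']
```

Excluding flagged samples from the baseline prevents a compromised agent from slowly shifting its own notion of normal.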

Hard lessons from real-world AI networking

Theory gives us a solid foundation, but real-world networking success depends on context and flexibility. Most teams underestimate the gap between benchmark performance and production behavior. A gossip protocol that converges in 3 rounds in a lab can behave very differently in production, where network jitter, agent heterogeneity, and unexpected load spikes all come into play.

The biggest lesson we’ve seen repeated: teams optimize for accuracy and throughput, then get surprised by latency and cost. Evaluating AI agents holistically means going beyond accuracy to measure resilience, safety, latency, and cost together, using hybrid LLM-judge and human review to catch what automated metrics miss.

“The agents that fail in production are rarely the ones with the weakest models. They’re the ones built on networks that weren’t tested under real adversarial and load conditions.”

Hybrid evaluation shifts your priorities. You start asking not just “does this agent produce correct outputs?” but “does this agent behave safely under network stress, partial failure, and adversarial input?” That reframe changes how you design your networking layer from day one. Before your next architecture review, a solid grounding in AI networking terminology is a practical starting point.

Explore next-gen AI networking tools

Building from hard-won lessons and industry best practices, here’s where you can take the next step. The practices in this article are only as effective as the infrastructure you run them on.

Pilot Protocol gives you the decentralized networking infrastructure to put these practices into production. You get encrypted P2P tunnels, NAT traversal, virtual addresses, and mutual trust establishment out of the box, without relying on centralized brokers or message queues. It supports multi-cloud and cross-region agent connectivity, wraps HTTP, gRPC, and SSH inside its overlay, and integrates cleanly with existing systems. Explore more on decentralized AI networking to see how teams are building production-grade agent fleets today.

Frequently asked questions

What are the most resilient P2P architectures for AI networking?

Dynamic DAGs and DHTs such as Kademlia are proven for fault tolerance and scalability in AI systems, handling node churn and network partitions without a central coordinator.

How can you prevent collusion and ensure privacy in agent networks?

Apply policy-based and homomorphic encryption for computation privacy and use Byzantine fault-tolerant consensus mechanisms to prevent coordinated manipulation across your agent fleet.

What is OpenCLAW-P2P and why is it relevant?

OpenCLAW-P2P is a decentralized framework where knowledge propagation converges in 3 gossip rounds and achieves consensus in 60 seconds even with 20% Byzantine faults, making it a strong benchmark for production agent networks.

How do you monitor and enforce network security for AI agents?

Use A2A Scanner for automated threat detection through YARA rules, spec compliance, and LLM analysis, combined with enforced HTTPS and security headers across all agent endpoints.