How mutual trust secures decentralized AI agent networks

TL;DR:

- Decentralized networks are not truly “trustless” because establishing reliable peer trust remains essential to prevent manipulation and attacks. Utilizing reputation systems, blockchain-based records, and adaptive trust models enhances system resilience, scalability, and attack resistance. Building trust as a core, evolving engineering component is crucial for secure, scalable AI agent deployments in dynamic environments.

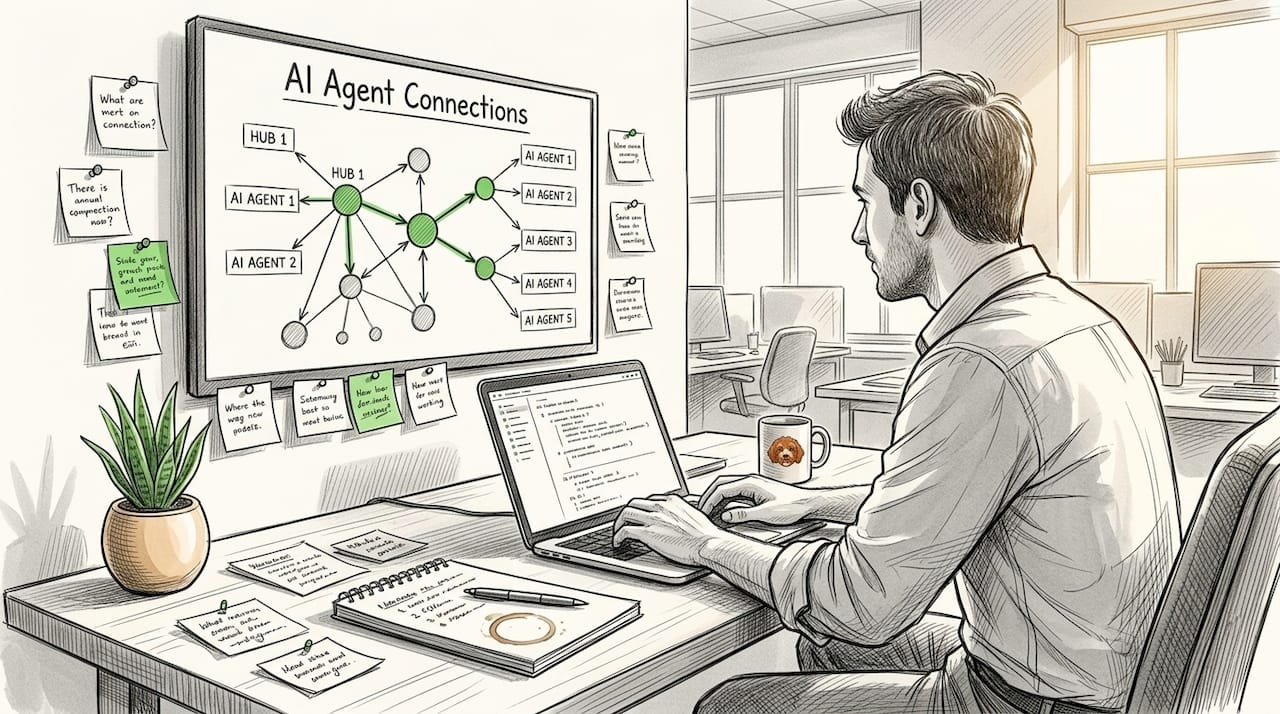

Decentralized networks carry a reputation for being “trustless,” but that label is misleading in practice. When AI agents operate autonomously across peer-to-peer (P2P) infrastructure, the absence of a central authority does not eliminate the need for trust. It makes trust harder to establish and far more critical to get right. Agents that cannot reliably identify safe peers become targets for manipulation, data poisoning, and denial-of-service attacks. This guide covers how mutual trust actually works in decentralized AI systems, which models perform best, and what you need to do to build resilient trust into your deployments from day one.

Table of Contents

- Why mutual trust matters in decentralized P2P networks

- Core models: How mutual trust is built and measured

- Decentralization, blockchain, and combating collusion

- Adaptation and resilience: Trust under attack and changing conditions

- Best practices for establishing mutual trust in AI-driven networks

- Our take: Why trust frameworks are more complex—and critical—than most believe

- Ready to deploy resilient P2P networks? See how Pilot Protocol accelerates trust

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Trust is foundational | Decentralized networks cannot function securely or efficiently without robust mechanisms for mutual trust. |
| Advanced trust models | Modern AI networks leverage reputation, blockchain, and adaptive algorithms to resist attacks and adapt to change. |
| Resilience to threats | Trust metrics can drop quickly in adverse conditions but are designed to recover and maintain system integrity. |
| Practical implementation | Selecting and tuning the right trust frameworks is critical for reliable, secure AI-driven automation. |

Why mutual trust matters in decentralized P2P networks

The word “trustless” describes a system where no single party holds privileged authority. It does not mean agents can interact freely without evaluating each other. In any automated P2P environment, an agent that skips peer evaluation risks accepting corrupted data, routing through compromised nodes, or falling victim to Sybil attacks where one adversary controls many fake identities.

Trust protects your network at three levels:

- Communication integrity: Agents only exchange data with verified peers, reducing man-in-the-middle exposure.

- Resilience: A well-designed trust model isolates misbehaving nodes before they cascade failures across your fleet.

- Scalability: Trust-filtered connections reduce unnecessary traffic, keeping bandwidth and compute costs predictable as networks grow.

“Trustless” means no central authority. It does not mean no trust model. Every production-grade P2P network still requires agents to assess, record, and act on peer reputation.

The underlying mechanism is a distributed reputation system. Rather than querying a central server, each agent aggregates direct interactions, peer recommendations, real-time feedback, and collective trust scores. No single node holds the authoritative record, which removes the single point of failure that plagues centralized designs.

Understanding network protocol trust at this foundational level is essential before you pick a trust model or write a single line of agent code.

Pro Tip: Start small. Run a controlled subset of agents under a reputation-based model before scaling. Early data collection on peer behavior makes your trust parameters far more accurate at production scale.

Core models: How mutual trust is built and measured

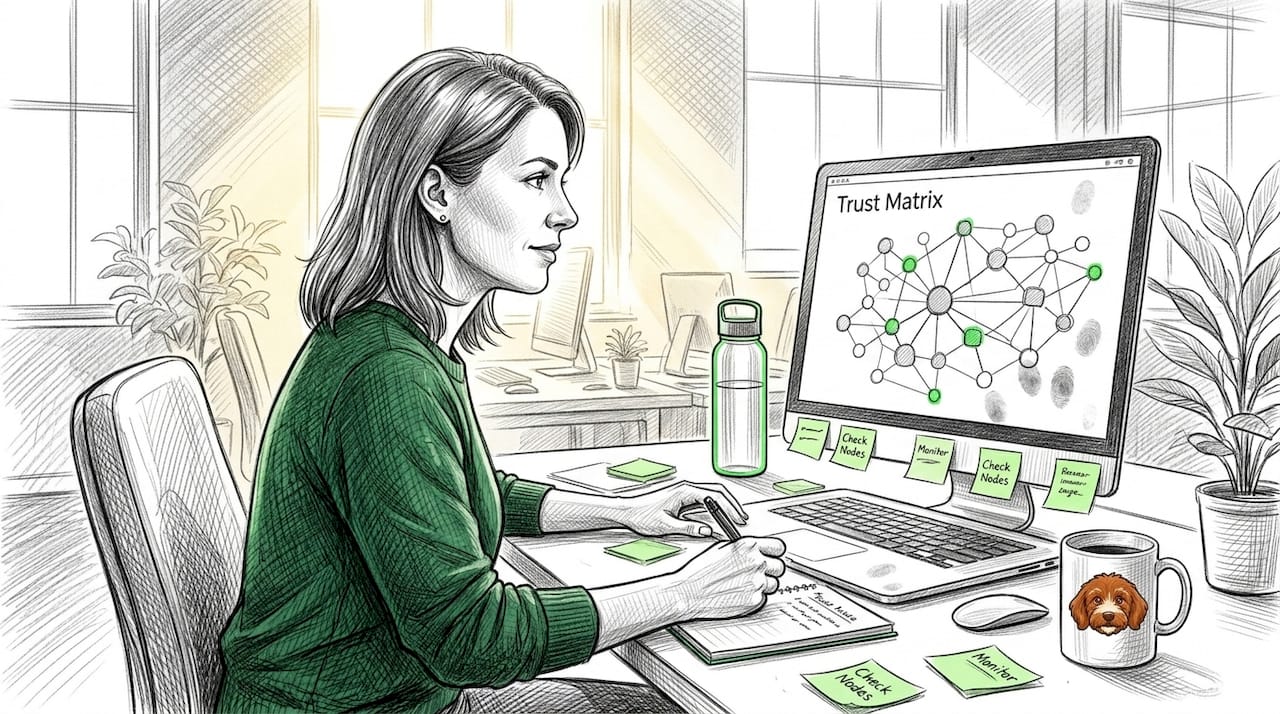

Several formal trust models exist for decentralized systems. Each one trades off accuracy, computational cost, and attack resilience differently. Knowing those trade-offs helps you pick the right tool for your architecture.

The four most referenced models in current research are:

- EigenTrust: Uses eigenvector calculations on the global trust matrix. It is well-suited for static networks but degrades when peers join and leave frequently.

- TNA-SL: Incorporates social layers and role-based weighting. Better at modeling complex agent relationships but adds overhead.

- TACS: Focuses on transaction-aware context sensitivity, weighing trust differently across service types.

- AntTrust: Combines current trust, peer recommendation, direct feedback, and collective trust aggregation into a single composite score. It is the most complete model for dynamic, adversarial environments.
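To make the EigenTrust entry concrete, here is a minimal sketch of its power-iteration step, following the commonly cited formulation t ← (1 − a)·Cᵀt + a·p, where C is the row-normalized matrix of positive local ratings and p is a distribution over pre-trusted peers. The function name, the damping value `alpha=0.15`, and the tolerance are illustrative assumptions, and a real deployment would compute this across peers rather than on a single node.

```python
import numpy as np

def eigentrust(local_scores, pretrusted, alpha=0.15, tol=1e-9, max_iter=1000):
    """Approximate global EigenTrust scores by power iteration.

    local_scores[i][j]: net satisfaction of peer i with peer j (may be negative).
    pretrusted: probability vector over pre-trusted peers (sums to 1).
    alpha: weight given to the pre-trusted distribution on every iteration.
    """
    s = np.clip(np.asarray(local_scores, dtype=float), 0.0, None)  # ignore negative ratings
    p = np.asarray(pretrusted, dtype=float)
    row_sums = s.sum(axis=1, keepdims=True)
    # Row-normalize; a peer with no positive ratings falls back to the pre-trusted set.
    c = np.where(row_sums > 0, s / np.where(row_sums > 0, row_sums, 1.0), p)
    t = p.copy()
    for _ in range(max_iter):
        t_next = (1 - alpha) * c.T @ t + alpha * p
        if np.abs(t_next - t).sum() < tol:
            return t_next
        t = t_next
    return t

# Three peers; nobody rates peer 2 positively, so its global trust stays at zero.
scores = eigentrust([[0, 5, -2], [4, 0, -1], [3, 3, 0]], [0.5, 0.5, 0.0])
```

Because every row of C sums to 1 and p sums to 1, the result remains a probability distribution over peers, which is what lets EigenTrust compare peers globally.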

Comparison of major trust models

| Model | Key factors | Attack resilience | Relative runtime |
|---|---|---|---|
| EigenTrust | Global matrix, success rate | Moderate | Low |
| TNA-SL | Social layers, role weighting | Moderate | Medium |
| TACS | Transaction context | Moderate-High | Medium |
| AntTrust | Feedback, recommendations, collective score | High | Medium-High |

Empirical benchmarks confirm that AntTrust outperforms EigenTrust, TNA-SL, and TACS across success rate stability and malicious peer resistance, making it the strongest baseline choice for autonomous AI fleets.

How reputation-based trust is calculated and updated in practice:

- Agent A completes a transaction with Agent B and logs an outcome score.

- Agent A queries neighbors for their recent observations of Agent B.

- A weighted average combines direct experience, neighbor recommendations, and global aggregation.

- The resulting score updates Agent B’s reputation record in the distributed ledger or gossip network.

- The score decays over time, ensuring that old good behavior does not permanently shield a compromised agent.
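The five steps above can be sketched as a single scoring function. This is a minimal illustration, assuming hypothetical weights of 0.5/0.3/0.2 for direct experience, neighbor recommendations, and the global score, a one-hour half-life for time decay, and a neutral prior of 0.5 when a component has no data. It is not the AntTrust formula or any other specific published model.

```python
from dataclasses import dataclass

@dataclass
class Observation:
    score: float      # outcome of one interaction, in [0, 1]
    timestamp: float  # seconds since epoch

def decayed_mean(observations, now, half_life_s):
    """Average of scores, each weighted by exponential time decay."""
    num = den = 0.0
    for obs in observations:
        w = 0.5 ** ((now - obs.timestamp) / half_life_s)  # older -> smaller weight
        num += w * obs.score
        den += w
    return num / den if den > 0 else None

def composite_trust(direct, recommendations, global_score, now,
                    weights=(0.5, 0.3, 0.2), half_life_s=3600.0, prior=0.5):
    """Blend direct experience, neighbor recommendations and a global score.

    recommendations: list of (recommender_trust, reported_score) pairs; each
    report is weighted by how much we currently trust the neighbor reporting it.
    """
    w_direct, w_rec, w_global = weights
    d = decayed_mean(direct, now, half_life_s)
    d = prior if d is None else d
    rec_weight = sum(t for t, _ in recommendations)
    r = sum(t * s for t, s in recommendations) / rec_weight if rec_weight > 0 else prior
    g = prior if global_score is None else global_score
    return w_direct * d + w_rec * r + w_global * g

now = 10_000.0
direct = [Observation(1.0, now - 7200), Observation(0.0, now)]  # old success, fresh failure
score = composite_trust(direct, [(0.9, 0.8), (0.1, 0.0)], 0.6, now)
```

Here the two-hour-old success carries only a quarter of the weight of the fresh failure, so recent misbehavior dominates. Weighting each recommendation by the recommender's own trust also blunts ballot stuffing by low-trust raters.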

When designing your system, pay attention to reputation system vulnerabilities such as ballot stuffing and whitewashing, where agents game the feedback mechanism. Also consider invisible agent models for scenarios where you want agents to operate with minimal footprint until trust is established.

Decentralization, blockchain, and combating collusion

Reputation systems work well under normal conditions but face real stress when groups of coordinated adversaries attempt collusion. This is where blockchain-based trust frameworks add significant value.

Blockchain enhances reputation architectures in three specific ways:

- Immutability: Once a trust record is written, it cannot be silently altered by any single peer or cluster.

- Transparency: All participants can audit the history of interactions without relying on a trusted third party.

- Decentralized enforcement: Smart contracts automatically execute trust-based access rules, removing human intervention from the critical path.
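Immutability rests partly on hash chaining: each record commits to the hash of the one before it, so editing an old entry breaks every later link. The sketch below shows only that tamper-evidence mechanism, using Python's standard `hashlib` and `json`. The `TrustLog` class and the record fields are hypothetical, and a real blockchain adds replication and consensus so that no single peer can quietly rebuild the chain after tampering.

```python
import hashlib
import json

def _digest(prev_hash, record):
    """Hash of the previous entry's hash plus a canonical encoding of this record."""
    payload = json.dumps(record, sort_keys=True).encode()
    return hashlib.sha256(prev_hash.encode() + payload).hexdigest()

class TrustLog:
    """Append-only log where each entry commits to the previous entry's hash."""
    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []  # list of (record, hash) pairs

    def append(self, record):
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        self.entries.append((record, _digest(prev, record)))

    def verify(self):
        """Recompute every link; any edited record makes this return False."""
        prev = self.GENESIS
        for record, h in self.entries:
            if _digest(prev, record) != h:
                return False
            prev = h
        return True

log = TrustLog()
log.append({"rater": "A", "ratee": "B", "score": 0.9})
log.append({"rater": "C", "ratee": "B", "score": 0.8})
log.verify()                         # True
log.entries[0][0]["score"] = 0.1     # silent edit of an old rating
log.verify()                         # False
```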

The BARM (Blockchain-based Agent Reputation Management) framework applies these properties directly to multi-agent systems. In attack simulations using Uniform Group, RA, and TPS threat strategies, BARM demonstrates robust resistance to collusion because changing a record requires consensus from a majority of nodes, which a colluding minority cannot reach as long as honest nodes hold the majority.
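The collusion argument reduces to a quorum rule. Below is a simplified, hypothetical acceptance check, assuming a known validator set and one vote per validator. It is a generic strict-majority rule for illustration, not BARM's actual consensus protocol.

```python
def accept_update(approvals, validators):
    """Accept a reputation update only if a strict majority of validators approve it."""
    validator_set = set(validators)
    valid_votes = set(approvals) & validator_set  # drop duplicate and non-validator votes
    return 2 * len(valid_votes) > len(validator_set)

validators = ["v1", "v2", "v3", "v4", "v5"]
# Two colluding validators plus three Sybil identities cannot push a fake update through.
accept_update(["v4", "v5", "s1", "s2", "s3"], validators)  # False
accept_update(["v1", "v2", "v3"], validators)              # True
```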

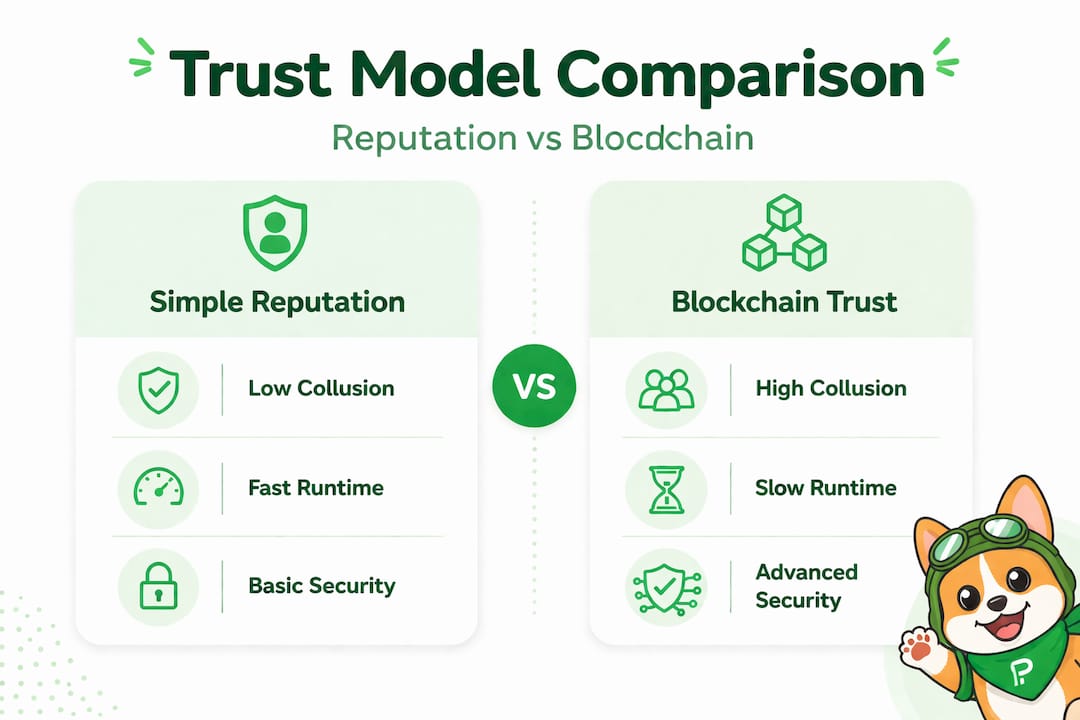

Simple reputation vs. blockchain-based trust

| Dimension | Simple reputation | Blockchain-based trust |
|---|---|---|
| Collusion resistance | Low to moderate | High |
| Immutability | No | Yes |
| Transparency | Partial | Full |
| Scalability | High | Moderate |
| Cold start cost | Low | High |

Two challenges remain significant. First, scalability: writing every trust interaction on-chain adds latency, which is problematic for high-frequency agent communication. Second, the cold start problem: new agents with no interaction history receive no trust score, making initial onboarding fragile. One practical mitigation is to require vouching from established agents before a new node gains full interaction rights.
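The vouching mitigation can be expressed as a simple admission check. The function name, the requirement of three vouches, and the 0.7 minimum trust for a voucher are hypothetical parameters chosen for illustration.

```python
def can_join(vouchers, trust_scores, min_vouches=3, min_voucher_trust=0.7):
    """Grant full interaction rights only if enough established peers vouch.

    vouchers: IDs of peers vouching for the newcomer.
    trust_scores: current trust score of each known peer.
    """
    qualified = {v for v in vouchers if trust_scores.get(v, 0.0) >= min_voucher_trust}
    return len(qualified) >= min_vouches

trust = {"a": 0.9, "b": 0.8, "c": 0.75, "d": 0.2}
can_join(["a", "b", "d"], trust)  # False: "d" is not trusted enough to vouch
can_join(["a", "b", "c"], trust)  # True
```

Counting only distinct, sufficiently trusted vouchers prevents a newcomer from bootstrapping itself with freshly created identities.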

Pro Tip: Use blockchain-based trust selectively. Apply it to high-stakes interactions, such as data exchange between agents handling sensitive model outputs, and use lighter reputation models for routine coordination traffic.

Learn more about blockchain-based trust strategies and how they fit into broader secure communication protocols for distributed AI systems. External research on cybersecurity trust centers also offers useful parallels from enterprise security design.

Adaptation and resilience: Trust under attack and changing conditions

Static trust scores are dangerous. A peer that behaved well for 1,000 interactions can be compromised on interaction 1,001. Real networks need trust models that react continuously to changing conditions, including active attacks.

Research using the CIC-IDS2017 enterprise traffic dataset shows that trust values plunge during DoS and DDoS attacks when modeled with the RNNTM (Recurrent Neural Network Trust Model) but recover clearly once the attack traffic subsides. This confirms that dynamic trust modeling can both detect attacks and signal recovery without manual intervention.

Trust variation during and after a simulated DoS attack

| Phase | Avg. trust score | Time since attack ended |
|---|---|---|
| Pre-attack baseline | 0.82 | N/A |
| Active DoS attack | 0.31 | N/A |
| 60 seconds post-attack | 0.61 | ~60s |
| 120 seconds post-attack | 0.78 | ~120s |
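The dip-and-recover shape in the table can be reproduced qualitatively with a far simpler model than RNNTM. This sketch swaps in an exponentially weighted moving average (EWMA) of interaction outcomes; it is not the recurrent model from the study, `alpha` and the initial value are assumptions, and its numbers are not meant to match the table.

```python
def ewma_trust(outcomes, alpha=0.1, initial=0.8):
    """Track trust as an exponentially weighted moving average of outcomes.

    outcomes: sequence of 1 (interaction succeeded) or 0 (failed or timed out).
    Returns the trust value after each observation.
    """
    trust, history = initial, []
    for outcome in outcomes:
        trust = (1 - alpha) * trust + alpha * outcome
        history.append(trust)
    return history

# 50 normal interactions, 30 failures during an attack, then 50 normal again.
history = ewma_trust([1] * 50 + [0] * 30 + [1] * 50)
# history[49] is close to 1, history[79] falls below 0.1, history[-1] is back above 0.9.
```

A larger `alpha` reacts faster to an attack but also forgets good history faster, which is the same trade-off any adaptive trust model has to tune.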

For environments with rapid topology changes such as auto-scaling agent fleets or edge inference clusters, biologically inspired models perform well. The CA (Cellular Automaton) algorithm adapts trust by modeling local interaction rules, similar to how biological systems propagate signals. It handles rapid trust fluctuations faster than prior models and is particularly suited for environments where agents enter and exit frequently.
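As a rough illustration of trust driven by local interaction rules, here is a generic cellular-automaton-style update on a wrap-around grid of agents, written with NumPy. It is not the published CA trust algorithm; the grid layout, the four-neighbor rule, and the blending weights are assumptions chosen to show how a local drop in trust spreads only to nearby cells.

```python
import numpy as np

def ca_step(trust, observed, self_weight=0.5, obs_weight=0.3):
    """One synchronous update of a cellular-automaton-style trust grid.

    trust: 2-D array; each cell is one agent's current trust in a target peer.
    observed: 2-D array of fresh local observations (same shape).
    Each cell blends its own value, its newest observation, and the mean of its
    four neighbors on a wrap-around (toroidal) grid.
    """
    neighbors = (np.roll(trust, 1, axis=0) + np.roll(trust, -1, axis=0) +
                 np.roll(trust, 1, axis=1) + np.roll(trust, -1, axis=1)) / 4.0
    nb_weight = 1.0 - self_weight - obs_weight
    return self_weight * trust + obs_weight * observed + nb_weight * neighbors

grid = np.full((5, 5), 0.8)
obs = np.full((5, 5), 0.8)
obs[2, 2] = 0.0                  # one agent sees the target misbehave
step1 = ca_step(grid, obs)       # only cell (2, 2) drops, to 0.56
step2 = ca_step(step1, obs)      # its direct neighbors now dip slightly too
```

Because each update touches only neighboring cells, the cost per step stays constant per agent regardless of network size, which is why local-rule models cope well with agents joining and leaving.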

Probabilistic models estimate trust primarily from an agent's own limited direct experience rather than from aggregated global reports, which makes them more robust to malicious peers that try to skew global reputation through fake interactions.

For scenarios with sparse data, Bayesian trust estimation gives you a statistically grounded approach to trust prediction even when an agent has few recorded interactions.
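One common way to do Bayesian trust estimation is the Beta reputation approach: treat each interaction as a success or failure and maintain a Beta(α, β) posterior over the peer's probability of behaving well. The class below is a minimal sketch assuming a uniform Beta(1, 1) prior; the class name is hypothetical.

```python
from dataclasses import dataclass

@dataclass
class BetaTrust:
    """Bayesian trust estimate using a Beta(alpha, beta) posterior.

    Starts from a uniform Beta(1, 1) prior; each success increments alpha and
    each failure increments beta.
    """
    alpha: float = 1.0
    beta: float = 1.0

    def update(self, success: bool) -> None:
        if success:
            self.alpha += 1
        else:
            self.beta += 1

    @property
    def mean(self) -> float:
        """Posterior expected probability that the peer behaves well."""
        return self.alpha / (self.alpha + self.beta)

    @property
    def std(self) -> float:
        """Posterior standard deviation: the uncertainty in the estimate."""
        a, b = self.alpha, self.beta
        return ((a * b) / ((a + b) ** 2 * (a + b + 1))) ** 0.5

peer = BetaTrust()
for ok in [True] * 8 + [False] * 2:
    peer.update(ok)
# peer.mean == 0.75 (alpha = 9, beta = 3)
```

The standard deviation shrinks as observations accumulate, which gives a principled way to decide when an estimate is stable enough to act on.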

How agents recalibrate trust in volatile conditions:

- Monitor incoming interaction outcomes in real time against expected behavior profiles.

- Flag deviations that exceed a configurable threshold, for example a sudden spike in failed responses.

- Apply a temporary trust penalty and reduce interaction priority with the flagged peer.

- Collect additional direct observations to either confirm the anomaly or clear the flag.

- If the anomaly persists beyond a defined window, quarantine the peer and alert the network.
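The recalibration loop above maps naturally onto a small per-peer monitor. In this sketch, a sliding-window failure rate stands in for the "expected behavior profile", and every threshold (20-interaction window, 30% failure rate, 0.2 penalty, 10 consecutive flagged checks) is a hypothetical default rather than a recommended value.

```python
from collections import deque
from dataclasses import dataclass, field

@dataclass
class PeerMonitor:
    """Sliding-window anomaly check with a temporary penalty and quarantine."""
    window: int = 20               # recent interactions to consider
    max_failure_rate: float = 0.3  # deviation threshold that raises a flag
    penalty: float = 0.2           # temporary trust reduction while flagged
    quarantine_after: int = 10     # consecutive flagged checks before quarantine
    base_trust: float = 0.8
    outcomes: deque = field(default_factory=deque)
    flagged_checks: int = 0
    quarantined: bool = False

    def record(self, success: bool) -> None:
        """Log one interaction outcome and re-evaluate the peer."""
        self.outcomes.append(success)
        if len(self.outcomes) > self.window:
            self.outcomes.popleft()
        failure_rate = self.outcomes.count(False) / len(self.outcomes)
        if failure_rate > self.max_failure_rate:
            self.flagged_checks += 1          # anomaly still present
            if self.flagged_checks >= self.quarantine_after:
                self.quarantined = True       # caller should alert the network
        else:
            self.flagged_checks = 0           # new observations cleared the flag

    @property
    def effective_trust(self) -> float:
        if self.quarantined:
            return 0.0
        if self.flagged_checks:
            return max(0.0, self.base_trust - self.penalty)
        return self.base_trust

monitor = PeerMonitor()
for _ in range(20):
    monitor.record(True)
for _ in range(10):
    monitor.record(False)  # flagged from the 7th failure; trust drops to 0.6
```

Quarantine is sticky in this sketch: releasing a peer is left to a separate policy decision, and alerting the network is left to the caller.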

Explore trustless adaptive protocols for implementation patterns that automate all five of these steps. You can also review common AI network challenge solutions that address the specific failure modes emerging in production deployments. The broader context of security automation trends in 2026 shows that automated trust recalibration is now considered a baseline requirement, not an advanced feature.

Best practices for establishing mutual trust in AI-driven networks

Knowing the theory is not enough. You need a concrete implementation strategy that holds up under real adversarial conditions and scales with your agent fleet.

Follow these steps when building trust into a new system:

- Choose the right trust model for your threat environment. Use AntTrust or a hybrid model if your network faces active adversaries. Use EigenTrust as a lightweight baseline for internal, low-risk networks.

- Integrate trustworthy data sources from the start. Seed your reputation system with high-quality interaction data. Garbage in means unreliable trust scores that compound over time.

- Automate feedback collection. Manual trust updates do not scale. Build automated outcome logging into every agent interaction so scores update continuously without human input.

- Plan for flexibility. Threat landscapes change. Design your trust model as a pluggable component so you can swap algorithms without rewriting your entire agent communication stack.

- Design trust directionality intentionally. Research confirms that elevated trust precedes increases in network communication, meaning trust is a precondition for deeper collaboration, not a byproduct of it. Build this directionality into your access policies.
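The pluggable-component and directionality advice can be combined in one small pattern: access-policy code depends only on an interface, any backend that satisfies it can be swapped in, and the trust check happens before a session opens. The `TrustModel` protocol, both backends, and the 0.6 threshold below are hypothetical.

```python
from typing import Dict, List, Protocol

class TrustModel(Protocol):
    """Interface the rest of the agent stack depends on."""
    def observe(self, peer: str, success: bool) -> None: ...
    def score(self, peer: str) -> float: ...

class SuccessRatioModel:
    """Laplace-smoothed success ratio per peer."""
    def __init__(self) -> None:
        self.counts: Dict[str, List[int]] = {}

    def observe(self, peer: str, success: bool) -> None:
        c = self.counts.setdefault(peer, [0, 0])  # [successes, failures]
        c[0 if success else 1] += 1

    def score(self, peer: str) -> float:
        s, f = self.counts.get(peer, [0, 0])
        return (s + 1) / (s + f + 2)

class EwmaModel:
    """Exponentially weighted success rate; reacts faster to recent behavior."""
    def __init__(self, alpha: float = 0.5, initial: float = 0.5) -> None:
        self.alpha, self.initial = alpha, initial
        self.values: Dict[str, float] = {}

    def observe(self, peer: str, success: bool) -> None:
        prev = self.values.get(peer, self.initial)
        self.values[peer] = (1 - self.alpha) * prev + self.alpha * (1.0 if success else 0.0)

    def score(self, peer: str) -> float:
        return self.values.get(peer, self.initial)

def allow_session(model: TrustModel, peer: str, threshold: float = 0.6) -> bool:
    """Access policy written against the interface: trust is checked first."""
    return model.score(peer) >= threshold

for model in (SuccessRatioModel(), EwmaModel()):
    for ok in (True, True, True, False):
        model.observe("b", ok)
    print(type(model).__name__, allow_session(model, "b"))
```

Given the same history, the two backends disagree (the ratio model admits peer "b", the EWMA model does not), which is exactly why the scoring algorithm should be swappable without touching the policy code.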

Avoid these common mistakes:

- Single points of trust aggregation: Any centralized trust store is a target. Distribute trust records across multiple nodes.

- Underestimating adversaries: Collusion, whitewashing, and Sybil attacks are well-documented. Assume they will occur and design accordingly.

- Over-relying on historical scores: A long positive history does not guarantee current behavior. Apply time decay and contextual weighting.

Pro Tip: Consider hybrid trust models that combine blockchain immutability for high-value interactions with lightweight local reputation scoring for routine coordination. This gives you robustness where it counts and low latency where speed matters.

Use the security checklist for AI networks to verify your implementation covers all major trust surface areas. For ongoing guidance, the AI networking best practices resource walks through production-hardening steps in detail.

Our take: Why trust frameworks are more complex—and critical—than most believe

The idea that decentralized means “no trust required” persists because it conflates system architecture with security guarantees. Removing a central authority does reduce some attack surfaces. But it simultaneously pushes the full burden of peer verification onto individual agents, and most agent implementations are not prepared for that responsibility.

In practice, the failure modes we see most often trace back to simplistic trust logic. An agent uses a binary trusted/untrusted flag rather than a continuous score. Or a team deploys EigenTrust because it is well-known, without accounting for their dynamic topology. These are not catastrophic failures on day one. They are slow degradations that surface only when an adversary has already established a foothold.

The deeper issue is that trust frameworks are treated as infrastructure decisions made once during initial design. In reality, they need to be living components that evolve as your agent network grows, as threat intelligence improves, and as interaction patterns shift. Securing agent networks across multi-cloud environments adds another layer, because trust assumptions that hold in one region or provider may not hold when agents cross network boundaries.

Our honest assessment: the teams building the most resilient autonomous systems are not the ones with the most advanced AI models. They are the ones who treat trust as a first-class engineering concern from day one, iterate on their trust models based on real interaction data, and use formal analysis to catch design gaps before adversaries do. Foundational trust is the silent differentiator between networks that scale securely and networks that fail quietly under pressure.

Ready to deploy resilient P2P networks? See how Pilot Protocol accelerates trust

You now have a clear picture of what mutual trust requires: the right model, automated feedback, adversarial resilience, and continuous adaptation. Putting all of that together from scratch is a significant engineering lift.

Pilot Protocol is built specifically to accelerate this process for AI agent deployments. The platform provides virtual addresses, encrypted tunnels, NAT traversal, and built-in trust establishment so your agents can find, verify, and communicate with peers directly, without depending on centralized brokers. Whether you are orchestrating agents across multiple clouds or building a secure data streaming pipeline, Pilot Protocol gives you the infrastructure to enforce trust at the network layer. Visit the P2P trust solutions page to see how it fits your architecture and get started with the CLI or Python and Go SDKs.

Frequently asked questions

What is mutual trust in decentralized networks?

Mutual trust means all peers evaluate and accept each other using distributed reputation protocols rather than relying on any central authority to vouch for identities or behavior.

How do decentralized networks defend against collusion?

They use blockchain-based immutable records combined with distributed reputation scoring, making it computationally and socially expensive for a minority group to manipulate the global trust state.

Can trust models recover after denial-of-service attacks?

Yes. Trust scores drop sharply during active DoS and DDoS events but recover to near-baseline levels within one to two minutes once attack traffic stops, as confirmed in enterprise network simulations.

What’s the best model for trust under dynamic network conditions?

Biologically inspired CA models and Bayesian probabilistic approaches adapt fastest to rapid changes and malicious activity, making them the preferred choice for high-churn agent environments.

How much data is needed for reliable trust estimation?

A minimum of 22 direct interactions is required to reduce trust estimation error below 0.1, giving you a concrete onboarding threshold for new agents before granting them full interaction rights.
