Why Direct P2P Connections Power Secure AI Networking

TL;DR:

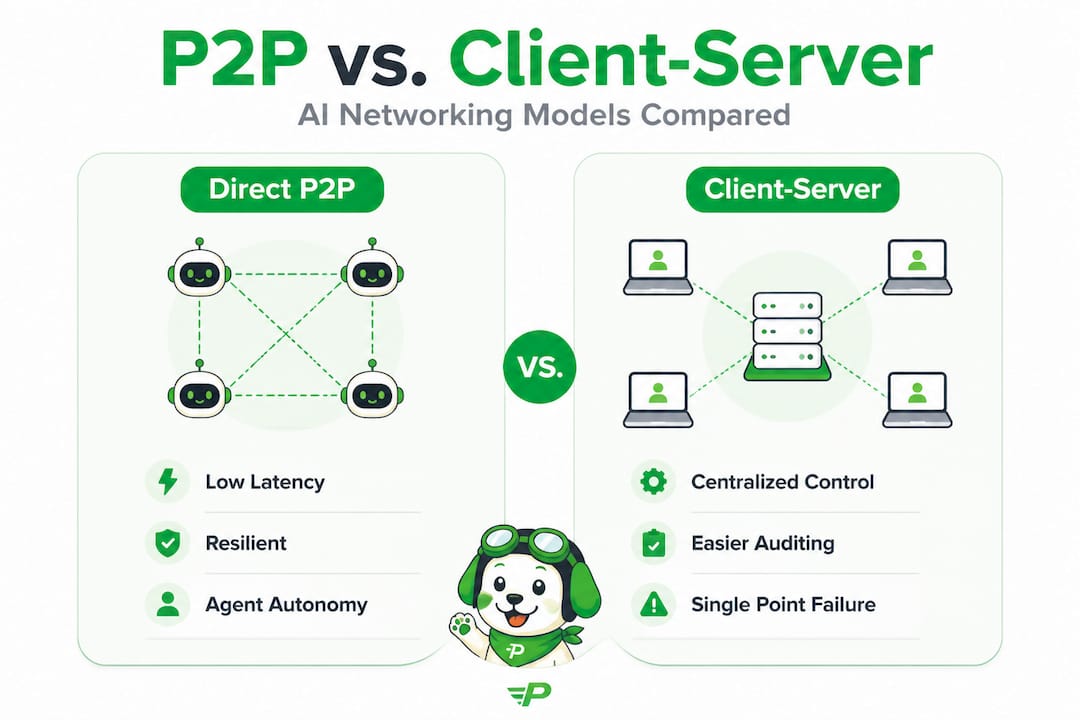

- Direct P2P communication reduces latency, eliminates single points of failure, and enables greater agent autonomy.

- Technologies like WebRTC, libp2p, and Pilot Protocol facilitate secure, NAT-traversable peer connections in multi-cloud environments.

- Hybrid architectures combining P2P data exchange with centralized logging balance scalability, security, and regulatory compliance.

Most AI agent architectures still route traffic through centralized brokers or API gateways, and that design choice quietly undermines everything you’re trying to build. When your agents span AWS, GCP, and Azure simultaneously, every hop through a central server adds latency, creates a single point of failure, and puts a hard ceiling on how autonomously your agents can actually operate. Direct peer-to-peer (P2P) connections remove that ceiling. This guide breaks down why P2P matters for AI agent networking, which technologies make it practical, and how you can deploy it across your multi-cloud infrastructure today.

Table of Contents

- Why direct peer-to-peer is critical for AI agents

- Core technologies powering direct peer-to-peer networking

- Trust models and security considerations in peer-to-peer networking

- Practical deployment: Getting AI agents connected peer-to-peer

- A fresh perspective: What most engineers miss about peer-to-peer in multi-cloud autonomous systems

- Next steps: Explore peer-to-peer solutions for your AI agents

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Direct P2P boosts agent autonomy | Peer-to-peer networking allows AI agents to communicate and operate independently without central servers. |
| Security and trust are vital | TOFU, end-to-end encryption, and robust trust models are key for safe P2P deployment. |
| Choose the right tech stack | Technologies like WebRTC, libp2p, and Pilot Protocol simplify P2P implementation for multi-cloud AI environments. |
| Compliance requires hybrid designs | Pure peer-to-peer may not meet strict regulatory or audit demands, so combining models is often best. |
| NAT traversal enables wide connectivity | Automatic NAT traversal overcomes network boundaries and is essential for scalable agent deployment. |

Why direct peer-to-peer is critical for AI agents

With the stage set, let’s get to the heart of why direct peer-to-peer matters most for AI agents operating in modern infrastructures.

In a standard client-server model, every agent message travels to a central broker before reaching its destination. That broker becomes a bottleneck on two fronts. If it goes down, your entire agent fleet loses coordination. If traffic spikes, it becomes a chokepoint that slows every agent in the network simultaneously. Direct P2P eliminates that dependency by letting agents communicate straight to one another, which removes the extra hop through the broker and, with it, the single point of failure.

P2P agent communication offers something even more valuable than lower latency: resilience at the architecture level. Agents operating in distributed environments, across different cloud providers or geographic regions, need to continue functioning even when parts of the network fail. In a P2P topology, losing one node doesn’t cascade into total network failure.
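To make the resilience point concrete, here is a minimal Python sketch that models a hub-and-spoke topology and a full mesh as adjacency sets, then checks whether the surviving agents can still reach each other after a failure. The agent names and the full-mesh layout are illustrative assumptions; real P2P overlays usually maintain a partial mesh, but the same reachability check applies.

```python
from collections import deque


def reachable(graph: dict[str, set[str]], start: str, failed: set[str]) -> set[str]:
    """Breadth-first search from start, skipping nodes that have failed."""
    if start in failed:
        return set()
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for peer in graph[node]:
            if peer not in failed and peer not in seen:
                seen.add(peer)
                queue.append(peer)
    return seen


def still_connected(graph: dict[str, set[str]], failed: set[str]) -> bool:
    """True if every surviving node can still reach every other surviving node."""
    alive = set(graph) - failed
    if not alive:
        return True
    start = next(iter(alive))
    return reachable(graph, start, failed) == alive


agents = ["a", "b", "c", "d"]

# Hub-and-spoke: agents only talk to the broker.
star = {"broker": set(agents), **{name: {"broker"} for name in agents}}

# Full mesh: every agent talks directly to every other agent.
mesh = {name: {other for other in agents if other != name} for name in agents}

print(still_connected(star, {"broker"}))  # False: the fleet is partitioned
print(still_connected(mesh, {"a"}))       # True: b, c, d still reach each other
```

Losing the broker partitions every agent from every other, while losing any single mesh member leaves the rest fully connected.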

Direct P2P also enables genuinely autonomous agent behavior. An agent that must always route through a central server is not truly autonomous. It’s dependent. P2P lets agents discover each other, negotiate capabilities, and exchange data without waiting for a coordinator to approve or forward each interaction.

That said, P2P is not without challenges. You need to be clear-eyed about them before choosing this architecture:

- Security exposure: Without a central gatekeeper, every agent is exposed to potentially malicious peers and must verify the identity of each peer it talks to.

- Network churn: Agents joining and leaving constantly can destabilize routing and peer discovery.

- Implementation complexity: Setting up NAT traversal, peer discovery, and encrypted channels requires more upfront engineering than pointing agents at a REST API.
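Churn is commonly handled with liveness tracking: each agent records when it last heard from a peer and drops peers that go quiet. The sketch below assumes a simple heartbeat scheme with a fixed timeout; the `PeerTable` class and its parameters are hypothetical and not part of any library mentioned here. The clock is injectable so the eviction logic can be exercised without actually waiting.

```python
import time
from typing import Callable


class PeerTable:
    """Tracks when each peer was last heard from and evicts silent ones."""

    def __init__(self, timeout_s: float, clock: Callable[[], float] = time.monotonic):
        self.timeout_s = timeout_s
        self.clock = clock  # injectable so eviction can be tested deterministically
        self.last_seen: dict[str, float] = {}

    def heartbeat(self, peer_id: str) -> None:
        """Record a heartbeat (or any message) from peer_id, adding it if new."""
        self.last_seen[peer_id] = self.clock()

    def evict_stale(self) -> list[str]:
        """Remove peers silent for longer than timeout_s and return their IDs."""
        now = self.clock()
        stale = [p for p, t in self.last_seen.items() if now - t > self.timeout_s]
        for peer_id in stale:
            del self.last_seen[peer_id]
        return stale

    def live_peers(self) -> set[str]:
        return set(self.last_seen)


# Usage with a fake clock so the example runs instantly.
fake_now = [0.0]
table = PeerTable(timeout_s=10.0, clock=lambda: fake_now[0])
table.heartbeat("agent-a")
table.heartbeat("agent-b")
fake_now[0] = 8.0
table.heartbeat("agent-b")  # agent-b is still active
fake_now[0] = 15.0
print(table.evict_stale())  # ['agent-a']
print(table.live_peers())   # {'agent-b'}
```

The timeout is a trade-off: too short and brief network hiccups evict healthy peers, too long and agents keep routing to peers that have already left.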

“Understanding P2P’s limitations is not a reason to avoid it. It’s a prerequisite for implementing it correctly. Engineers who skip this step build fragile systems.”

Explore P2P solutions for AI architecture to see how these challenges map to specific design patterns you can adopt.

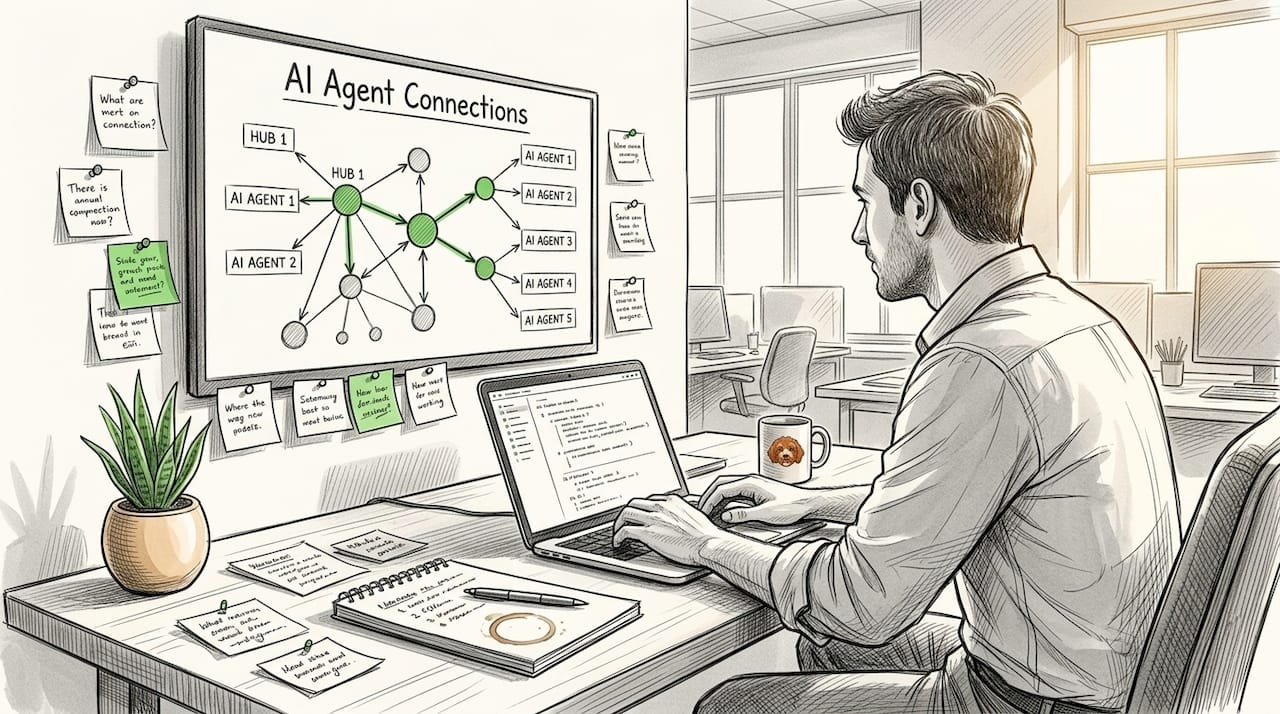

Core technologies powering direct peer-to-peer networking

Once you understand the “why,” the next question is “how,” specifically what tools and technologies make direct P2P communication possible for your AI systems.

Three technologies dominate this space: WebRTC, libp2p, and Pilot Protocol. Each has a distinct role, and choosing the right combination for your stack directly affects how much engineering overhead you carry long-term.

WebRTC was designed for browser-based real-time communication, but its ICE (Interactive Connectivity Establishment) machinery translates well to agent-to-agent connectivity. ICE uses STUN and TURN servers to traverse NAT, which means your agents can find and connect to each other even across restrictive firewalls. WebRTC does not define a signaling protocol, though, so you must supply your own channel for exchanging session descriptions and candidates. It was also built primarily for media streams, and using its data channels for agent traffic requires careful tuning.
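ICE works by gathering candidate addresses (the agent's own host addresses, server-reflexive addresses learned via STUN, and relayed addresses allocated on a TURN server) and trying them in priority order, so direct paths win and relays are a last resort. This Python sketch computes candidate priority using the formula and recommended type preferences from RFC 8445. The addresses are placeholder values from documentation and private ranges, and a real ICE agent also pairs local and remote candidates and runs connectivity checks rather than just sorting.

```python
# Recommended type preferences from RFC 8445 (higher means more preferred).
TYPE_PREFERENCE = {"host": 126, "prflx": 110, "srflx": 100, "relay": 0}


def ice_priority(cand_type: str, local_pref: int = 65535, component_id: int = 1) -> int:
    """RFC 8445 candidate priority: 2^24*type + 2^8*local + (256 - component)."""
    return (
        (1 << 24) * TYPE_PREFERENCE[cand_type]
        + (1 << 8) * local_pref
        + (256 - component_id)
    )


# Placeholder candidates for one agent.
candidates = [
    ("relay", "203.0.113.9:49152"),   # allocation on a TURN server
    ("host", "10.0.0.5:54321"),       # the agent's own interface
    ("srflx", "198.51.100.7:61000"),  # public mapping learned via STUN
]

# Highest priority first: host, then server-reflexive, then relay.
ranked = sorted(candidates, key=lambda c: ice_priority(c[0]), reverse=True)
for cand_type, addr in ranked:
    print(f"{cand_type:<6} {addr:<20} {ice_priority(cand_type)}")
```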

libp2p is a modular networking stack originally developed for IPFS. It provides peer discovery, stream multiplexing, and transport agnosticism out of the box. For AI agent fleets, libp2p’s modularity is a major advantage. You can swap transport layers without rewriting your communication logic. It also supports a wide range of peer discovery mechanisms, from mDNS for local networks to DHT for global-scale discovery.
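DHT-based discovery in libp2p builds on Kademlia, which defines the distance between a peer and a key as the XOR of their hashes. The sketch below shows that idea in isolation, assuming SHA-256 as the hash and plain strings as stand-in peer IDs. It is not libp2p's API; a real DHT also maintains routing buckets and queries peers iteratively to converge on the closest ones.

```python
import hashlib


def dht_key(data: bytes) -> int:
    """Map a peer ID or content key into a 256-bit keyspace via SHA-256."""
    return int.from_bytes(hashlib.sha256(data).digest(), "big")


def xor_distance(a: bytes, b: bytes) -> int:
    """Kademlia-style distance: bitwise XOR of the two hashed keys."""
    return dht_key(a) ^ dht_key(b)


def closest_peers(target: bytes, peers: list[bytes], k: int = 3) -> list[bytes]:
    """Return the k known peers nearest to target by XOR distance."""
    return sorted(peers, key=lambda peer: xor_distance(peer, target))[:k]


# Stand-in peer IDs; real libp2p peer IDs are derived from public keys.
known_peers = [f"agent-{i}".encode() for i in range(10)]
print(closest_peers(b"inference-service", known_peers, k=3))
```

Because every node computes the same distance, agents that have never met can independently agree on which peers are responsible for a given key.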

Pilot Protocol takes a different approach by building a network-layer overlay specifically for AI agents. It provides persistent virtual addresses, encrypted tunnels, and NAT traversal for AI agents without requiring you to manage the underlying plumbing yourself. For teams who want production-ready P2P without months of infrastructure engineering, this is where the value proposition is clearest.

| Technology | NAT traversal | Agent discovery | Encryption | Best for |
|---|---|---|---|---|
| WebRTC | Built-in (ICE with STUN/TURN) | Requires signaling server | DTLS/SRTP | Real-time data exchange |
| libp2p | Hole punching, relay fallback | DHT, mDNS | Noise, TLS 1.3 | Custom, modular architectures |
| Pilot Protocol | Auto, zero-config | Built-in overlay | E2E by default | Multi-cloud AI agent fleets |

In multi-cloud agent deployments, building on WebRTC or libp2p instead of hand-rolled sockets, and delegating NAT traversal to an automatic solution such as Pilot, significantly reduces implementation complexity. That difference matters when your team is iterating fast.

Pro Tip: If your agents live entirely within a single VPC or private network, libp2p with mDNS discovery may be sufficient. Once you cross cloud boundaries or need zero-config NAT punch-through, Pilot Protocol’s overlay approach removes substantial operational burden.

Zero-config NAT traversal is not just a convenience feature. In multi-cloud deployments, network topologies change constantly as new regions come online, agents scale out, and cloud provider firewalls apply unpredictable rules. Automatic traversal means your agents stay connected without manual intervention.
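Automatic traversal typically starts by learning the agent's public address. A STUN Binding request (RFC 5389, updated by RFC 8489) asks a server which source address and port it observed, and the server answers with an XOR-MAPPED-ADDRESS attribute. The sketch below builds the request and parses an IPv4 answer using only the Python standard library. It illustrates the wire format; it is not how Pilot or any particular library implements traversal.

```python
import os
import socket
import struct

MAGIC_COOKIE = 0x2112A442
BINDING_REQUEST = 0x0001
BINDING_SUCCESS = 0x0101
XOR_MAPPED_ADDRESS = 0x0020


def build_binding_request(txn_id: bytes = b"") -> tuple[bytes, bytes]:
    """Return (packet, txn_id) for a STUN Binding request with no attributes."""
    txn_id = txn_id or os.urandom(12)  # 96-bit transaction ID
    header = struct.pack("!HHI", BINDING_REQUEST, 0, MAGIC_COOKIE)
    return header + txn_id, txn_id


def parse_xor_mapped_address(response: bytes, txn_id: bytes) -> tuple[str, int]:
    """Extract the public IPv4 address and port the STUN server observed."""
    msg_type, msg_len, cookie = struct.unpack("!HHI", response[:8])
    if msg_type != BINDING_SUCCESS or cookie != MAGIC_COOKIE:
        raise ValueError("not a STUN Binding success response")
    if response[8:20] != txn_id:
        raise ValueError("transaction ID does not match our request")
    offset, end = 20, 20 + msg_len
    while offset + 4 <= end:
        attr_type, attr_len = struct.unpack("!HH", response[offset:offset + 4])
        value = response[offset + 4:offset + 4 + attr_len]
        # Family byte 0x01 means IPv4; port and address are XORed with the cookie.
        if attr_type == XOR_MAPPED_ADDRESS and attr_len >= 8 and value[1] == 0x01:
            port = struct.unpack("!H", value[2:4])[0] ^ (MAGIC_COOKIE >> 16)
            addr = struct.unpack("!I", value[4:8])[0] ^ MAGIC_COOKIE
            return socket.inet_ntoa(struct.pack("!I", addr)), port
        offset += 4 + attr_len + (-attr_len % 4)  # attribute values are padded to 4 bytes
    raise ValueError("no IPv4 XOR-MAPPED-ADDRESS attribute found")
```

In a live setup you would send the packet over UDP to your STUN server (3478 is the default port) and pass the reply, with the same transaction ID, to `parse_xor_mapped_address`. Behind symmetric NATs the learned mapping may not be reusable by other peers, which is where a TURN relay comes in.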

For concrete use cases, review P2P networking examples that walk through real implementation patterns for AI engineers.

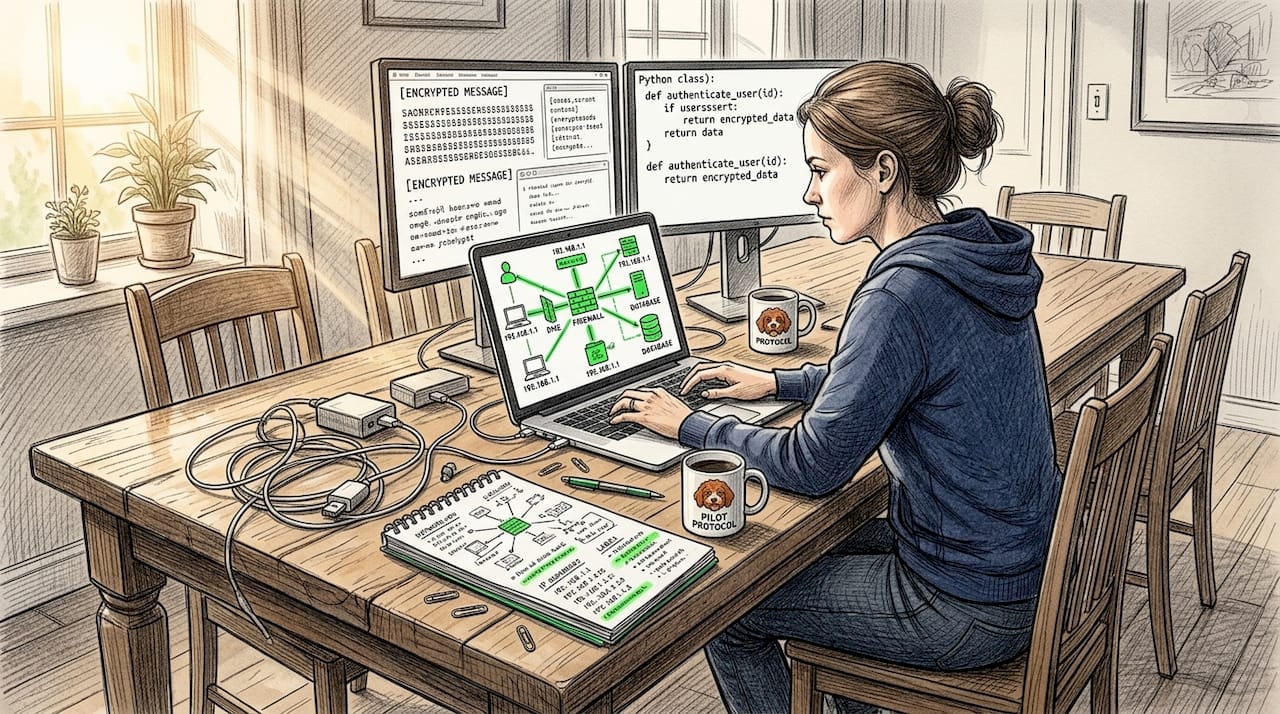

Trust models and security considerations in peer-to-peer networking

Choosing the right tech stack is only one piece. Effective trust models and security strategies are essential for running agents safely in P2P environments.

In a centralized system, trust is relatively straightforward: you authenticate against the server, and the server decides who gets access. In P2P, every agent is simultaneously a client and a server. That means trust must be established at the peer level, not delegated to a central authority.

Three mechanisms form the foundation of secure P2P for AI agents:

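- TOFU (Trust On First Use): When an agent connects to a new peer for the first time, it stores that peer’s public key as trusted. Future connections verify against the stored key. This approach is practical and low-friction, but its weak point is the first connection itself: if an attacker intercepts that exchange, the attacker’s key is the one that gets stored. It also requires careful key rotation policies.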

- E2EE (End-to-End Encryption): All data exchanged between agents should be encrypted in transit, independently of any transport layer protections. This prevents network-level observers from reading agent payloads even if they can observe the traffic pattern.

- Capability discovery: Agents should negotiate what they can do before exchanging sensitive data. This limits exposure and reduces the attack surface if a peer is compromised.
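The first and third mechanisms can be sketched in a few lines of Python. `TofuStore` pins a SHA-256 fingerprint of each peer's public key on first contact and rejects a different key later; `negotiate` intersects advertised capabilities and refuses to proceed if a required one is missing. Both names are hypothetical, the keys are placeholder bytes, and a real implementation would persist the pins and confirm during the handshake that the peer actually holds the matching private key.

```python
import hashlib
import hmac


class TofuStore:
    """Trust On First Use: pin each peer's key fingerprint the first time we see it."""

    def __init__(self) -> None:
        self.pinned: dict[str, str] = {}  # peer_id -> SHA-256 fingerprint

    @staticmethod
    def fingerprint(public_key: bytes) -> str:
        return hashlib.sha256(public_key).hexdigest()

    def verify(self, peer_id: str, public_key: bytes) -> bool:
        fp = self.fingerprint(public_key)
        if peer_id not in self.pinned:
            self.pinned[peer_id] = fp  # first contact: trust and remember
            return True
        return hmac.compare_digest(self.pinned[peer_id], fp)  # later: must match the pin


def negotiate(local_caps: set[str], remote_caps: set[str], required: set[str]) -> set[str]:
    """Agree on shared capabilities, refusing before any data flows if one is missing."""
    shared = local_caps & remote_caps
    missing = required - shared
    if missing:
        raise PermissionError(f"peer lacks required capabilities: {sorted(missing)}")
    return shared


store = TofuStore()
print(store.verify("agent-b", b"placeholder-key-v1"))  # True: pinned on first use
print(store.verify("agent-b", b"placeholder-key-v1"))  # True: matches the pin
print(store.verify("agent-b", b"unexpected-key"))      # False: reject or re-verify out of band
print(negotiate({"embed", "summarize"}, {"summarize", "translate"}, {"summarize"}))
```

A legitimate key rotation looks exactly like the third call: in pure TOFU the pin simply stops matching. One common approach is to have the old key sign the new one before the pin is updated.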

You can review a full P2P security checklist to cover these points systematically before deployment.

The guidance from the peerclaw-agent project is direct: implement TOFU trust, E2EE, and capability discovery, and avoid pure P2P in scenarios that require strict compliance with audit trail requirements.

“P2P environments expose you to malicious peer risks and scalability constraints from network churn. Mitigating these is not optional in production AI systems.”

The compliance dimension deserves specific attention. If your AI agents operate in financial services, healthcare, or regulated trading environments, pure P2P often cannot satisfy audit requirements. Decentralized exchange audit trails illustrate why: every trade action must be logged, attributable, and tamper-evident. Pure P2P architectures typically lack the centralized logging infrastructure to satisfy these requirements out of the box.

In these cases, a hybrid architecture often delivers the best outcome. You retain P2P for data plane communication, where agents exchange payloads directly, while using a lightweight centralized component for control plane logging and audit. This gives you the latency and resilience benefits of P2P without sacrificing regulatory posture.
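One way to make the control-plane log tamper-evident is a hash chain: each entry stores the hash of the previous entry, so editing a past record breaks every hash after it. The Python sketch below is a minimal illustration under simplifying assumptions (no timestamps, no signatures, in-memory storage), and the `AuditLog` class is hypothetical. Only metadata about agent actions goes into it; the payloads themselves stay on the P2P data plane.

```python
import hashlib
import json

GENESIS = "0" * 64


def _digest(body: dict) -> str:
    """Hash a canonical JSON encoding so key order cannot change the result."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, agent_id: str, action: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"seq": len(self.entries), "agent": agent_id, "action": action, "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; editing, reordering, or deleting any non-final entry fails."""
        prev = GENESIS
        for seq, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["seq"] != seq or entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("trader-1", "order_submitted")  # metadata only; payloads stay peer-to-peer
log.append("trader-2", "order_filled")
print(log.verify())                           # True
log.entries[0]["action"] = "order_cancelled"  # rewrite history
print(log.verify())                           # False
```

A chain alone does not stop whoever controls the log from rebuilding it wholesale or truncating the tail, so production designs typically also sign entries per agent (for attributability) and periodically anchor the latest hash somewhere the log operator cannot modify.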

Read more about secure cloud P2P to see how this hybrid approach applies to cloud-native deployments. For a deeper look at foundational principles, trust in decentralized protocols covers the theoretical and practical dimensions of building reliable trust systems at scale.

Pro Tip: Do not rely on transport encryption alone. Implement application-layer E2EE between agents so that even if a proxy or relay node is compromised, it cannot read the payload. In Pilot Protocol, this is on by default. In DIY stacks, you need to engineer it explicitly.
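The property this tip describes is that a relay only ever handles ciphertext. The sketch below illustrates it with the Python standard library alone, assuming the two agents have already agreed on a 32-byte session key during their handshake. It uses HMAC-SHA256 in counter mode as a keystream plus an encrypt-then-MAC tag; treat this construction as illustration only. In a real DIY stack, use a vetted AEAD such as ChaCha20-Poly1305 or AES-GCM from a maintained library, together with a proper key exchange.

```python
import hashlib
import hmac
import os

NONCE_LEN, TAG_LEN = 16, 32


def _subkey(session_key: bytes, label: bytes) -> bytes:
    """Derive an independent sub-key per purpose from the shared session key."""
    return hmac.new(session_key, label, hashlib.sha256).digest()


def _keystream(enc_key: bytes, nonce: bytes, length: int) -> bytes:
    """HMAC-SHA256 in counter mode, used as a keystream (illustration only)."""
    blocks = bytearray()
    counter = 0
    while len(blocks) < length:
        blocks += hmac.new(enc_key, nonce + counter.to_bytes(8, "big"), hashlib.sha256).digest()
        counter += 1
    return bytes(blocks[:length])


def seal(session_key: bytes, plaintext: bytes) -> bytes:
    """Encrypt-then-MAC: returns nonce || ciphertext || tag."""
    nonce = os.urandom(NONCE_LEN)
    stream = _keystream(_subkey(session_key, b"enc"), nonce, len(plaintext))
    ciphertext = bytes(p ^ s for p, s in zip(plaintext, stream))
    tag = hmac.new(_subkey(session_key, b"mac"), nonce + ciphertext, hashlib.sha256).digest()
    return nonce + ciphertext + tag


def open_sealed(session_key: bytes, blob: bytes) -> bytes:
    """Verify the tag first, then decrypt; raises if the blob was altered."""
    if len(blob) < NONCE_LEN + TAG_LEN:
        raise ValueError("blob too short")
    nonce, ciphertext, tag = blob[:NONCE_LEN], blob[NONCE_LEN:-TAG_LEN], blob[-TAG_LEN:]
    expected = hmac.new(_subkey(session_key, b"mac"), nonce + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("authentication failed: payload altered or wrong key")
    stream = _keystream(_subkey(session_key, b"enc"), nonce, len(ciphertext))
    return bytes(c ^ s for c, s in zip(ciphertext, stream))


def relay_forward(blob: bytes) -> bytes:
    """A relay moves opaque bytes; it never holds the session key."""
    return blob


session_key = os.urandom(32)  # assumption: agreed during the agents' handshake
blob = relay_forward(seal(session_key, b'{"task": "summarize", "doc_id": 42}'))
print(open_sealed(session_key, blob))
```

If the relay flips a single byte, `open_sealed` raises instead of returning corrupted data, so a compromised relay can neither read nor silently alter the payload.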

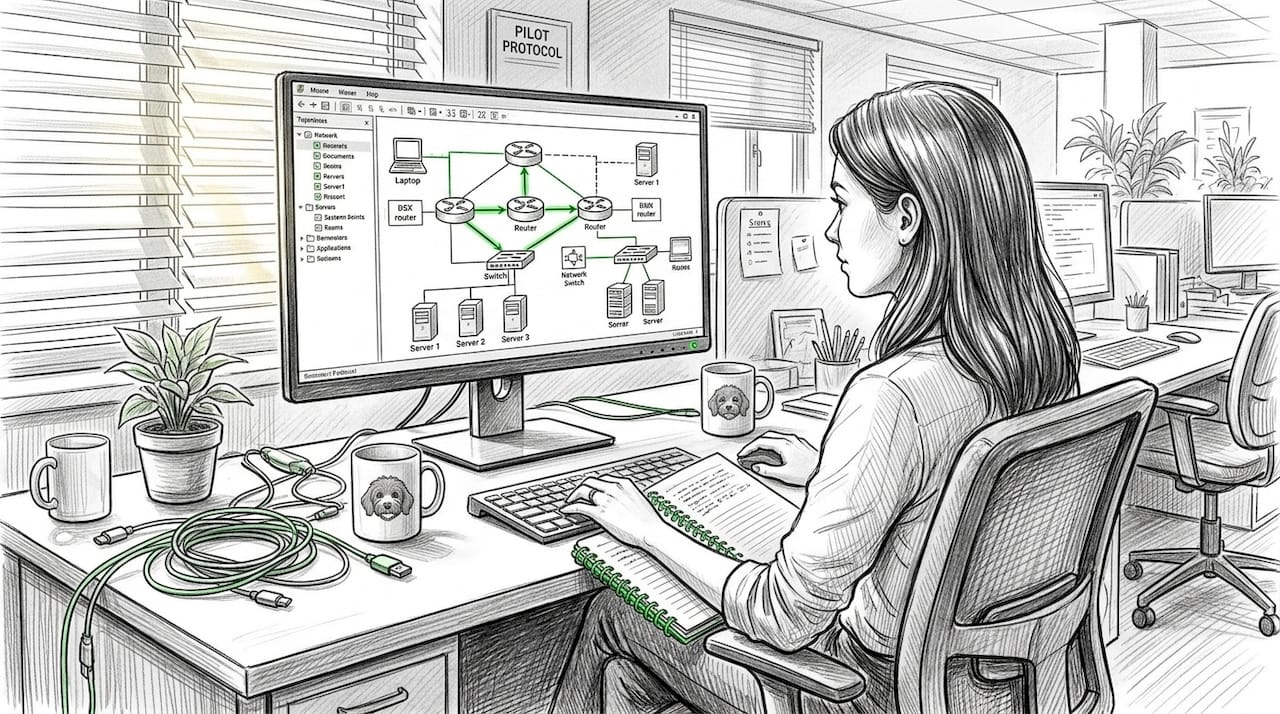

Practical deployment: Getting AI agents connected peer-to-peer

Now, let’s pull everything together with a hands-on deployment strategy and practical applications for your AI agents.

Getting agents connected via P2P in a multi-cloud environment breaks down into five concrete steps:

- Assign persistent addresses. Each agent needs a stable identity that survives restarts, IP changes, and cloud migrations. Pilot Protocol provides virtual addresses for this purpose. With libp2p, you use peer IDs derived from public keys.

- Configure peer discovery. Agents need to find each other before they can connect. In multi-cloud environments, DHT-based discovery or a rendezvous server solves this. Pilot’s overlay handles discovery automatically within the private network.

- Establish NAT traversal. Most agents sit behind NAT in cloud environments. Use auto NAT traversal libraries like Pilot to punch through network boundaries without firewall rule changes. WebRTC with STUN/TURN works well as a fallback.

- Negotiate trust. On first connection, exchange public keys and apply your TOFU policy. Store verified peer identities locally. Rotate keys on a defined schedule.

- Begin encrypted data exchange. Once the encrypted tunnel is established, wrap your existing protocols (HTTP, gRPC, or SSH) inside the P2P overlay. This preserves your existing agent logic while giving it a secure, direct communication path.
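The first step, a stable identity decoupled from location, can be sketched as follows. The agent persists a secret seed, derives the same ID from it on every start, and an address book maps that ID to whatever endpoint the agent currently has. The ID scheme (a truncated SHA-256 of the seed) and the `AddressBook` class are hypothetical; libp2p derives peer IDs from public keys, and Pilot's virtual addressing is its own scheme.

```python
import hashlib
import os
import secrets
import tempfile
from pathlib import Path


def load_or_create_identity(key_file: Path) -> str:
    """Return a stable agent ID, creating a private seed file on first run."""
    if key_file.exists():
        seed = key_file.read_bytes()
    else:
        seed = secrets.token_bytes(32)
        # O_EXCL avoids clobbering an existing file; mode 0o600 keeps the seed private.
        fd = os.open(key_file, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o600)
        with os.fdopen(fd, "wb") as f:
            f.write(seed)
    # Hypothetical ID scheme for illustration; libp2p derives peer IDs from public keys.
    return hashlib.sha256(b"agent-id:" + seed).hexdigest()[:16]


class AddressBook:
    """Maps stable agent IDs to their current, changeable network endpoints."""

    def __init__(self) -> None:
        self.endpoints: dict[str, str] = {}

    def update(self, agent_id: str, endpoint: str) -> None:
        self.endpoints[agent_id] = endpoint

    def resolve(self, agent_id: str) -> str:
        return self.endpoints[agent_id]


with tempfile.TemporaryDirectory() as tmp:
    key_file = Path(tmp) / "agent.key"
    agent_id = load_or_create_identity(key_file)
    after_restart = load_or_create_identity(key_file)  # simulated restart

book = AddressBook()
book.update(agent_id, "10.0.1.7:7000")     # initial placement
book.update(agent_id, "172.16.4.20:7000")  # migrated to another cloud, new IP
print(agent_id == after_restart, book.resolve(agent_id))  # True 172.16.4.20:7000
```

Peers address the agent by `agent_id` only, so a restart or migration changes one address-book entry rather than every peer's configuration.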

When assessing whether your environment is ready for P2P, use this framework:

| Deployment factor | P2P ready | Needs hybrid | Use centralized |
|---|---|---|---|
| Audit trail required | No | Yes | Yes (strict) |
| Cross-cloud agents | Yes | Yes | Possible |
| Real-time data exchange | Yes | Yes | No |
| Agent count | Any | Any | Small |
| Compliance environment | Light | Moderate | Heavy |

Real-world scenarios make these decisions clearer. AI trading agents face specific constraints: speed is critical, but so is auditability. A P2P overlay for the data plane, combined with a lightweight audit logger for the control plane, handles both requirements. Autonomous agent fleets performing distributed data collection or inference can often go fully P2P without any centralized component at all.

Learn more about agent discovery in P2P networks and explore direct P2P for AI agents to see how Pilot structures this deployment process from end to end.

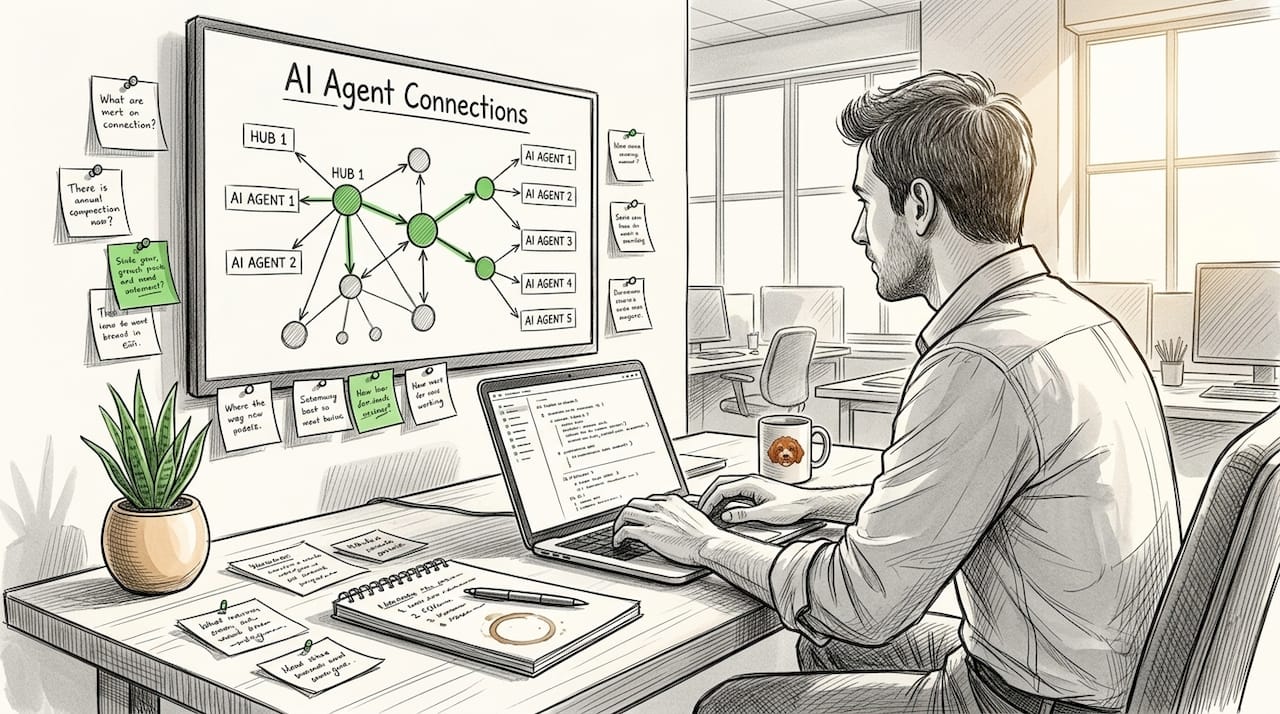

A fresh perspective: What most engineers miss about peer-to-peer in multi-cloud autonomous systems

Finally, let’s consider what most guides skip: the lessons learned by teams who have built these systems and navigated their trade-offs.

Most engineers first reach for P2P because they want to reduce infrastructure costs. Eliminating the central broker sounds like it removes a line item. That’s true, but it’s not the primary reason experienced teams choose P2P. The real value is architectural autonomy: the ability for your agent fleet to grow, adapt, and operate without every behavior being funneled through a system that someone has to maintain, scale, and recover.

When your agents communicate directly, you stop paying the coordination tax. Agents can form ad-hoc coalitions, share state directly, and reconfigure without waiting for central orchestration. That is what “autonomous” actually means in practice.

The trap most engineers fall into is treating P2P as binary: either fully decentralized or fully centralized. Real production systems rarely work that way. The teams who build durable architectures use decentralization where it delivers speed and resilience, and use centralization where it delivers accountability and auditability. Decentralized protocol trust isn’t about eliminating all central systems. It’s about reducing unnecessary dependencies while retaining the oversight mechanisms your organization actually needs.

The other thing most engineers underestimate is the regulatory timeline. You might build a fully decentralized agent network today that works perfectly from a technical standpoint, and then face a compliance audit eighteen months from now that requires you to retrofit audit logging into a system not designed for it. Building in a hybrid control plane from the start is far cheaper than retrofitting it later.

Future architectures for AI agent networks will need to balance three forces: decentralization for scale and resilience, encryption for security and privacy, and structured audit trails for regulatory and operational accountability. None of these forces cancel each other out. The engineers who recognize that early will build systems that last.

Next steps: Explore peer-to-peer solutions for your AI agents

If you’re ready to explore further, here’s where you can find actionable guides and next steps.

Direct P2P networking for AI agents is not a theoretical concept. It is a deployable, production-ready architecture that solves real problems in multi-cloud environments right now.

Pilot Protocol for AI agents gives your agent fleet persistent addresses, encrypted tunnels, automatic NAT traversal, and mutual trust establishment without requiring you to build any of that infrastructure yourself. You get Go and Python SDKs, a CLI, and a web console to manage your network from day one. If you want to understand the underlying design principles before committing to a platform, the network-layer infrastructure overview details the technical problem Pilot was built to solve.

Start building your secure, autonomous agent network today.

Frequently asked questions

What are the main security risks in peer-to-peer connections?

Malicious peers, scalability limits from network churn, and higher complexity in enforcing consistent security policies are the primary risks in P2P networking. Mitigating these requires TOFU trust, end-to-end encryption, and careful peer verification at connection time.

Which technologies simplify direct P2P for AI agents?

WebRTC, libp2p, and Pilot are the leading options, with auto NAT traversal libraries like Pilot reducing implementation complexity significantly for multi-cloud deployments. Each technology has a distinct strength, so the right choice depends on your architecture’s scale and operational requirements.

Can peer-to-peer networking meet strict regulatory compliance?

Pure P2P often lacks the centralized audit trails required for strict regulatory compliance, making hybrid architectures the preferred approach in regulated industries. Retaining a lightweight control plane for logging while using P2P for the data plane is the most practical solution.

How do AI agents handle NAT traversal in multi-cloud?

Auto NAT traversal libraries like Pilot enable agents to connect across cloud boundaries without manual firewall configuration or static IP assignments. This is essential in multi-cloud environments where network topology changes frequently and agents need to stay connected automatically.
