How to wrap legacy protocols for P2P AI networks

TL;DR:

- Legacy protocols not designed for decentralized environments require modern overlay wrappers for secure, reliable communication.

- Proper assessment, tooling, and phased wrapping enable incremental modernization without disrupting existing AI infrastructure.

- Protocol wrapping offers a practical, low-risk alternative to full protocol replacement, facilitating scalable AI agent networks.

Distributed AI systems are only as capable as the communication layer beneath them. When your agents need to coordinate across clouds, regions, and organizational boundaries, legacy protocols like HTTP/1.0, raw TCP, and custom binary formats create real friction. These protocols were not designed for peer-to-peer environments where agents must find each other dynamically, establish trust, and exchange data without a central broker. This guide walks you through a practical, step-by-step approach to wrapping legacy protocols inside modern overlays so your AI agent fleets can communicate securely, reliably, and at scale.

Table of Contents

- Assessing legacy protocols and architecture needs

- Setting up your environment and prerequisites

- Wrapping legacy protocols for peer-to-peer communication

- Testing, verifying, and optimizing the wrapped solution

- Why protocol wrapping beats total legacy replacement for P2P AI

- How Pilot Protocol accelerates legacy integration

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Legacy protocols still matter | Many AI systems rely on legacy protocols that need modern integration, not replacement. |
| Wrapping enables interoperability | Protocol wrapping allows legacy and modern systems to share data securely in distributed architectures. |
| Test and optimize thoroughly | Verification and continuous performance improvement are essential for reliable real-world deployments. |
| Wrapping beats replacing | Wrapping offers easier, faster integration with less disruption than wholesale protocol replacement. |

Assessing legacy protocols and architecture needs

Before you write a single line of wrapper code, you need a clear picture of what you’re working with and what your distributed AI architecture actually requires.

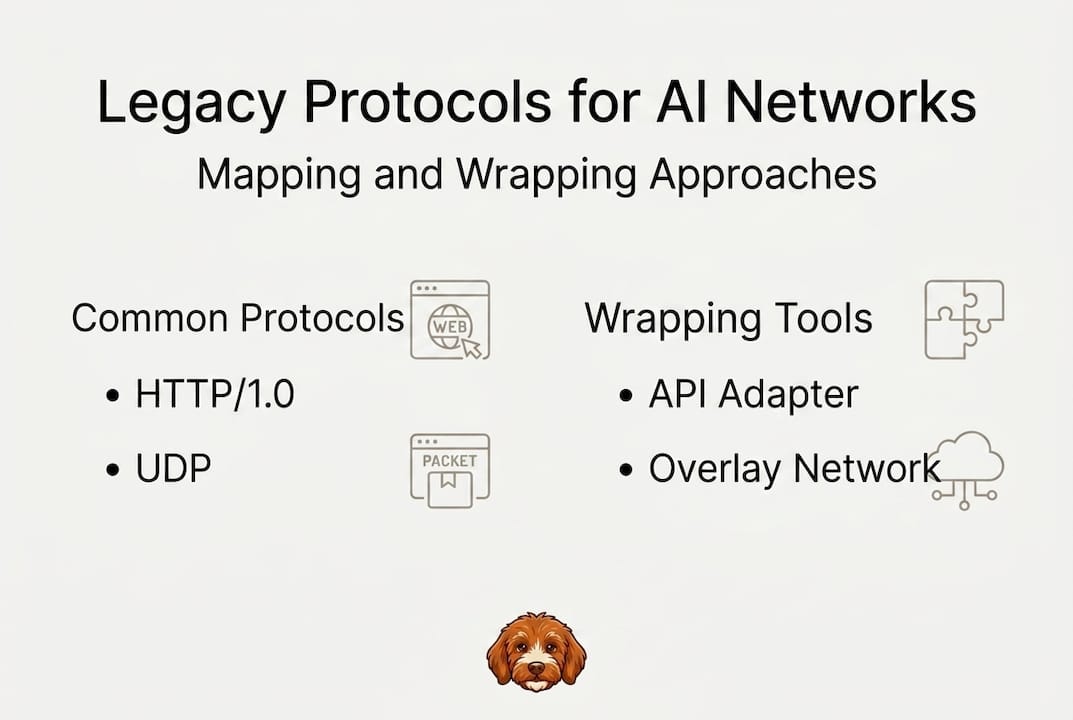

What counts as a legacy protocol?

A legacy protocol is any communication standard that was not designed for dynamic, decentralized, peer-to-peer environments. Common examples include:

- TCP over fixed IP addressing, where agents depend on static host discovery

- HTTP/1.0, which closes the connection after every response by default and lacks modern security primitives

- Custom binary protocols built for closed industrial or enterprise systems

- SOAP/XML-RPC, widely found in older enterprise automation stacks

- Modbus and OPC-UA, common in industrial IoT and SCADA environments

The problem statement on network-layer infrastructure for autonomous agent communication makes the case that extending legacy protocols for this purpose requires careful architectural analysis before any implementation begins.

Identify your system constraints

Map your constraints before designing your wrapping strategy. Key dimensions to assess:

- Security requirements: Does your deployment mandate mutual TLS, end-to-end encryption, or token-based trust?

- Latency tolerance: Real-time inference agents have very different latency budgets than batch data pipelines.

- Interoperability: Will wrapped agents need to talk to third-party services or only to each other?

- NAT and firewall traversal: Many enterprise environments block direct connections, requiring punch-through mechanisms.

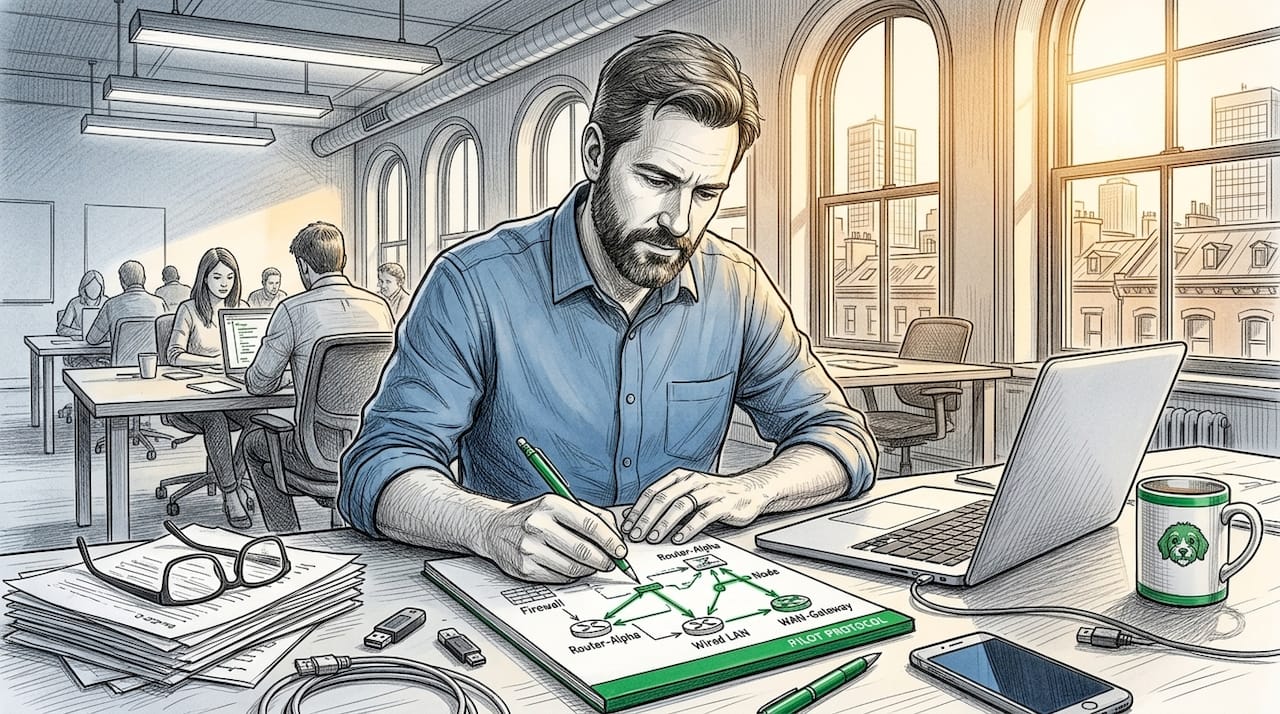

Map legacy protocols to your AI architecture

Draw a clear map of where each legacy protocol operates in your system. Which agents send? Which receive? Where does the legacy endpoint terminate, and where does modern infrastructure begin? This map becomes your integration blueprint.

Understanding protocol wrapping for AI systems at this stage will save you from rework later. You need to know exactly which boundaries your wrapper must bridge.
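One way to keep this map actionable is to record it as data rather than only as a diagram. The sketch below is illustrative: the `LegacyLink` fields, the agent names, and the capability labels are assumptions chosen for the example, not part of any overlay specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LegacyLink:
    """One edge in the integration map (all field names are illustrative)."""
    sender: str        # agent that initiates traffic
    receiver: str      # legacy endpoint that receives it
    protocol: str      # e.g. "http/1.0" or "modbus-tcp"
    encrypted: bool    # does the legacy protocol encrypt natively?
    crosses_nat: bool  # does the path cross a NAT or firewall boundary?


def wrapper_requirements(link: LegacyLink) -> list[str]:
    """List the capabilities a wrapper has to add for this link."""
    needs = ["virtual-addressing"]
    if not link.encrypted:
        needs.append("encryption")
    if link.crosses_nat:
        needs.append("nat-traversal")
    return needs


# Hypothetical entries; replace them with the links found in your own system.
INTEGRATION_MAP = [
    LegacyLink("inference-agent", "sensor-gateway", "modbus-tcp", False, True),
    LegacyLink("planner-agent", "inventory-api", "http/1.0", False, False),
]

for link in INTEGRATION_MAP:
    print(f"{link.sender} -> {link.receiver}: {wrapper_requirements(link)}")
```

Keeping the map in this form lets you review every boundary the wrapper must bridge in one place and diff it as the system changes.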

Comparison of common legacy protocols for AI networking

| Protocol | Transport | Encryption | Connection model | Wrapping complexity |
|---|---|---|---|---|
| HTTP/1.0 | TCP | None native | Request/response | Low |
| Raw TCP | TCP | None native | Stream | Medium |
| SOAP/XML-RPC | HTTP | Optional | Request/response | Medium |
| Modbus | TCP/Serial | None | Polling | High |
| Custom binary | Varies | Varies | Varies | High |

The overlay network for AI agents specification gives you a reference architecture to map these protocols against, so you can decide which wrapping pattern fits each protocol type in your environment.

Setting up your environment and prerequisites

After clarifying your protocol and architectural goals, you need the right stack and setup in place before writing any wrapper code.

Tools you need

Gather these tools before starting:

- Protocol analyzers: Wireshark or tcpdump to capture and inspect raw traffic from legacy endpoints

- Overlay frameworks: Go-based overlay libraries or full platforms like Pilot Protocol for NAT traversal and encrypted tunneling

- Test networks: Isolated Docker or virtual machine networks to simulate multi-hop agent communication

- Load testing tools: wrk or k6 to generate realistic traffic volumes against your wrappers

- Certificate management: CFSSL or step-ca for provisioning mutual TLS certificates in your test environment

Overview of key frameworks

Two commonly used infrastructure components in distributed AI setups are messaging and caching layers.

RabbitMQ as a messaging layer gives you queued, reliable message delivery and works well as a bridge between legacy producers and modern AI consumers. You can use it to buffer traffic from legacy endpoints before it enters your peer-to-peer overlay.

Redis for distributed data handles shared state, session metadata, and pub/sub coordination between agents. When wrapping stateful legacy protocols, Redis is often the right choice for session-state mapping across nodes.
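To make the session-state idea concrete, here is a minimal in-memory sketch of the mapping a wrapper needs: a session identifier on the overlay side pointing at connection metadata on the legacy side, with an expiry. The `SessionMap` class and its method names are hypothetical. In a multi-node deployment, a shared store such as Redis would sit behind the same two operations.

```python
import time
from typing import Optional


class SessionMap:
    """In-memory stand-in for a shared session store (illustrative only)."""

    def __init__(self, ttl_seconds: float = 300.0, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: dict[str, tuple[float, dict]] = {}

    def put(self, session_id: str, metadata: dict) -> None:
        """Store (or refresh) the legacy-side metadata for an overlay session."""
        self._entries[session_id] = (self._clock() + self._ttl, dict(metadata))

    def get(self, session_id: str) -> Optional[dict]:
        """Return the metadata, or None if the session is unknown or expired."""
        entry = self._entries.get(session_id)
        if entry is None:
            return None
        expires_at, metadata = entry
        if self._clock() >= expires_at:
            del self._entries[session_id]  # drop stale sessions lazily
            return None
        return metadata


sessions = SessionMap(ttl_seconds=60)
sessions.put("overlay-session-1", {"legacy_peer": "10.0.0.5:502", "unit_id": 3})
print(sessions.get("overlay-session-1"))
```

The expiry matters because legacy endpoints often drop idle connections silently, and a wrapper that keeps stale mappings will route traffic to sessions that no longer exist.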

The Building a Userspace TCP-over-UDP Stack in Pure Go article describes how interface layers for legacy protocols work inside modern software projects, which directly informs how you structure your adapter components.

Environment compatibility table

| Environment | Wrapping approach | Overlay compatible | Notes |
|---|---|---|---|
| Docker Compose | Sidecar proxy | Yes | Good for local dev and staging |
| Kubernetes | DaemonSet or sidecar | Yes | Use NetworkPolicy for isolation |
| Bare metal | Systemd service | Yes | Requires manual cert rotation |
| Multi-cloud | Overlay agent | Yes | NAT traversal mandatory |
| Edge/IoT | Lightweight binary | Partial | Resource constraints apply |

Running HTTP services over encrypted overlay is a practical reference for environment setup when your legacy endpoint speaks HTTP.

Before-you-begin checklist

Complete these items before writing wrapper code:

- [ ] Capture and document all legacy protocol traffic with a protocol analyzer

- [ ] Define success criteria: latency targets, throughput minimums, uptime requirements

- [ ] Provision test certificates for mutual TLS

- [ ] Stand up an isolated test network with at least two simulated agent nodes

- [ ] Confirm overlay framework installation and basic connectivity

- [ ] Document current endpoint addresses and port configurations

Wrapping legacy protocols for peer-to-peer communication

Once your environment is ready, move on to the actual process of wrapping and integrating your legacy protocols into your peer-to-peer AI network.

The core wrapping process

Protocol wrapping means encapsulating legacy traffic inside a modern transport layer that adds encryption, peer discovery, and NAT traversal without modifying the original endpoint software. The direct communication protocols guide for AI agents covers approaches for connecting legacy communication protocols with modern solutions in detail.
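As a rough illustration of what "encapsulating" means at the byte level, the sketch below prefixes untouched legacy bytes with a small header carrying a virtual peer identifier and a length. The 16-byte identifier and the header layout are assumptions made for this example. This is not the wire format of Pilot Protocol or any other overlay, and encryption is deliberately left out here.

```python
import struct

# Assumed header layout: 16-byte virtual peer ID + 4-byte payload length,
# both in network byte order. Purely illustrative.
HEADER = struct.Struct("!16sI")


def encapsulate(peer_id: bytes, legacy_bytes: bytes) -> bytes:
    """Wrap raw legacy traffic in a frame the overlay can route."""
    if len(peer_id) != 16:
        raise ValueError("peer_id must be exactly 16 bytes")
    return HEADER.pack(peer_id, len(legacy_bytes)) + legacy_bytes


def decapsulate(frame: bytes) -> tuple[bytes, bytes]:
    """Recover the peer ID and the original legacy bytes from a frame."""
    if len(frame) < HEADER.size:
        raise ValueError("frame shorter than header")
    peer_id, length = HEADER.unpack_from(frame)
    payload = frame[HEADER.size:HEADER.size + length]
    if len(payload) != length:
        raise ValueError("truncated frame")
    return peer_id, payload


request = b"GET /status HTTP/1.0\r\n\r\n"
frame = encapsulate(b"agent-0000000001", request)
peer, payload = decapsulate(frame)
print(peer, payload == request)
```

The point of the example is that the legacy payload passes through byte-for-byte: the endpoint software never sees the header, which is why it does not need to change.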

Follow these steps:

1. Build the ingress adapter. Create a listener on the legacy endpoint’s expected address and port. This adapter receives raw legacy protocol traffic and translates it into a format your overlay can carry. For HTTP/1.0, this means receiving the raw request and re-emitting it over an encrypted tunnel.

2. Adapt the address and transport layers. Legacy protocols often assume fixed IP addresses. Replace these with virtual addresses provided by your overlay. The Pilot Protocol overlay assigns persistent virtual identities to each agent, so address resolution remains stable even as physical IPs change.

3. Map session state. Stateful protocols require you to maintain session context across the wrapper boundary. Store session identifiers and connection metadata in Redis or a lightweight in-memory store so both sides of the wrapper stay synchronized.

4. Apply encryption and authentication. Wrap every session with mutual TLS or the overlay’s native encryption mechanism. Never pass legacy plaintext traffic through the overlay without encryption. Follow secure network infrastructure best practices to configure trust policies correctly.

5. Handle protocol-specific quirks. HTTP/1.0 lacks chunked transfer encoding. Modbus has strict polling intervals. Document these behaviors and add protocol-specific handling in your adapter layer so the wrapper does not break existing endpoint expectations.

6. Expose the wrapped service. On the consumer side, present the wrapped service on a local virtual address. Agents connect to this address as if the legacy endpoint were local, while the overlay handles all routing and encryption transparently.
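For the encryption and authentication step, if you choose mutual TLS rather than an overlay's native encryption, the configuration can be sketched with Python's standard `ssl` module. The function names and the certificate file parameters below are hypothetical. The certificates themselves would come from whatever CA you provisioned for your test environment.

```python
import ssl


def require_client_certs(ctx: ssl.SSLContext) -> ssl.SSLContext:
    """Turn a server-side TLS context into a mutual-TLS one."""
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    ctx.verify_mode = ssl.CERT_REQUIRED  # reject peers without a valid certificate
    return ctx


def build_server_context(cert_file: str, key_file: str, ca_file: str) -> ssl.SSLContext:
    """Context for the wrapper side that accepts connections from agents."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)  # this node's identity
    ctx.load_verify_locations(cafile=ca_file)                  # CA that signs peer agents
    return require_client_certs(ctx)


def build_client_context(cert_file: str, key_file: str, ca_file: str) -> ssl.SSLContext:
    """Context for the agent side; it verifies the server and presents its own cert."""
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx
```

Either context can be passed as the `ssl=` argument to `asyncio.start_server` or `asyncio.open_connection`. Note that the client context keeps hostname checking enabled, so the server certificate has to carry the name the agent connects to.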

Example: wrapping HTTP/1.0 for encrypted overlay use

Suppose you have an HTTP/1.0 data source that an AI inference agent needs to poll. You stand up an ingress adapter in Go that listens on `localhost:8080`, receives GET requests from the agent, and forwards them over an encrypted overlay tunnel to the actual legacy endpoint. The response travels back through the same tunnel. The agent never knows it’s talking to a 1990s-era HTTP service.
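The adapter's core is a byte-for-byte TCP relay. The sketch below uses Python's `asyncio` purely for brevity, and the same structure carries over to Go. It assumes the overlay exposes the tunnel as a local TCP port. The `start_ingress_adapter` name and the upstream address `127.0.0.1:9000` are hypothetical, and the tunnel's own encryption is out of scope here.

```python
import asyncio


async def _pump(reader: asyncio.StreamReader, writer: asyncio.StreamWriter) -> None:
    """Copy bytes one way until EOF, then signal EOF to the other side."""
    while chunk := await reader.read(65536):
        writer.write(chunk)
        await writer.drain()
    if writer.can_write_eof():
        writer.write_eof()  # half-close so the peer sees end-of-response


async def start_ingress_adapter(listen_host, listen_port, upstream_host, upstream_port):
    """Listen where the agent expects the service and relay to the tunnel."""

    async def handle(client_reader, client_writer):
        up_writer = None
        try:
            up_reader, up_writer = await asyncio.open_connection(
                upstream_host, upstream_port
            )
            # Relay both directions; HTTP/1.0 ends a response by closing,
            # so EOF from upstream marks the end of the reply.
            await asyncio.gather(
                _pump(client_reader, up_writer),
                _pump(up_reader, client_writer),
                return_exceptions=True,
            )
        except OSError:
            pass  # upstream unreachable: drop the client connection
        finally:
            client_writer.close()
            if up_writer is not None:
                up_writer.close()

    return await asyncio.start_server(handle, listen_host, listen_port)


async def main() -> None:
    # Hypothetical addresses: the agent polls localhost:8080, and the overlay
    # tunnel to the legacy endpoint is assumed to be reachable on port 9000.
    server = await start_ingress_adapter("127.0.0.1", 8080, "127.0.0.1", 9000)
    async with server:
        await server.serve_forever()
```

Running `asyncio.run(main())` would start the relay. Because the adapter never parses the request, it preserves HTTP/1.0 behavior exactly, including the connection close that ends each response.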

You can use FastAPI for API integration as a thin translation layer if your legacy endpoint returns non-standard response formats that modern agents cannot parse directly.

Pro Tip: Test each encapsulation component in isolation before connecting it to the full overlay. Verify that your ingress adapter correctly parses legacy traffic, then verify the overlay tunnel separately, and only then combine both components. This isolation approach catches integration bugs early and keeps debugging manageable.

Testing, verifying, and optimizing the wrapped solution

Wrapping is only half the process. Verification and performance tuning ensure your solution works correctly in real distributed settings, not just in a controlled lab.

Build your testing suite

Design test scenarios that reflect actual AI workload patterns:

1. Baseline connectivity test: Confirm that a wrapped agent can reach the legacy endpoint and receive a valid response end-to-end.

2. Message integrity check: Send a known payload through the wrapper and verify that the received payload matches byte-for-byte. Any modification indicates a serialization or transport bug.

3. Latency measurement: Record round-trip time under low load, moderate load, and peak load. Compare against your baseline latency requirements from the assessment phase.

4. Throughput test: Push your wrapper to its throughput ceiling using a load testing tool. Identify the request rate at which latency begins to degrade.

5. Connection resilience test: Simulate network interruptions by dropping packets or killing overlay nodes. Verify that agents reconnect automatically and resume communication without data loss.

6. Security audit: Attempt to intercept traffic between agents and confirm that all data is encrypted. Verify that unauthorized agents cannot join the overlay or access wrapped services.
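The message integrity check is the easiest of these to automate. A minimal sketch follows, assuming you can express a round trip through your wrapper as a function from bytes to bytes. The `send_through_wrapper` parameter is a placeholder for whatever client call your setup provides, and the payload set is only a starting point.

```python
import hashlib
import os


def digest(payload: bytes) -> str:
    return hashlib.sha256(payload).hexdigest()


def check_integrity(send_through_wrapper, payload: bytes) -> bool:
    """Round-trip a payload and compare it byte-for-byte via length and SHA-256."""
    received = send_through_wrapper(payload)
    return len(received) == len(payload) and digest(received) == digest(payload)


# Payloads chosen to trip common bugs: empty bodies, NUL bytes,
# line-ending rewrites, every byte value, and a larger random blob.
TEST_PAYLOADS = [
    b"",
    b"\x00",
    b"line1\r\nline2\r\n",
    bytes(range(256)),
    os.urandom(64 * 1024),
]


def integrity_rate(send_through_wrapper, payloads=TEST_PAYLOADS) -> float:
    """Fraction of payloads that survive the wrapper unchanged (target: 1.0)."""
    passed = sum(check_integrity(send_through_wrapper, p) for p in payloads)
    return passed / len(payloads)


# A loopback stand-in for the wrapper; swap in a real round trip when testing.
print(integrity_rate(lambda data: data))
```

A wrapper that normalizes line endings or strips trailing bytes will score below 1.0 here, which is exactly the class of serialization bug this test exists to catch.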

Critical metrics to track

- Message integrity rate (target: 100%)

- P50, P95, and P99 latency in milliseconds

- Requests per second at target latency

- Reconnection time after node failure in seconds

- Encryption coverage across all wrapped sessions
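The latency percentiles can be computed from raw round-trip samples with a few lines of code. The sketch below uses the nearest-rank method, which is one of several common percentile definitions. Monitoring tools may interpolate differently, so expect small discrepancies against their numbers.

```python
import math


def percentile(samples, pct: int):
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_summary(samples_ms) -> dict:
    """P50, P95, and P99 for a list of round-trip times in milliseconds."""
    return {f"p{p}": percentile(samples_ms, p) for p in (50, 95, 99)}


# Hypothetical measurements; one slow outlier dominates the tail percentiles.
print(latency_summary([10, 20, 30, 40, 1000]))
```

Tracking the tail separately from the median is what exposes problems such as occasional reconnects or session-store stalls, which an average would smooth over.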

The Pilot Protocol revision details document outlines network performance measurement approaches specifically suited to distributed AI agent environments, which align with these metrics.

Analyze results and optimize

After your first test run, look for these common issues:

- Session mapping overhead: If latency spikes correlate with new connection attempts, your session-state store is a bottleneck. Switch to a local in-memory cache for hot sessions.

- Serialization costs: Protocol format translation between legacy and modern formats adds CPU overhead. Profile your adapter and optimize the hot path.

- Overlay routing inefficiency: Agents in the same region routing through distant overlay nodes add unnecessary latency. Use topology-aware routing if your overlay supports it.

Always measure performance at the overlay layer and at the legacy protocol layer separately before combining results. Aggregated metrics hide which component is the actual bottleneck.
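One lightweight way to keep the two layers apart is to time each call site under its own label. The `LayerTimer` helper below is a hypothetical sketch, and the `time.sleep` calls stand in for a real tunnel round trip and a real legacy request.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class LayerTimer:
    """Collect per-layer durations in milliseconds (illustrative helper)."""

    def __init__(self):
        self.samples = defaultdict(list)

    @contextmanager
    def measure(self, layer: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record even if the wrapped call raises, so failures are timed too.
            self.samples[layer].append((time.perf_counter() - start) * 1000.0)

    def mean_ms(self, layer: str) -> float:
        values = self.samples[layer]
        return sum(values) / len(values) if values else 0.0


timer = LayerTimer()
with timer.measure("overlay"):
    time.sleep(0.002)  # stand-in for the overlay tunnel round trip
with timer.measure("legacy"):
    time.sleep(0.001)  # stand-in for the legacy endpoint's own response time

for layer in ("overlay", "legacy"):
    print(f"{layer}: {timer.mean_ms(layer):.2f} ms")
```

With separate series per layer, a latency regression can be attributed to the overlay or to the legacy endpoint directly instead of being inferred from a combined figure.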

Pro Tip: Monitor for protocol-specific bottlenecks by adding per-protocol metrics to your observability stack. HTTP/1.0 wrappers often suffer from connection-per-request overhead, while binary protocol wrappers sometimes accumulate fragmented packets. Use adjustable overlay buffer sizes to tune for each protocol’s behavior independently.

Benchmarking HTTP vs UDP Overlay for Agent Communication provides specific benchmark data for wrapped protocol performance, covering both throughput and latency under realistic AI agent workloads.

Why protocol wrapping beats total legacy replacement for P2P AI

Here is the perspective that most integration guides skip: the instinct to replace legacy protocols entirely is almost always wrong for distributed AI systems.

Full protocol replacement means rewriting endpoint software, retraining teams, and introducing new failure modes at the exact moment you are also scaling agent fleets. Every new protocol brings its own bugs. Every rewrite introduces regressions. Large AI infrastructures that attempted full protocol replacement mid-deployment have consistently reported instability windows that cost more than the technical debt they were trying to eliminate.

Wrapping, by contrast, gives you controlled modernization. You preserve existing investments, keep legacy endpoints operational, and add modern capabilities incrementally. You can validate each wrapped component before extending to the next. The reasons why protocol wrapping works come down to one principle: your agents need reliable communication now, not after a six-month replacement project.

The hard-won lesson from large AI infrastructure deployments is that incremental integration, done with clear boundaries and good observability, consistently outperforms big-bang replacement on every practical metric: uptime, delivery speed, and operational stability.

How Pilot Protocol accelerates legacy integration

If you want to move faster on legacy protocol wrapping, Pilot Protocol gives you the infrastructure components you need without building them from scratch.

Pilot Protocol provides ready-made encrypted overlays, NAT traversal, virtual addressing, and trust establishment specifically designed for AI agent networks. You can wrap existing HTTP, gRPC, and SSH traffic inside the overlay in a fraction of the time it takes to build custom adapter layers. Your agents get persistent virtual identities, mutual authentication, and cross-cloud connectivity out of the box. Explore Direct Peer-to-Peer for AI Agents to see how Pilot Protocol fits your deployment, and visit the Pilot Protocol overview to get started today.

Frequently asked questions

What is protocol wrapping in the context of AI networks?

Protocol wrapping means encapsulating legacy or traditional communication protocols inside modern overlay networks, enabling secure and interoperable AI agent communication without modifying the original endpoint software. The network-layer infrastructure problem statement covers this extension approach for autonomous agent communication.

Do I need to modify the existing legacy endpoint software?

Typically, no. Protocol wrapping operates at the interface or transport layer, so underlying endpoint software often requires minimal or no modification. The userspace TCP-over-UDP stack article describes how these interface layers work inside existing projects.

Is protocol wrapping compatible with encrypted peer-to-peer overlays?

Yes. Protocol wrappers encapsulate legacy traffic within encrypted overlay tunnels, preserving both security and legacy compatibility simultaneously. HTTP services over encrypted overlay demonstrates this approach with working examples.

What are the main benefits of protocol wrapping over protocol replacement?

Protocol wrapping is faster to deploy, less disruptive to existing systems, and maintains legacy compatibility while improving interoperability for distributed AI networks. Full details on the security and scalability advantages are covered in protocol wrapping for secure peer-to-peer AI systems.

How do I troubleshoot performance issues with wrapped protocols?

Analyze traffic at each layer separately, review overlay routing logs, and compare your results against published benchmarks. The benchmarking guide for HTTP vs UDP overlay provides a practical reference for isolating and resolving bottlenecks in wrapped protocol deployments.